VMLOps, a platform focused on developer tools for machine learning operations, has launched Algorithm Explorer, a new interactive visualization tool designed to let engineers and researchers watch machine learning algorithms train step by step. The tool, announced via a social media post, aims to demystify the training process by providing real-time visual feedback on core components such as gradients, weights, and the evolution of decision boundaries, all within a single interface that combines mathematical notation, visual plots, and executable code.

What Happened

VMLOps has released a new web-based application called Algorithm Explorer. The core promise is an interactive environment where users can run classic machine learning algorithms and watch the training process unfold visually in real time. The announcement does not list the supported algorithms, but simpler models such as linear regression, logistic regression, or small neural networks are the likely starting point.

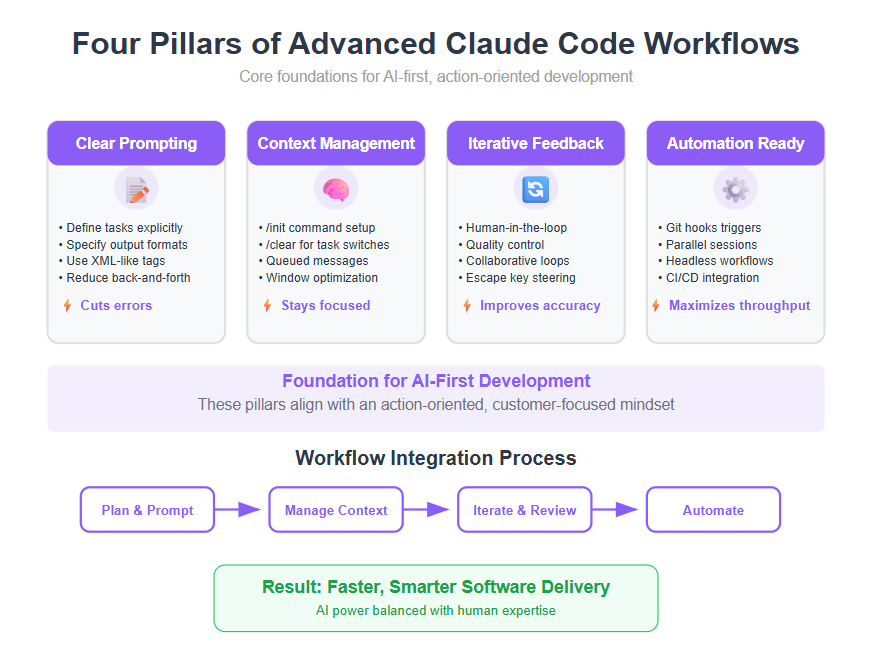

The key advertised features are:

- Step-by-Step Training Visualization: Users can watch the algorithm's state update with each training iteration (epoch or batch).

- Core Component Tracking: The tool visualizes fundamental quantities such as gradients (which set the direction and size of each parameter update), weights/parameters (their values over time), and the evolution of decision boundaries (how the surface separating the predicted classes shifts as training proceeds).

- Integrated Interface: It combines three elements crucial for understanding: the underlying mathematical equations, the resulting visualizations and plots, and the executable code that drives the process.
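For a concrete sense of what those three tracked quantities are, here they are for logistic regression, used purely as an assumed example since the announcement does not say which algorithms are supported. With inputs $\mathbf{x}_i$, labels $y_i \in \{0, 1\}$, weights $\mathbf{w}$, bias $b$, and learning rate $\eta$:

```latex
\begin{aligned}
\hat{y}_i &= \sigma(\mathbf{w}_t^\top \mathbf{x}_i + b_t), \qquad \sigma(z) = \frac{1}{1 + e^{-z}} \\
L(\mathbf{w}_t, b_t) &= -\frac{1}{n} \sum_{i=1}^{n} \left[ y_i \log \hat{y}_i + (1 - y_i) \log (1 - \hat{y}_i) \right] \\
\nabla_{\mathbf{w}} L &= \frac{1}{n} \sum_{i=1}^{n} (\hat{y}_i - y_i)\, \mathbf{x}_i, \qquad
\frac{\partial L}{\partial b} = \frac{1}{n} \sum_{i=1}^{n} (\hat{y}_i - y_i) \\
\mathbf{w}_{t+1} &= \mathbf{w}_t - \eta\, \nabla_{\mathbf{w}} L, \qquad
b_{t+1} = b_t - \eta\, \frac{\partial L}{\partial b} \\
\text{decision boundary at step } t &: \ \{\mathbf{x} : \mathbf{w}_t^\top \mathbf{x} + b_t = 0\}
\end{aligned}
```

The gradient line is what a "gradient" panel would show, the update line is what moves the "weights" panel, and the last line is the boundary that a classification plot redraws after every step.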

Context & Technical Details

While the initial announcement is brief, the tool appears to be an educational and debugging aid targeted at students, ML engineers, and researchers. Manually instrumenting training loops to visualize these internal states is a common but tedious practice for debugging model convergence issues, understanding learning dynamics, or explaining concepts.
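For comparison, this is roughly what the manual approach looks like: a plain-Python, full-batch gradient-descent loop for two-feature logistic regression that snapshots the weights, gradients, and loss every epoch so they can be plotted afterwards. It is a minimal sketch, not VMLOps code; the toy dataset, learning rate, and the names `train_logistic`, `Snapshot`, and `TOY_DATA` are hypothetical.

```python
import math
from dataclasses import dataclass


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


@dataclass
class Snapshot:
    """State recorded at the start of one epoch, before the update is applied."""
    epoch: int
    weights: tuple[float, float]
    bias: float
    grads: tuple[float, float, float]  # (dL/dw1, dL/dw2, dL/db)
    loss: float


def train_logistic(data, lr: float = 0.5, epochs: int = 50):
    """Full-batch gradient descent for 2-feature logistic regression, with logging."""
    w1 = w2 = b = 0.0
    n = len(data)
    history: list[Snapshot] = []
    for epoch in range(epochs):
        g1 = g2 = gb = loss = 0.0
        for x1, x2, y in data:
            p = sigmoid(w1 * x1 + w2 * x2 + b)
            err = p - y  # derivative of binary cross-entropy w.r.t. the logit
            g1 += err * x1 / n
            g2 += err * x2 / n
            gb += err / n
            p = min(max(p, 1e-12), 1 - 1e-12)  # clip to avoid log(0)
            loss -= (y * math.log(p) + (1 - y) * math.log(1 - p)) / n
        # The "instrumentation": copy the internal state out of the loop.
        history.append(Snapshot(epoch, (w1, w2), b, (g1, g2, gb), loss))
        # The gradient-descent update itself.
        w1 -= lr * g1
        w2 -= lr * g2
        b -= lr * gb
    return (w1, w2, b), history


# Hypothetical, linearly separable toy data: (x1, x2, label).
TOY_DATA = [(-1.0, -1.0, 0), (-1.0, -0.5, 0), (1.0, 1.0, 1), (0.5, 1.0, 1)]

params, history = train_logistic(TOY_DATA)
for snap in history[::10]:
    grad_norm = math.sqrt(sum(g * g for g in snap.grads))
    print(f"epoch {snap.epoch:2d}  loss {snap.loss:.4f}  |grad| {grad_norm:.4f}")
```

Every extra quantity a developer wants to see means another field in the snapshot and another plot to wire up, which is the tedium a purpose-built visualizer would remove.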

Tools like TensorBoard (from TensorFlow) and Weights & Biases offer extensive logging and visualization for large-scale experiments, but they are often complex and are built around tracking and comparing metrics across runs rather than stepping through an algorithm's internals one update at a time. Algorithm Explorer seems positioned as a more immediate, pedagogical tool for smaller-scale, canonical algorithms. Its value proposition is lowering the barrier to an intuitive understanding of how optimization algorithms like gradient descent actually work.

By making gradients and weight updates visually apparent, it could help diagnose problems like vanishing/exploding gradients, poor learning rate choices, or overfitting as they happen. The inclusion of decision boundary evolution is particularly useful for explaining classification algorithms.
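To make the learning-rate point concrete, here is a hypothetical sketch (not part of Algorithm Explorer) that runs gradient descent on the one-parameter loss f(w) = w^2, records the gradient magnitude at each step, and labels the run by the ratio of the last to the first gradient. Because each update multiplies w by (1 - 2*lr), any learning rate above 1.0 makes |w| grow; the 0.5 "too slow" cutoff is an arbitrary assumption.

```python
def gd_on_quadratic(lr: float, w0: float = 1.0, steps: int = 50) -> list[float]:
    """Gradient descent on f(w) = w**2; returns |f'(w)| recorded at each step."""
    w = w0
    grad_norms = []
    for _ in range(steps):
        grad = 2.0 * w           # f'(w) = 2w
        grad_norms.append(abs(grad))
        w -= lr * grad           # same as w *= (1 - 2 * lr)
    return grad_norms


def diagnose(grad_norms: list[float], slow_ratio: float = 0.5) -> str:
    """Label a run by how much the gradient magnitude shrank from start to end."""
    ratio = grad_norms[-1] / grad_norms[0]
    if ratio > 1.0:
        return "diverging"       # updates overshoot and grow
    if ratio > slow_ratio:
        return "too slow"        # barely moved within the step budget
    return "converging"


for lr in (0.001, 0.1, 1.1):
    print(lr, diagnose(gd_on_quadratic(lr)))
# 0.001 too slow
# 0.1 converging
# 1.1 diverging
```

Watching the recorded gradient magnitudes step by step makes the difference between these three regimes visible long before the final loss value would.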

gentic.news Analysis

This launch by VMLOps fits into a clear and growing trend of ML observability and developer experience (DevX) tools. While the major cloud platforms and frameworks offer monitoring, there's a persistent gap in intuitive, real-time tools for the learning process itself, especially for those new to the field or working on model debugging. This follows a pattern of increased activity in the MLOps tooling space, where companies like Weights & Biases, Comet.ml, and Neptune.ai have seen significant growth by focusing on experiment tracking and visualization, albeit typically for larger, production-bound models.

Algorithm Explorer's focus on fundamental algorithms suggests a strategic positioning. It may serve as an on-ramp for students and junior developers, potentially driving user acquisition for VMLOps's broader MLOps platform suite. If successful, we would expect the tool to quickly expand from classic algorithms (linear models, SVMs, small neural nets) to visualize components of contemporary architectures like attention mechanisms in transformers or convolutional filters in CNNs.

The emphasis on code, math, and visuals in one place addresses a genuine pain point in ML education and communication. Explaining a backpropagation update rule from a textbook is one thing; showing the weight delta, the gradient that caused it, and the resulting shift in a classification plot simultaneously is far more powerful. For practitioners, this could shorten debugging cycles for custom loss functions or novel architectures where expected training behavior isn't well-documented.
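A one-step, one-feature version of that picture fits in a few lines. The sketch below assumes plain logistic regression with binary cross-entropy; the function name `single_step` and the starting numbers are hypothetical. It computes the gradient from a single misclassified example, the resulting weight and bias deltas, and where the decision threshold (the x at which w*x + b = 0) sits before and after the update.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def single_step(w: float, b: float, x: float, y: int, lr: float) -> dict:
    """One gradient-descent step of 1-feature logistic regression on one example.

    Assumes w stays nonzero so the threshold -b / w is defined.
    """
    p = sigmoid(w * x + b)
    grad_w = (p - y) * x          # dL/dw for binary cross-entropy
    grad_b = p - y                # dL/db
    delta_w, delta_b = -lr * grad_w, -lr * grad_b
    new_w, new_b = w + delta_w, b + delta_b
    return {
        "grad": (grad_w, grad_b),
        "delta": (delta_w, delta_b),
        "threshold_before": -b / w,
        "threshold_after": -new_b / new_w,
    }


# The example at x = 2.0 is labeled 1 but starts on the negative side (x < 4.0).
step = single_step(w=0.5, b=-2.0, x=2.0, y=1, lr=0.5)
for key, value in step.items():
    print(key, value)
```

With these starting values the threshold moves from 4.0 to roughly 1.33, so the example at x = 2.0 crosses to the positive side after a single update: gradient, delta, and boundary shift in one view.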

Looking at the competitive landscape, this tool does not directly compete with heavyweights like TensorBoard but instead complements them by focusing on explainability and intuition at the algorithmic level. Its success will likely depend on the breadth of algorithms supported, the smoothness of the interactive experience, and whether it can bridge the gap from pedagogical toy examples to providing insights for real, if smaller, research models.

Frequently Asked Questions

What is VMLOps Algorithm Explorer?

Algorithm Explorer is an interactive web-based tool from VMLOps that allows users to visualize machine learning algorithms as they train. It shows real-time updates to gradients, model weights, and decision boundaries, combining visual plots with the related mathematical concepts and code.

How is this different from TensorBoard or Weights & Biases?

Tools like TensorBoard and Weights & Biases are designed for logging, tracking, and comparing metrics and results across large-scale, production-oriented training runs. Algorithm Explorer appears focused on the real-time, step-by-step educational and debugging process for fundamental ML algorithms, emphasizing intuitive understanding of the core mechanics of learning.

Who is the target user for this tool?

The primary users are likely students learning machine learning, junior ML engineers debugging their first models, and researchers or educators who need to demonstrate or understand the detailed internal behavior of an algorithm during training. It's designed for those who want to see how learning happens, not just the final result.

Can Algorithm Explorer be used for large language models (LLMs) or diffusion models?

Based on the initial announcement, the tool is likely focused on smaller, classic machine learning algorithms where the entire state (weights, gradients) can feasibly be visualized. Visualizing the billions of parameters in an LLM in real time is not currently practical. However, the concept could be applied to specific components or smaller sub-modules of large models for educational purposes.
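Rough arithmetic shows the scale gap. The sketch assumes a hypothetical 7-billion-parameter model stored in 32-bit floats and counts only one copy of the weights plus one copy of the gradients per training step; optimizer state and activations would add more.

```python
def snapshot_bytes(num_params: int, bytes_per_value: int = 4) -> int:
    """Bytes needed to hold one copy of the weights and one of the gradients."""
    return 2 * num_params * bytes_per_value


tiny = snapshot_bytes(3)               # 2-feature logistic regression: w1, w2, b
large = snapshot_bytes(7_000_000_000)  # hypothetical 7B-parameter LLM, fp32

print(f"logistic regression: {tiny} bytes per step")  # 24 bytes
print(f"7B model: {large / 1e9:.0f} GB per step")      # 56 GB
```

Twenty-four bytes per step can be redrawn instantly; tens of gigabytes per step cannot be meaningfully plotted, which is why any LLM-scale version would have to focus on selected components.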