Key Takeaways

- A new paper introduces VoteGCL, a framework that uses few-shot LLM prompting and majority voting to create high-confidence synthetic data for graph-based recommendation systems.

- It integrates this data via graph contrastive learning to improve accuracy and mitigate bias, outperforming existing baselines.

What Happened

A new research paper, "VoteGCL: Enhancing Graph-based Recommendations with Majority-Voting LLM-Rerank Augmentation," was published on arXiv. The work addresses a fundamental problem in recommendation systems: data sparsity. Limited user-item interactions degrade performance and amplify popularity bias, where a system over-recommends already popular items.

The core innovation is a data augmentation framework that leverages Large Language Models (LLMs) and item textual descriptions (like product titles, blurbs, or attributes) to artificially create new, high-quality interaction signals.

Technical Details

The VoteGCL framework operates in two main stages:

LLM-Rerank Augmentation with Majority Voting: For a given user, the system presents an LLM with a list of candidate items and their textual descriptions. Using few-shot prompting, the LLM is asked to rerank these items based on their relevance to the user's historical profile. Crucially, this process is run multiple times (with different prompt variations or random seeds). The final synthetic "interaction" is generated via majority voting: only items consistently ranked highly across multiple LLM trials are selected. The authors provide theoretical guarantees, based on concentration of measure, that this voting mechanism yields high-confidence synthetic data.
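The voting step can be illustrated with a minimal sketch. It is not the authors' implementation: the function names, the `top_k` cutoff, the strict-majority threshold, and the `toy_rerank` stand-in for an LLM call are all assumptions made for illustration.

```python
from collections import Counter
from typing import Callable, List, Sequence


def majority_vote_augment(
    candidates: Sequence[str],
    rerank: Callable[[Sequence[str], int], List[str]],
    n_trials: int = 5,
    top_k: int = 2,
) -> List[str]:
    """Keep candidates that reach the top_k in a strict majority of trials.

    `rerank` is a hypothetical callable wrapping one LLM reranking call;
    the trial index lets it vary the prompt or the random seed.
    """
    votes: Counter = Counter()
    for trial in range(n_trials):
        ranking = rerank(candidates, trial)
        votes.update(ranking[:top_k])  # one vote per item per trial
    return [item for item in candidates if votes[item] > n_trials / 2]


def toy_rerank(candidates: Sequence[str], trial: int) -> List[str]:
    """Deterministic stand-in for an LLM: odd trials swap positions 2 and 3."""
    ranking = list(candidates)
    if trial % 2 == 1 and len(ranking) >= 3:
        ranking[1], ranking[2] = ranking[2], ranking[1]
    return ranking


items = ["silk scarf", "leather tote", "canvas sneaker", "wool coat"]
synthetic_interactions = majority_vote_augment(items, toy_rerank)
print(synthetic_interactions)
```

With five trials, "silk scarf" is in the top two every time and "leather tote" three times, so both pass; "canvas sneaker" appears only twice and is dropped. A production version would replace `toy_rerank` with real LLM calls, which is where the cost discussed later in this article comes from.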

Graph Contrastive Learning Integration: The newly generated synthetic interactions are not simply appended to the training data, as this could cause a distributional shift. Instead, they are integrated into a Graph Contrastive Learning (GCL) framework. GCL is a self-supervised technique that learns node (user/item) representations by maximizing agreement between differently augmented views of the same graph. By treating the original sparse graph and the LLM-augmented graph as two views, the model learns representations that are robust to data sparsity, leveraging the new signals while mitigating bias.
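To make "maximizing agreement between views" concrete, here is a small sketch of an InfoNCE-style contrastive loss, a common choice in graph contrastive learning. The article does not state which loss VoteGCL uses, so treating it as InfoNCE is an assumption, as are the function name, the temperature value, and the toy embeddings. The graph encoder is omitted; the inputs stand for node embeddings already computed from each view.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def _normalize(v: Vector) -> List[float]:
    # Assumes a non-zero vector; a zero vector would divide by zero.
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def _dot(u: Vector, v: Vector) -> float:
    return sum(x * y for x, y in zip(u, v))


def info_nce(view_a: Sequence[Vector], view_b: Sequence[Vector],
             temperature: float = 0.2) -> float:
    """One-directional InfoNCE: node i in view_a should match node i in view_b.

    Row i of each view is the embedding of the same user or item; every
    other row of view_b acts as a negative for that node.
    """
    a = [_normalize(v) for v in view_a]
    b = [_normalize(v) for v in view_b]
    total = 0.0
    for i, anchor in enumerate(a):
        logits = [_dot(anchor, other) / temperature for other in b]
        peak = max(logits)  # subtract the max for numerical stability
        log_denominator = peak + math.log(sum(math.exp(l - peak) for l in logits))
        total += log_denominator - logits[i]  # negative log-softmax of the positive
    return total / len(a)


original_view = [[1.0, 0.0], [0.0, 1.0]]   # embeddings from the sparse graph
augmented_view = [[0.9, 0.1], [0.1, 0.9]]  # embeddings from the augmented graph
mismatched_view = [[0.1, 0.9], [0.9, 0.1]]

print(info_nce(original_view, augmented_view))
print(info_nce(original_view, mismatched_view))
```

The loss is small when each node's two embeddings agree and large when they do not, which is the signal a GCL objective uses to pull the two views together during training.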

Results: The paper reports "extensive experiments" showing the method improves standard recommendation accuracy metrics (like Recall and NDCG) and, importantly, reduces popularity bias compared to strong baselines.
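For readers less familiar with the two accuracy metrics named above, the sketch below shows how Recall@K and NDCG@K are commonly computed for a single user with binary relevance. The function names and the toy data are illustrative assumptions; the paper's exact evaluation protocol is not described in this article.

```python
import math
from typing import Sequence, Set


def recall_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Share of the user's held-out relevant items that appear in the top k."""
    if not relevant:
        return 0.0
    hits = sum(1 for item in ranked[:k] if item in relevant)
    return hits / len(relevant)


def ndcg_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Binary-relevance NDCG: hits near the top count more than hits lower down."""
    dcg = sum(
        1.0 / math.log2(position + 2)  # position 0 gets discount log2(2) = 1
        for position, item in enumerate(ranked[:k])
        if item in relevant
    )
    ideal_hits = min(len(relevant), k)
    idcg = sum(1.0 / math.log2(position + 2) for position in range(ideal_hits))
    return dcg / idcg if idcg > 0 else 0.0


ranked_items = ["tote", "scarf", "coat", "sneaker"]  # model's ranking for one user
held_out = {"tote", "coat"}                          # items the user actually chose

print(recall_at_k(ranked_items, held_out, k=2))
print(ndcg_at_k(ranked_items, held_out, k=2))
```

Reported scores are typically the average of these per-user values over all test users.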

Retail & Luxury Implications

This research is directly applicable to the core challenge of personalization at scale in retail and luxury. The implications are significant for several key scenarios:

- Cold-Start Problem for New Products: A luxury house launches a new handbag line with no purchase history. VoteGCL could use the item's detailed description (materials, craftsmanship, design inspiration) and LLM understanding to connect it to users with profiles indicating a taste for similar attributes, jump-starting its recommendation lifecycle.

- Long-Tail Discovery: High-end retailers often carry unique, niche items that rarely get clicked or purchased. This framework can synthetically create plausible connections between these long-tail items and suitable customers, driving discovery and increasing inventory turnover beyond the bestselling classics.

- Mitigating the "Rich-Get-Richer" Bias: Popular luxury items (e.g., iconic monogram bags) naturally dominate recommendations. By generating synthetic interest in less-popular but highly relevant items, the system can create a more balanced, exploratory experience that aligns with a luxury client's desire for uniqueness and personal curation.

- Enhancing Digital Wardrobing & Styling: For services that recommend complete looks or complementary items, data sparsity is acute (users rarely buy full outfits at once). LLM-augmentation can infer holistic style compatibility from textual attributes, creating synthetic outfit-level interactions to train better stylist agents.

The Critical Gap: The research is promising but academic. The cost, latency, and operational complexity of running multiple LLM inferences per user/candidate set for majority voting in a production, real-time system are non-trivial. The immediate application is more likely in offline batch processing to enrich training data for existing models, or in hybrid systems where the LLM-augmented graph provides a rich prior for a lighter-weight real-time ranker.

gentic.news Analysis

This paper arrives amidst a significant week of activity on arXiv focused on the limits and applications of LLMs. It directly contrasts with the argument published just a day earlier by a Columbia professor that LLMs are fundamentally limited for scientific discovery due to their interpolation-based nature. VoteGCL cleverly sidesteps the demand for true generation or discovery; it uses the LLM as a sophisticated interpolation engine over existing item semantics and user patterns, a task perfectly suited to its strengths. It also provides a potential solution to the critical failure modes of LLM-based rerankers in cold-start scenarios diagnosed in another paper published the same day, by employing ensemble-like majority voting for robustness.

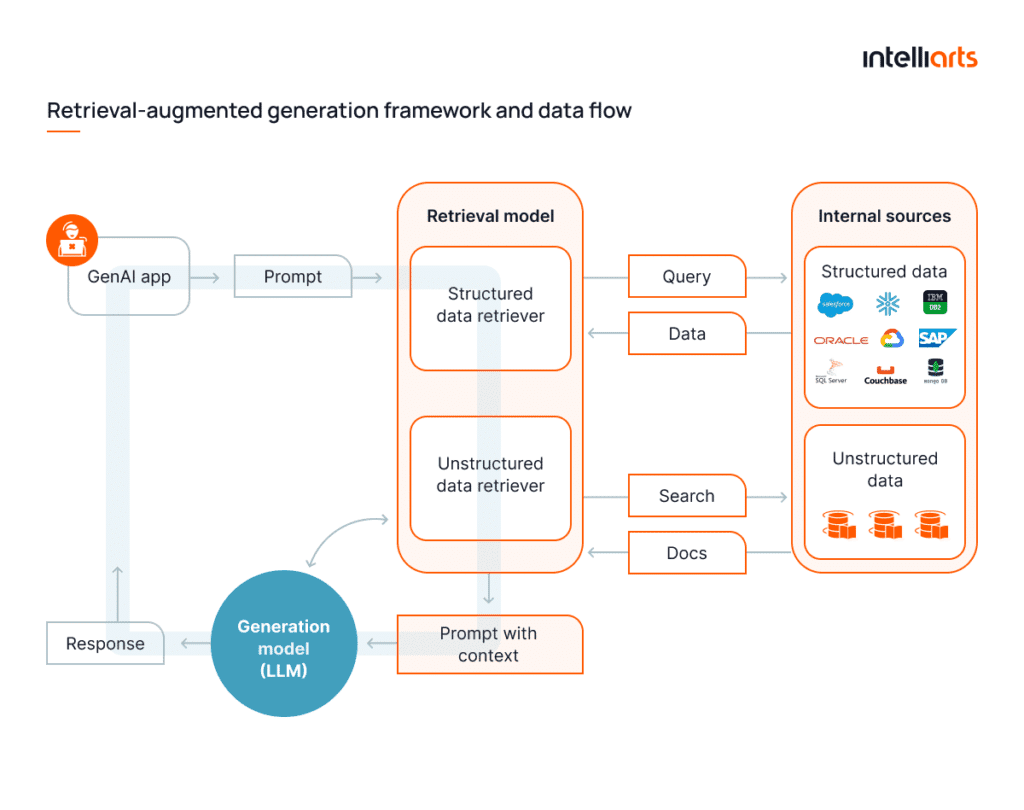

The framework sits at the convergence of three major trends we track: large language models, Retrieval-Augmented Generation (as the LLM is "retrieving" knowledge from item text), and Recommender Systems. It represents a move beyond using LLMs as mere chat interfaces or zero-shot rankers, toward integrating them as core, reasoning-based data augmentation engines within traditional ML pipelines. This aligns with the broader industry shift towards agentic hybrid systems, as seen in our coverage of reference architectures for dataset search.

For luxury retail AI leaders, the takeaway is that the frontier of recommendation is moving from collaborative filtering alone to semantic- and knowledge-augmented systems. Rich, well-structured product metadata (textual descriptions, attributes) is becoming more valuable because it is the fuel for LLM-based reasoning. The next step is to operationalize such research while rigorously managing the risks highlighted in our recent coverage of security frameworks for autonomous AI agents in commerce, including cost, latency, and the potential for the LLM to inject its own subtle biases.