The Technique — What Works After Six Months

After using Claude Code as his primary Python development tool for half a year, developer Peyton Green has distilled what actually works versus what's just noise. The rapid patch cycle (v2.1.85 arrived after three patches in 24 hours) prompted him to share practical, actionable advice for developers who want to move beyond basic tutorials.

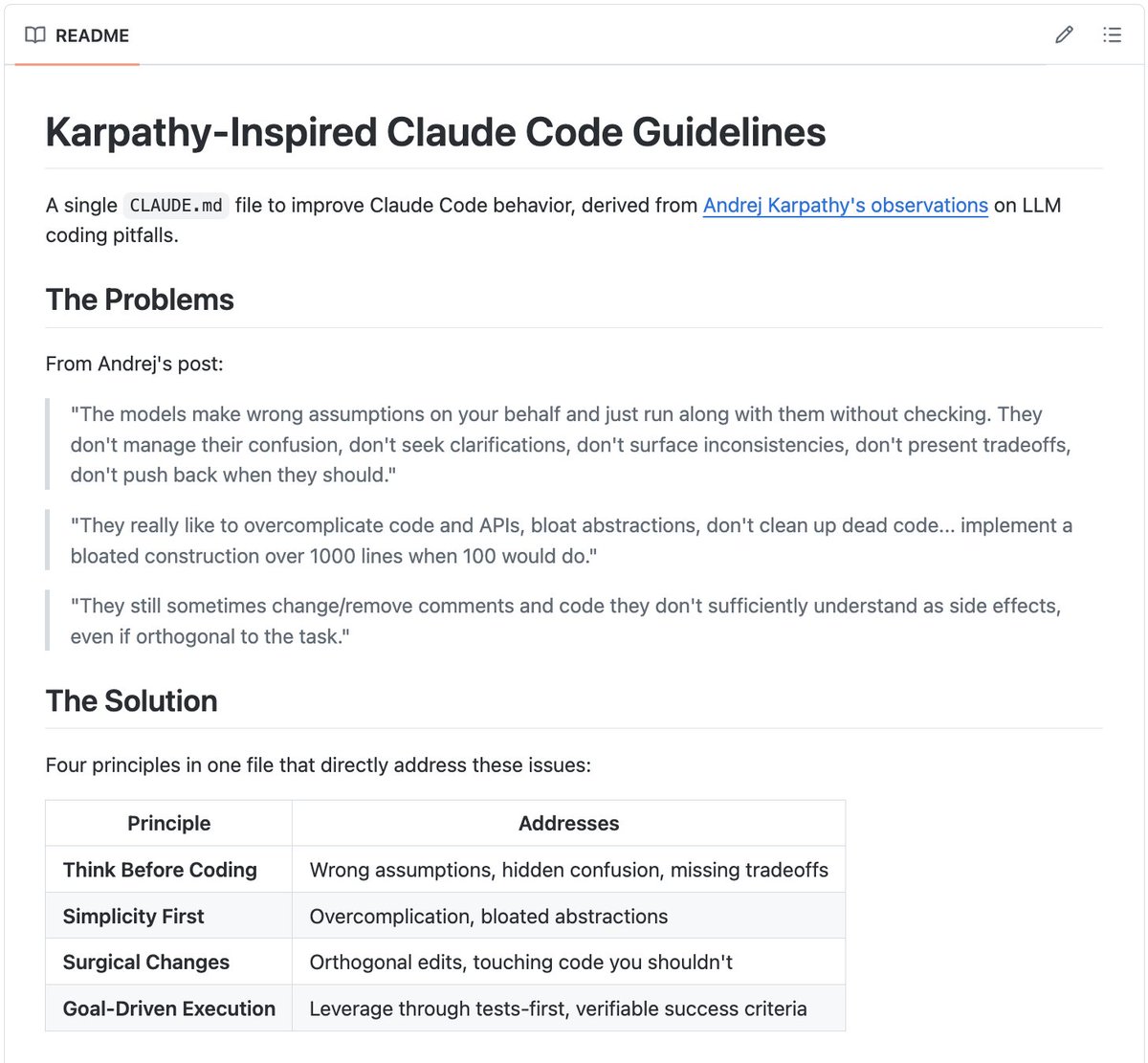

Why It Works — The CLAUDE.md Foundation

Most developers know about CLAUDE.md but underestimate its impact. Without it, Claude Code makes educated guesses about your project. With a well-structured one, it knows your exact toolchain, project structure, and boundaries. This follows Anthropic's emphasis on CLAUDE.md as a persistent configuration system that optimizes Claude Code for complex, multi-step development tasks.

Green's template includes critical sections most developers miss:

## Don't touch

- `migrations/` — hands off unless explicitly asked

- `.env` files — never read or modify

- `pyproject.toml` dependency versions — propose changes, don't apply

This "Don't touch" section keeps Claude Code's helpfulness from becoming a liability: without it, the tool may edit adjacent files you never intended to change.
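As a complement to the CLAUDE.md rules, the same boundaries can be checked mechanically after a session. The following is a minimal sketch, not part of Green's template: the function names `is_protected` and `flag_protected` are hypothetical, and it assumes repo-relative POSIX paths and that a `migrations/` directory at any depth counts as protected.

```python
from pathlib import PurePosixPath


def is_protected(path: str) -> bool:
    """Return True if a repo-relative path falls under a "Don't touch" rule."""
    p = PurePosixPath(path)
    # Any file inside a directory named migrations/ (assumption: at any depth).
    if "migrations" in p.parts[:-1]:
        return True
    # .env itself and variants such as .env.local.
    if p.name == ".env" or p.name.startswith(".env."):
        return True
    # The rule only covers dependency versions; this sketch flags the whole
    # file so a human can inspect the diff.
    return p.name == "pyproject.toml"


def flag_protected(changed_paths: list[str]) -> list[str]:
    """Keep only the changed paths that need explicit human sign-off."""
    return [path for path in changed_paths if is_protected(path)]


changed = ["src/app.py", "migrations/0001_init.py", ".env.local", "app/migrations.py"]
print(flag_protected(changed))
```

Feeding it the list of files changed in a session gives a quick review list; a file such as `app/migrations.py` is not flagged because only directories named `migrations` match.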

How To Apply It — Three Essential Commands

1. Interactive Mode: claude

Run plain claude in your project root for complex, multi-step tasks. Green notes: "The approval flow feels slow the first week, then becomes natural — you're reviewing diffs, not babysitting output."

2. One-Shot Mode: claude -p "task description"

Use this for well-defined, specific tasks:

claude -p "Write a pytest fixture that creates a temp SQLite database for testing, tears it down after each test, and lives in tests/conftest.py"

The key is precision — vague prompts produce vague results.
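To show why that prompt is precise enough to act on, here is a rough sketch of the setup and teardown logic it asks for. It is an illustration under assumptions, not Claude Code's actual output: the `items` table and the name `temp_sqlite_db` are hypothetical, and the logic is written as a standard-library context manager so it runs without pytest installed.

```python
import sqlite3
import tempfile
from collections.abc import Iterator
from contextlib import contextmanager
from pathlib import Path

# Hypothetical schema, used only to make the example concrete.
SCHEMA = "CREATE TABLE items (id INTEGER PRIMARY KEY, name TEXT NOT NULL)"


@contextmanager
def temp_sqlite_db() -> Iterator[sqlite3.Connection]:
    """Yield a connection to a fresh SQLite file, then clean everything up."""
    with tempfile.TemporaryDirectory() as tmp_dir:
        conn = sqlite3.connect(str(Path(tmp_dir) / "test.db"))
        try:
            conn.execute(SCHEMA)
            conn.commit()
            yield conn
        finally:
            # Close before the temporary directory is removed.
            conn.close()


with temp_sqlite_db() as conn:
    conn.execute("INSERT INTO items (name) VALUES (?)", ("widget",))
    print(conn.execute("SELECT name FROM items").fetchall())
```

In `tests/conftest.py`, a generator decorated with `@pytest.fixture` could open `with temp_sqlite_db() as conn:` and `yield conn`, which gives each test its own database and tears it down afterwards.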

3. Session Continuation: claude --continue

Resumes your previous session. Context restoration is not perfect, but it beats re-explaining everything when you return to a half-done task.

The Test-First Workflow That Actually Works

Green's most effective pattern: describe desired behavior as a test, then have Claude Code implement the function.

# Your prompt:

"Write the test first. I want a function `parse_config(path: str) -> Config` that reads a YAML file and returns a validated Config dataclass. The test should cover: valid input, missing required field, invalid type for a field, and file not found."

# After Claude writes tests/test_config.py:

"Now implement parse_config to pass those tests."

This works because tests constrain the solution space. Claude Code is better at implementing against a spec than guessing what you want.

Two Critical Best Practices

1. Ask for the Plan First

For non-trivial tasks, ask "what's your plan?" before "write the code." This catches misunderstandings early: a plan that opens with "I'd add a Redis-backed rate limiter..." when you don't have Redis is much cheaper to correct as text than as 200 lines of code.

2. Keep Sessions Focused

Claude Code's context window is large but not infinite. Green recommends:

- Start a new session for each distinct task

- Don't drag in unrelated files "just in case"

- Use `--continue` only for the same task, not adjacent ones

One task per session sounds inefficient but proves faster in practice.

What Doesn't Work

- Broad improvement requests: "Look at the codebase and suggest improvements" yields obvious or irrelevant suggestions

- Auto-accept edits: Keep `autoAcceptEdits: false` to review changes one at a time

- Complex architecture first drafts: Claude Code excels at implementation, not high-level system design

The Prompt Library Solution

After months of repetition, Green compiled his working prompts into the AI Developer Toolkit — 272 prompts organized by task type (testing, refactoring, debugging, etc.). The key insight: prompts that work best give Claude Code clear output formats, explicit constraints, and examples.
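One way to keep reusable prompts consistent on those three points is a small template type. This is a hypothetical sketch and does not reflect how the AI Developer Toolkit is actually organized; the `PromptTemplate` class and its field names are assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class PromptTemplate:
    """A reusable prompt with an explicit output format, constraints, and examples."""

    task: str
    output_format: str
    constraints: list[str] = field(default_factory=list)
    examples: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Assemble the prompt text, skipping sections that are empty."""
        lines = [f"Task: {self.task}", f"Output format: {self.output_format}"]
        if self.constraints:
            lines.append("Constraints:")
            lines.extend(f"- {item}" for item in self.constraints)
        if self.examples:
            lines.append("Examples:")
            lines.extend(f"- {item}" for item in self.examples)
        return "\n".join(lines)


fixture_prompt = PromptTemplate(
    task="Write a pytest fixture that creates a temp SQLite database",
    output_format="A single fixture in tests/conftest.py",
    constraints=["Tear the database down after each test"],
)
print(fixture_prompt.render())
```

The rendered string can then be passed to `claude -p` as the task description.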

gentic.news Analysis

This developer's experience aligns with trends we've observed across 400 Claude Code articles. The emphasis on CLAUDE.md configuration echoes our coverage of Anthropic's push toward persistent project context — a strategy that leverages Claude Opus 4.6's complex reasoning capabilities while constraining its output to project-specific patterns.

The test-first workflow Green describes plays to Claude Code's strength at implementing against a concrete specification. By providing test specifications first, developers give Claude Code explicit requirements to implement against, rather than asking it to generate architecture from scratch.

This practical approach contrasts with broader "AI will replace developers" narratives, instead positioning Claude Code as a tool that amplifies developer productivity when used with specific, battle-tested workflows. The rapid patch cycle Green mentions (three versions in 24 hours) reflects Anthropic's aggressive iteration on Claude Code, which we've tracked through multiple version jumps as they compete in the crowded AI coding assistant space.

Green's warning against letting Claude Code run multiple steps without checkpoints aligns with our previous analysis of Claude Agent frameworks — while multi-agent collaboration shows promise for complex workflows, individual Claude Code sessions benefit from focused, incremental progress with human oversight at key decision points.