A GitHub repository containing a single markdown file, CLAUDE.md, has rapidly gained 15,000 stars, highlighting a growing developer trend: systematically engineering the behavior of AI coding assistants rather than accepting their raw output.

The file is a direct application of observations made by Andrej Karpathy, former Senior Director of AI at Tesla and a founding member of OpenAI. Karpathy has frequently noted that Large Language Models (LLMs) make predictable mistakes when generating code, such as unnecessary over-engineering, ignoring a project's existing patterns, and adding unrequested dependencies.

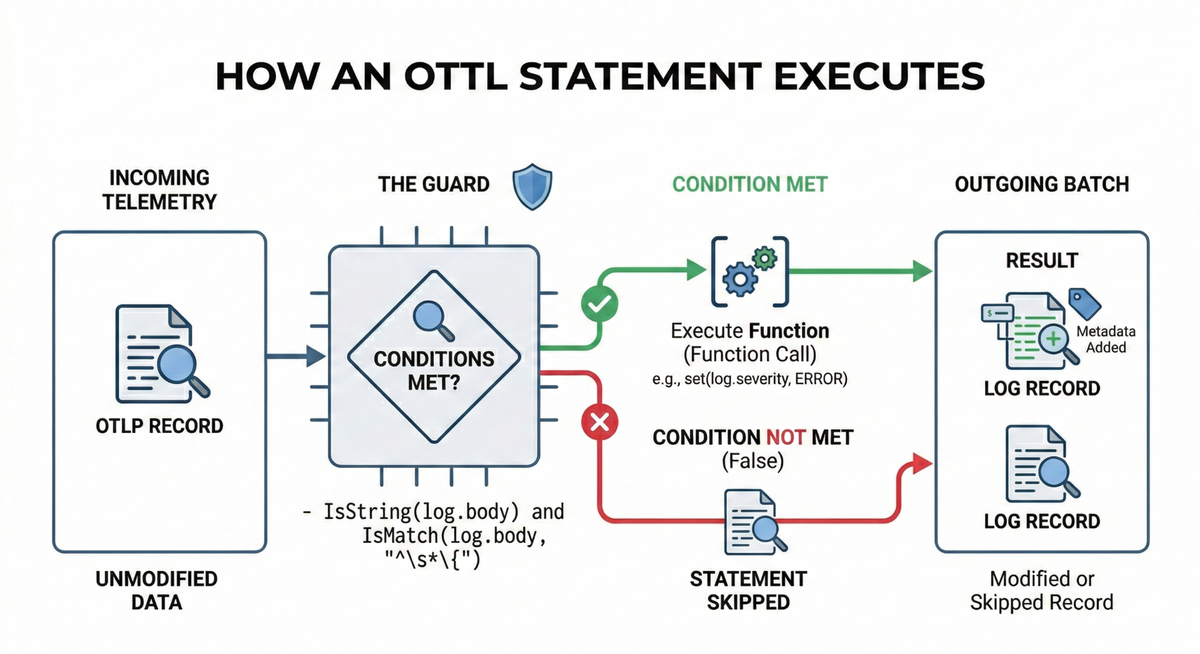

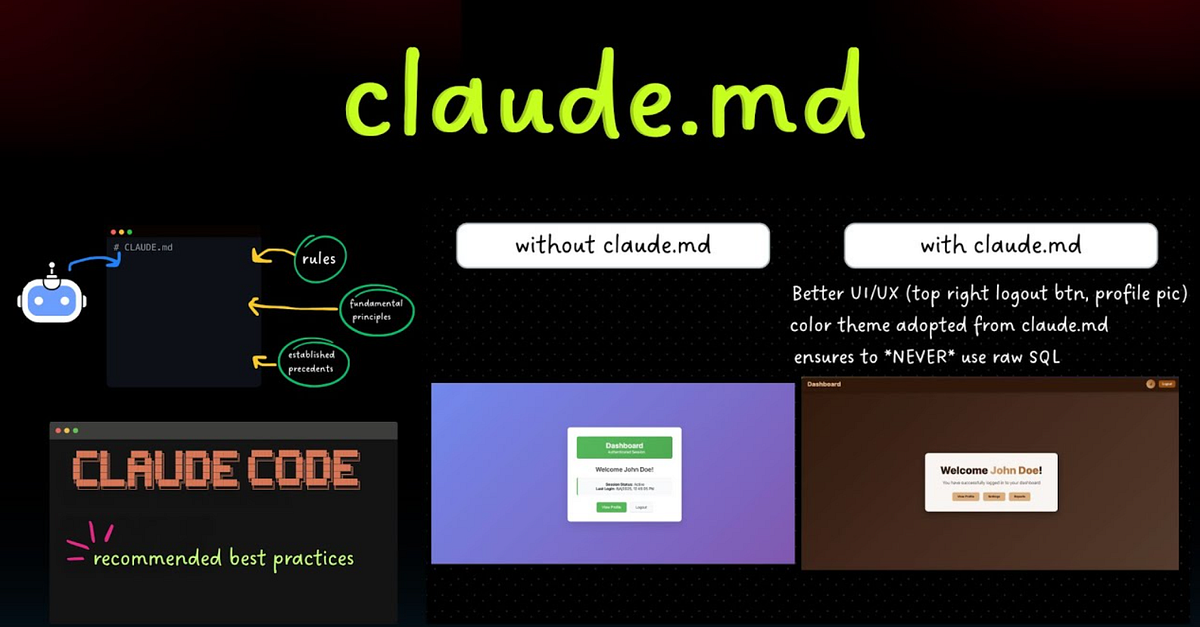

The CLAUDE.md file acts as a project-specific set of instructions for Anthropic's Claude Code (and potentially other assistants). By placing this file in a repository's root directory, developers can provide structured, contextual guidelines that shape how the AI interacts with and writes code for that entire project.

Key Takeaways

- A GitHub repo containing a single CLAUDE.md file, inspired by Andrej Karpathy's observations on predictable LLM coding errors, has reached 15,000 stars.

- It represents a move from simply using AI to write code to engineering its behavior for better output.

What's in the File?

The CLAUDE.md file is a prompt-engineering artifact. It doesn't contain any executable code, frameworks, or complex tooling. Instead, it's a curated list of behavioral directives. Based on Karpathy's stated principles, its rules likely aim to counteract common LLM failures:

- Demand Simplicity: Instructions to avoid over-engineering solutions, prefer straightforward logic, and resist adding unnecessary abstraction layers.

- Enforce Consistency: Guidelines to match the project's existing coding style, naming conventions, and architectural patterns rather than introducing new ones.

- Control Dependencies: Explicit rules to not add new libraries, packages, or modules without explicit permission, preventing "dependency creep."

- Project-Specific Context: It can define the project's tech stack, primary patterns, and even "philosophies" (e.g., "this is a lean API server, not a monolithic app").
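To make the four categories above concrete, here is a minimal Python sketch that renders them into a markdown guidelines file. The directive wording, the `SECTIONS` mapping, and the helper names are illustrative assumptions; they paraphrase the categories listed in this article and are not the contents of the actual repository file.

```python
from pathlib import Path

# Hypothetical directives paraphrasing the four categories above.
# They are NOT copied from the actual repository file.
SECTIONS = {
    "Simplicity": [
        "Prefer the most straightforward solution that works.",
        "Do not add abstraction layers unless asked.",
    ],
    "Consistency": [
        "Match the existing code style, naming conventions, and architecture.",
    ],
    "Dependencies": [
        "Do not add libraries or packages without explicit permission.",
    ],
    "Project context": [
        "This is a lean API server, not a monolithic app.",
    ],
}


def render_guidelines(sections: dict[str, list[str]]) -> str:
    """Render the directive categories as a markdown document."""
    lines = ["# Project guidelines for AI assistants", ""]
    for title, rules in sections.items():
        lines.append(f"## {title}")
        lines.extend(f"- {rule}" for rule in rules)
        lines.append("")
    return "\n".join(lines)


def write_guidelines(project_root: Path, sections: dict[str, list[str]]) -> Path:
    """Write the rendered guidelines to CLAUDE.md in the project root."""
    target = project_root / "CLAUDE.md"
    target.write_text(render_guidelines(sections), encoding="utf-8")
    return target
```

Calling `write_guidelines(Path("."), SECTIONS)` would create the file in the current directory. In practice most teams would simply edit the markdown by hand; the sketch only shows how little structure the file needs.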

Why a Simple Markdown File is Gaining Traction

This approach resonates because it addresses a practical, widespread pain point. Developers using Copilot, Claude Code, or ChatGPT for coding routinely run into these frustrating, time-wasting behaviors. The CLAUDE.md file offers a lightweight, version-controlled remedy.

- Zero Tooling Overhead: It requires no installation, no new CLI tools, and no changes to the development environment. It's just a text file.

- Project-Specific & Portable: Guidelines travel with the repo. Every contributor (human or AI) benefits from the same context, improving consistency.

- Prompt Engineering as Code: It treats the "ideal behavior" of the AI as a declarative specification that can be refined, reviewed, and merged like any other source artifact.
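Treating the file as a reviewed source artifact also means it can be checked automatically, like any other file in the repository. The following is a minimal sketch of such a check, assuming a team has agreed on a set of required section headings; the `REQUIRED_SECTIONS` names and the `missing_sections` helper are hypothetical and would need to match your own file.

```python
import re

# Hypothetical section titles a team might require; adjust to your own file.
REQUIRED_SECTIONS = ("Simplicity", "Consistency", "Dependencies")


def missing_sections(markdown: str, required=REQUIRED_SECTIONS) -> list[str]:
    """Return required section titles that have no matching markdown heading."""
    headings = {
        match.group(1).strip().lower()
        for match in re.finditer(
            r"^#{1,6}[ \t]+(.+?)[ \t]*$", markdown, flags=re.MULTILINE
        )
    }
    return [title for title in required if title.lower() not in headings]
```

A CI job could read CLAUDE.md, call `missing_sections` on its text, and fail the build when the returned list is non-empty, so that edits to the guidelines go through the same review gate as code.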

The Bigger Shift: From Code Generation to Behavior Engineering

The viral success of this single file signals a maturation in how developers leverage AI. The initial phase was about generation ("write a function that does X"). The emerging phase is about governance ("here is how you should think while writing all functions for this project").

Developers are moving past seeing AI as a mere code-completion tool and beginning to treat it as a team member that needs onboarding, style guides, and clear operational boundaries. The best "tools" in this new ecosystem aren't always software; sometimes, they are precisely crafted instructions.

gentic.news Analysis

This development is a concrete manifestation of a trend we've tracked since Anthropic released Claude Code, its terminal-based agentic coding tool, as a research preview in early 2025. Claude Code gave developers an assistant that works directly against a repository, and it created a new user base hungry for ways to optimize its output. The 15K stars for CLAUDE.md indicate this community is rapidly developing its own best practices on top of Anthropic's official tooling.

This aligns with the broader industry move toward prompt management systems and AI agent frameworks, but takes a strikingly minimalist, developer-centric approach. While companies like LangChain and LlamaIndex build complex orchestration layers, this trend shows that for many everyday coding tasks, a well-engineered static prompt can be a highly effective solution. It also creates a fascinating new artifact for software projects: the AI policy file, which may become as standard as a README.md or docker-compose.yml.

Furthermore, this connects to Andrej Karpathy's ongoing influence since leaving OpenAI in early 2024 to focus on educational content and grassroots AI tools. His observations, shared via his widely followed social media and blog, continue to directly shape developer behavior and tool creation, demonstrating his role as a trendsetter in practical AI application.

Frequently Asked Questions

What is a CLAUDE.md file?

A CLAUDE.md file is a plain text markdown file placed in the root of a software project. It contains structured instructions and behavioral guidelines designed to steer AI coding assistants, like Anthropic's Claude Code, toward producing code that is simpler, more consistent, and better aligned with the project's existing patterns and dependencies.

How do I use the CLAUDE.md file?

Copy the CLAUDE.md file from the GitHub repository into the root directory of your own project. Claude Code reads a CLAUDE.md file in the project root automatically at the start of a session and includes it in its context, so no further setup is needed. With other assistants, you may need to reference the file explicitly or paste its contents into the conversation before the guidelines take effect.

Does this only work with Claude Code?

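While specifically designed for and named after Claude Code, the principles and format are transferable. The concepts can be adapted into similar files (e.g., COPILOT.md, AI_GUIDELINES.md) for use with GitHub Copilot, ChatGPT, or other AI coding assistants. Some tools define their own location for such instructions (GitHub Copilot, for example, reads `.github/copilot-instructions.md`), so check the tool's documentation before choosing a name. The core idea is providing structured project context.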
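If a team wants the same guidelines available under several file names, one option is to keep CLAUDE.md as the single source and copy its text into the others. This is a minimal sketch under that assumption; the file names come from the examples above, the `mirror_guidelines` helper is hypothetical, and whether a given assistant actually reads any of these files automatically must be verified against that tool's documentation.

```python
from pathlib import Path

# File names taken from the examples above; whether a given assistant reads
# them automatically is an assumption to verify against that tool's docs.
MIRROR_NAMES = ("COPILOT.md", "AI_GUIDELINES.md")


def mirror_guidelines(project_root: Path, names=MIRROR_NAMES) -> list[Path]:
    """Copy CLAUDE.md's text into sibling files so every copy stays identical."""
    source = project_root / "CLAUDE.md"
    text = source.read_text(encoding="utf-8")
    written = []
    for name in names:
        target = project_root / name
        target.write_text(text, encoding="utf-8")
        written.append(target)
    return written
```

Keeping one canonical file and generating the rest avoids the copies drifting apart; the trade-off is an extra step whenever CLAUDE.md changes.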

Is this related to OpenAI's o1 or other reasoning models?

The approach is complementary but different. Advanced reasoning models like OpenAI's o1 series aim to reduce errors through better internal reasoning. CLAUDE.md operates externally, attempting to prevent predictable errors through explicit instruction. They are two approaches to the same problem of improving AI code quality: one works through how the model is trained and how it reasons at inference time, the other through user-provided context.