When AI researcher and educator Andrej Karpathy shared his setup for a personal LLM-powered knowledge base, it sparked a practical question in the community: how do you turn that dense, textual knowledge into compelling, visual presentations for sharing?

Omar S. (@omarsar0), a prominent figure in applied AI and agent workflows, has built and shared a solution. He created an automated pipeline that transforms his curated wiki of AI research papers (over 1,000 papers across 20 AI agent topics) into polished slide presentations using Gamma, a modern presentation tool.

Key Takeaways

- Omar S. automated the creation of slide presentations from a personal wiki of 1,000+ AI papers.

- The pipeline uses the Gamma MCP connector for Claude to generate polished decks on demand.

What Happened: From Obsidian Vault to Inline Slides

The core of the system is a personal knowledge base managed in Obsidian, a popular note-taking and wiki application. This vault serves as the structured source of truth for the research.

Omar's pipeline leverages the Model Context Protocol (MCP), an open standard developed by Anthropic that allows LLMs like Claude to securely connect to external tools and data sources. He uses the official Gamma MCP connector, which provides Claude with the tools needed to generate and edit Gamma presentations.

Here’s the automated flow:

- Command: Omar issues a single command to his custom AI agent (e.g., "create a presentation on the top papers for reasoning agents").

- Retrieval: The agent queries his Obsidian wiki to pull the most relevant papers from specified topics.

- Generation: The retrieved content is fed to Claude, which uses the Gamma MCP tools to structure and design a complete slide deck.

- Preview: The final presentation is rendered and embedded inline within Omar's dashboard using an iframe, providing immediate visual feedback.
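
The retrieval step in the flow above can be illustrated with a minimal Python sketch. It assumes the vault is a folder of Markdown notes (which is how Obsidian stores them) and ranks notes by simple keyword counts. Omar's post does not describe his actual retrieval logic, so the function names `score_note` and `retrieve_notes` and the scoring scheme are hypothetical.

```python
import re
from pathlib import Path


def score_note(text: str, query_terms: list[str]) -> int:
    """Count occurrences of the query terms in a note (case-insensitive)."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return sum(words.count(term) for term in query_terms)


def retrieve_notes(vault: Path, query: str, top_k: int = 3) -> list[tuple[str, int]]:
    """Return (note name, score) pairs for the top_k best-matching notes.

    Hypothetical stand-in for the agent's retrieval step: a real setup
    might use tags, embeddings, or the agent's own file-search tools.
    """
    terms = re.findall(r"[a-z0-9]+", query.lower())
    scored = []
    for path in sorted(vault.rglob("*.md")):
        score = score_note(path.read_text(encoding="utf-8"), terms)
        if score > 0:  # skip notes that never mention the query terms
            scored.append((path.stem, score))
    # Highest score first; ties are broken alphabetically by note name.
    scored.sort(key=lambda item: (-item[1], item[0]))
    return scored[:top_k]
```

Calling `retrieve_notes(Path("path/to/vault"), "reasoning agents")` would return the names of the notes that mention those terms most often, which the agent could then pass on to the generation step.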

Omar built his agent orchestrator using the Claude Agent SDK, Anthropic's framework for creating persistent, tool-using AI agents. The SDK gives the agent ongoing access to both his knowledge base and the Gamma slide-creation tools, so a single command can drive the whole flow.

Technical Details: MCP as the Glue

The key enabling technology is the Model Context Protocol. By adding the official Gamma connector to a Claude instance, developers expose Gamma's features to the LLM as callable tools. This moves beyond prompt-and-copy workflows: Claude can create rich content programmatically instead of producing text that a person then pastes into another application.
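
To make the idea of a tool call concrete, here is a minimal Python sketch of the kind of message an MCP client sends. MCP is built on JSON-RPC 2.0, and tools are invoked with the `tools/call` method. The tool name `generate_presentation` and its arguments are hypothetical, since the post does not list the Gamma connector's actual tools or schemas, and in practice Claude's client builds these messages for you.

```python
import json
from itertools import count

# Simple counter so each request gets a unique JSON-RPC id.
_request_ids = count(1)


def make_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool name and arguments, for illustration only.
message = make_tool_call(
    "generate_presentation",
    {"topic": "Top papers for reasoning agents", "num_slides": 10},
)
print(message)
```

The point of the standard is that every MCP server accepts requests of this shape, so an agent needs no tool-specific client code to use a new connector.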

"The Gamma connector for Claude is a great choice for generating beautiful and professional slides. Easy to use. Go to your Claude instance and add the official Gamma connector. That's it!" Omar noted in his post.

This setup exemplifies the Retrieval-Augmented Generation (RAG) pattern applied to a personal workflow: the agent retrieves relevant material from a specialized knowledge base (the Obsidian vault), and an LLM (Claude) synthesizes that material into a new format (a Gamma presentation) using a dedicated tool.
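
The "augmented" part of that pattern can be sketched as a prompt-assembly step: retrieved notes are placed in the prompt so the model works from them rather than from memory. This is an illustrative Python sketch, not Omar's implementation. The `Note` type, the `build_deck_prompt` function, and the prompt wording are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Note:
    """A retrieved wiki entry (hypothetical shape)."""
    title: str
    summary: str


def build_deck_prompt(topic: str, notes: list[Note], max_slides: int = 10) -> str:
    """Combine the user's topic with the retrieved notes into one prompt."""
    # Number each source so the model can cite it on the slides.
    sources = "\n".join(
        f"[{i}] {note.title}: {note.summary}"
        for i, note in enumerate(notes, start=1)
    )
    return (
        f"Create a slide deck of at most {max_slides} slides on: {topic}\n"
        "Use only the sources below and cite them by number.\n\n"
        f"Sources:\n{sources}"
    )


# Placeholder notes, for illustration only.
prompt = build_deck_prompt(
    "reasoning agents",
    [Note("Paper A", "Summary of paper A."), Note("Paper B", "Summary of paper B.")],
)
print(prompt)
```

Grounding the prompt in numbered sources keeps the deck tied to the curated wiki and makes each slide's claims traceable to a specific paper.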

Why It Matters: Automating Knowledge Sharing

For researchers, engineers, and educators, the friction between deep research synthesis and creating shareable summaries is a significant time sink. This pipeline demonstrates a practical automation of that "last mile" of knowledge work.

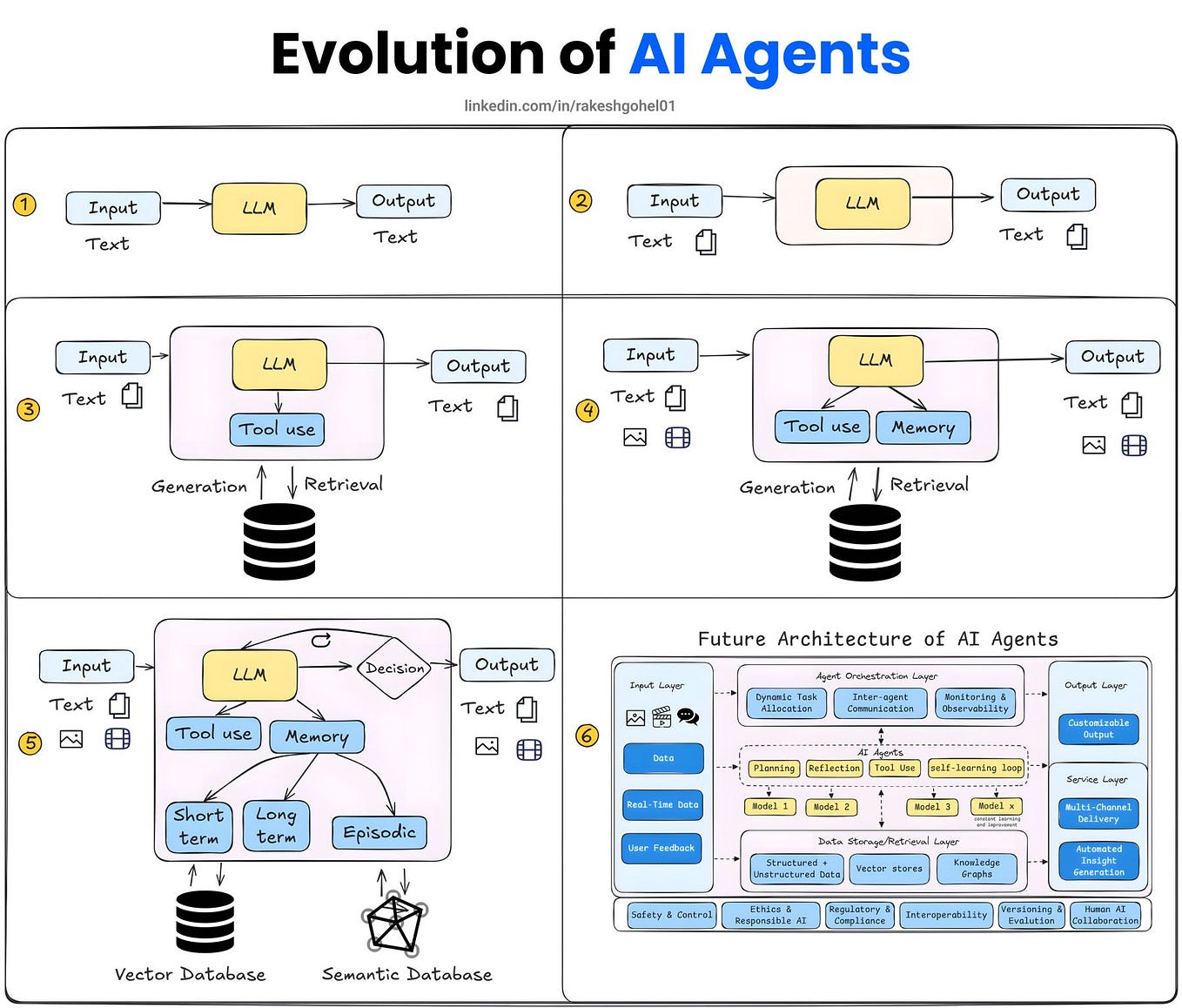

It showcases a move from LLMs as mere chat interfaces toward LLMs as the core reasoning engine in automated systems that manage personal data and execute complex, multi-step tasks involving external applications. The use of MCP is particularly significant, as it offers a standardized, secure way for LLMs to work with a growing ecosystem of tools and data that lie outside their training data.

gentic.news Analysis

This project sits at the convergence of several major trends we've been tracking: the rise of personal AI agents, the standardization of tool-use via protocols like MCP, and the maturation of RAG for private knowledge management. Omar's workflow is a tangible implementation of the vision Karpathy outlined, moving from a static knowledge base to a dynamic content generation system.

It aligns with a broader industry shift towards agentic workflows, where LLMs are tasked with executing multi-step processes. As we covered in our analysis of Anthropic's Claude Agent SDK launch, the ability to create persistent, tool-using agents is becoming a foundational capability. Omar's use of the SDK with Gamma's MCP connector is a textbook example of this paradigm in action.

The choice of Gamma is also notable. It reflects a trend where next-generation AI-native content tools (like Gamma for presentations, Tome for decks, or Diagram for diagrams) are becoming preferred endpoints for AI-generated output over traditional office suites, due to their better APIs, modern design, and often built-in AI features. This pipeline effectively treats Gamma as a rendering engine for structured knowledge.

For practitioners, the takeaway is the power of MCP as an integration layer. Instead of building custom API integrations for every tool, developers can now rely on a growing library of standardized MCP servers (like the one for Gamma) to quickly equip their agents with new capabilities. This significantly lowers the barrier to creating sophisticated, multi-tool automations for personal productivity and knowledge management.

Frequently Asked Questions

What is the Model Context Protocol (MCP)?

The Model Context Protocol is an open standard developed by Anthropic. It defines a secure way for LLMs (like Claude) to connect to external data sources, tools, and APIs. Think of it as a universal adapter that allows an AI to safely interact with your codebase, database, or third-party apps like Gamma, without needing custom, one-off integrations for each tool.

How is this different from just asking ChatGPT to make slides?

The key difference is automation and data integration. Asking a chatbot to make slides requires manually providing it the content and then copying the output. This pipeline is fully automated: the agent autonomously retrieves the latest data from a personal, curated knowledge base (the Obsidian vault) and uses a direct tool connection (MCP) to generate and render the slides in a specific application (Gamma), all from a single command.

Do I need to be a programmer to set this up?

Implementing a full pipeline like Omar's requires technical skill, including familiarity with the Claude Agent SDK, Obsidian, and potentially some scripting. However, the barrier is lowering. Any user can already add the Gamma MCP connector to their Claude Desktop instance and start asking Claude to create Gamma presentations directly, which is the foundational step of this workflow.

What are other use cases for MCP beyond making slides?

MCP is designed for any tool-augmented LLM task. Common use cases include connecting an LLM to a company's code repository for code analysis, linking it to internal databases for business intelligence queries, integrating with project management tools like Jira to summarize tickets, or connecting to design tools like Figma. It turns the LLM into a unified interface for a suite of specialized tools.