What Happened

A social media post from user @hasantoxr claims that "someone built an AI system that takes a research idea and outputs a full academic paper." The post further states the generated papers include "real citations" and "real experimental sections."

The post, which has gained attention on X (formerly Twitter), does not identify the developers, provide a paper or technical report, link to a repository, or name the system. No benchmarks, validation studies, or example outputs are provided. The claim remains an unverified assertion circulating on social media.

Context

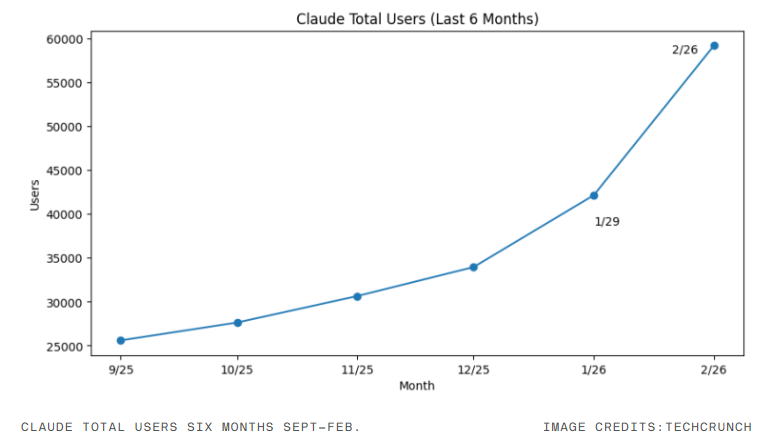

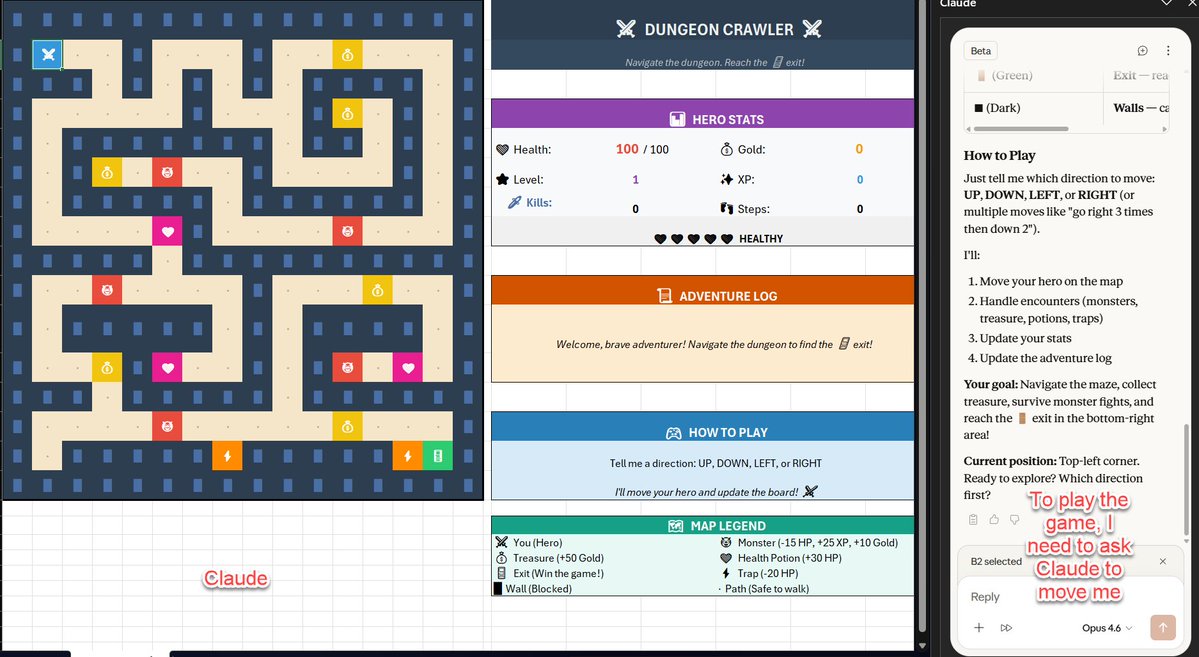

The claimed system would join a growing category of AI-assisted scientific writing tools. General-purpose assistants such as ChatGPT and Claude, and specialized tools such as Elicit and Scite.ai, can already help with literature review, drafting, and citation management. Fully automating the path from a novel research idea to a complete, valid academic paper, with coherent experiments and correct citations, is a significantly more ambitious undertaking.

Major challenges for such a system would include:

- Generating novel, logically sound hypotheses

- Designing and describing valid experimental methodologies

- Synthesizing and accurately citing relevant prior work without hallucination

- Interpreting and discussing results in the context of the field

No current publicly available model is known to reliably perform this full pipeline without extensive human review of its facts, methodology, and academic rigor.
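To make the citation challenge concrete, here is a minimal sketch of the kind of check a human reviewer might automate: flagging cited titles that have no close match in a trusted bibliography. It is not the method of the system described in the post, which has published no details. The function names, the 0.9 similarity threshold, and the placeholder titles are all assumptions made for illustration, and a real check would query a bibliographic database rather than a hard-coded list.

```python
import re
from difflib import SequenceMatcher


def normalize(title: str) -> str:
    """Lowercase and collapse punctuation/whitespace so trivial differences are ignored."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def find_unverified(cited_titles, trusted_titles, threshold=0.9):
    """Return cited titles with no sufficiently similar entry in the trusted list.

    The threshold is an arbitrary illustrative value, not a validated cutoff.
    """
    trusted = [normalize(t) for t in trusted_titles]
    unverified = []
    for title in cited_titles:
        key = normalize(title)
        # Best similarity against any trusted title; 0.0 if the trusted list is empty.
        best = max(
            (SequenceMatcher(None, key, t).ratio() for t in trusted),
            default=0.0,
        )
        if best < threshold:
            unverified.append(title)
    return unverified


# Placeholder titles invented for this example; they are not real papers.
trusted = [
    "A Survey of Widget Calibration Methods",
    "Benchmarking Gadget Throughput",
]
cited = [
    "A survey of widget calibration methods.",
    "Quantum Widgets Solve Everything",
]
print(find_unverified(cited, trusted))
```

A title match of this kind only shows that a cited work exists in the trusted list. It does not show that the work supports the sentence citing it, which is why human fact-checking remains necessary.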

Given the complete absence of supporting evidence (no model name, architecture details, training data, or evaluation metrics), the claim should be treated as an interesting but unsubstantiated rumor until formal documentation appears.