A new open-source tool called AirTrain is challenging the economics of cloud GPU training by enabling distributed machine learning across Apple Silicon MacBooks using ordinary Wi-Fi connections. Built on Apple's MLX framework and leveraging the DiLoCo algorithm, AirTrain reduces network bandwidth requirements by 500x compared to traditional distributed training methods.

Key Takeaways

- Developer @AlexanderCodes_ open-sourced AirTrain, a tool for distributed ML training across Apple Silicon MacBooks over Wi-Fi. It synchronizes accumulated parameter updates every 500 steps instead of exchanging gradients on every step.

- This makes personal device training feasible for models up to 70B parameters without cloud GPU costs.

What AirTrain Does

AirTrain enables multiple Apple Silicon Macs (M1-M4) to collaboratively train machine learning models by pooling their computational resources over a local network. The core innovation isn't in raw compute power but in radically reducing network communication requirements.

Traditional distributed data-parallel (DDP) training synchronizes gradients after every training step. For a 124-million-parameter model, that means exchanging approximately 500MB of data per step (124M parameters at 4 bytes each in FP32), requiring a sustained bandwidth of 50 GB/s (a figure that implies roughly 100 steps per second), far beyond what Wi-Fi can provide.
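The arithmetic behind those figures can be checked in a few lines. This sketch assumes FP32 gradients (4 bytes per parameter) and a rate of 100 steps per second. Neither assumption is stated in the AirTrain material; the step rate is simply the value that turns roughly 500MB per step into roughly 50 GB/s.

```python
# Back-of-the-envelope check of the DDP bandwidth figures.
# Assumptions (not from the AirTrain material): FP32 gradients, 100 steps/second.


def gradient_payload_bytes(num_params: int, bytes_per_param: int = 4) -> int:
    """Size of one full gradient exchange, in bytes."""
    return num_params * bytes_per_param


def sustained_bandwidth_gb_s(payload_bytes: int, steps_per_second: float) -> float:
    """Bandwidth needed to ship one payload per step, in GB/s (1 GB = 1e9 bytes)."""
    return payload_bytes * steps_per_second / 1e9


payload = gradient_payload_bytes(124_000_000)
ddp_bandwidth = sustained_bandwidth_gb_s(payload, steps_per_second=100)

print(f"payload per step: {payload / 1e6:.0f} MB")
print(f"DDP bandwidth:    {ddp_bandwidth:.1f} GB/s")
```

Changing `steps_per_second` shows how strongly the bandwidth requirement depends on how fast the hardware steps, which is why the 50 GB/s figure is best read as an order-of-magnitude estimate.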

AirTrain implements the DiLoCo (Distributed Low-Communication) algorithm, in which each device trains independently for 500 steps and then synchronizes only the accumulated parameter differences. This cuts communication frequency by 500x and brings the average bandwidth requirement down to approximately 0.1 GB/s (50 GB/s ÷ 500, or about 800 Mbit/s), which is within reach of modern Wi-Fi networks.
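The train-locally-then-sync pattern can be illustrated with a toy example in pure Python. This is a minimal sketch, not AirTrain's code: the quadratic loss, the function names, and the hyperparameters are all hypothetical. It also swaps in plain SGD for the inner optimizer and SGD with momentum for the outer one, whereas the DiLoCo paper uses AdamW and Nesterov momentum.

```python
# Toy DiLoCo-style loop: each worker trains locally for `inner_steps`, then
# only the parameter deltas are averaged and applied to the shared model.
# All names and values are illustrative assumptions, not AirTrain's API.
import random


def local_train(params, target, inner_steps, lr, rng):
    """Run `inner_steps` of noisy SGD on 0.5 * ||params - target||^2."""
    params = list(params)
    for _ in range(inner_steps):
        for i in range(len(params)):
            noise = rng.gauss(0.0, 0.1)  # stands in for minibatch noise
            grad = (params[i] - target[i]) + noise
            params[i] -= lr * grad
    return params


def diloco_round(global_params, momentum_buf, targets, inner_steps,
                 inner_lr, outer_lr, outer_momentum, rng):
    """One outer step: local training on every worker, then a single sync."""
    # Every worker starts from the same global parameters.
    local_results = [
        local_train(global_params, t, inner_steps, inner_lr, rng)
        for t in targets
    ]
    # "Outer gradient": average of (global - local) across workers.
    n = len(local_results)
    outer_grad = [
        sum(global_params[i] - lp[i] for lp in local_results) / n
        for i in range(len(global_params))
    ]
    # Outer optimizer: SGD with momentum applied to the outer gradient.
    new_buf = [outer_momentum * m + g for m, g in zip(momentum_buf, outer_grad)]
    new_params = [p - outer_lr * b for p, b in zip(global_params, new_buf)]
    return new_params, new_buf


rng = random.Random(0)
# Three workers whose data shards pull toward slightly different optima.
targets = [[1.0, -2.0], [1.2, -1.8], [0.8, -2.2]]
params, buf = [0.0, 0.0], [0.0, 0.0]
for _ in range(20):
    params, buf = diloco_round(params, buf, targets, inner_steps=500,
                               inner_lr=0.05, outer_lr=0.7,
                               outer_momentum=0.5, rng=rng)

# Ends near the mean of the worker targets, [1.0, -2.0].
print(params)
```

The only data that crosses the network in this scheme is one parameter-sized delta per worker per round, which is where the 500x reduction in communication frequency comes from.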

Technical Implementation

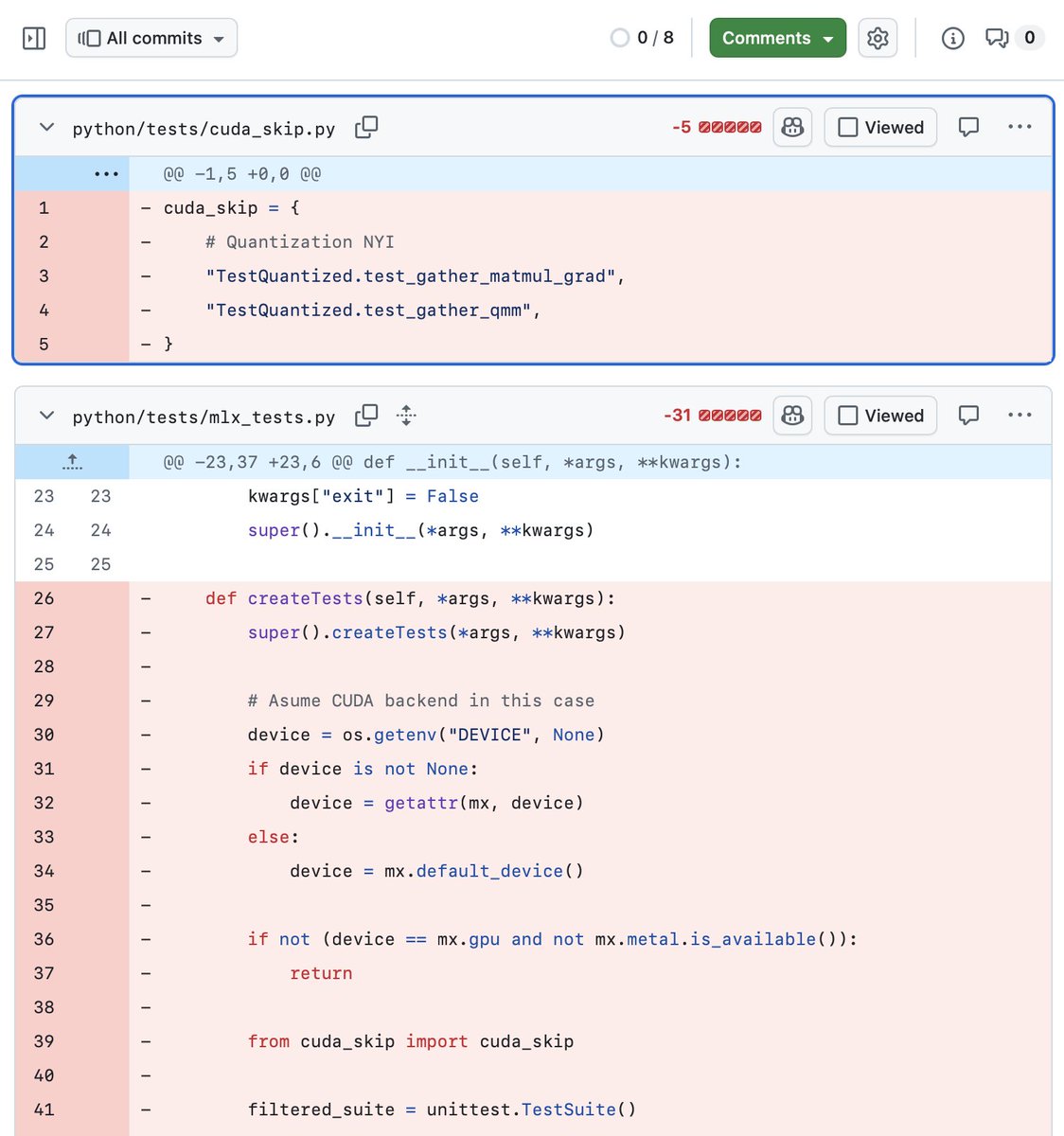

Built entirely in Python and MIT-licensed, AirTrain leverages several key technologies:

- Apple MLX Framework: Native execution on Apple Silicon's unified memory architecture eliminates host-to-device copy overhead

- DiLoCo Algorithm: Enables infrequent synchronization (every 500 steps) while maintaining training stability

- Zero-Configuration Discovery: Uses mDNS/Bonjour (the same discovery protocol AirDrop relies on) for automatic peer discovery

- Fault Tolerance: Nodes can join or leave mid-training without crashing the entire run

- Checkpoint Relay: Allows training sessions to be passed between devices like a relay race

Each synchronization event takes approximately 2 seconds over Wi-Fi, making the overhead negligible compared to the 500-step training intervals.
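To put the 2-second sync in proportion, it helps to compare it with the time spent on the 500 local steps between syncs. The per-step time used below (0.5 seconds) is a hypothetical value chosen for illustration; the source does not report one.

```python
def sync_overhead_fraction(sync_seconds: float, steps_between_syncs: int,
                           seconds_per_step: float) -> float:
    """Fraction of wall-clock time spent synchronizing rather than training."""
    compute_seconds = steps_between_syncs * seconds_per_step
    return sync_seconds / (compute_seconds + sync_seconds)


# Assumed step time of 0.5 s; sync time and interval come from the article.
overhead = sync_overhead_fraction(sync_seconds=2.0, steps_between_syncs=500,
                                  seconds_per_step=0.5)
print(f"sync overhead: {overhead:.2%}")
```

Under that assumption the sync accounts for less than 1 percent of wall-clock time. Faster steps raise the fraction, so the overhead matters more for small models that step quickly.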

Hardware Advantages of Apple Silicon

The economics become compelling when considering Apple Silicon's characteristics:

| Metric | Apple Silicon | Comparison GPU |
|---|---|---|
| Unified Memory | Up to 128GB | 24GB VRAM |
| Power Efficiency | 245-460 GFLOPS/watt | ~100 GFLOPS/watt |
| Peak TFLOPS (M4) | 2.9 TFLOPS | ~82 TFLOPS (FP32) |

Key Numbers:

- 7 M4 MacBooks ≈ 1 NVIDIA A100 in raw TFLOPS

- M4 Max with 128GB can train 70B parameter models without offloading

- Wi-Fi requirement: 0.1 GB/s vs. DDP's 50 GB/s
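The "7 MacBooks" figure follows from dividing peak throughput numbers. The sketch below uses the 2.9 TFLOPS quoted for the M4 and assumes the comparison is against the A100's published 19.5 TFLOPS FP32 peak (without tensor cores); the article does not say which A100 figure it used.

```python
import math

M4_PEAK_TFLOPS = 2.9     # figure quoted in this article
A100_FP32_TFLOPS = 19.5  # assumption: NVIDIA's published non-tensor-core FP32 peak

# Smallest whole number of M4 machines whose combined peak matches one A100.
macbooks_needed = math.ceil(A100_FP32_TFLOPS / M4_PEAK_TFLOPS)
pooled_tflops = macbooks_needed * M4_PEAK_TFLOPS

print(f"MacBooks needed: {macbooks_needed}")
print(f"Pooled peak:     {pooled_tflops:.1f} TFLOPS")
```

This compares peak numbers only. It says nothing about sustained throughput, memory bandwidth, or the cost of synchronizing seven machines.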

Practical Implications

For small to medium-scale training tasks, AirTrain creates viable alternatives to cloud GPU rentals:

- 124M parameter GPT-2: Instead of renting cloud GPUs at ~$3/hour, pool 3 MacBooks in a coffee shop

- Community Platform: https://train.airkit.ai provides session browsing, checkpoint sharing, and contributor leaderboards

- Energy Costs: Apple Silicon's power efficiency makes electricity costs negligible compared to cloud expenses

Limitations and Current State

AirTrain is early-stage software with one primary contributor (@AlexanderCodes_). While the concept demonstrates feasibility for certain workloads, several limitations exist:

- Runs only on Apple Silicon, since it is built on the MLX framework

- Best suited for models that fit within unified memory constraints

- Performance compared to optimized cloud GPU clusters for large-scale training remains unverified

- Limited to research and small-scale production use cases

What This Means in Practice

For AI practitioners with access to multiple Apple Silicon devices, AirTrain enables collaborative training without cloud infrastructure. The checkpoint relay feature particularly suits educational settings, hackathons, or distributed research teams where continuous training across different locations is valuable.

gentic.news Analysis

AirTrain represents the latest development in the growing trend of edge and personal device AI training, a movement that gained significant momentum with Apple's release of the MLX framework in late 2023. This follows Apple's strategic push to position its silicon as viable for ML workloads, competing directly with NVIDIA's CUDA ecosystem.

The timing is particularly interesting given the current cloud GPU shortage and rising compute costs that have plagued AI developers since 2024. While AirTrain won't replace cloud training for billion-parameter models, it creates a legitimate alternative for the long tail of smaller models and experiments where cloud economics don't make sense.

Technically, AirTrain's implementation of DiLoCo is noteworthy. The original DiLoCo paper from Google DeepMind demonstrated the algorithm's effectiveness in low-bandwidth environments, but AirTrain represents one of the first practical, open-source implementations targeting consumer hardware. This aligns with our previous coverage of "MLX-Community" projects that are building an alternative AI stack outside the NVIDIA ecosystem.

The most significant implication may be for AI education and accessibility. By lowering the barrier to distributed training, AirTrain could enable more hands-on learning experiences without requiring cloud credits or institutional resources. The checkpoint relay feature specifically addresses a practical problem in collaborative learning environments.

However, practitioners should approach this with realistic expectations. The "7 MacBooks = 1 A100" comparison only holds for raw TFLOPS, not memory bandwidth, specialized tensor cores, or the mature software ecosystem surrounding NVIDIA GPUs. That said, for certain workloads, particularly those bottlenecked by memory capacity rather than pure compute, Apple Silicon's unified memory architecture provides genuine advantages that AirTrain effectively leverages.

Frequently Asked Questions

Can AirTrain train models larger than 70B parameters?

No. The ceiling comes from Apple Silicon's maximum unified memory of 128GB (available on M4 Max configurations). Models larger than approximately 70B parameters would require model-parallelism techniques that AirTrain's DiLoCo approach does not currently implement.
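A rough weights-only estimate shows how sensitive the 70B figure is to numeric precision. This sketch ignores gradients, optimizer state, and activations, all of which add to the real training footprint. The precisions listed are illustrative assumptions, since the source does not say which precision the 70B claim assumes.

```python
def weights_footprint_gb(num_params: float, bits_per_param: int) -> float:
    """Memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


UNIFIED_MEMORY_GB = 128  # maximum unified memory cited in the article

for bits in (32, 16, 8, 4):
    gb = weights_footprint_gb(70e9, bits)
    verdict = "fits" if gb < UNIFIED_MEMORY_GB else "does not fit"
    print(f"{bits:>2}-bit weights: {gb:6.1f} GB ({verdict} in {UNIFIED_MEMORY_GB} GB)")
```

By this estimate, a 70B model's weights only fit in 128GB at 8-bit precision or lower, so the practical ceiling depends heavily on the training setup.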

How does AirTrain compare to traditional cloud GPU training for production workloads?

For small to medium research models and experiments, AirTrain offers compelling cost advantages (near-zero marginal cost if you already own the hardware). For large-scale production training requiring thousands of GPU hours, cloud or dedicated GPU clusters still provide better performance, scalability, and tooling maturity.

What types of models work best with AirTrain?

AirTrain is particularly well-suited for transformer-based language models that fit within Apple Silicon's memory constraints. The DiLoCo algorithm works best with stable optimization problems where infrequent synchronization doesn't degrade convergence—typically smaller models rather than cutting-edge frontier models.

Is AirTrain compatible with non-Apple hardware?

Currently, AirTrain is built specifically for Apple's MLX framework, which only runs on Apple Silicon. The underlying DiLoCo algorithm could theoretically be implemented for other hardware, but the current implementation is Apple-specific.

AirTrain is MIT-licensed and available on GitHub. The community platform at https://train.airkit.ai provides additional resources, live session browsing, and checkpoint sharing.