Key Takeaways

- An agtech company reports that Anthropic suspended its entire organization, about 110 users, with no warning.

- For Claude Code users, this means team access, and admin visibility into API billing and usage, can vanish overnight.

- Here's how to build redundancy now.

What Changed

A Reddit post from an agricultural technology company reports that on Monday, all ~110 users in their Anthropic organization woke up to suspended Claude accounts. The separately billed API account was affected too, yet it kept billing despite the ban. No warning or explanation was provided, and the Google Form appeal process is described as "a black hole."

The post specifically notes that the API account was paid for separately from the Team plan but shared the same admins. After the Team suspension, those admins could no longer view usage or billing, even though API keys continued working and a renewal bill was sent.

It may not be an isolated incident. A Twitter thread from another user (linked in the post) describes a similar experience; an Anthropic employee engaged there but has since gone silent.

What This Means For Claude Code Users

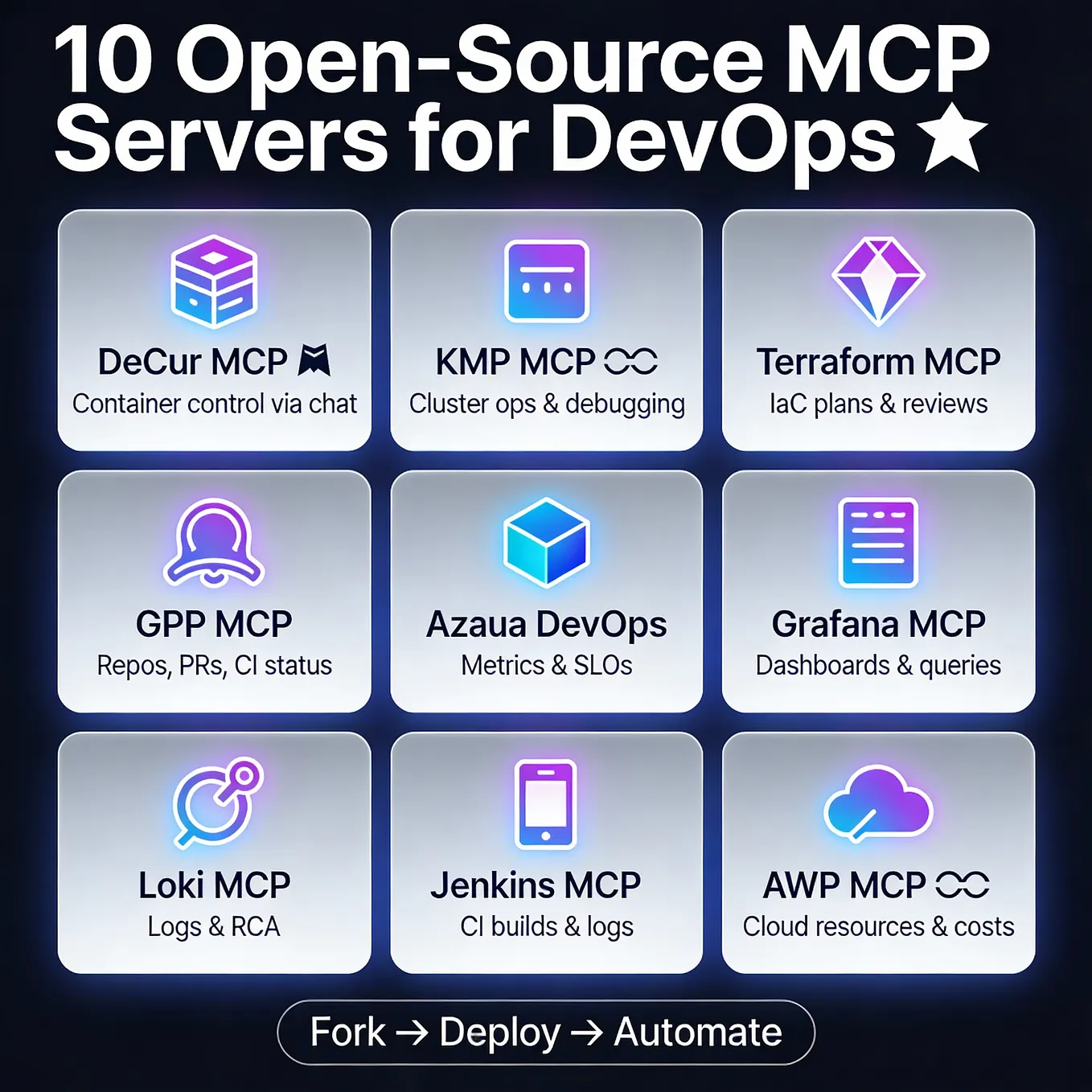

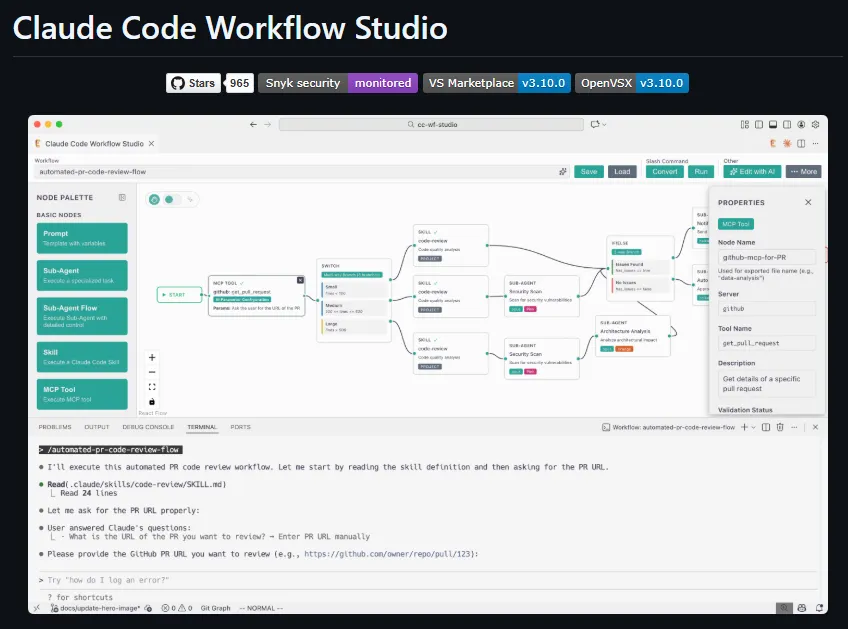

If you're using Claude Code in a business context, this is a wake-up call. The workflow you've built around it, including your CLAUDE.md configs, custom prompts, MCP servers, and project contexts, could stop working overnight if your organization gets flagged.

The risk is amplified because:

- Guilt by association: because the ban applies to the whole organization, one user's activity (a disgruntled employee, an intern testing edge cases) or even legitimate agricultural queries could plausibly take down everyone.

- No clear recourse: The appeal process is opaque and slow. The poster reports radio silence after a day and a half.

- Separate API accounts are not insulated: Even if your API account is billed separately, a ban on shared admin email addresses locks you out of its billing and usage dashboards.

How To Protect Your Claude Code Workflows

1. Decouple your Claude Code API keys from your Team/Enterprise account

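If your Claude Code usage relies on a Team plan, consider moving API key management to a separate Anthropic account that isn't tied to your organization's Team billing and doesn't share its admin email addresses. In the reported case, the shared admins are what cut the team off from the API account's billing and usage. With that separation, an org-wide ban is less likely to take your API access down with it.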

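Example note for your CLAUDE.md (keep the key itself out of CLAUDE.md, which is often committed to git; put it in an uncommitted .env or your shell environment instead):

```
# In CLAUDE.md:
# Use a dedicated API key from a separate account for Claude Code
# Do NOT rely on the Team account's API keys
```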
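A minimal shell sketch of the .env side, assuming the dedicated key sits in an uncommitted ./.env file as ANTHROPIC_API_KEY=... (the environment variable Claude Code reads for API-key authentication). The helpers load_dedicated_key and env_is_ignored are hypothetical, not part of any tool.

```bash
#!/usr/bin/env bash
# Minimal sketch (hypothetical helpers): load a dedicated Claude Code key from
# an uncommitted env file. Assumes the file contains ANTHROPIC_API_KEY=...
# issued by a separate Anthropic account, not the Team org.

# Export every variable in the env file; fail if the key is missing.
load_dedicated_key() {
  local env_file="${1:-./.env}"
  if [ ! -f "$env_file" ]; then
    echo "missing $env_file" >&2
    return 1
  fi
  unset ANTHROPIC_API_KEY   # make sure the key really comes from this file
  set -a                    # auto-export every variable assigned below
  . "$env_file"
  set +a
  if [ -z "${ANTHROPIC_API_KEY:-}" ]; then
    echo "ANTHROPIC_API_KEY not set in $env_file" >&2
    return 1
  fi
}

# Succeed only if the ignore file has a line that is exactly ".env".
env_is_ignored() {
  local ignore_file="${1:-./.gitignore}"
  [ -f "$ignore_file" ] && grep -qxF ".env" "$ignore_file"
}
```

Usage from a project root: `load_dedicated_key && env_is_ignored && claude`. The second check is there because a key that gets committed alongside CLAUDE.md defeats the point of isolating it.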

2. Export your CLAUDE.md and project configs weekly

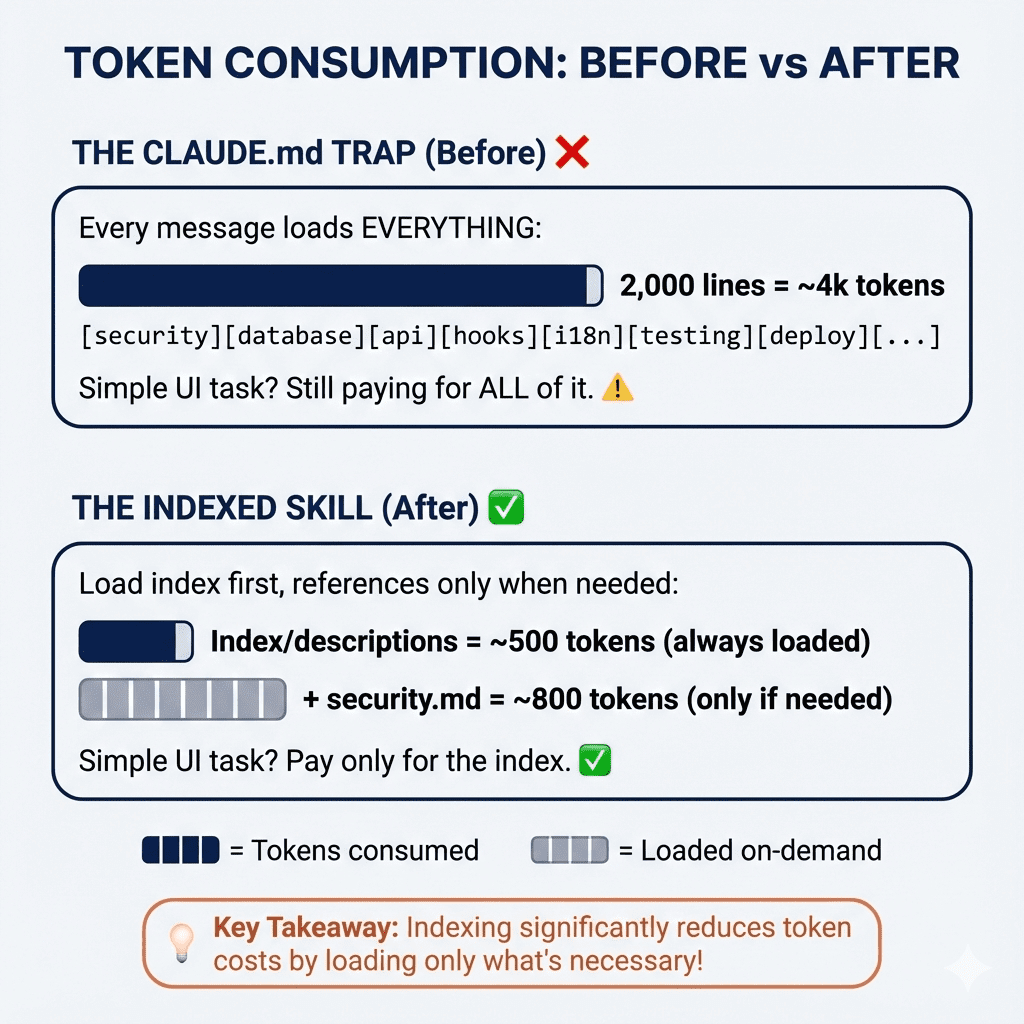

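Your CLAUDE.md files, MCP server configs, and project-specific prompts are the brain of your Claude Code setup. They live on disk, so a ban won't delete them, but they're scattered across repos and your home directory. A versioned copy in one place makes it much easier to rebuild the setup on a new account or with a different tool.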

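Automate this with a cron job that runs from the root of your projects directory:

```bash
# Back up every CLAUDE.md under the current directory to a local git repo,
# keeping relative paths so same-named files don't overwrite each other
mkdir -p ~/claude-backups && git -C ~/claude-backups init -q
find . -name "CLAUDE.md" -not -path "*/claude-backups/*" -exec sh -c \
  'mkdir -p ~/claude-backups/"$(dirname "$1")" && cp "$1" ~/claude-backups/"$1"' _ {} \;
git -C ~/claude-backups add .
# Commit only when something changed
git -C ~/claude-backups diff --cached --quiet || git -C ~/claude-backups commit -m "Weekly backup $(date)"
```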
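Cron jobs tend to fail silently, so it can help to check that the backup is actually being refreshed. A minimal sketch, assuming the backup repo is ~/claude-backups, the job runs weekly (for example with a crontab schedule of `0 9 * * 1`, Mondays at 09:00), and 8 days is an acceptable maximum age. The helpers is_stale and check_backup are hypothetical.

```bash
#!/usr/bin/env bash
# Minimal sketch (hypothetical helpers): warn when the CLAUDE.md backup repo
# has not received a commit recently, e.g. because cron stopped running it.

# Succeed if last_ts is more than max_days older than now_ts.
# All timestamps are Unix epoch seconds.
is_stale() {
  local last_ts="$1" now_ts="$2" max_days="$3"
  [ $(( now_ts - last_ts )) -gt $(( max_days * 86400 )) ]
}

# Look up the newest commit in the backup repo; fail with a warning if it
# is missing or older than max_days.
check_backup() {
  local repo="${1:-$HOME/claude-backups}" max_days="${2:-8}" last_ts
  # %ct = committer date as a Unix timestamp
  if ! last_ts="$(git -C "$repo" log -1 --format=%ct 2>/dev/null)"; then
    echo "no backup commits found in $repo" >&2
    return 1
  fi
  if is_stale "$last_ts" "$(date +%s)" "$max_days"; then
    echo "CLAUDE.md backup in $repo is older than $max_days days" >&2
    return 1
  fi
}
```

Running `check_backup` daily (or in your shell startup) surfaces a broken backup job long before you need the copy.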

3. Maintain a local fallback model

If Claude Code becomes unavailable, have a fallback plan. Keep a local model runtime (such as Ollama with a capable coding model) configured in your dev environment so you can keep working while you resolve the ban.

In your CLAUDE.md or team runbook:

```
# If Claude Code is unavailable, use:
# ollama run codellama:13b
# To raise the context window, run this inside the session:
# /set parameter num_ctx 8192
# This won't match Claude Code's quality, but it keeps you unblocked.
```
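The fallback can be wired into a small launcher. A minimal sketch, assuming you authenticate Claude Code with an exported ANTHROPIC_API_KEY, that both the `claude` and `ollama` CLIs are installed, and that any non-200 reply from the Anthropic Models endpoint (`GET /v1/models`) means "use the local model". The helper names are hypothetical.

```bash
#!/usr/bin/env bash
# Minimal sketch (hypothetical helpers): launch Claude Code when the Anthropic
# API accepts the key, otherwise fall back to a local Ollama model.

# Print the HTTP status of an authenticated request to the Anthropic API
# ("000" if the request could not be made at all).
api_status() {
  curl -s -o /dev/null -w '%{http_code}' --max-time 10 \
    -H "x-api-key: ${ANTHROPIC_API_KEY:-}" \
    -H "anthropic-version: 2023-06-01" \
    https://api.anthropic.com/v1/models || true
}

# Map a status code to the backend to launch.
choose_backend() {
  if [ "$1" = "200" ]; then
    echo "claude"
  else
    echo "ollama"
  fi
}

# Start whichever backend is usable right now.
start_assistant() {
  case "$(choose_backend "$(api_status)")" in
    claude) claude ;;
    ollama)
      echo "Anthropic API unavailable; falling back to local model" >&2
      ollama run codellama:13b ;;
  esac
}
```

Keeping the status-to-backend decision in its own function makes the policy easy to change (for example, retrying on timeouts before falling back). Note that in the reported incident the API keys kept working after the suspension, so a successful check only tells you the key is usable right now, not that the account is in good standing.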

4. Use separate accounts for sensitive domains

If your work involves agriculture, defense, healthcare, or any domain that might trigger automated flagging, consider using a separate Anthropic account with no connection to your main organization for those specific queries.
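One way to keep that separation from depending on memory is to pick the key by project location. A minimal sketch with a hypothetical layout: projects under ~/work/sensitive use a key from the separate account, stored in ~/.config/claude-keys/sensitive.env, and everything else uses ~/.config/claude-keys/main.env. Both paths and both helper names are assumptions for illustration.

```bash
#!/usr/bin/env bash
# Minimal sketch (hypothetical layout and helpers): choose which account's
# key Claude Code uses based on where the project lives.

# Print the env file holding the key for a given project directory.
key_file_for_dir() {
  local dir="$1" keys="${2:-$HOME/.config/claude-keys}"
  case "$dir" in
    "$HOME"/work/sensitive|"$HOME"/work/sensitive/*) echo "$keys/sensitive.env" ;;
    *) echo "$keys/main.env" ;;
  esac
}

# Run Claude Code with the matching key inside a subshell, so the key does
# not leak into the rest of your terminal session.
claude_for_project() {
  local key_file
  key_file="$(key_file_for_dir "$PWD")"
  (
    set -a
    . "$key_file"
    set +a
    claude "$@"
  )
}
```

The subshell matters: it keeps a sensitive-domain key from lingering in the environment when you `cd` back into a main-org project.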

The Bigger Picture

This incident highlights a fundamental risk in relying on cloud AI services for daily development. Claude Code is powerful, but it's a black box from a compliance standpoint. You don't know what triggers a ban, and you have no SLA on account recovery.

For teams, the lesson is clear: treat your Claude Code setup as a critical business tool that could disappear at any moment. Build redundancy, export configs, and decouple API access points.

gentic.news Analysis

This incident follows a pattern of aggressive enforcement we've seen across AI providers. In February, OpenAI similarly banned accounts without warning during a policy sweep. Anthropic's approach here is especially concerning because it targets the organization level — a design choice that prioritizes risk containment over customer trust.

For Claude Code users specifically, this is the first major public report of an org-wide ban affecting developer workflows. Previous bans (covered in our earlier article on Anthropic's enforcement policies) were individual. The shift to org-level bans suggests Anthropic is tightening its compliance posture, possibly in response to regulatory pressure or internal security incidents.

If this becomes standard practice, expect to see more teams building multi-provider fallbacks for their AI-assisted development pipelines. The era of depending on a single provider for coding assistance may be ending.