Anthropic's engineering team has published a technical blog post describing an internal "multi-agent harness" built to push its Claude models further on complex, long-running software engineering tasks, with a particular focus on frontend design and implementation. The system marks a shift from relying on a single model instance to orchestrating multiple specialized Claude agents that break down and solve larger problems.

The blog post, titled "How we use a multi-agent harness to push Claude further in frontend design and long-running autonomous software engineering," provides a rare look at the internal tooling and scaffolding Anthropic is building to scale AI capabilities beyond chat interfaces and simple code completion.

What Anthropic Built: A Multi-Agent Orchestration System

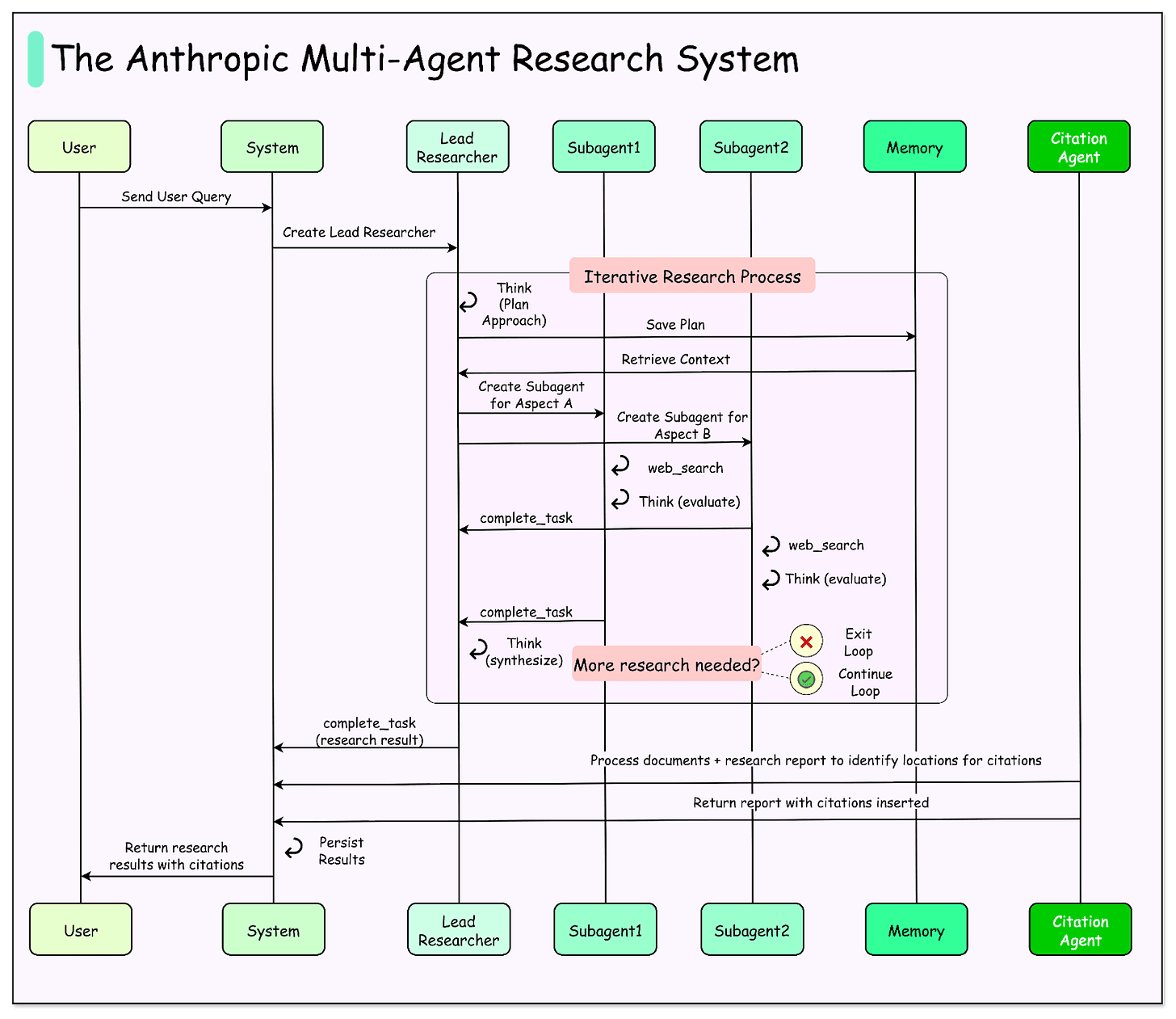

The core innovation described is not a new foundation model but a system architecture in which multiple Claude instances (agents) collaborate on a single overarching task. The harness acts as a controller: it decomposes a high-level objective, such as "build a responsive dashboard with these features," into subtasks, assigns them to different agents, manages their execution, and synthesizes their outputs.
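The controller loop described above (decompose, assign, execute, synthesize) can be sketched in a few lines. This is only an illustrative sketch, not Anthropic's implementation: the names `Subtask`, `decompose`, and `run_harness` are hypothetical, the planner is hard-coded, and each agent is a plain function standing in for a call to a Claude instance.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical types for illustration; none of these names come from the post.
@dataclass
class Subtask:
    role: str          # which specialized agent should handle the subtask
    instruction: str   # what that agent is asked to do

# Stand-in for one model instance: takes a prompt, returns text.
Agent = Callable[[str], str]

def decompose(objective: str) -> list[Subtask]:
    """Stub planner. A real harness would presumably ask a model to plan."""
    return [
        Subtask("design", f"Outline the layout for: {objective}"),
        Subtask("implement", f"Write the components for: {objective}"),
    ]

def run_harness(objective: str, agents: dict[str, Agent]) -> str:
    results = []
    for task in decompose(objective):             # decompose
        agent = agents[task.role]                 # assign
        results.append(agent(task.instruction))   # execute
    return "\n".join(results)                     # synthesize (naive concatenation)

# Stub agents that only echo their prompt with a role tag.
agents: dict[str, Agent] = {
    "design": lambda prompt: f"[design] {prompt}",
    "implement": lambda prompt: f"[implement] {prompt}",
}

report = run_harness("responsive dashboard", agents)
print(report)
```

Keeping the agents behind a simple callable interface means the stubs could later be swapped for real model calls without changing the controller.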

This approach is explicitly aimed at overcoming the limitations of a single model's context window and reasoning chain. On long-running tasks that require planning, iterative refinement, and specialized sub-skills (e.g., UI design, component implementation, testing), a single agent can lose coherence or struggle with the breadth of requirements.

Key Technical Details & Applications

According to the blog, the harness is applied to two primary domains:

Frontend Design & Development: The system can coordinate agents to cover the full scope of a frontend task. This might involve one agent interpreting design requirements, another generating HTML/CSS/JavaScript code for components, a third writing tests, and a fourth reviewing the overall implementation for consistency and quality. The harness manages the handoffs between these agents.
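One way to picture the four-agent frontend workflow is as a staged pipeline in which each agent reads a shared artifact and adds its own output. The sketch below is an assumption-laden illustration: the stage functions (`interpret`, `implement`, `write_tests`, `review`) and the `artifact` dictionary are hypothetical, and each stage returns canned text where a real harness would call a model.

```python
from typing import Callable

# Each stage stands in for one agent. It reads the shared artifact dict
# and returns only the fields it contributes.
Stage = Callable[[dict], dict]

def interpret(artifact: dict) -> dict:
    # Agent 1: turn the raw request into a design spec.
    return {"spec": f"spec for {artifact['request']}"}

def implement(artifact: dict) -> dict:
    # Agent 2: produce component markup from the spec.
    return {"code": f"<div><!-- {artifact['spec']} --></div>"}

def write_tests(artifact: dict) -> dict:
    # Agent 3: describe tests derived from the spec.
    return {"tests": [f"renders {artifact['spec']}"]}

def review(artifact: dict) -> dict:
    # Agent 4: toy consistency check that the code references its spec.
    return {"approved": artifact["spec"] in artifact["code"]}

PIPELINE: list[tuple[str, Stage]] = [
    ("interpret", interpret),
    ("implement", implement),
    ("test", write_tests),
    ("review", review),
]

def run_pipeline(request: str) -> dict:
    artifact: dict = {"request": request}
    for _name, stage in PIPELINE:
        artifact.update(stage(artifact))  # hand the enriched artifact to the next agent
    return artifact

result = run_pipeline("pricing card")
print(result["approved"])
```

Passing one growing artifact between stages keeps every handoff explicit, which is one plausible way for a harness to manage the workflow between agents.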

Long-Running Autonomous Software Engineering: The post suggests the framework is used for more general software projects that require sustained, autonomous work. This implies tasks that go beyond writing a single function or file and may involve navigating codebases, making sequential decisions, and integrating feedback over time—operations that are difficult to fit into a single prompt or conversation.

The post likely covers harness components such as a task planner, an agent scheduler, a state/memory manager that maintains context across agents and over time, and a validation or synthesis layer that combines outputs. Running multiple agents also allows independent subtasks to proceed in parallel and lets each agent receive specialized prompting or fine-tuning.
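The parallelism and shared-state ideas mentioned here can be illustrated with Python's standard `asyncio` library. This is a sketch under stated assumptions: `run_agent`, `run_parallel`, and `synthesize` are hypothetical names, the shared `state` dictionary is a stand-in for whatever memory manager a real harness would use, and `asyncio.sleep(0)` takes the place of a network call to a model.

```python
import asyncio

async def run_agent(name: str, prompt: str, state: dict[str, str]) -> None:
    # Placeholder for an asynchronous model call.
    await asyncio.sleep(0)
    # Record the agent's output in the shared state under its own key.
    state[name] = f"{name} done: {prompt}"

async def run_parallel(subtasks: dict[str, str]) -> dict[str, str]:
    state: dict[str, str] = {}
    # Independent subtasks are scheduled concurrently and awaited together.
    await asyncio.gather(
        *(run_agent(name, prompt, state) for name, prompt in subtasks.items())
    )
    return state

def synthesize(state: dict[str, str]) -> str:
    # Combine outputs in a deterministic order, regardless of completion order.
    return "\n".join(state[key] for key in sorted(state))

state = asyncio.run(run_parallel({"navbar": "build nav", "footer": "build footer"}))
summary = synthesize(state)
print(summary)
```

Sorting keys in the synthesis step is a deliberate choice: concurrent agents may finish in any order, so the combined output should not depend on timing.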

Why This Approach Matters for AI-Assisted Development

Moving from a single conversational agent to a managed multi-agent system is a significant step toward more capable and reliable AI software engineers. It recognizes that the unit of work in complex development is not a single response but a process. This aligns with industry trends seen in AI software agents (such as Cognition AI's Devin and SWE-agent) and in frameworks like AutoGen and CrewAI, but here it is applied as an internal production system by a leading model provider.

For practitioners, this signals where the industry is heading: the next frontier of AI coding tools isn't just better code generation in an IDE sidebar, but orchestrated systems that can own and execute larger project slices. Anthropic's public discussion of this work provides a benchmark for how major labs are thinking about scaffolding their models for real-world engineering tasks.

gentic.news Analysis

This technical blog post is a strategic disclosure from Anthropic, coming at a time of intense competition in the AI coding assistant space. It follows Anthropic's recent release of Claude 3.5 Sonnet, which itself showed strong performance on coding benchmarks. By revealing internal multi-agent tooling, Anthropic is signaling that its competitive edge lies not only in raw model capabilities but in the sophisticated systems built on top of them. This is a direct counterpoint to the narrative around other autonomous coding agents; Anthropic is arguing that scalable, reliable automation requires robust orchestration, not just a powerful model prompted to "act like an agent."

The focus on frontend design is particularly notable. While many AI coding tools excel at backend logic and algorithms, frontend work—with its blend of visual design, responsive layout, interactivity, and aesthetic judgment—has remained a tougher challenge. Anthropic's targeted effort here suggests they see it as a high-value, tractable problem for their multi-agent approach. This aligns with trends we've covered, such as Vercel's v0 and other AI UI generation tools, but pushes further into full implementation and integration.

Furthermore, this development connects to the broader industry trend of AI agents transitioning from research concepts to applied engineering. As we noted in our analysis of Google's Astra and OpenAI's o1 reasoning models, the focus is shifting from next-token prediction to planning and execution over extended horizons. Anthropic's harness is a concrete implementation of this philosophy for software engineering. It suggests that future battles in the AI developer tool space will be fought at the systems level, where model intelligence is integrated into automated workflows and pipelines.

Frequently Asked Questions

What is a multi-agent harness in AI?

A multi-agent harness is a system that coordinates multiple AI model instances (agents) to work together on a complex task. Instead of a single AI trying to do everything, the harness breaks the problem down, assigns subtasks to specialized agents, manages their communication and execution order, and combines their results. It's like a project manager for a team of AI workers.

How is Anthropic's multi-agent system different from other AI coding tools?

Most current AI coding tools (like GitHub Copilot) operate as a single-model assistant within a developer's workflow, suggesting code line by line or function by function. The system Anthropic describes is designed for autonomous, long-running execution of larger tasks (like building a full UI component). It uses multiple Claude instances orchestrated by a central system to plan, execute, and integrate code, aiming for a higher degree of independent task completion.

Does this mean Claude can now build software completely on its own?

Not exactly. The blog post describes an internal engineering system used to "push Claude further." It represents a capability direction and a scaling architecture. The harness likely operates within defined parameters and on specific types of tasks (like frontend prototypes). It is a step toward greater autonomy, but not a general-purpose, fully autonomous software engineer. Human oversight, specification, and integration into broader development pipelines are still implied.

Can developers access or use this multi-agent harness?

No. This was described in an Anthropic Engineering Blog post as an internal tool and research direction. It is not a product or publicly available API. However, it signals the types of capabilities Anthropic is developing and may influence future features in their developer platform or Claude's offerings.