The Discovery That Revealed Claude's Inner Workings

Researchers examining Anthropic's Claude Agent SDK found the entire Claude Code CLI application bundled within the package. The file, located at node_modules/@anthropic-ai/claude-agent-sdk/cli.js, is the complete executable that powers Claude Code when users interact with it through their terminal.

Whether or not the inclusion was intended, it offers an unusually direct view of how Anthropic's coding assistant operates under the hood. The 13,800-line minified JavaScript file, version 2.1.71 and built on March 6, 2026, contains everything from onboarding screens to the core agent loop that makes Claude Code function.
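Readers who want to check figures such as the line count for themselves could start from a small helper like the one below. It is a minimal sketch, not anything taken from the bundle: the name summarizeBundle and the BundleSummary shape are hypothetical, and it assumes the file's banner is written as leading `//` comment lines, which may not match the real file.

```typescript
// Hypothetical helper: summarize a bundled JS file from its text alone.
interface BundleSummary {
  lineCount: number;
  banner: string[]; // leading "//" comment lines, e.g. a copyright notice
}

function summarizeBundle(source: string): BundleSummary {
  const lines = source.split(/\r?\n/);
  // A trailing newline yields one empty final element; do not count it.
  const endsWithNewline = lines[lines.length - 1] === "";
  const lineCount = endsWithNewline ? lines.length - 1 : lines.length;

  const banner: string[] = [];
  // Skip a shebang such as "#!/usr/bin/env node" if the file starts with one.
  let i = lines[0].startsWith("#!") ? 1 : 0;
  // Collect consecutive "//" comment lines at the top of the file
  // (assumption: the banner uses line comments, not a block comment).
  for (; i < lines.length; i++) {
    const line = lines[i].trim();
    if (!line.startsWith("//")) break;
    banner.push(line.replace(/^\/\/\s?/, ""));
  }
  return { lineCount, banner };
}
```

In Node.js, the text could be loaded with fs.readFileSync(path, "utf8") and passed straight to this function.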

What the Bundle Actually Contains

According to analysis of the discovered file, the bundled CLI includes several critical components:

Core Architecture Components:

- The complete onboarding and setup screens that users see when first launching Claude Code

- Policy and managed settings loading mechanisms

- Debugging and inspector detection systems

- UI rendering using Ink/React for terminal interfaces

- Prefetching logic for performance optimization

- Comprehensive error handling and exit code management

Agent System Implementation:

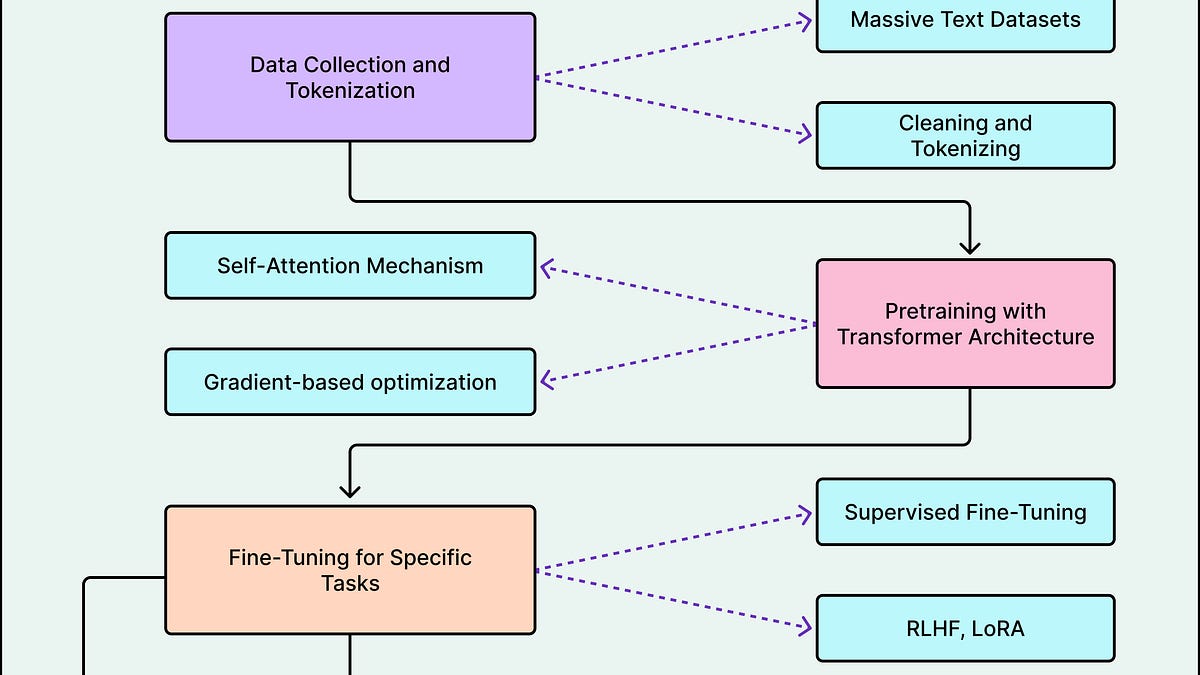

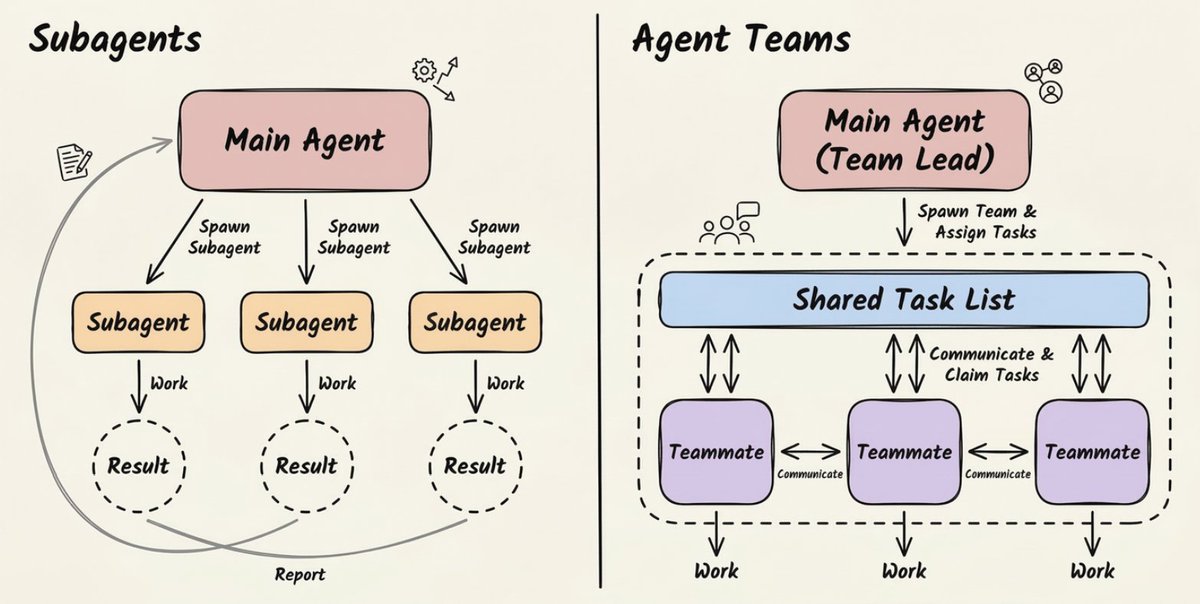

The most revealing aspect is the agent loop implementation, which shows how Claude Code coordinates its AI capabilities:

In-Process Agent Runner (i6z function; the name is generated by the minifier and will likely differ between builds): This component initializes agent sessions with parameters including agent ID, parent session ID, agent name, team name, color coding, and plan mode settings. The function logs "Starting agent loop for ${agentId}" as it begins execution.
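To make the parameter list concrete, here is a rough sketch of what such a session initializer could look like. Only the log message is taken from the bundle as described above; the interface, the field names, and the startAgentSession function are hypothetical stand-ins, since the minified file exposes only short generated identifiers.

```typescript
// All names here are hypothetical; only the log message comes from the article.
interface AgentSessionConfig {
  agentId: string;
  parentSessionId: string;
  agentName: string;
  teamName: string;
  color: string;             // display color used to tell agents apart
  planModeRequired: boolean; // assumed shape of the "plan mode settings"
}

type Logger = (message: string) => void;

interface AgentSession {
  config: AgentSessionConfig;
  running: boolean;
}

function startAgentSession(config: AgentSessionConfig, log: Logger): AgentSession {
  // The article reports this exact message being logged at startup.
  log(`Starting agent loop for ${config.agentId}`);
  return { config, running: true };
}
```

Passing the logger in as a parameter is a choice made for this sketch so the behavior is easy to observe; how the real function writes its logs is not described in the source.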

Poll Loop System (l6z function): A continuous monitoring system that checks for multiple event types:

- Pending user messages requiring attention

- Mailbox messages from other agents in multi-agent scenarios

- Shutdown requests for graceful termination

- New tasks from the task management system
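The four event types above can be sketched as a simple poll loop. This is an illustration under stated assumptions, not the bundled implementation: the types, the AgentInbox structure, and both function names are hypothetical, and the order in which sources are checked simply mirrors the list above rather than any order confirmed in the code.

```typescript
// Hypothetical event and inbox shapes for illustration only.
type PollEvent =
  | { kind: "userMessage"; text: string }
  | { kind: "mailbox"; from: string; text: string }
  | { kind: "shutdown" }
  | { kind: "task"; taskId: string };

interface AgentInbox {
  pendingUserMessages: string[];
  mailbox: { from: string; text: string }[];
  shutdownRequested: boolean;
  tasks: string[];
}

// Check each source once and return the first event found, or null if idle.
function pollOnce(inbox: AgentInbox): PollEvent | null {
  const text = inbox.pendingUserMessages.shift();
  if (text !== undefined) return { kind: "userMessage", text };

  const mail = inbox.mailbox.shift();
  if (mail !== undefined) return { kind: "mailbox", from: mail.from, text: mail.text };

  if (inbox.shutdownRequested) return { kind: "shutdown" };

  const taskId = inbox.tasks.shift();
  if (taskId !== undefined) return { kind: "task", taskId };

  return null;
}

// Poll until the inbox is idle, shutdown is seen, or maxTicks is reached.
function runPollLoop(inbox: AgentInbox, maxTicks: number): PollEvent[] {
  const handled: PollEvent[] = [];
  for (let tick = 0; tick < maxTicks; tick++) {
    const event = pollOnce(inbox);
    if (event === null) break; // a long-running loop would wait and poll again
    handled.push(event);
    if (event.kind === "shutdown") break; // stop without taking further tasks
  }
  return handled;
}
```

The sketch returns when the inbox is empty so that it terminates; a continuous monitor like the one described would instead wait and keep polling.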

Model Orchestration Logic: Extensive systems for managing the main loop model, including:

- Model selection based on permission modes (with plan mode specifically using Opus)

- System prompt assembly and management

- Context window handling and optimization
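The one concrete rule reported here, that plan mode uses Opus, can be written as a small selection function. This is a sketch only: the mode names other than plan, the "opus" alias string, and the function name are assumptions made for illustration, and the real logic is described as considerably more extensive.

```typescript
// Hypothetical mode names; only the existence of a plan mode is from the article.
type PermissionMode = "default" | "plan" | "acceptEdits";

// Placeholder alias standing in for whichever Opus model the CLI resolves to.
const PLAN_MODE_MODEL = "opus";

// Pick the model for the main loop: plan mode overrides the configured model.
function selectMainLoopModel(mode: PermissionMode, configuredModel: string): string {
  return mode === "plan" ? PLAN_MODE_MODEL : configuredModel;
}
```

For example, selectMainLoopModel("plan", "sonnet") yields the Opus alias, while any other mode returns the configured model unchanged.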

The Copyright Notice That Speaks Volumes

Perhaps the most telling detail in the bundled file is the copyright notice from Anthropic PBC, which includes what developers are calling a "cheeky" note: "Want to see the unminified source? We're hiring!" A recruitment pitch addressed to anyone reading the minified file suggests its authors expected the bundle to be opened and inspected, and it highlights the tension between proprietary protection and talent acquisition in the competitive AI landscape.

Security and Deployment Implications

Whether accidental or not, shipping the full CLI inside the SDK raises several questions about software deployment practices at AI companies:

Security Considerations:

- Shipping production executables in SDK packages creates potential attack surfaces

- Minification makes the code harder to read but does not hide it; the bundle still reveals architectural patterns that could be exploited

- Dependencies and internal APIs are exposed through the bundled implementation

Development Practice Questions:

- Why would Anthropic bundle their entire CLI with an SDK rather than providing clean API interfaces?

- What does this say about their internal build and deployment processes?

- How common is this practice among other AI companies shipping developer tools?

The Community Reaction and Analysis

On Hacker News and other developer forums, the discovery has sparked significant discussion. The central question posed by the original poster—"Do we think this was a mistake?"—has generated diverse perspectives:

Intentional Theory: Some argue this might be intentional, providing developers with a complete reference implementation, albeit minified. The SDK documentation suggests it "wraps this CLI as its underlying engine," which could imply this bundling is by design.

Accidental Exposure Theory: Others believe this represents a genuine oversight in Anthropic's packaging process, where internal build artifacts accidentally made their way into public distributions.

Hybrid Approach Theory: A third perspective suggests this might represent a transitional architecture, where Anthropic is moving from CLI-based to API-based interfaces but hasn't completed the migration.

What This Reveals About Claude Code's Architecture

Beyond the immediate questions of intent and security, the bundled code provides valuable insights into Claude Code's technical architecture:

Terminal-First Design: The heavy use of Ink/React for terminal UI suggests Claude Code was designed from the ground up as a terminal application, rather than being adapted from web or desktop interfaces.

Agent-Centric Architecture: The separation between the in-process agent runner and the poll loop, together with team names and mailbox messages exchanged between agents, points to a multi-agent system in which each agent runs its own loop and coordinates with others through messages.

Model Orchestration Complexity: The model selection logic shows that Claude Code does not simply call a single fixed model. Which model runs the main loop depends at least on the permission mode, with plan mode using Opus.

Industry Context and Precedents

This incident isn't the first time AI companies have accidentally exposed internal code or architecture details:

- OpenAI's API Leaks: Previous incidents where internal API structures were revealed through client applications

- Model Weight Accidents: Cases where partial model weights or architectures were inadvertently included in distributions

- Configuration Exposures: Instances where production configuration files made their way into public repositories

What makes this case particularly interesting is the completeness of the exposure—virtually the entire application logic is present, just in minified form.

The Future Implications for AI Tooling

This discovery may influence how AI companies approach their developer tooling in several ways:

Increased Scrutiny: Other companies will likely audit their own distributions to ensure similar exposures haven't occurred.

Architecture Reconsideration: The practice of bundling complete applications within SDKs may be reconsidered in favor of cleaner API boundaries.

Transparency Pressures: As more of these incidents occur, there may be increased pressure for AI companies to be more transparent about their architectures, even for proprietary systems.

Security Standardization: The industry may develop better practices for separating public interfaces from internal implementations in AI tooling.

Conclusion: Accident or Calculated Risk?

Whether this represents a genuine mistake or a calculated risk by Anthropic remains unclear. What is certain is that the discovery provides the developer community with unprecedented visibility into how one of the leading AI coding assistants operates. The 13,800 lines of minified JavaScript tell a story of sophisticated agent systems, careful model orchestration, and terminal-first design principles.

For developers working with Claude Code or building similar systems, this window into Anthropic's architecture, accidental or not, offers valuable lessons about AI system design, agent coordination, and the practical challenges of shipping AI-powered developer tools. As the AI tooling space continues to evolve, incidents like this will likely shape both technical architectures and distribution practices across the industry.

The cheeky hiring notice embedded in the code may ultimately prove prophetic—as AI companies compete for talent, transparency about their technical approaches may become as important as the capabilities of their models themselves.