Key Takeaways

- AWS CEO Matt Garman stated that AWS is completely sold out of A100 servers and has never retired one, because demand for older chips still outpaces supply.

- This underscores the prolonged GPU shortage and the value of legacy hardware in cloud AI.

What Happened

AWS CEO Matt Garman revealed that the company has never retired an A100 server and is currently completely sold out of them, citing persistent demand outstripping supply. Speaking at a recent event, Garman noted, "Because there is so much more demand than supply, there typically still is demand for the older chips, actually. And today, we actually are completely sold out of and have never retired an A100 server, as an example."

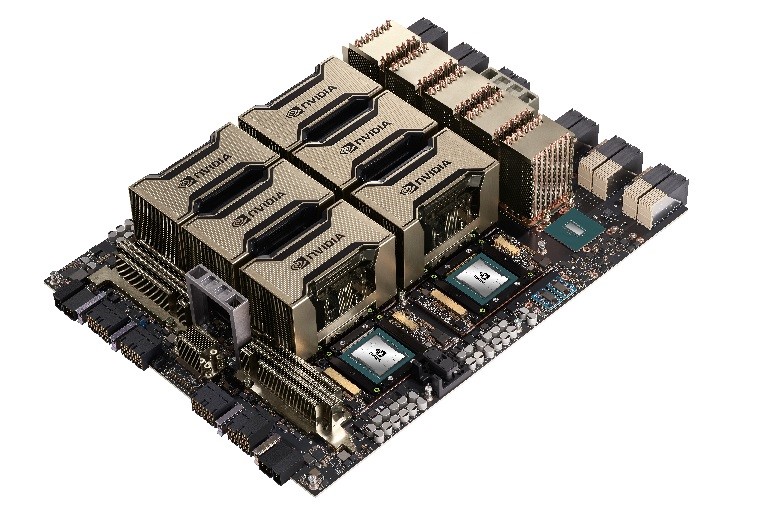

This statement underscores the ongoing GPU shortage that has plagued the AI industry since the surge in large language model training and inference workloads. The A100, based on NVIDIA's Ampere architecture, was released in 2020 and is now considered a previous-generation chip compared to the newer H100 (Hopper) and B100 (Blackwell) families.

Context

The A100 was NVIDIA's flagship data center GPU before the H100 launched in 2022. Despite being superseded, its availability and compatibility with existing infrastructure make it a critical resource for cloud customers who cannot access newer hardware or whose workloads are optimized for the A100's architecture.

AWS's admission that it has never decommissioned an A100 server and remains sold out highlights two key dynamics:

- Demand outstrips supply across GPU generations, not just the latest one.

- Legacy hardware retains value in AI workloads, especially for inference and fine-tuning, where raw performance gains from newer chips may not justify migration costs.

This is consistent with broader industry trends. Microsoft Azure and Google Cloud have also reported sustained demand for older GPU instances, and NVIDIA's data center revenue has continued to grow even as new products launch.

Key Numbers

| Metric | Value |
|---|---|
| A100 release year | 2020 |
| AWS A100 server status | Never retired, completely sold out |
| Demand vs. supply | Demand exceeds supply for older chips |
| Successor chips | H100 (2022), B100 (2024) |

What This Means in Practice

For AI engineers and cloud architects, this means GPU capacity remains a provisioning bottleneck. If you need A100s, you may face long wait times or be forced to use less efficient alternatives. That AWS has never retired an A100 server suggests it expects demand to continue for years, so planning for multi-year reservations or alternative architectures (e.g., custom chips like Trainium) is prudent.

gentic.news Analysis

This statement from AWS's CEO is a rare admission of the severity of the GPU crunch. While it's common knowledge that H100s are hard to get, the fact that even older A100s are completely sold out indicates the shortage is deeper than many realize. It's not just about cutting-edge hardware; every available GPU is being consumed.

This aligns with trends we've been tracking at gentic.news. For instance, in our coverage of the NVIDIA earnings call, we noted that data center revenue was up over 400% year-over-year, driven by demand that spans multiple product generations. The A100's longevity also mirrors the pattern we saw with the V100, which remained in production for years after its successor launched.

The implication for cloud customers is clear: don't expect relief soon. AWS's investment in custom silicon (Trainium and Inferentia) is partly a response to this shortage, but those chips are optimized for specific workloads and may not be drop-in replacements for NVIDIA GPUs. Enterprises should consider multi-cloud strategies, reserved instances, or even on-premises deployments to secure compute capacity.

Frequently Asked Questions

Why is AWS still sold out of A100 servers?

Demand for AI compute has outstripped supply for years, and A100s remain useful for inference and fine-tuning workloads. AWS has never retired them because customers keep renting them, and the company cannot bring enough new GPU capacity online to meet demand.

Is the A100 still relevant for AI workloads in 2026?

Yes. While the H100 and B100 offer higher performance, the A100 is sufficient for many inference tasks and fine-tuning jobs. Its widespread software support and lower cost compared to newer chips make it a practical choice when available.

How does this compare to the H100 shortage?

The H100 has been even harder to procure, with wait times exceeding 6 months at some cloud providers. The A100 shortage is less severe but still significant, as AWS's statement confirms.

What should I do if I need A100 capacity?

Consider reserving instances in advance, using alternative GPU types (e.g., L40S, H100), or exploring AWS's custom Trainium chips for training workloads. Multi-cloud strategies can also help distribute demand across providers.