Cerebras Systems filed for an IPO on April 21, 2026, betting wafer-scale chips can unseat Nvidia's GPU cluster dominance. The filing signals growing investor belief that the era of simply adding more GPUs may be ending.

Key facts

- Cerebras filed for IPO on April 21, 2026

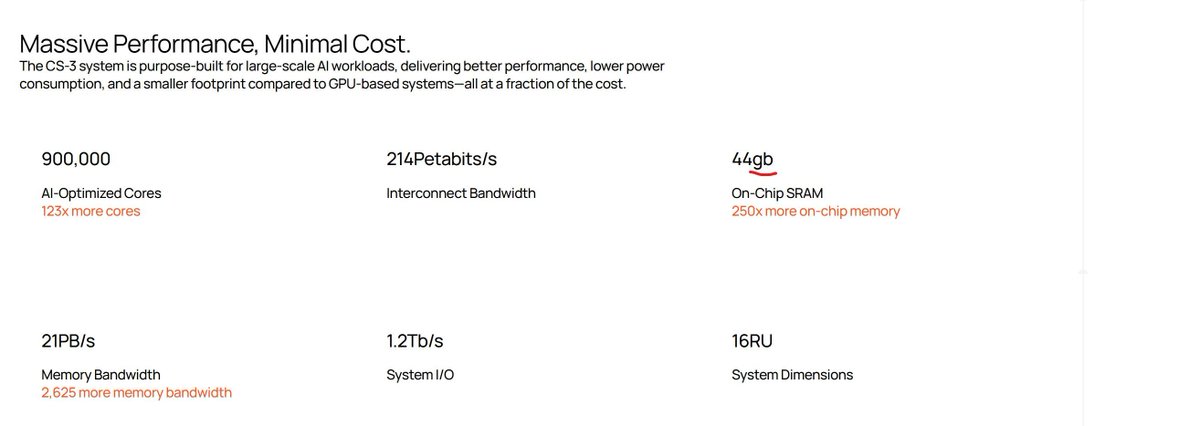

- SemiAnalysis flagged on May 7 that Cerebras's website understates on-chip SRAM by 8x

- Wafer-scale chips avoid multi-GPU interconnect overhead

- IPO tests post-GPU scaling model for AI hardware

For most of the AI boom, the hardware playbook was simple: need more compute? Add more GPUs. Need bigger models? Build bigger clusters. Cerebras Systems went a different way, building wafer-scale engines that avoid the distributed computing overhead of multi-GPU systems. [According to HPCwire]

Cerebras confidentially filed paperwork with the SEC for an initial public offering on April 21, 2026. The company has not disclosed valuation or share count. [Per the SEC filing]

The IPO lands amid a broader reckoning. On May 7, SemiAnalysis noted that Cerebras's website understates the company's on-chip SRAM by 8x, a transparency issue that may surface during due diligence. [As SemiAnalysis reported]

But the strategic thesis is clear: as inference workloads shift from massive batch jobs to latency-sensitive queries, the GPU scaling model faces structural pressure. Our May 3 report on the inference shift noted that AI chip startups now have an opening to challenge Nvidia. [Per gentic.news]

The unique take: The Cerebras IPO is less a bet on a single chip and more a hedge against the GPU-centric scaling model that has ruled AI for five years. If distributed GPU clusters hit diminishing returns on utilization or interconnect cost, wafer-scale architectures become an insurance policy for hyperscalers.

Competitive context: Cerebras competes with Nvidia, which holds more than 80% of the AI accelerator market. [According to industry estimates] The wafer-scale approach trades flexibility for density, a tradeoff that works best for specialized workloads.

What's undisclosed: Cerebras has not revealed its revenue, gross margins, or customer concentration in the confidential filing. Those numbers, when public, will determine whether the thesis holds water.

What to watch

Watch for the public S-1 filing, expected within weeks, which will reveal Cerebras's revenue, gross margins, and customer concentration. The key metric is whether hyperscaler adoption of wafer-scale engines is growing or plateauing. Also watch for Nvidia's response, potentially a wafer-scale or chiplet architecture announced at GTC 2027.