AMD released a PCIe-based GPU on May 8, 2026, designed to plug into existing servers. The card targets immediate AI workload acceleration without requiring new data center buildouts or liquid cooling.

Key facts

- Released May 8, 2026.

- Plugs into existing server PCIe slots.

- No liquid cooling required.

- Targets installed server base, not greenfield data centers.

- Follows the MI350P PCIe card, which claimed a 40% FP8 lead over Nvidia's H200 NVL.

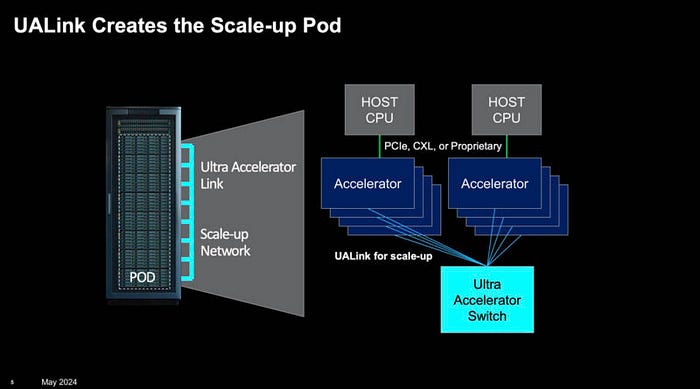

AMD released a new PCIe-based GPU on May 8, 2026, that plugs directly into the PCIe slots of existing servers, providing an immediate boost for running new AI workloads, according to HPCwire. The approach contrasts with Nvidia's strategy of building entire data centers around new GPUs with elaborate scale-up networking, exotic chiplet architectures, and advanced liquid cooling.

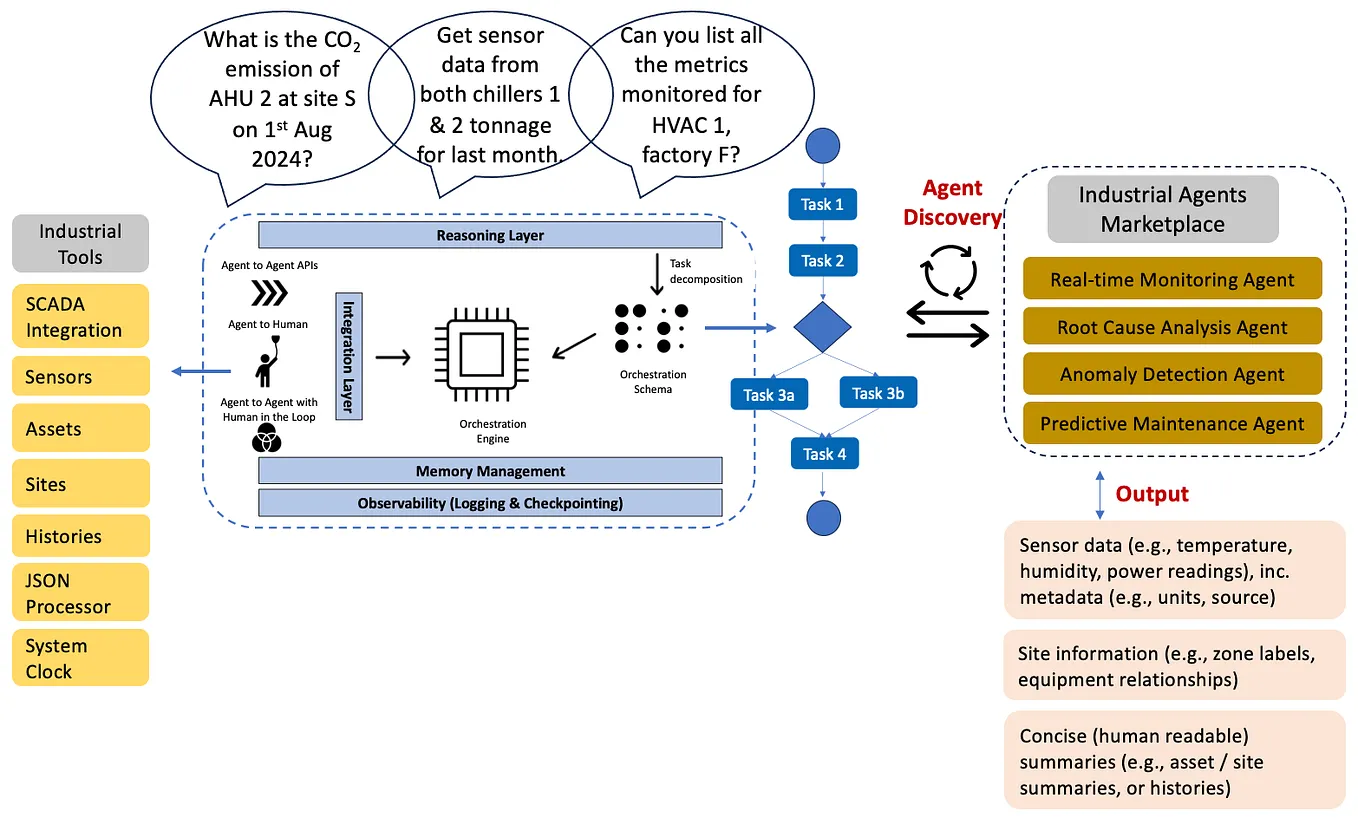

The distinctive angle: AMD is betting that the largest AI infrastructure opportunity is not greenfield data centers but the installed base of servers already in operation. With a plug-in PCIe card, AMD targets the tens of thousands of servers that enterprises already own, enabling incremental AI upgrades without the capital expense of new facilities or cooling systems.

How the card works

The GPU uses a PCIe add-in-card form factor, so it fits into standard server PCIe slots. This removes the need for custom power delivery or cooling infrastructure. The card draws power through the PCIe slot and supplemental power cables, much like high-end consumer GPUs. According to HPCwire, customers no longer have to "build an entire data center around a new GPU"; they can simply plug one in.

Comparison to Nvidia

Nvidia's recent launches, including the 100K-GPU DOE supercomputer and validated data center blueprints, focus on large-scale, purpose-built clusters. AMD's PCIe card takes the opposite approach: drop-in compatibility with existing hardware. The trade-off is performance. PCIe cards operate within fixed power limits (75 watts from the slot, plus whatever the supplemental cables provide), which constrains peak compute compared with Nvidia's liquid-cooled, scale-up designs.
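To put the slot limit in numbers, here is a minimal sketch that adds the 75 W slot budget to the rated output of common supplemental power connectors: 6-pin at 75 W, 8-pin at 150 W, and 12V-2x6 at up to 600 W, per PCI-SIG ratings. The connector configurations shown are hypothetical. The source does not say which connectors AMD's card uses or what its power draw is, and individual servers may budget less power per slot than these ratings allow.

```python
"""Rough upper bound on the power a PCIe add-in card can draw.

Connector ratings follow PCI-SIG limits; real servers may budget less
per slot, so treat the result as a ceiling, not a guarantee.
"""

# Power (in watts) available from a x16 slot itself.
SLOT_POWER_W = 75

# Rated power (in watts) of common supplemental power connectors.
CONNECTOR_POWER_W = {
    "6-pin": 75,
    "8-pin": 150,
    "12V-2x6": 600,
}


def max_board_power(connectors: list[str]) -> int:
    """Return slot power plus the rated power of each supplemental connector."""
    total = SLOT_POWER_W
    for name in connectors:
        if name not in CONNECTOR_POWER_W:
            raise ValueError(f"unknown connector type: {name!r}")
        total += CONNECTOR_POWER_W[name]
    return total


if __name__ == "__main__":
    # Hypothetical configurations; the article does not state the new card's connectors.
    for config in ([], ["8-pin"], ["8-pin", "8-pin"], ["12V-2x6"]):
        label = " + ".join(config) or "slot only"
        print(f"{label:>16}: up to {max_board_power(config)} W")
```

A slot-only card tops out at 75 W, while two 8-pin connectors raise the ceiling to 375 W. Even the 675 W single-connector case sits inside a fixed envelope that a liquid-cooled, purpose-built system does not face.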

Market implications

The launch comes less than a week after AMD's MI350P PCIe card claimed a 40% FP8 lead over Nvidia's H200 NVL. A previous gentic.news article reported that AMD's ROCm software stack saw a 75x performance jump within 14 days of the DeepSeek v4 release. The PCIe GPU extends AMD's strategy of offering competitive AI hardware without requiring infrastructure overhauls.

Who this affects

Enterprise IT teams running existing server fleets with available PCIe slots. The card targets organizations that want to experiment with AI workloads without committing to data center expansion. AMD competes with Nvidia for this segment, but Nvidia's strength remains in large-scale, purpose-built clusters.

What to watch

Watch for benchmark comparisons against Nvidia's H200 and B200 in inference workloads. Also track enterprise adoption rates: if AMD's PCIe card gains traction among cloud providers or in enterprise data centers, it would signal a shift toward incremental AI infrastructure spending over greenfield buildouts.