A GitHub repository compiling 84 battle-tested best practices for using Anthropic's Claude Code has surged to #1 on GitHub trending with 19.7K stars and 1.7K forks. The repository, claude-code-best-practice, provides an open-source implementation of the advanced setup it attributes to Anthropic's own team, including subagents, slash commands, skills, hooks, and MCP servers.

Key Takeaways

- A GitHub repository called 'claude-code-best-practice' has amassed 19.7K stars by compiling 84 production tips from Anthropic's Claude Code creators.

- It provides a full open-source framework for moving from basic usage to advanced agentic workflows.

What's in the Repository

The repository is structured as a comprehensive guide to transforming "vanilla" Claude Code usage into a full agentic development environment. According to the source material, it contains:

- 84 specific tips from Boris Cherny (creator of Claude Code), Thariq, Cat Wu, and other Anthropic team members

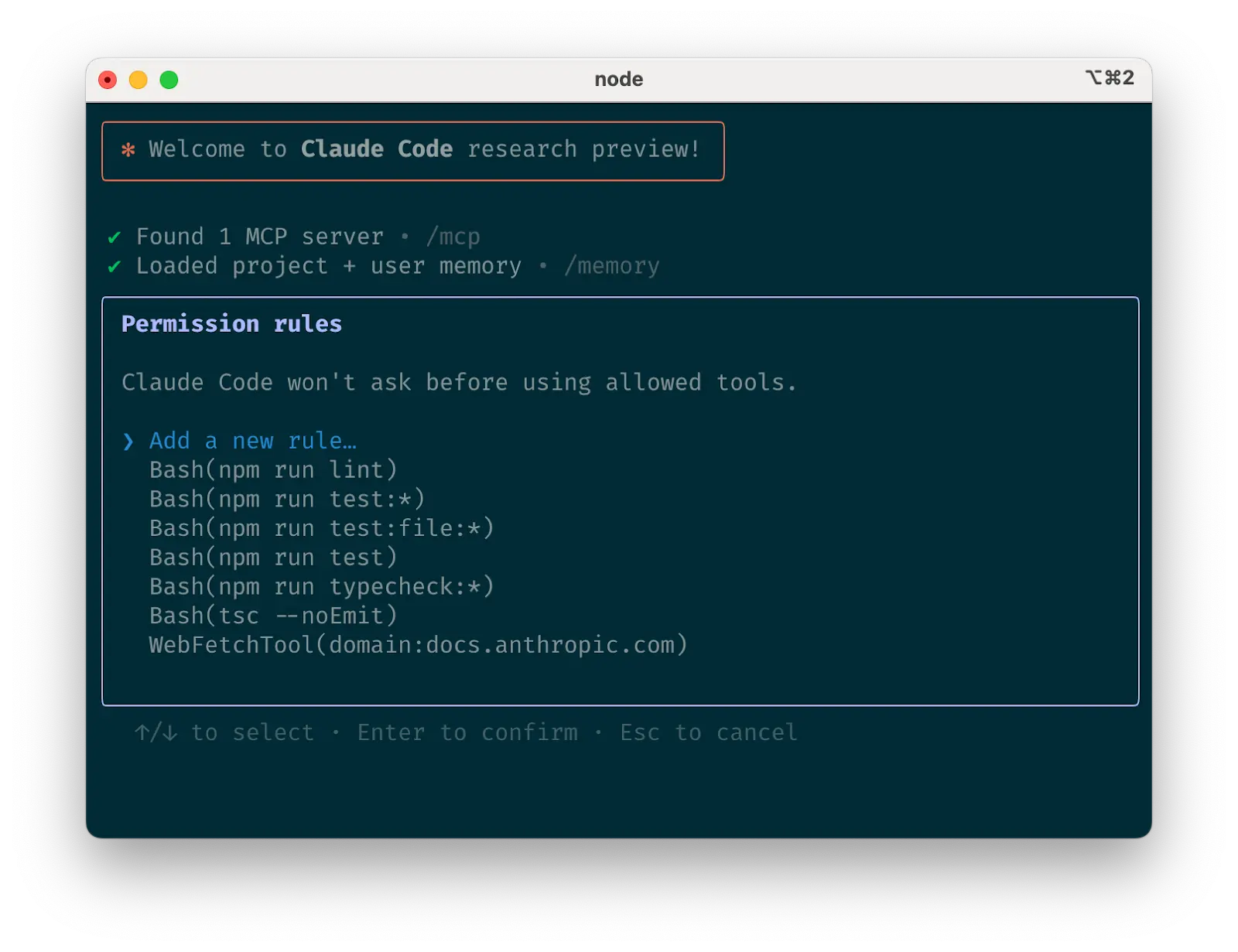

- Implemented patterns for subagents, slash commands, skills, hooks, and MCP servers — not just theoretical descriptions

- A working orchestration workflow demonstrating the Command → Agent → Skill pattern with a /weather-orchestrator demo

- Side-by-side comparisons of 8 top development workflows including Superpowers, Spec Kit, BMAD, Get Shit Done, OpenSpec, and HumanLayer

- Agent Teams implementation using tmux and git worktrees for parallel development

- The "Ralph Wiggum loop" for managing long-running autonomous tasks

- Cross-model workflows combining Claude Code with Codex for QA and plan review

- Technical reports on CLAUDE.md management, memory handling, skills in monorepos, LLM degradation, and Agent SDK vs CLI comparisons

Key Technical Practices Documented

The repository emphasizes several counterintuitive but proven practices from Anthropic's internal usage:

1. Context Management Strategy

"Use subagents with fresh 200K contexts instead of compacting"

This challenges the common practice of compacting (summarizing) a long conversation to free up space in the context window. The tip instead hands each complex subtask to a subagent that starts with its own fresh 200K-token context window.
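The idea can be illustrated with a toy model. This is a minimal sketch, not how Claude Code works internally: `Context`, `run_subagent`, and `orchestrate` are hypothetical names, and the token count is a crude word count standing in for a real tokenizer. The point it shows is that intermediate work stays inside each subagent's window while the parent only accumulates short summaries.

```python
from dataclasses import dataclass, field

CONTEXT_LIMIT = 200_000  # tokens per context window, per the quoted tip


@dataclass
class Context:
    """A toy context window that tracks a rough token count."""
    messages: list[str] = field(default_factory=list)

    def add(self, text: str) -> None:
        self.messages.append(text)

    @property
    def tokens(self) -> int:
        # Crude stand-in for a tokenizer: count whitespace-separated words.
        return sum(len(m.split()) for m in self.messages)


def run_subagent(task: str, working_notes: list[str]) -> str:
    """Hypothetical subagent: works in a brand-new context, returns a summary."""
    ctx = Context()  # fresh window, nothing inherited from the parent
    ctx.add(task)
    for note in working_notes:  # file reads, tool output, etc. stay in here
        ctx.add(note)
    if ctx.tokens > CONTEXT_LIMIT:
        raise ValueError(f"subtask too large for one window: {task}")
    return f"done: {task} ({len(working_notes)} steps)"


def orchestrate(tasks: dict[str, list[str]]) -> Context:
    """Parent context keeps only one summary line per subtask."""
    parent = Context()
    for task, notes in tasks.items():
        parent.add(run_subagent(task, notes))
    return parent
```

Calling `orchestrate` with two subtasks that each generate thousands of words of working notes leaves the parent holding just two summary lines, which is the effect the tip is after.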

2. Search Over RAG

"Agentic search (glob + grep) beats RAG every time"

For codebases, the repository advocates plain file search, meaning filename matching (glob) followed by content matching (grep), over retrieval-augmented generation, claiming better accuracy and speed for development tasks.
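A rough sketch of what "glob + grep" means in practice, using only Python's standard library. The function name `agentic_search` and its return shape are assumptions made for illustration; Claude Code's own search tools are not implemented this way as far as the source says.

```python
import re
from pathlib import Path


def agentic_search(root: str, file_glob: str, pattern: str) -> list[tuple[str, int, str]]:
    """Return (relative path, line number, stripped line) for every regex match."""
    regex = re.compile(pattern)
    root_path = Path(root)
    hits = []
    for path in sorted(root_path.rglob(file_glob)):  # glob: narrow by filename
        if not path.is_file():
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # skip binary or unreadable files
        for lineno, line in enumerate(text.splitlines(), start=1):
            if regex.search(line):  # grep: narrow by content
                rel = path.relative_to(root_path).as_posix()
                hits.append((rel, lineno, line.strip()))
    return hits
```

For example, `agentic_search(".", "*.py", r"def\s+login")` lists every Python file and line that defines a function whose name starts with `login`. Because the search always runs against the files as they are on disk, there is no index to build or keep in sync, which is one plausible reason for the accuracy claim.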

3. Structured Prompt Engineering

"Keep CLAUDE.md under 200 lines, wrap domain rules in <important if="..."> tags"

The guidelines provide specific formatting rules for prompt files, emphasizing brevity and conditional importance tagging for better model comprehension.
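A team could enforce this guideline with a small check. The sketch below is an assumption-laden illustration: `check_claude_md` is a hypothetical helper, the strict "fewer than 200 lines" reading is one interpretation of the tip, and the regular expression assumes the tag is written exactly as `<important if="...">`.

```python
import re

MAX_LINES = 200  # limit taken from the quoted tip
IMPORTANT_TAG = re.compile(r'<important\s+if="([^"]*)">')


def check_claude_md(text: str) -> dict:
    """Report the line count, whether it is under the limit, and tag conditions."""
    lines = text.splitlines()
    return {
        "line_count": len(lines),
        "within_limit": len(lines) < MAX_LINES,
        "conditions": IMPORTANT_TAG.findall(text),
    }
```

Run against a file containing a hypothetical rule such as `<important if="editing billing code">`, the report lists `"editing billing code"` under `conditions`, which makes it easy to review which rules are conditional and which apply everywhere.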

4. Model Specialization

"Start every task in plan mode, use Opus for planning and Sonnet for code"

This practice recommends using an Opus model for initial planning and architectural decisions, then switching to a Sonnet model for the actual coding implementation. The tip names model families only, not specific versions.

5. Quality Enforcement

"Challenge Claude — grill me on these changes and don't make a PR until I pass your test"

The repository includes patterns for having Claude Code act as a rigorous code reviewer that must approve changes before they proceed.

Implementation Architecture

The repository demonstrates several architectural patterns that move beyond simple chat-based coding assistance:

Orchestration Layer

The Command → Agent → Skill pattern creates a clear separation between user commands, agent coordination, and specialized skill execution. The /weather-orchestrator demo shows how this pattern handles complex, multi-step tasks with error handling and retry logic.
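The layering can be mimicked in ordinary code. The following is a Python analogy under stated assumptions, not the repository's /weather-orchestrator implementation: all three function names are hypothetical, and the weather data is canned.

```python
from typing import Callable

Skill = Callable[[str], str]


def fetch_weather_skill(city: str) -> str:
    """Skill: one narrow capability. Returns canned data in this sketch."""
    fake_data = {"Lisbon": "21C, clear"}
    if city not in fake_data:
        raise LookupError(f"no data for {city}")
    return fake_data[city]


def weather_agent(city: str, skill: Skill, max_attempts: int = 3) -> str:
    """Agent: decides how to use the skill and retries on failure."""
    last_error = None
    for _ in range(max_attempts):
        try:
            return skill(city)
        except LookupError as exc:
            last_error = exc  # remember the failure and try again
    return f"failed after {max_attempts} attempts: {last_error}"


def weather_command(raw_args: str) -> str:
    """Command: the user-facing entry point; parses input and delegates."""
    city = raw_args.strip() or "Lisbon"
    return f"{city}: {weather_agent(city, fetch_weather_skill)}"
```

Each layer knows only about the one below it: the command parses user input, the agent owns the retry policy, and the skill does a single job. Swapping in a different skill does not require touching the command.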

Parallel Development Workflows

Using tmux and git worktrees, the setup enables true parallel development where different Claude Code agents can work on separate features simultaneously, with proper isolation and merge coordination.
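As a sketch of the mechanics, the helper below builds (but does not run) one `git worktree add` command and one detached `tmux new-session` command per feature. The function name, the sibling-directory layout, and the `feature/` branch prefix are assumptions for illustration; the repository's own scripts may be organized differently.

```python
import os


def plan_parallel_agents(repo_dir: str, features: list[str]) -> list[list[str]]:
    """Build the commands for one worktree and one tmux session per feature."""
    repo = os.path.abspath(repo_dir)
    commands = []
    for feature in features:
        worktree = f"{repo}-{feature}"  # hypothetical sibling-directory layout
        branch = f"feature/{feature}"   # hypothetical branch naming
        # A separate checkout on its own branch, so agents never share a working tree.
        commands.append(
            ["git", "-C", repo, "worktree", "add", "-b", branch, worktree]
        )
        # A detached tmux session rooted in that worktree, running the claude CLI.
        commands.append(
            ["tmux", "new-session", "-d", "-s", feature, "-c", worktree, "claude"]
        )
    return commands
```

Each returned list can be passed to `subprocess.run(cmd, check=True)` once the plan looks right. Keeping the planning step separate from execution makes it easy to print and review the commands before any branches or sessions are created.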

Skill-Based Architecture

Skills are implemented as modular, reusable components that can be composed for different tasks. The repository includes examples of common development skills and patterns for creating new ones.

Why This Matters for Developers

For developers still using Claude Code as "ChatGPT with a terminal," the repository claims they're "leaving 10x on the table." The documented practices represent production-hardened approaches that have evolved through extensive internal use at Anthropic.

The MIT-licensed, 100% open-source nature means teams can immediately implement these patterns without vendor lock-in or proprietary dependencies. The rapid adoption (19.7K stars in a short period) suggests the community has been waiting for exactly this type of comprehensive, practical guidance.

gentic.news Analysis

This repository's explosive growth reflects a broader trend in the AI-assisted development space: the shift from basic code completion to sophisticated agentic workflows. Following Anthropic's Claude 3.5 Sonnet release in June 2024, which significantly improved coding capabilities, the community has been seeking structured methodologies to leverage these advances. This repository fills that gap with production-proven patterns.

The emphasis on "agentic search over RAG" aligns with findings we covered in our October 2025 analysis of code search techniques, where traditional grep/glob approaches often outperformed embedding-based retrieval for precise code location. The repository's architectural patterns also echo the multi-agent coordination frameworks we've seen emerging from companies like MindsDB and Fixie.ai, though specifically tailored for the Claude Code ecosystem.

Notably, this repository represents a significant knowledge transfer from Anthropic to the open-source community. While companies like GitHub (with Copilot) and Amazon (with CodeWhisperer, since folded into Amazon Q Developer) have extensive documentation, this level of internal best practice sharing from the actual tool creators is unusual. It suggests Anthropic is taking an ecosystem-building approach similar to what OpenAI did with the original ChatGPT plugin system, but with more technical depth.

The timing is particularly interesting given the increased competition in AI-assisted development. With Google's Gemini Code Assist gaining enterprise traction and Microsoft's GitHub Copilot Workspace entering beta, Anthropic appears to be strengthening its developer community by open-sourcing its internal expertise rather than keeping it proprietary.

Frequently Asked Questions

What is Claude Code?

Claude Code is Anthropic's AI-powered coding assistant that integrates directly into development environments. Unlike general-purpose chatbots, it's specifically optimized for understanding codebases, generating implementations, and assisting with software development tasks through a command-line interface and IDE integrations.

Is this repository officially maintained by Anthropic?

While the repository contains practices from Anthropic team members including Boris Cherny (creator of Claude Code), it appears to be a community compilation rather than an officially maintained Anthropic project. The MIT license and open-source nature suggest it's a community effort to document and share proven patterns.

How does this differ from using Claude Code with basic prompts?

The repository moves beyond simple question-answer interactions to implement full agentic workflows where Claude Code can orchestrate complex tasks across multiple files, run tests, create subagents for specialized work, and maintain context across extended development sessions. It's the difference between having a coding assistant and having an automated development team.

What are the system requirements for implementing these patterns?

Most patterns require Claude Code with sufficient context window access (200K tokens recommended) and standard development tools like tmux and git. The repository includes setup instructions and assumes familiarity with command-line development workflows. Some advanced patterns may require specific Claude model access (Opus for planning, Sonnet for coding).