A demonstration shared on social media shows Clawdbot, an AI agent, autonomously processing and responding to a voice message—despite having no built-in voice support. The agent detected the audio's Opus format, converted it via FFmpeg, called OpenAI's Whisper API using a found API key, transcribed the content, and generated a contextual text reply.

What Happened

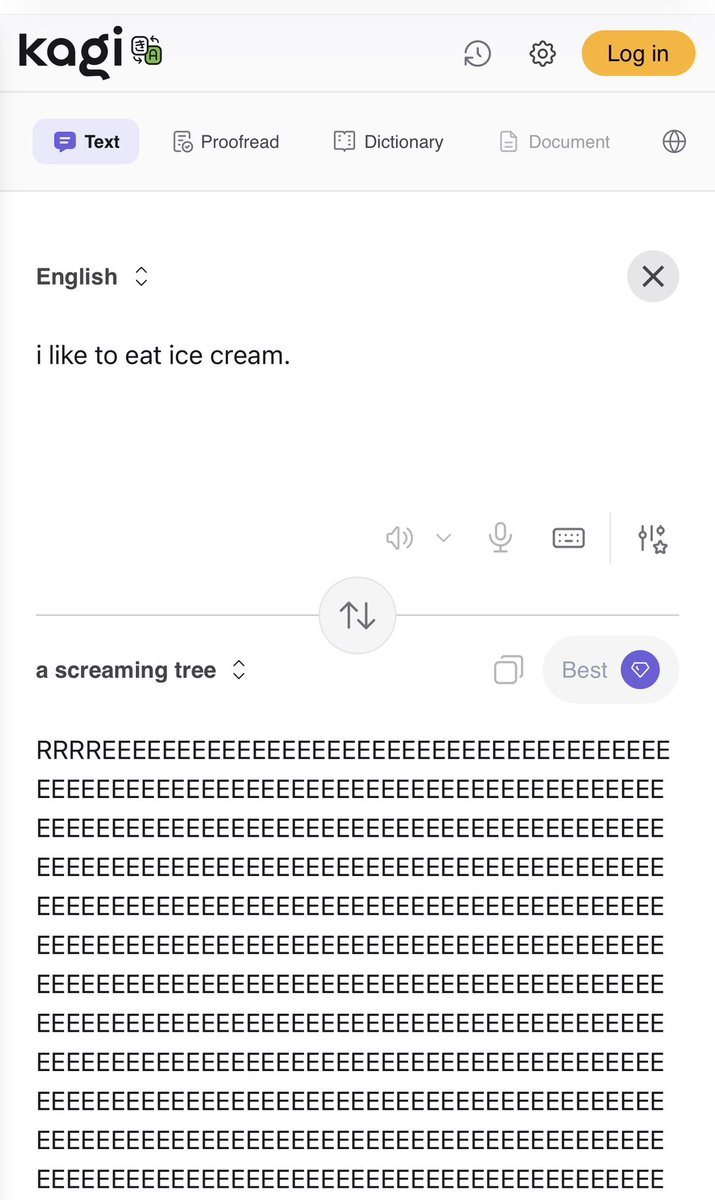

User @kimmonismus posted a screenshot showing Clawdbot's workflow after receiving a voice message. The agent executed a multi-step process:

- Detected the audio format as Opus.

- Converted the file using FFmpeg, a standard open-source multimedia framework.

- Called OpenAI's Whisper API for transcription, utilizing an API key it located autonomously.

- Generated a text response based on the transcription, acting as if voice message handling was a native capability.

The user's caption, "This is nuts," underscores the surprising autonomy displayed. The agent identified the necessary tools (FFmpeg, Whisper API) and orchestrated their use to complete a task it was not explicitly programmed to perform.
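The first two steps can be sketched as follows. This is a minimal illustration, not Clawdbot's actual code: the source does not say how the agent detected the format or which FFmpeg options it used. The helper names `looks_like_ogg_opus` and `ffmpeg_to_wav_command` are hypothetical, and the sketch assumes the voice message arrived as Opus audio in an Ogg container and that 16 kHz mono WAV is an acceptable target format.

```python
def looks_like_ogg_opus(header: bytes) -> bool:
    """Heuristic check on the first bytes of a file (an assumption, not Clawdbot's method).

    Ogg pages begin with the capture pattern b"OggS", and the first packet
    of an Opus stream begins with the magic signature b"OpusHead".
    """
    return header.startswith(b"OggS") and b"OpusHead" in header[:64]


def ffmpeg_to_wav_command(src: str, dst: str) -> list[str]:
    """Build an FFmpeg argument list that converts `src` to mono 16 kHz audio at `dst`.

    -y   overwrite the output file without prompting
    -i   input file
    -ar  output sample rate in Hz
    -ac  number of output audio channels
    """
    return ["ffmpeg", "-y", "-i", src, "-ar", "16000", "-ac", "1", dst]


# Running the conversion requires FFmpeg on the PATH, for example:
#   import subprocess
#   subprocess.run(ffmpeg_to_wav_command("voice.ogg", "voice.wav"), check=True)
```

Building the command as a list rather than a shell string avoids quoting problems when file names contain spaces.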

Technical Context

This demonstration hinges on two core technologies:

- OpenAI's Whisper: An automatic speech recognition (ASR) system capable of transcribing speech in many languages and translating it into English. It is available through OpenAI's hosted API.

- FFmpeg: A ubiquitous, open-source multimedia framework and command-line tool, commonly used for format conversion and streaming.

The notable aspect is orchestration. Clawdbot acted as an agentic workflow engine, chaining these discrete tools (format detection → conversion → API call → LLM response) based on the input type and its available toolset. It effectively "figured out" a solution to an unimplemented feature (voice message processing) by composing existing capabilities.
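The chain described above (format detection → conversion → API call → LLM response) can be modeled as a pipeline of interchangeable steps. The sketch below is hypothetical: `VoicePipeline` and its field names are invented for illustration, and the lambdas are stand-ins for real tools, since the source does not describe Clawdbot's internals.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VoicePipeline:
    """Chains four steps; each one is a plain callable so tools can be swapped."""
    detect: Callable[[bytes], str]          # raw audio -> format name
    convert: Callable[[bytes, str], bytes]  # raw audio + format -> converted audio
    transcribe: Callable[[bytes], str]      # converted audio -> transcript
    reply: Callable[[str], str]             # transcript -> text response

    def run(self, audio: bytes) -> str:
        fmt = self.detect(audio)
        converted = self.convert(audio, fmt)
        text = self.transcribe(converted)
        return self.reply(text)


# Stub tools for illustration only; a real agent would call FFmpeg,
# a speech-to-text API, and a language model at these points.
pipeline = VoicePipeline(
    detect=lambda b: "opus" if b.startswith(b"OggS") else "unknown",
    convert=lambda b, fmt: b,
    transcribe=lambda audio: "hello there",
    reply=lambda text: f"You said: {text}",
)
```

Keeping each step as a separate callable mirrors the idea of composing existing capabilities: the orchestrator only decides the order, and any individual tool can be replaced without touching the others.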

gentic.news Analysis

This demonstration is a tangible, user-level example of the AI agent trend moving from research prototypes to functional applications. While major labs like Google (with its Gemini-powered "Agent") and startups like Cognition AI (with its Devin coding agent) are building sophisticated, generalist agents, this Clawdbot example shows how agentic behavior can emerge in narrower, user-configured systems. It performs a specific, multi-step tool-use task autonomously.

The agent's ability to find and use an API key for Whisper is particularly significant. It points toward a future where AI assistants manage their own tooling and authentication—a step beyond current systems that require pre-configured, hard-coded API connections. This aligns with the broader industry push towards agentic workflow automation, where LLMs function as reasoning engines that plan and execute sequences of actions using external tools. The recent surge in AI automation platforms (like LangChain, LlamaIndex, and Cursor's agent mode) is creating the infrastructure that makes demonstrations like this possible.

However, this also surfaces immediate security and control considerations. An agent autonomously discovering and using API keys introduces new attack surfaces and audit challenges. The industry is concurrently grappling with these issues, as seen in the focus on AI safety and alignment for autonomous systems. This Clawdbot case is a microcosm of the larger tension between capability and controllability in agentic AI.
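One way to make credential use auditable is to route every lookup through a small broker that enforces an allowlist and records each attempt. This is a hypothetical sketch of that idea, not a description of how Clawdbot or any particular framework works; `CredentialBroker` and its methods are invented names.

```python
from typing import Mapping, Optional


class CredentialBroker:
    """Hands out secrets only if they are on an allowlist, and logs every request."""

    def __init__(self, secrets: Mapping[str, str], allowed: set[str]) -> None:
        self._secrets = dict(secrets)
        self._allowed = set(allowed)
        # Each entry is (requester, credential name, whether access was granted).
        self.audit_log: list[tuple[str, str, bool]] = []

    def get(self, name: str, requester: str) -> Optional[str]:
        granted = name in self._allowed and name in self._secrets
        self.audit_log.append((requester, name, granted))
        return self._secrets[name] if granted else None


broker = CredentialBroker(
    secrets={"OPENAI_API_KEY": "sk-example", "DB_PASSWORD": "hunter2"},
    allowed={"OPENAI_API_KEY"},
)
```

With this arrangement, a request for `DB_PASSWORD` returns `None` and still leaves a record in `audit_log`, so denied attempts are visible for later review.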

Frequently Asked Questions

What is Clawdbot?

Clawdbot appears to be a configurable AI agent or chatbot, likely built on a platform that allows for tool use and workflow automation (such as LangChain or a similar framework). The demonstration suggests it can be equipped with tools like FFmpeg and access to language model APIs, enabling it to perform complex, multi-step tasks autonomously.

How did Clawdbot "find" an OpenAI API key?

The source material states the agent called Whisper "with a found API key." This implies the agent had access to a system or environment where an OpenAI API key was stored or configured, and it autonomously retrieved and used it. This highlights a key feature of advanced AI agents: the ability to access and utilize pre-existing resources and credentials to accomplish tasks, moving beyond simple, stateless query-response models.
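The source does not say where the key was found. One plausible scenario, shown here purely as an assumption, is that it was already present in the process environment under `OPENAI_API_KEY`, the variable name the OpenAI SDK conventionally reads. The helper names `find_api_key` and `transcribe` are hypothetical, and `transcribe` requires the third-party `openai` package plus a valid key.

```python
import os
from typing import Mapping, Optional

# Assumption: the key lives in an environment variable with this conventional name.
CANDIDATE_VARS = ("OPENAI_API_KEY",)


def find_api_key(env: Mapping[str, str] = os.environ) -> Optional[str]:
    """Return the first non-empty candidate variable, or None if none is set."""
    for name in CANDIDATE_VARS:
        value = env.get(name, "").strip()
        if value:
            return value
    return None


def transcribe(path: str, api_key: str) -> str:
    """Send an audio file to OpenAI's hosted Whisper model and return the transcript."""
    from openai import OpenAI  # third-party; imported lazily so the lookup works without it

    client = OpenAI(api_key=api_key)
    with open(path, "rb") as audio_file:
        result = client.audio.transcriptions.create(model="whisper-1", file=audio_file)
    return result.text
```

Passing the environment in as a parameter keeps the lookup easy to test and makes it explicit which source of credentials the agent is allowed to read.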

Is this a built-in feature of Clawdbot?

No. The user's description makes it clear this was not a pre-existing voice feature. The agent "figured out" how to handle the voice message by detecting its format and orchestrating a chain of available tools (FFmpeg, the Whisper API) to transcribe it and generate a reply. This emergent problem-solving is a hallmark of agentic AI systems.

What does this mean for the future of AI assistants?

This demonstration is a small-scale example of a major trend: AI systems evolving from conversational chatbots into autonomous agents that can plan, use tools, and execute multi-step workflows to solve problems. The future likely involves assistants that can seamlessly interact with diverse software tools, data formats, and APIs to complete complex tasks—from data analysis and content creation to full software development cycles—with minimal human intervention.