What Happened

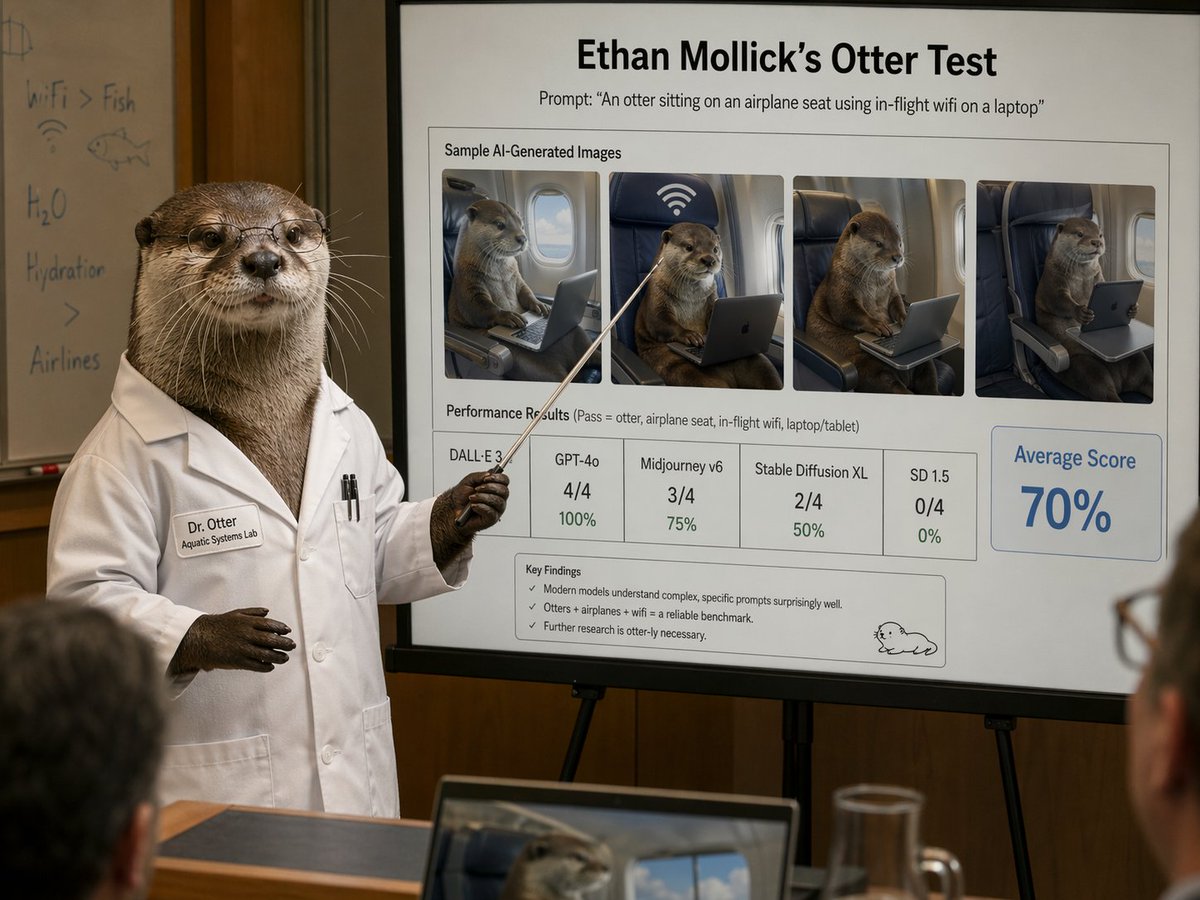

Ethan Mollick, a professor at Wharton and AI researcher, shared a brief demonstration video showing OpenAI's Whisper model performing real-time speech translation. The video shows English speech being translated into Spanish with minimal latency, prompting Mollick's comment: "It really is a universal translator."

The demonstration appears to showcase Whisper's speech recognition and translation capabilities working in near real-time, though the source provides no technical specifications about model versions, latency measurements, or accuracy metrics.

Context

OpenAI's Whisper is an open-source speech recognition system released in September 2022. It was trained on 680,000 hours of multilingual, multitask supervised data collected from the web, and it handles both speech recognition and speech translation across many languages.

Unlike earlier speech translation pipelines, which often chained separate components for recognition and translation, Whisper uses a single end-to-end model that transcribes speech in the source language or, optionally, translates it directly into English text. Whisper itself does not synthesize speech, so spoken output still requires a separate text-to-speech step. The system supports transcription in approximately 99 languages and translation from those languages into English.
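The two tasks described above can be sketched in Python. This is a minimal illustration, not the setup used in the demonstration: the helper name `transcribe_and_translate` and the `SpeechModel` protocol are made up for this example, and the only assumption about Whisper is the `transcribe(audio, task=...)` call and the `text` and `language` keys in its result, as exposed by the open-source `openai-whisper` package.

```python
from typing import Any, Dict, Protocol


class SpeechModel(Protocol):
    """Hypothetical interface: anything with Whisper's transcribe() call shape."""

    def transcribe(self, audio: str, **options: Any) -> Dict[str, Any]: ...


def transcribe_and_translate(model: SpeechModel, audio_path: str) -> Dict[str, str]:
    """Run both Whisper tasks on one audio file and return the two texts."""
    # task="transcribe" keeps the output in the language that was spoken.
    native = model.transcribe(audio_path, task="transcribe")
    # task="translate" asks the same model for English text instead.
    english = model.transcribe(audio_path, task="translate")
    return {
        "language": native.get("language", "unknown"),
        "transcript": native["text"].strip(),
        "english": english["text"].strip(),
    }
```

With `openai-whisper` and ffmpeg installed, the object returned by `whisper.load_model("base")` can be passed as `model`; the model size here is an arbitrary choice. Note that both outputs are text, so any spoken translation would need an additional synthesis step.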

Current Limitations

While the demonstration shows progress, several limitations remain:

- Directional limitation: Whisper's built-in translation task produces English output only; it does not translate between arbitrary language pairs

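- Latency requirements: Real-world universal translation requires near-instantaneous processing, whereas Whisper was designed to process audio in fixed 30-second windows rather than as a continuous stream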

- Context preservation: Nuance, tone, and cultural context remain challenging for current systems

- Resource requirements: High-quality real-time translation still demands significant computational resources
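To make the latency and resource points concrete, here is a rough back-of-the-envelope sketch in Python. It assumes a simple chunked setup in which audio is buffered for a fixed duration and then processed as a whole; the function name, the dataclass, and the example numbers are all illustrative and are not measurements from the demonstration.

```python
from dataclasses import dataclass


@dataclass
class LatencyEstimate:
    real_time_factor: float    # processing time divided by audio duration
    worst_case_delay_s: float  # delay for the first word spoken in a chunk
    keeps_up: bool             # True if processing outpaces incoming speech


def estimate_latency(chunk_seconds: float, processing_seconds: float) -> LatencyEstimate:
    """Estimate delay for a buffer-then-process translation loop."""
    if chunk_seconds <= 0 or processing_seconds < 0:
        raise ValueError("chunk_seconds must be > 0 and processing_seconds >= 0")
    # A factor below 1.0 means each chunk finishes before the next one is full.
    rtf = processing_seconds / chunk_seconds
    # The first word in a chunk waits for the rest of the chunk to be
    # recorded, then for the model to finish processing it.
    worst_case = chunk_seconds + processing_seconds
    return LatencyEstimate(rtf, worst_case, rtf < 1.0)
```

For example, a hypothetical 5-second chunk that takes 1 second to process gives a real-time factor of 0.2 but still a worst-case delay of 6 seconds. Shorter chunks reduce that delay but give the model less context per pass, which is one reason low-latency translation tends to demand faster hardware.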

Practical Implications

The demonstration suggests Whisper's architecture may be adaptable for more general speech-to-speech translation applications. Researchers have been exploring extensions to the original Whisper model for direct speech translation between non-English languages, though these remain research projects rather than production systems.

For developers and engineers, the open-source nature of Whisper means the underlying technology can be adapted and extended, potentially accelerating progress toward more capable translation systems.