A developer's social media post has highlighted a specific, practical use case for AI coding assistants that goes beyond simple code generation: absorbing and recalling a team's institutional knowledge. The post describes a common pain point—a team being "stuck" when a senior developer with years of context is unavailable—and credits CodeRabbit with solving it by "remember[ing] how we build things."

What Happened

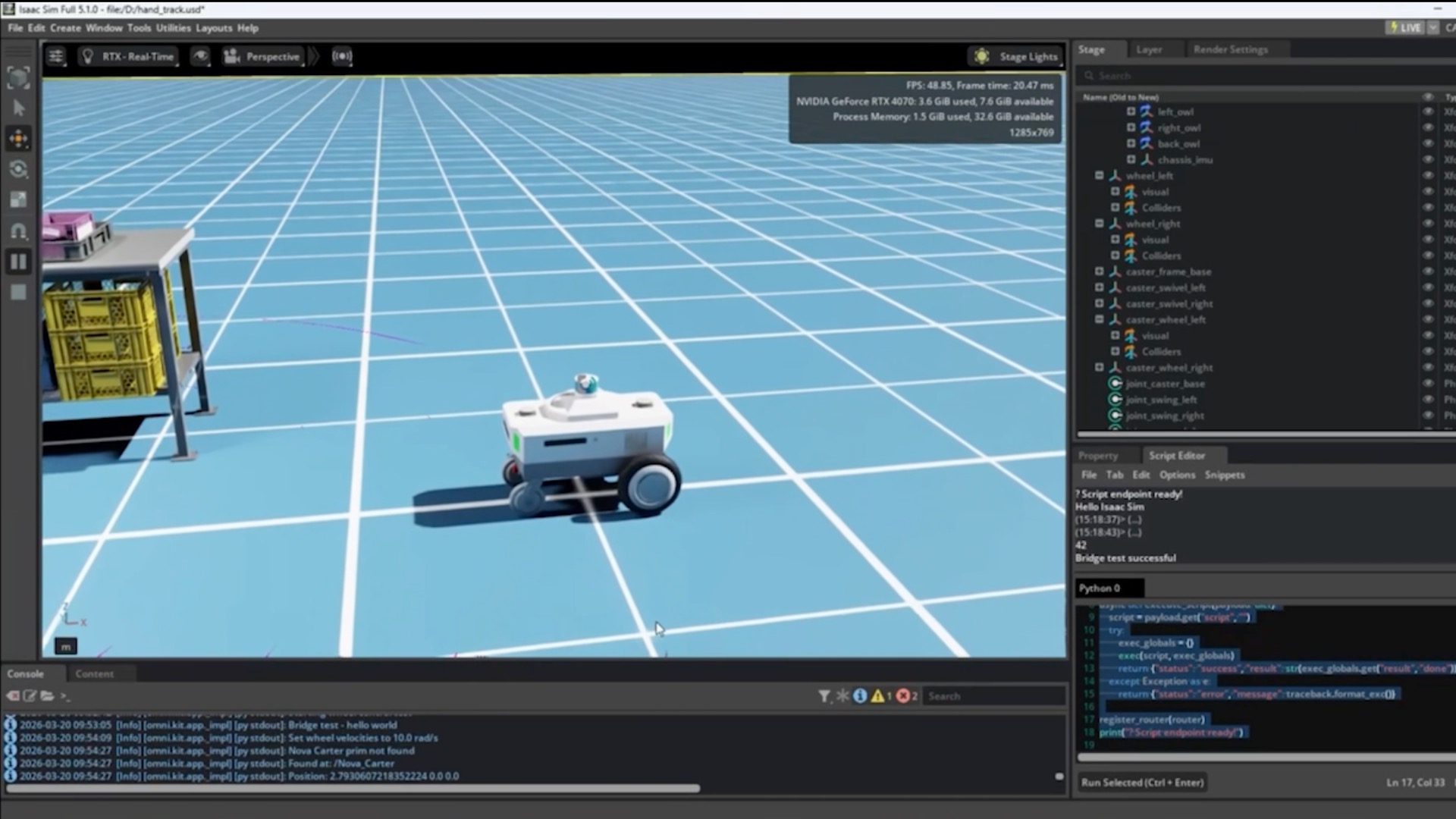

The source is a testimonial from a developer (Kimmo N. on X) describing their team's experience with CodeRabbit, an AI-powered code review tool. The core problem identified is the "bus factor": the risk to a project if a key person (here, a senior developer with five years of context) is suddenly unavailable. Without that person, the team is left "digging through old messages" to understand past architectural decisions.

Kimmo states that CodeRabbit "somehow just remembers how we build things," allowing developers to get answers about the codebase's history and rationale without "bother[ing] anyone or start[ing] from zero." The tool's knowledge is contextual to the specific team's repository history.

Context: The 'Bus Factor' & AI Code Assistants

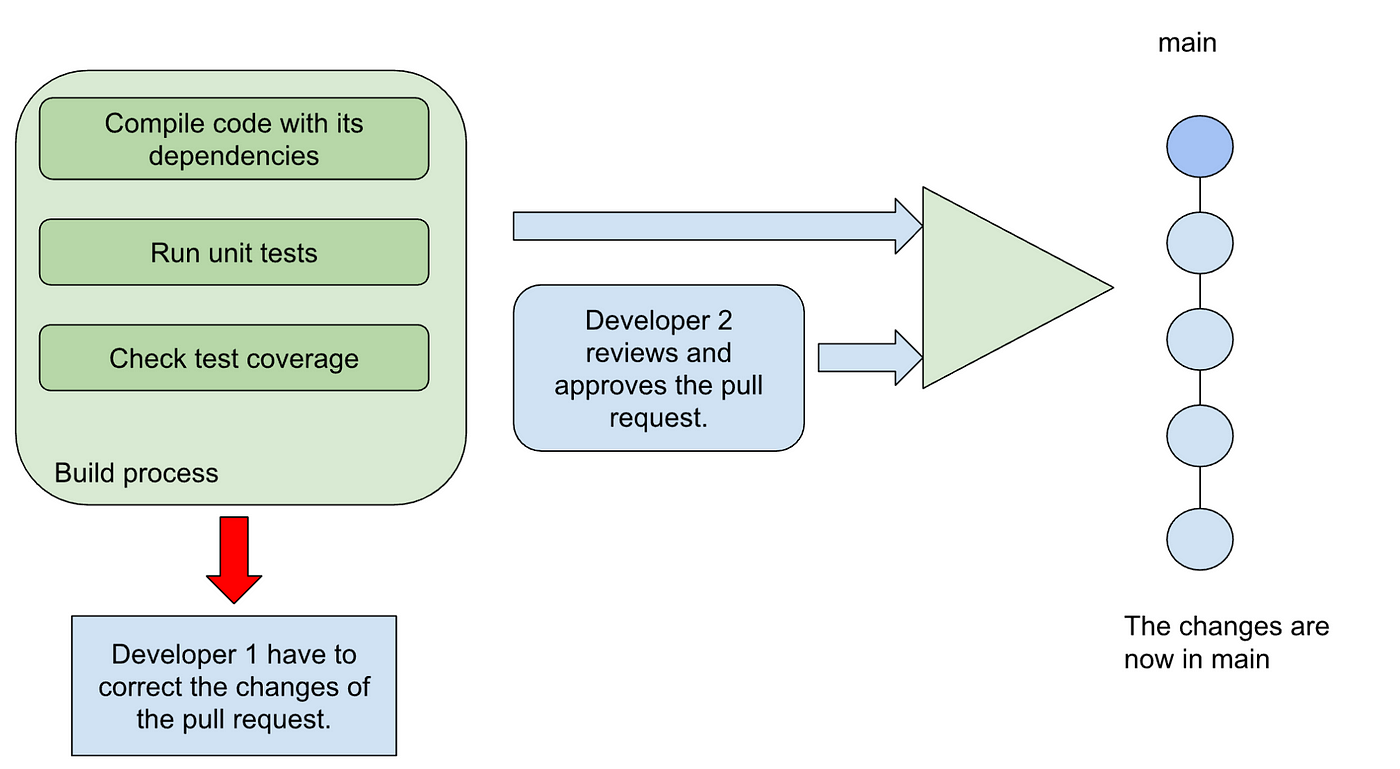

The "bus factor" is a long-standing risk in software engineering. While AI coding assistants like GitHub Copilot, Amazon CodeWhisperer, and Codium have primarily focused on generating new code or unit tests, capturing and explaining existing rationale is a different challenge. It requires the AI to process not just the current code state but also the historical evolution, commit messages, and possibly PR discussions to infer "why a weird decision was made."

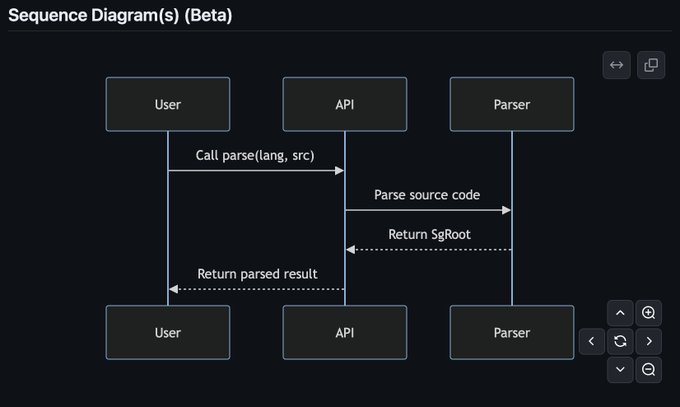

CodeRabbit's approach appears to involve creating a persistent, project-specific knowledge graph or embedding index from the codebase history. This differs from the standard, stateless autocomplete model of many assistants. When a developer asks a question, the tool can ground its answer in the specific historical context of that project.

What This Means in Practice

For development teams, this functionality could translate to:

- Reduced onboarding time: New engineers can query the AI for project context.

- Faster blocker resolution: Developers stuck on legacy code can ask "why is this built this way?"

- Preserved institutional knowledge: The rationale for decisions is captured and made searchable, reducing tribal knowledge.

gentic.news Analysis

This user report points to a significant, under-discussed evolution in AI coding tools: the shift from being a coding co-pilot to a project historian. While major players have focused on raw code generation metrics (like lines of code accepted), CodeRabbit is tackling a different part of the developer productivity stack—knowledge retention and transfer. This aligns with a broader trend we noted in our coverage of Augment's $252M raise in 2024, where the company emphasized its AI's deep codebase understanding for enterprise teams.

The capability described—an AI that "remembers" a team's build patterns—requires robust, long-context retrieval. This connects directly to the architectural race for longer context windows in foundation models, such as Google's Gemini 1.5 Pro with its 1M token context or Anthropic's 200K context Claude. CodeRabbit is likely building a specialized RAG (Retrieval-Augmented Generation) pipeline on top of such a model, continuously indexing a repository's commit history, PRs, and comments to create a queryable knowledge base. The business implication is clear: tools that reduce critical dependency on individual engineers directly address enterprise risk management, a stronger purchasing driver than mere code completion speed.
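To make the retrieval idea concrete, here is a minimal sketch of the "retrieve, then ground the answer" pattern over repository history. It is not CodeRabbit's implementation, which has not been published. It swaps a learned embedding model for plain TF-IDF cosine similarity so that it runs on the standard library alone, and every name in it (`HistoryRecord`, `HistoryIndex`, `build_prompt`, the sample commit IDs and messages) is hypothetical.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class HistoryRecord:
    record_id: str  # hypothetical: a commit hash or PR number
    text: str       # commit message or PR discussion text


def tokenize(text: str) -> list[str]:
    """Lowercase and split into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


class HistoryIndex:
    """Toy TF-IDF index standing in for an embedding index."""

    def __init__(self, records: list[HistoryRecord]):
        self.records = list(records)
        counts = [Counter(tokenize(r.text)) for r in self.records]
        n = len(self.records)
        doc_freq = Counter(tok for c in counts for tok in c)
        # Smoothed inverse document frequency per token.
        self.idf = {t: math.log((1 + n) / (1 + df)) + 1.0 for t, df in doc_freq.items()}
        self.vectors = [self._weigh(c) for c in counts]

    def _weigh(self, counts: Counter) -> dict[str, float]:
        """Return a unit-length TF-IDF vector; unknown tokens get weight 0."""
        vec = {t: tf * self.idf.get(t, 0.0) for t, tf in counts.items()}
        norm = math.sqrt(sum(w * w for w in vec.values()))
        return {t: w / norm for t, w in vec.items()} if norm else {}

    def search(self, question: str, k: int = 2) -> list[HistoryRecord]:
        """Return up to k records with non-zero cosine similarity, best first."""
        q = self._weigh(Counter(tokenize(question)))
        scored = [
            (sum(w * vec.get(t, 0.0) for t, w in q.items()), rec)
            for vec, rec in zip(self.vectors, self.records)
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [rec for score, rec in scored[:k] if score > 0]


def build_prompt(question: str, hits: list[HistoryRecord]) -> str:
    """Assemble the text a language model would be asked to answer from."""
    context = "\n".join(f"[{h.record_id}] {h.text}" for h in hits)
    return f"Answer using only this project history:\n{context}\n\nQuestion: {question}"


# Hypothetical sample history, for illustration only.
SAMPLE_HISTORY = [
    HistoryRecord("a1b2c3", "Switch session store from Redis to Postgres because of ops cost"),
    HistoryRecord("d4e5f6", "Add retry with backoff to payment webhook handler after duplicate charges"),
    HistoryRecord("0789ab", "Bump lint config"),
]

if __name__ == "__main__":
    index = HistoryIndex(SAMPLE_HISTORY)
    question = "Why do we retry the payment webhook?"
    print(build_prompt(question, index.search(question)))
```

The point of the sketch is the shape of the pipeline rather than the scoring function: history is indexed once and kept, a question pulls back the few most relevant records, and only those records are handed to the model as context.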

However, the testimonial leaves key technical questions unanswered. What is the source scope—just git log messages, or does it ingest Slack/Teams threads? How does it handle conflicting historical decisions? Without published benchmarks on knowledge accuracy, the claim remains an anecdotal positive signal. The next step for tools in this space will be to quantify the "context recovery accuracy" or the reduction in time spent searching for historical rationale.
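One way such a "context recovery accuracy" figure could be defined, purely as an assumption on our part, is a top-k hit rate: for a set of questions where a human has labeled the commit or PR that actually explains the decision, how often does the tool surface that record among its first k results? The function name, the shape of the labeled cases, and the `retrieve` callable below are all hypothetical.

```python
from typing import Callable, Sequence


def hit_rate_at_k(
    cases: Sequence[tuple[str, str]],
    retrieve: Callable[[str], Sequence[str]],
    k: int = 3,
) -> float:
    """Fraction of (question, expected_record_id) cases where the expected
    record appears in the first k IDs returned by `retrieve`."""
    if not cases:
        return 0.0
    hits = sum(1 for question, expected in cases if expected in list(retrieve(question))[:k])
    return hits / len(cases)
```

A measure like this says nothing about whether the generated explanation is correct, only whether the right source material was found, so it would be a lower bar than a full accuracy benchmark.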

Frequently Asked Questions

What is the 'bus factor' in software development?

The "bus factor" is a morbid but common term for the risk posed to a project if a key team member were to be hit by a bus (i.e., suddenly become unavailable). It measures the minimum number of team members who would need to disappear before a project stalls due to lost knowledge. A low bus factor (e.g., 1) indicates dangerous over-reliance on a single individual; a higher number means knowledge is spread across more people.
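As a rough illustration of how the number can be estimated, the sketch below uses a greedy heuristic: repeatedly remove the person who knows the most files and count how many removals it takes before more than half of the files have nobody left who knows them. The 50% threshold, the `bus_factor` function name, and the file-to-author mapping are assumptions chosen for the example; in practice the mapping might be derived from commit authorship.

```python
from collections import Counter


def bus_factor(file_authors: dict[str, set[str]], threshold: float = 0.5) -> int:
    """Greedy estimate of the bus factor.

    Removes the author attached to the most files, one at a time, until the
    share of files with no remaining author exceeds `threshold`.
    """
    remaining = {path: set(authors) for path, authors in file_authors.items()}
    total = len(remaining)
    removed = 0
    while total:
        orphaned = sum(1 for authors in remaining.values() if not authors)
        if orphaned / total > threshold:
            break
        counts = Counter(a for authors in remaining.values() for a in authors)
        if not counts:
            break  # nobody left to remove
        top_author, _ = counts.most_common(1)[0]
        for authors in remaining.values():
            authors.discard(top_author)
        removed += 1
    return removed


# Hypothetical ownership map: one person is the only author of most files.
risky = {
    "billing.py": {"alice"},
    "auth.py": {"alice"},
    "sync.py": {"alice"},
    "docs.py": {"bob"},
}
print(bus_factor(risky))  # 1: losing alice orphans three of four files
```

A result of 1 is the situation described in the testimonial: a single departure leaves most of the codebase without anyone who can explain it.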

How is CodeRabbit different from GitHub Copilot?

GitHub Copilot primarily functions as an autocomplete tool, suggesting the next lines of code in real-time based on the current file and recently opened files. It is generally stateless across sessions. CodeRabbit, based on this description, appears to build a persistent, project-specific memory by analyzing the entire codebase history, including past commits and decisions, allowing it to answer "why" questions about the project's architecture.

Does this mean AI will replace senior developers?

No. This technology aims to amplify and preserve the value of senior developers, not replace them. It captures their institutional knowledge and decision-making rationale, making it accessible to the entire team. This reduces bottlenecks and allows senior engineers to focus on higher-level problems rather than constantly providing historical context.

What are the potential risks of an AI remembering codebase history?

Risks include the AI perpetuating outdated or incorrect rationales if the historical data contains errors. There are also security and privacy considerations: the AI would have access to potentially sensitive information in commit histories or code comments. Teams would need to ensure the tool is properly configured to exclude secrets and comply with data governance policies.
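As a sketch of what excluding secrets could look like in practice, history text can be passed through a redaction step before it is indexed. The two patterns below are illustrative assumptions, not an exhaustive rule set and not a description of how CodeRabbit is configured; a real deployment would more likely rely on a dedicated secret scanner.

```python
import re

# Shape of an AWS access key ID: "AKIA" followed by 16 uppercase letters or digits.
AWS_KEY_ID = re.compile(r"AKIA[0-9A-Z]{16}")

# Assignments such as "password=...", "token: ...", "api_key = ..." (illustrative list).
ASSIGNMENT = re.compile(r"(?i)\b(password|passwd|secret|api[_-]?key|token)(\s*[:=]\s*)\S+")


def redact(text: str) -> str:
    """Replace likely secrets with a placeholder before the text is indexed."""
    text = AWS_KEY_ID.sub("[REDACTED]", text)
    # Keep the key name and separator so the message stays readable.
    return ASSIGNMENT.sub(lambda m: f"{m.group(1)}{m.group(2)}[REDACTED]", text)


print(redact("set password=hunter2 for db"))  # set password=[REDACTED] for db
```

Redacting at ingestion time, rather than at query time, means the sensitive value never reaches the index in the first place.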