CoreWeave beat 10 other inference providers on speed and price-performance for Moonshot AI's Kimi K2.6, per Artificial Analysis. The benchmark underscores how open-weight models shift inference competition from proprietary APIs to infrastructure providers.

Key facts

- 11 inference providers tested on Kimi K2.6.

- CoreWeave delivered top speed and price-performance.

- Kimi K2.6 released in late April 2026 as an open-weights model.

- Moonshot AI valued over $18B, backed by Alibaba, Tencent.

Cloud GPU provider CoreWeave announced it achieved the strongest combination of speed and price-performance for Moonshot AI's Kimi K2.6 in independent benchmarking by Artificial Analysis. The evaluation covered 11 inference providers on the current top open-source model, with CoreWeave simultaneously delivering the highest output speed and most cost-efficient performance [per CoreWeave's announcement].

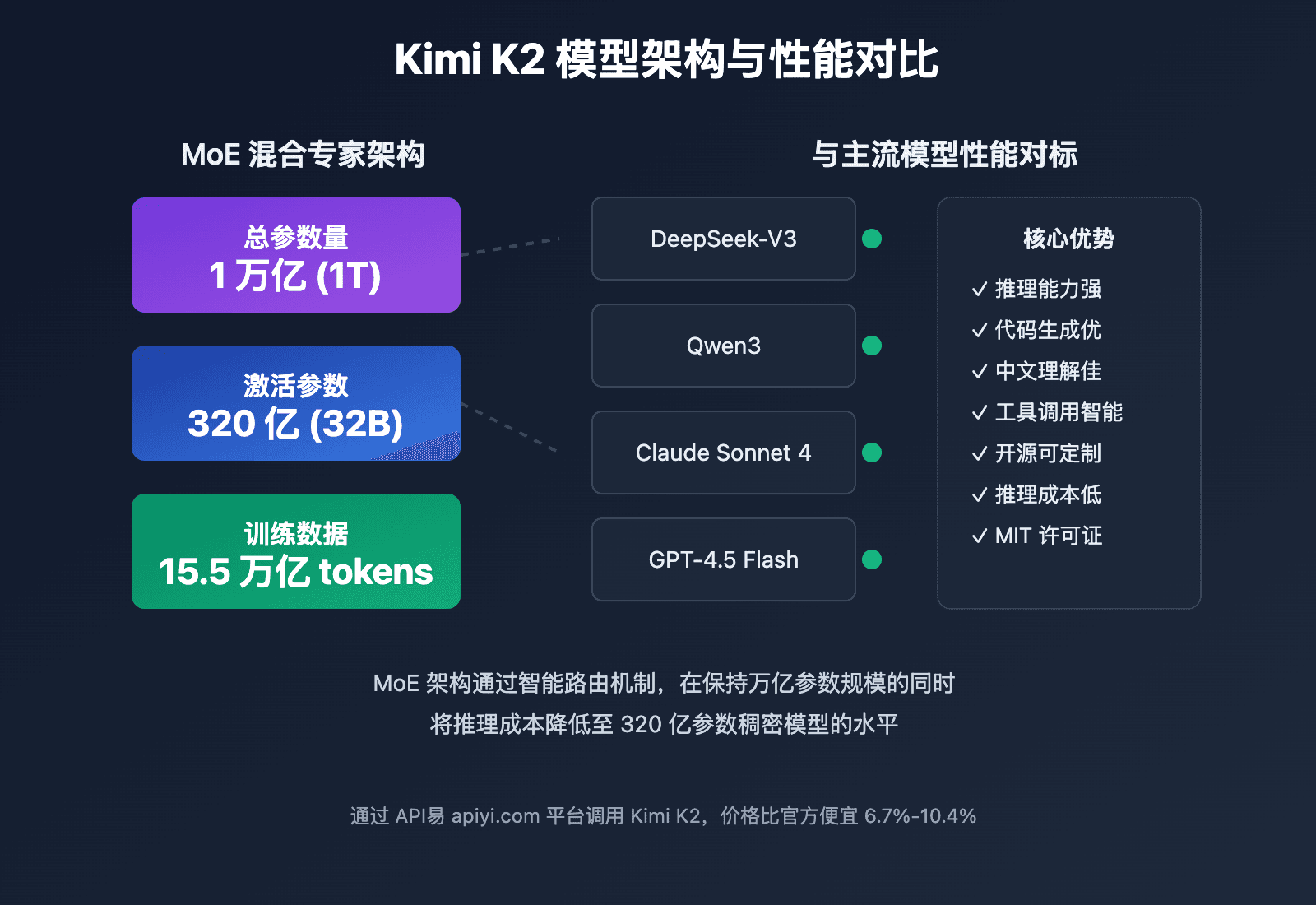

Kimi K2.6 is Moonshot AI's open-weights reasoning model released in late April 2026, following the K2.5 trillion-parameter multimodal model. The K2.6 variant focuses on coding and reasoning, scoring top marks on SWE-Bench Pro and HumanEval with Tools benchmarks upon release [as previously reported].

Unique take: The benchmark result signals that the inference market is bifurcating. Proprietary model providers like OpenAI and Anthropic compete on capability and latency. For open-weight models like Kimi K2.6, the battleground has shifted to cloud infrastructure: who can run the same weights fastest and cheapest. CoreWeave's win here is a direct challenge to AWS, GCP, and Azure, which also offer GPU instances but may lack the specialized inference optimizations that CoreWeave has built.

CoreWeave did not disclose specific token-per-second numbers or dollar-per-million-token pricing in the announcement. Artificial Analysis typically publishes detailed provider rankings on its website, but those figures were not provided in the source material.

CoreWeave has been aggressive in building out its inference infrastructure, positioning itself as a low-cost alternative to hyperscalers for AI workloads. Moonshot AI, valued at over $18 billion and backed by Alibaba and Tencent, has made its models open-weight to encourage broad adoption, a strategy that benefits infrastructure providers like CoreWeave.

What to watch

Watch for Artificial Analysis to publish full provider rankings with token-per-second and cost-per-million-token data. Also watch whether AWS, GCP, or Azure respond with optimized inference offerings for Kimi K2.6 within the next 60 days.