Cursor releases SDK to run AI agents headlessly in CI/CD, shifting billing from seats to compute tokens.

Key Takeaways

- Cursor is releasing an SDK that turns its agent runtime into programmable infrastructure for headless use in CI/CD pipelines, internal tools, and third-party products.

- Revenue would scale with compute tokens rather than seats, because agents can run at high volume without a human in the loop.

What Happened

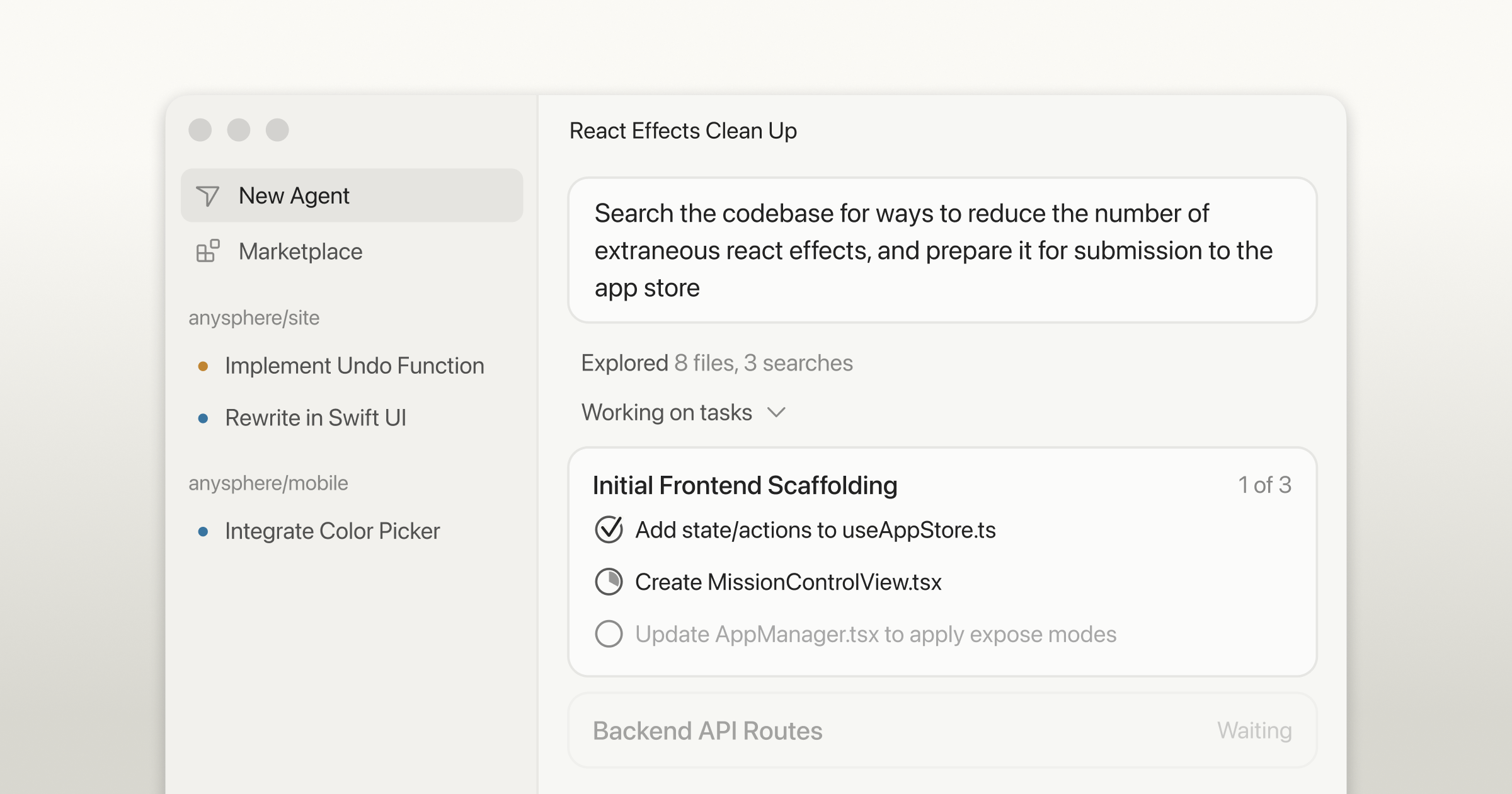

Cursor, the AI-native IDE known for its code completion and agentic features, is making a significant platform play. According to a tweet from @kimmonismus, Cursor is releasing an SDK that transforms its agent runtime into programmable infrastructure. This means developers can now run Cursor agents headlessly—without a graphical interface—in CI/CD pipelines, internal tools, and even third-party products.

Key Numbers

- Billing model shift: From per-seat to token-based compute consumption

- No human-in-the-loop: Agents can operate autonomously, driving higher volume

- SDK availability: Reported as released, though there is no official announcement, and exact pricing and token rates are not yet public

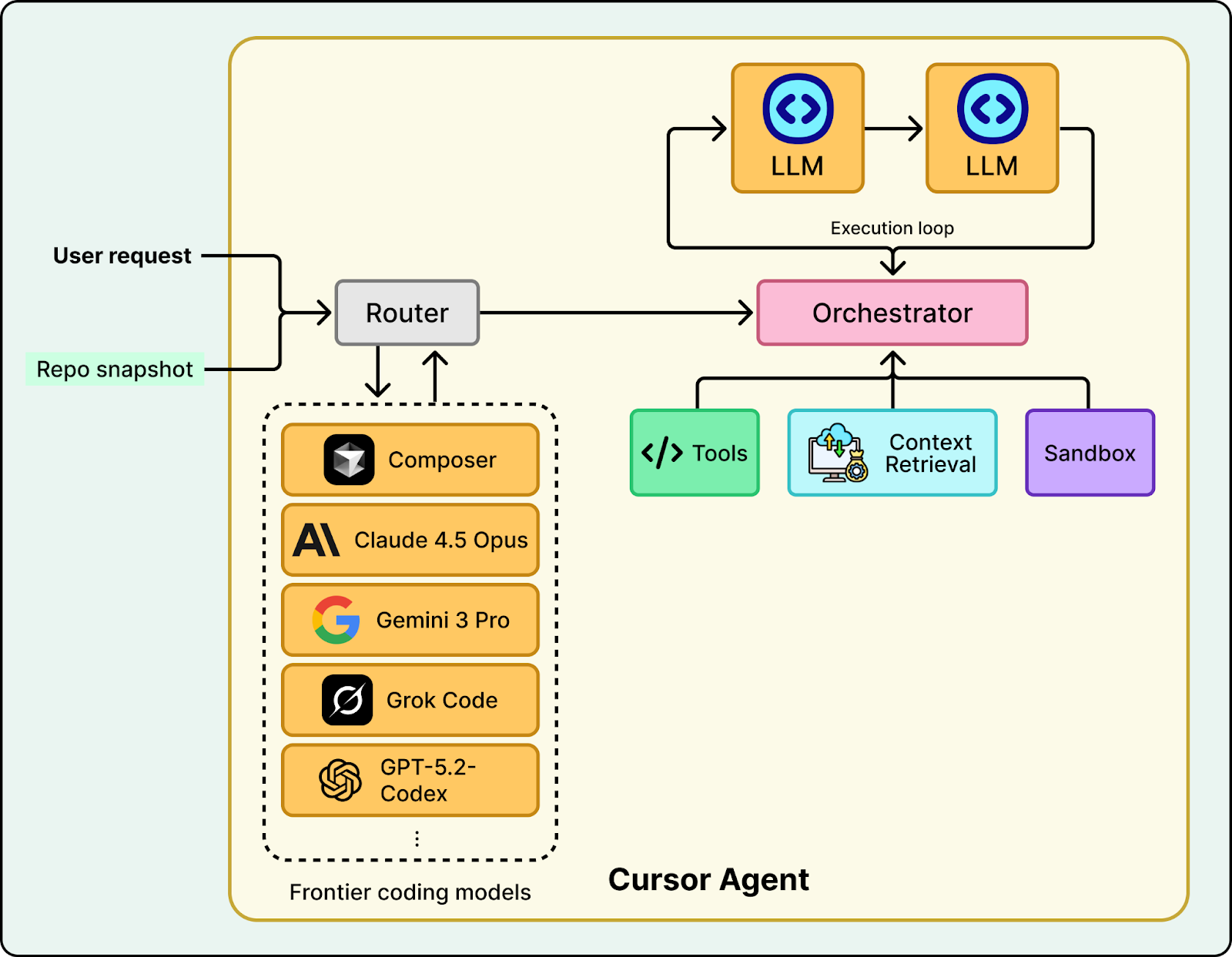

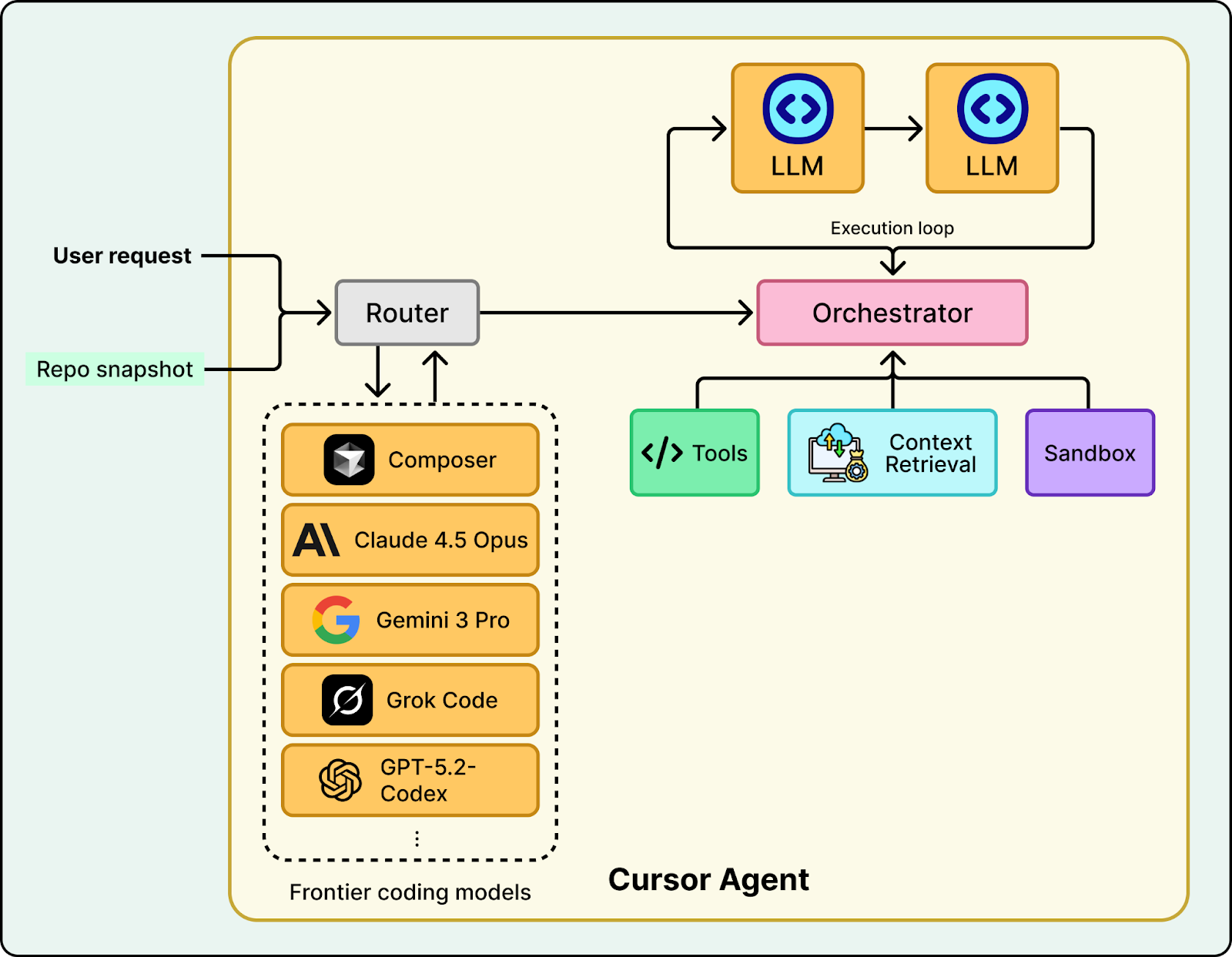

How It Works

The SDK exposes Cursor's agent runtime as an API. Developers can spin up agents programmatically, pass them tasks, and collect results—all without ever opening the IDE. This is analogous to how AWS Lambda turned serverless compute into an API, or how OpenAI's API turned GPT into a callable service.

Technical Details

- Agent runtime: The same core that powers Cursor's in-IDE agentic features (code generation, debugging, refactoring)

- Headless mode: Runs in CI/CD pipelines, background jobs, or serverless functions

- Token-based billing: Each agent invocation consumes tokens, which are billed to the user's account

- Integration points: Likely REST API or SDK client libraries for Python, JavaScript, etc.
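
The SDK's actual interface has not been published, so the following is only a sketch of the spin-up, task, and result flow described above. `AgentRuntime`, `AgentResult`, `run_task`, `run_batch`, and `EchoRuntime` are hypothetical names invented for illustration, not Cursor APIs. The stub runtime stands in for a real one so the example runs on its own.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class AgentResult:
    """Outcome of one headless agent run (hypothetical shape)."""
    output: str
    tokens_used: int


class AgentRuntime(Protocol):
    """Hypothetical interface; the real SDK surface is not public."""
    def run_task(self, prompt: str) -> AgentResult: ...


class EchoRuntime:
    """Local stand-in so the sketch runs without any network access."""
    def run_task(self, prompt: str) -> AgentResult:
        # Pretend each whitespace-separated word costs one token.
        return AgentResult(output=f"done: {prompt}", tokens_used=len(prompt.split()))


def run_batch(runtime: AgentRuntime, prompts: list[str]) -> list[AgentResult]:
    """Submit each task to the runtime and collect results in order."""
    return [runtime.run_task(p) for p in prompts]


results = run_batch(EchoRuntime(), ["add unit tests", "fix lint errors in src"])
total_tokens = sum(r.tokens_used for r in results)
```

Coding against a small interface like this would let a team swap the stub for a real client once the SDK's calls are documented, without touching the surrounding pipeline code.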

Why This Matters

If the report holds, this move changes Cursor's business model. Previously, Cursor was an IDE, a tool you open to write code, and revenue came from subscription seats. Now, by turning the agent runtime into programmable infrastructure, Cursor can:

- Scale revenue with compute, not seats: A single CI/CD pipeline could spin up thousands of agents per hour, each consuming tokens.

- Expand use cases: Beyond interactive coding, agents can now handle automated code review, test generation, dependency updates, and more.

- Embed into third-party products: Other tools can integrate Cursor agents as a service, paying Cursor for token consumption.
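
To make the seats-versus-compute point concrete, here is a back-of-the-envelope comparison. Every number is a placeholder chosen for illustration, since Cursor's token rates are not public, and `seat_revenue` and `token_revenue` are hypothetical helpers.

```python
def seat_revenue(seats: int, price_per_seat: float) -> float:
    """Monthly revenue under per-seat billing: fixed per developer."""
    return seats * price_per_seat


def token_revenue(runs_per_day: int, tokens_per_run: int,
                  price_per_million_tokens: float, days: int = 30) -> float:
    """Monthly revenue under token billing: scales with agent activity."""
    total_tokens = runs_per_day * tokens_per_run * days
    return total_tokens / 1_000_000 * price_per_million_tokens


# Placeholder inputs only; none of these figures come from Cursor.
by_seat = seat_revenue(seats=50, price_per_seat=20.0)
by_token = token_revenue(runs_per_day=500, tokens_per_run=40_000,
                         price_per_million_tokens=5.0)
```

Under these made-up inputs the token figure tracks pipeline activity rather than headcount: doubling `runs_per_day` doubles it with no new seats.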

What This Means in Practice

For engineering teams, this means you could have a CI/CD pipeline that automatically generates unit tests for every PR, runs them, and refactors failing code—all orchestrated by Cursor agents running headlessly. For platform teams, it means building internal developer portals that leverage Cursor agents on demand, billed per task.
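
One way to picture that workflow is a bounded generate, run, and refactor loop. The sketch below assumes the three steps are supplied as plain callables. `run_pr_pipeline`, `SuiteResult`, and the stub steps are hypothetical and do not call any Cursor API.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class SuiteResult:
    passed: bool
    failures: list[str]


def run_pr_pipeline(
    generate_tests: Callable[[], None],
    run_tests: Callable[[], SuiteResult],
    refactor: Callable[[list[str]], None],
    max_rounds: int = 3,
) -> Optional[int]:
    """Return the round in which tests passed, or None if the cap was hit."""
    generate_tests()
    for round_no in range(1, max_rounds + 1):
        outcome = run_tests()
        if outcome.passed:
            return round_no
        refactor(outcome.failures)  # hand the failures back to the agent
    return None


# Stub steps: the first test run fails, the run after one refactor passes.
state = {"fixed": False}


def fake_generate() -> None:
    pass


def fake_run() -> SuiteResult:
    failures = [] if state["fixed"] else ["test_parse"]
    return SuiteResult(passed=state["fixed"], failures=failures)


def fake_refactor(failures: list[str]) -> None:
    state["fixed"] = True


passed_in_round = run_pr_pipeline(fake_generate, fake_run, fake_refactor)
```

The `max_rounds` cap is a deliberate choice here: without it, an agent that never produces passing code would keep consuming tokens indefinitely.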

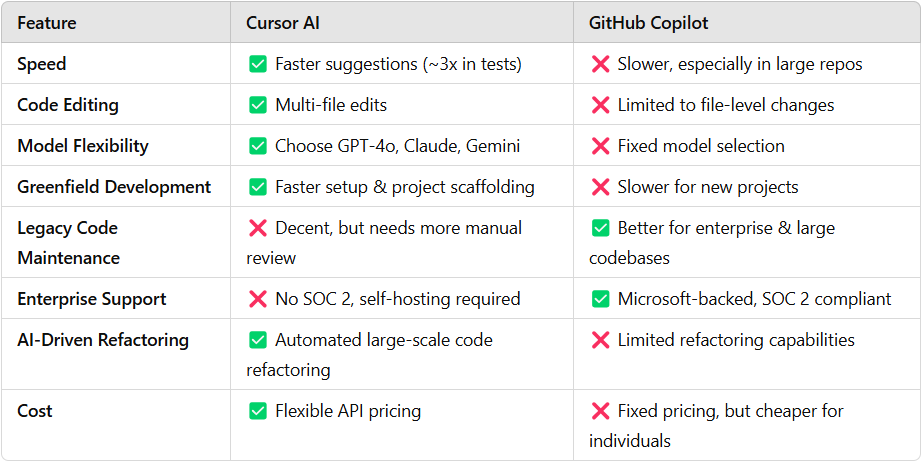

How It Compares

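| Feature | Cursor (before SDK) | Cursor (with SDK) | GitHub Copilot |
| --- | --- | --- | --- |
| Interface | IDE only | IDE + API | IDE + API (limited) |
| Headless mode | No | Yes | Yes (Copilot Chat API) |
| Billing model | Per-seat | Per-seat + token | Per-seat + compute |
| Agentic capabilities | In-IDE only | Programmable | Limited to chat |
| Third-party embedding | No | Yes | No |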

Limitations and Caveats

- No official announcement yet: The source is a tweet from an industry observer, not an official Cursor press release. Details may change.

- Pricing unclear: Token rates and volume discounts are not specified. If tokens are expensive, adoption may be limited to high-value use cases.

- Competition: GitHub Copilot already offers a limited API for headless use. Amazon CodeWhisperer (since folded into Amazon Q Developer) and others may follow.

- Quality concerns: Headless agents lack human oversight. Errors could cascade in automated pipelines without proper guardrails.
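
A minimal sketch of one such guardrail is a hard token budget that stops a pipeline before costs or errors compound. `TokenBudget` and `BudgetExceeded` are hypothetical names, and the per-run token estimates are placeholders, since how the real SDK reports usage is not known.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a run would push spending past the configured cap."""


class TokenBudget:
    def __init__(self, limit: int) -> None:
        self.limit = limit
        self.spent = 0

    def charge(self, tokens: int) -> None:
        """Record usage, refusing any charge that would exceed the limit."""
        if self.spent + tokens > self.limit:
            raise BudgetExceeded(f"{self.spent + tokens} > {self.limit} tokens")
        self.spent += tokens


budget = TokenBudget(limit=1_000)
completed = 0
try:
    for estimated_tokens in [400, 400, 400]:  # estimates for three queued runs
        budget.charge(estimated_tokens)  # reserve budget before launching
        completed += 1  # the agent run itself would happen here
except BudgetExceeded:
    pass  # halt the pipeline and escalate to a human reviewer
```

With these placeholder values the third run is refused, so two runs complete and the pipeline stops for review instead of continuing unattended.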

gentic.news Analysis

Cursor's platform play is a natural evolution for AI coding tools. We've seen this pattern before: what starts as a consumer product becomes infrastructure. OpenAI did it with GPT (chatbot → API), Anthropic with Claude (chat → API), and now Cursor is following suit with its agent runtime.

What's interesting is the timing. Cursor has been gaining traction as a premium AI IDE, but the real value may be in the agent runtime. By unbundling it from the IDE, Cursor can compete directly with GitHub Copilot's API and even with standalone agent frameworks like AutoGPT or LangChain agents—but with a more focused, code-specific runtime.

The billing shift from seats to tokens is critical. It aligns Cursor's incentives with usage, not headcount. This is the same model that made AWS and OpenAI successful: charge for what customers consume, not for access. For Cursor, this could mean much higher revenue per customer, especially for large engineering orgs that run hundreds of automated agents per day.

However, there's a risk: if the SDK is too expensive or too limited, developers may build their own agent infrastructure using open-source models (e.g., CodeLlama, DeepSeek-Coder) and orchestration frameworks (e.g., LangChain, CrewAI). Cursor needs to offer something compelling—either superior agent quality, lower cost, or seamless integration with existing tools—to justify the token spend.

Frequently Asked Questions

What can I do with the Cursor SDK?

The SDK allows you to run Cursor's AI agents programmatically, without the IDE. Use cases include automated code review in CI/CD pipelines, generating test suites for pull requests, refactoring codebases, and embedding AI coding capabilities into internal tools or third-party products.

How is Cursor's SDK different from GitHub Copilot's API?

Cursor's SDK focuses on agentic capabilities—agents that can plan, execute, and iterate on multi-step coding tasks. GitHub Copilot's API is more limited, primarily offering code completion and chat. Cursor's agents can potentially handle more complex, autonomous workflows.

Will the Cursor SDK replace the IDE?

No. The SDK is an additional product, not a replacement. The IDE remains for interactive development. The SDK targets automation, CI/CD, and embedding scenarios where a graphical interface isn't needed.

How much does the Cursor SDK cost?

Pricing details have not been officially announced. The model is reported to be token-based, meaning you pay for the tokens each agent invocation consumes. Expect pricing to be revealed when Cursor formally launches the SDK.