A developer has released an open-source proxy tool called free-claude-code that intercepts API calls from Anthropic's Claude Code desktop application and reroutes them to free NVIDIA NIM inference endpoints. Users keep the Claude Code interface, a specialized coding assistant, but their requests are answered by open models hosted on NVIDIA NIM rather than by Anthropic's Claude models, so they pay no Anthropic subscription fees and draw on free-tier NVIDIA API credits instead.

The project, announced on X (formerly Twitter), represents a direct workaround to vendor-specific pricing by leveraging NVIDIA's free NIM model hosting service. Users configure Claude Code to send requests to a local proxy server (localhost), which then translates the Anthropic API format to the NVIDIA NIM API format before forwarding the request.

Key Takeaways

- A developer released free-claude-code, a proxy that intercepts Claude Code's API calls and routes them to free NVIDIA NIM endpoints, unlocking free access to models like Kimi K2 and GLM 4.7.

- This bypasses Anthropic's subscription fees and adds remote execution via a Telegram bot.

What the Proxy Does

The free-claude-code proxy acts as a middleware layer with several key functions:

- API Translation: Converts Anthropic's API request/response schema to be compatible with NVIDIA NIM's API. This allows Claude Code, which is designed to communicate with Anthropic's servers, to work seamlessly with a different backend.

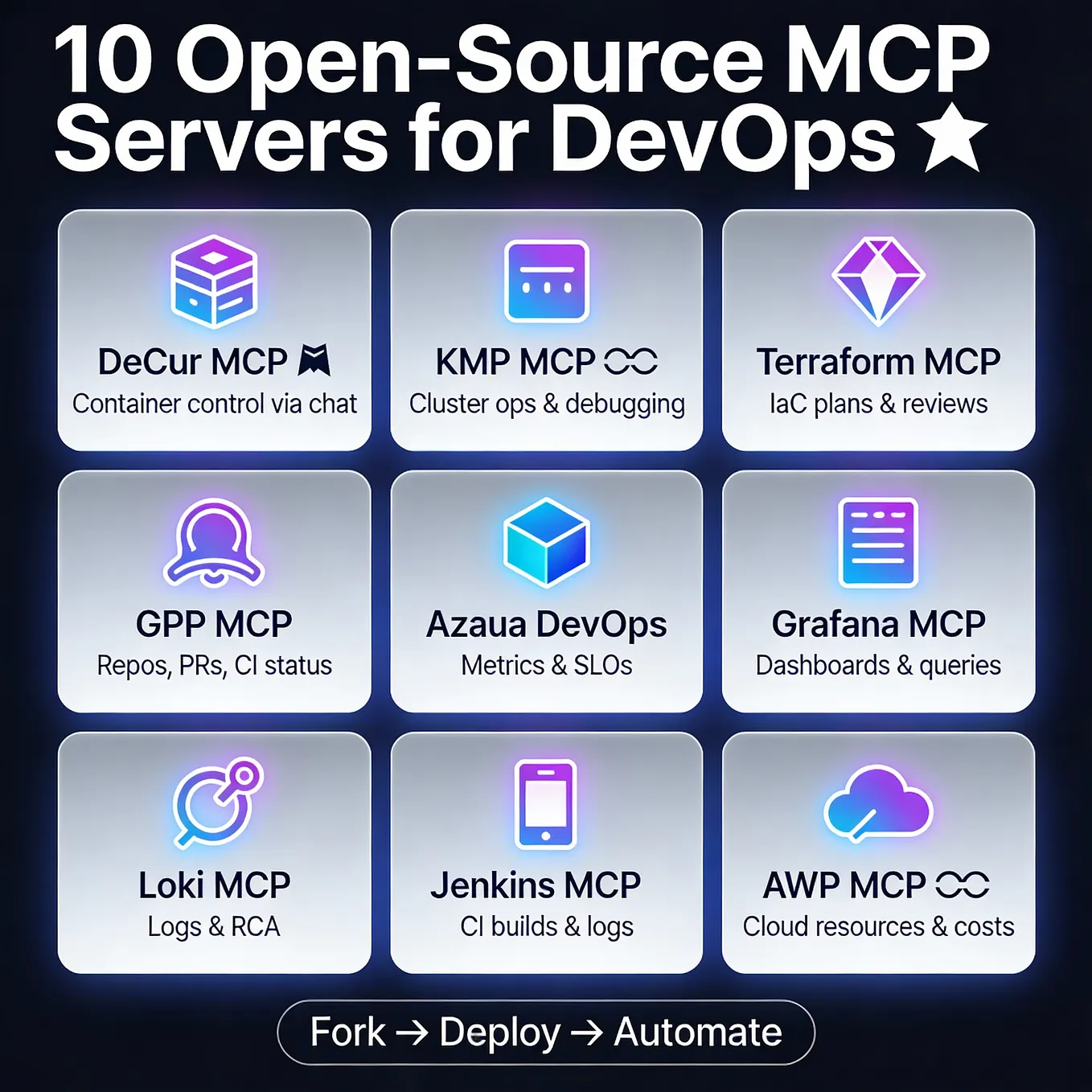

- Model Routing: Users can configure the proxy to use various open models hosted on NVIDIA NIM, including Kimi K2 (from Moonshot AI), GLM 4.7 (from Zhipu AI), MiniMax M2, and Devstral (Mistral AI's open coding model, built with All Hands AI).

- Free Tier Access: It utilizes NVIDIA's free API tier, which currently offers up to 40 requests per minute. This removes the primary cost barrier for using the Claude Code application.

- Feature Preservation: The proxy maintains core Claude Code features, including streaming of intermediate "thinking" tokens and execution of tool calls (like running code).

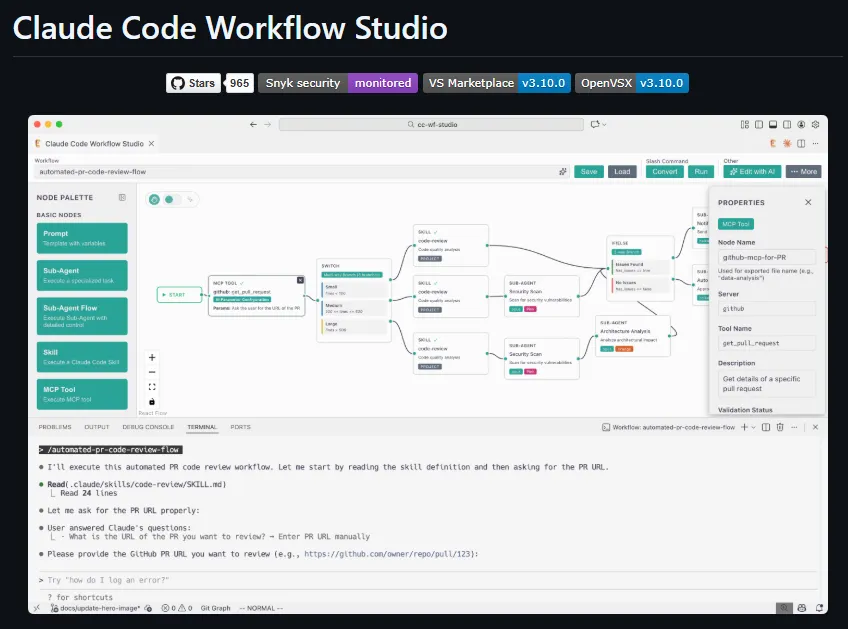

- Remote Control: An integrated Telegram bot allows users to send coding prompts and receive results from Claude Code directly on their mobile devices, turning the local application into a remotely accessible agent.
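The API translation and model routing functions listed above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the project's actual code: it assumes the NIM endpoint accepts OpenAI-style chat-completions payloads, and the names `anthropic_to_openai`, `route_model`, `MODEL_MAP`, and the model identifiers in the table are hypothetical placeholders. Tool calls and streaming events are left out to keep the sketch short.

```python
from typing import Any

# Hypothetical routing table: Claude model-name prefix -> backend model id.
# The ids on the right are placeholders, not verified NIM catalog names.
MODEL_MAP = {
    "claude-opus": "example/kimi-k2",
    "claude-sonnet": "example/glm-4.7",
}
DEFAULT_MODEL = "example/glm-4.7"


def route_model(requested: str) -> str:
    """Pick a backend model based on the prefix of the requested model name."""
    for prefix, target in MODEL_MAP.items():
        if requested.startswith(prefix):
            return target
    return DEFAULT_MODEL


def flatten_content(content: Any) -> str:
    """Content may be a plain string or a list of typed blocks; keep only text."""
    if isinstance(content, str):
        return content
    return "".join(b.get("text", "") for b in content if b.get("type") == "text")


def anthropic_to_openai(body: dict) -> dict:
    """Translate an Anthropic Messages-style request into an OpenAI-style chat request."""
    messages = []
    # The Anthropic format carries the system prompt as a top-level field;
    # OpenAI-style APIs expect it as the first message in the list.
    if body.get("system"):
        messages.append({"role": "system", "content": flatten_content(body["system"])})
    for msg in body.get("messages", []):
        messages.append({"role": msg["role"], "content": flatten_content(msg["content"])})
    out = {
        "model": route_model(body.get("model", "")),
        "messages": messages,
        "stream": bool(body.get("stream", False)),
    }
    if "max_tokens" in body:
        out["max_tokens"] = body["max_tokens"]
    return out
```

A real proxy would also have to map tool definitions, tool results, and the streamed response events back into the shape Claude Code expects, which is where most of the translation effort is likely to sit.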

Technical Setup and Implications

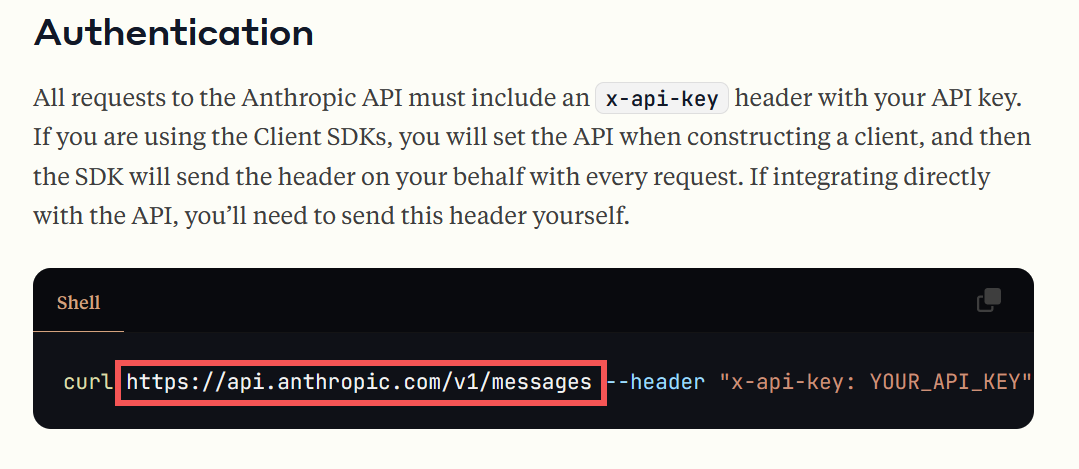

Setup involves cloning the GitHub repository, obtaining a free NVIDIA API key, and running the proxy server. Users then point the Claude Code application's endpoint configuration to http://localhost:port. The proxy handles all subsequent communication.
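As a rough sketch of the last step, the snippet below builds an environment in which the client's API endpoint points at the local proxy. It assumes Claude Code honors the `ANTHROPIC_BASE_URL` environment variable as its endpoint override and is launched with the `claude` command; the helper name `claude_env` is hypothetical, and the port is left as a parameter because the repository's default is not stated here.

```python
import os


def claude_env(port: int, host: str = "localhost") -> dict:
    """Return a copy of the current environment with the API base URL overridden."""
    if not 0 < port < 65536:
        raise ValueError(f"invalid port: {port}")
    env = dict(os.environ)
    # Assumption: the client reads ANTHROPIC_BASE_URL to decide where to send requests.
    env["ANTHROPIC_BASE_URL"] = f"http://{host}:{port}"
    return env


# Usage (not executed here; requires the proxy to be running on the chosen port):
#   import subprocess
#   subprocess.run(["claude"], env=claude_env(port=YOUR_PORT))
```

Overriding the variable for a single child process, rather than exporting it globally, keeps other tools on the machine talking to their normal endpoints.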

This development sits at the intersection of several trends: the proliferation of capable open-source coding models, the availability of free inference tiers from cloud providers like NVIDIA, and community-driven tools to bypass closed ecosystem lock-in. Unlike model aggregation services like OpenRouter—which provide a unified paid API for many models—this tool specifically targets a single, popular desktop application and redirects its traffic to a free alternative.

For developers, the immediate impact is that the Claude Code interface can be used at no cost. The longer-term implication is a demonstration of how application-layer proxies can decouple front-end interfaces from their intended back-end services, increasing user choice and creating pressure on commercial API providers to compete on price and openness.

The project is openly available on GitHub under the name free-claude-code.

gentic.news Analysis

This tool is a tactical exploit of the current AI infrastructure landscape. It directly leverages NVIDIA's strategy to seed developer adoption by offering free NIM inference credits—a move we covered in our analysis of NVIDIA's 2025 developer conference. By converting Anthropic's proprietary API calls, the proxy effectively turns NVIDIA's loss-leading free tier into a subsidy for users of a competitor's application.

This follows a pattern of community tools emerging to "crack open" closed AI ecosystems. We saw similar dynamics with early reverse-engineering of ChatGPT plugins and proxies for Midjourney's API. The specific targeting of Claude Code is significant because it's a product Anthropic has positioned as a premium, subscription-based coding copilot. The success of this proxy could pressure Anthropic to reconsider its pricing model or offer a limited free tier, similar to how GitHub Copilot offers free access to verified students and open-source maintainers.

The integration of a Telegram bot for remote control is an innovative twist that aligns with the growing "AI agent" trend. It transforms Claude Code from a local desktop tool into a remotely triggerable service, previewing a future where coding assistants are ambient and context-agnostic. This development also highlights the robustness of the open-source model ecosystem—models like GLM 4.7 and Kimi K2 are now capable enough to be drop-in replacements for closed models in specific tasks like coding, which was less true just a year ago.

Frequently Asked Questions

Is using free-claude-code legal?

The legality depends on Anthropic's Terms of Service for the Claude Code application. While the proxy itself is open-source software, using it to circumvent intended payment mechanisms for a proprietary application may violate the ToS. Users should review the relevant terms. The proxy does not modify the Claude Code binary itself; it only intercepts network traffic.

What are the limitations of the free NVIDIA NIM tier?

The primary limitation is the rate limit of 40 requests per minute. For individual developers, this is typically sufficient, but it could be a bottleneck for heavy, automated usage. The free tier also has a limited selection of models compared to the full NVIDIA catalog, and the available models may not be the latest versions.
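For automated usage, one way to stay under a 40-requests-per-minute cap is a client-side sliding-window limiter. The sketch below is illustrative only and not taken from the project: the class name `SlidingWindowLimiter` is hypothetical, and it assumes the limit is counted over a rolling 60-second window, which may not match how NVIDIA actually enforces it.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Allow at most max_calls within any rolling window of `period` seconds."""

    def __init__(self, max_calls: int = 40, period: float = 60.0, clock=time.monotonic):
        self.max_calls = max_calls
        self.period = period
        self.clock = clock          # injectable so the limiter can be tested
        self.calls = deque()        # timestamps of recent calls, oldest first

    def wait_time(self) -> float:
        """Seconds to wait before the next call is allowed (0.0 if allowed now)."""
        now = self.clock()
        # Drop timestamps that have aged out of the window.
        while self.calls and now - self.calls[0] >= self.period:
            self.calls.popleft()
        if len(self.calls) < self.max_calls:
            return 0.0
        return self.period - (now - self.calls[0])

    def acquire(self) -> None:
        """Block until a call is allowed, then record it."""
        delay = self.wait_time()
        if delay > 0:
            time.sleep(delay)
        self.calls.append(self.clock())
```

Calling `acquire()` before each forwarded request would make bursts wait locally instead of being rejected upstream.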

How does performance compare to native Claude Code?

Performance will depend entirely on the chosen alternative model (e.g., Kimi K2, GLM 4.7). These are different architectures from Anthropic's Claude models and will have different strengths and weaknesses in code generation, reasoning, and tool use. There is no guarantee of parity. The proxy adds minimal latency for the API translation, but the main variable is the inference speed and quality of the NVIDIA-hosted model.

Can this proxy be used for other Anthropic applications?

The current free-claude-code proxy is specifically engineered to handle the API calls and features of the Claude Code desktop application. It is not a general-purpose Anthropic API proxy. However, its existence demonstrates the technique, and similar tools could be developed for other applications that use identifiable API patterns.