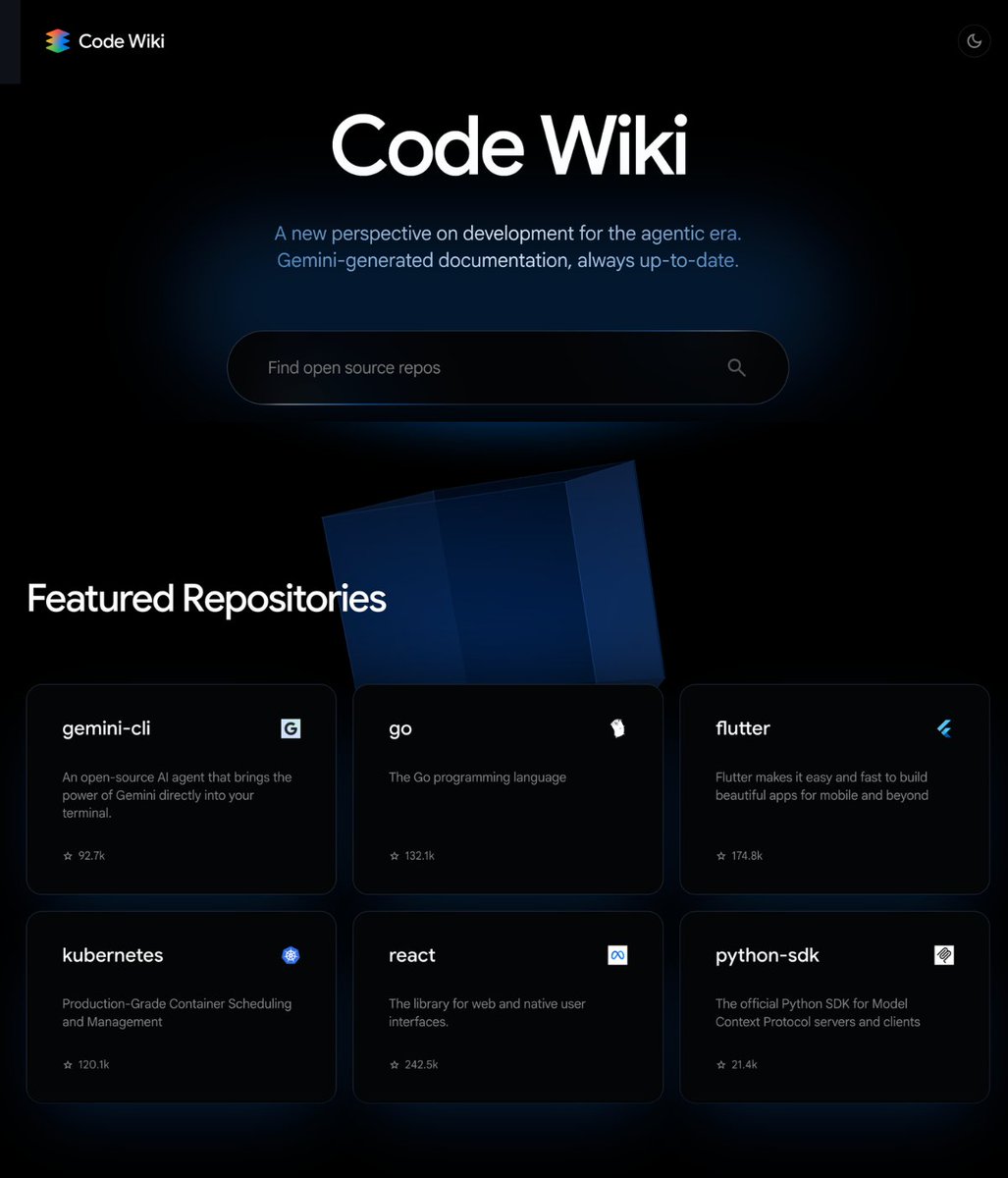

Gemini CLI, the command-line interface for Google's Gemini models, has launched a new feature called Subagents. It lets developers run multiple specialized AI agents in parallel, with each subagent operating in its own isolated context window and following its own custom system instructions.

Key Takeaways

- The Gemini CLI tool has launched a 'Subagents' feature, allowing users to run multiple specialized AI agents concurrently, each with its own isolated context and system prompt.

- That isolation enables more complex, modular workflows by keeping one task's instructions from bleeding into another.

What Happened

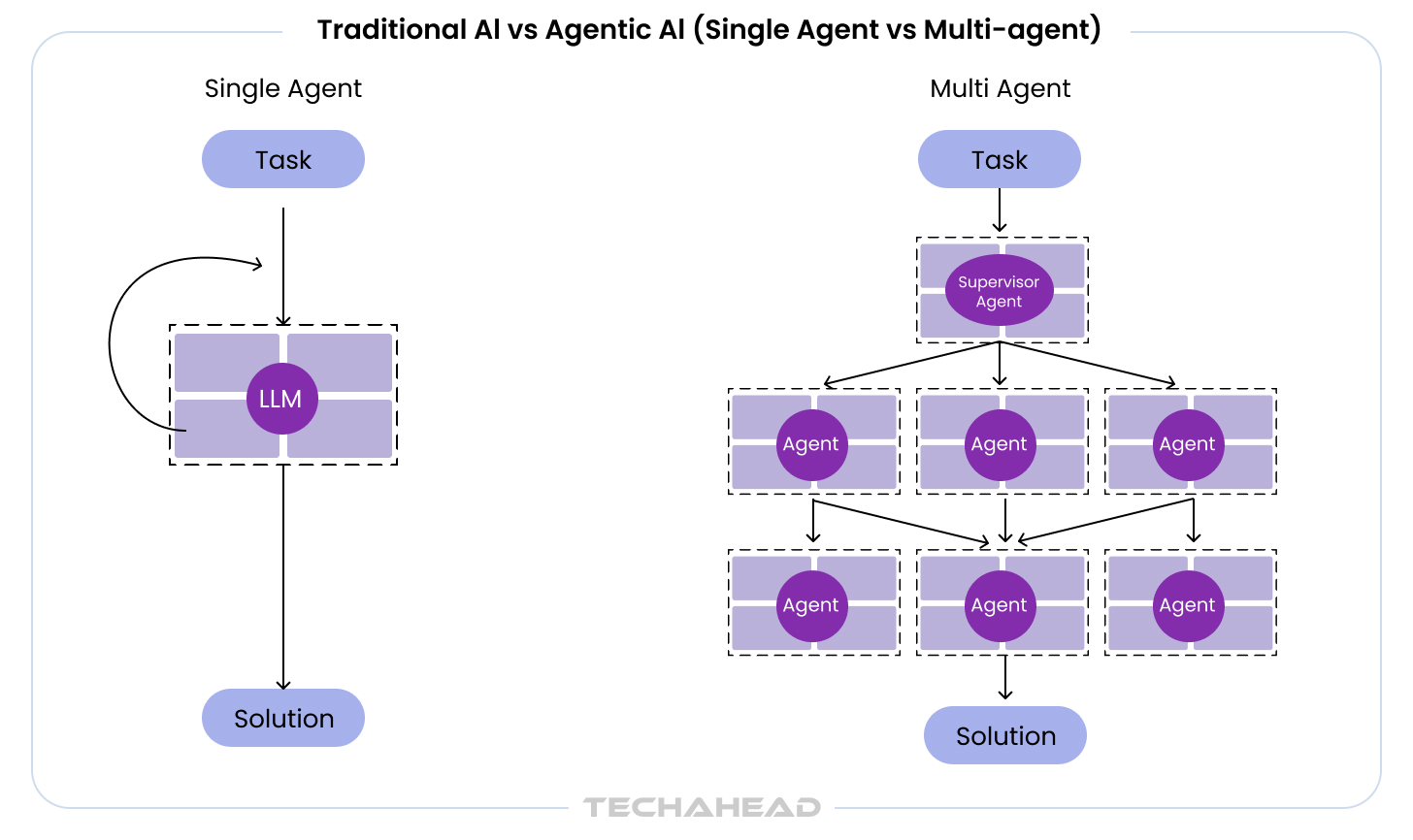

A brief announcement from the official @geminicli account on X (formerly Twitter) revealed the feature, stating it had been a "Long time in the making." The core capability is that each subagent maintains separation from others, preventing cross-contamination of context or instructions. This architectural choice is designed for building more complex, multi-step AI workflows where different agents handle discrete parts of a task.

Context

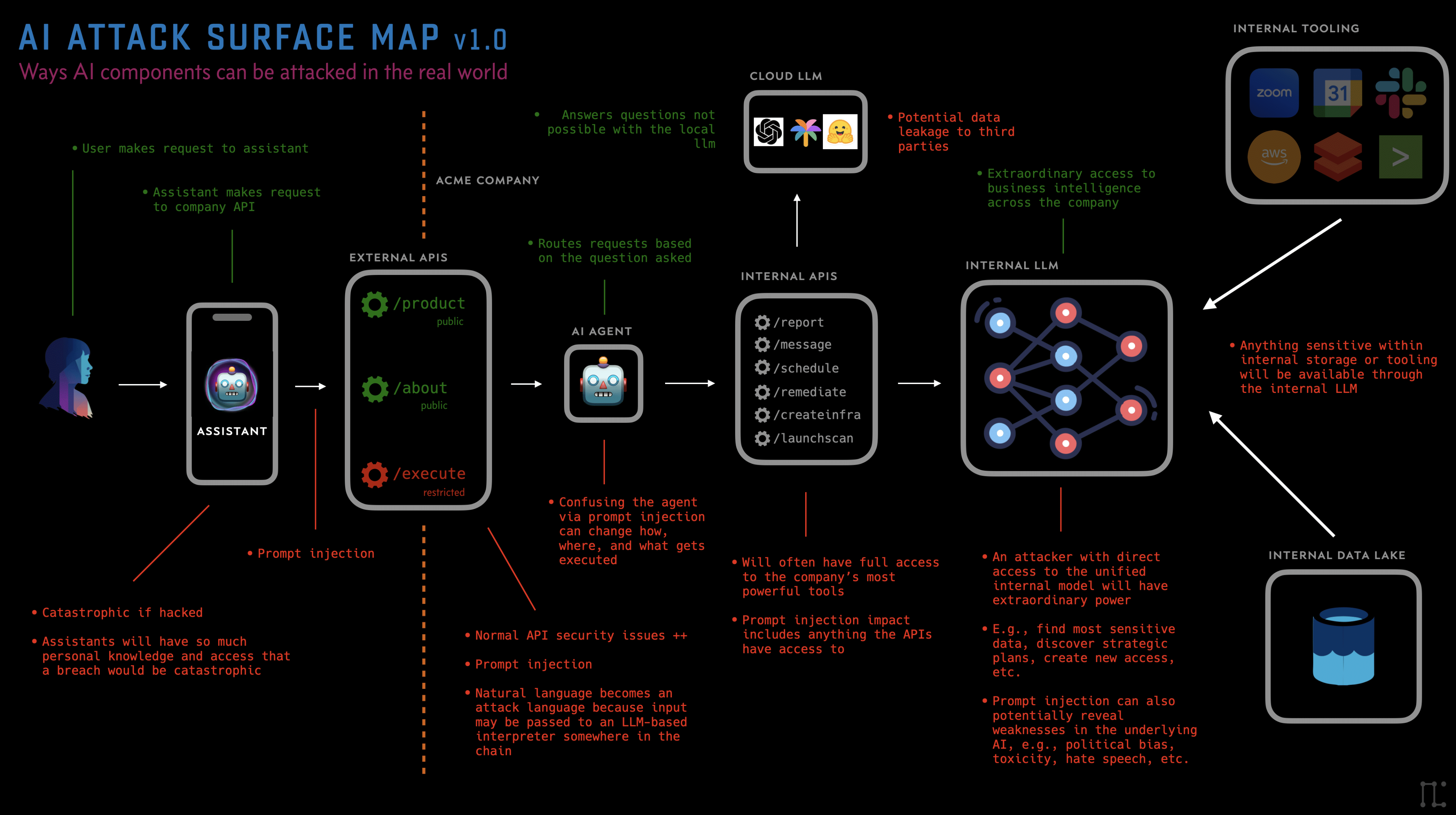

Gemini CLI is a developer tool that provides terminal-based access to Google's Gemini family of large language models (LLMs). The introduction of a subagent system moves it beyond a simple chat interface into a lightweight framework for orchestrating AI-powered tasks. Managing context is a known challenge in agentic systems, where a single, long conversation history can lead to performance degradation or prompt hijacking. Subagents address that directly by giving each agent its own natively separated context window.

Technical Implications

While the announcement lacks specific benchmarks, the feature's design implies several technical use cases:

- Parallel Processing: Different subagents can work on separate problems simultaneously, such as one analyzing code while another drafts documentation.

- Role Specialization: Each subagent can be given a unique system prompt (e.g., "You are a security auditor," "You are a UX writer"), allowing a single CLI session to switch between expert roles without manual context clearing.

- Memory Management: Isolated contexts keep each conversation history short and focused, so it is less likely to confuse the model or use up the token limit on irrelevant material.
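
The three ideas above can be illustrated with a small conceptual sketch. It is not Gemini CLI's API, which the announcement does not describe: the `Subagent` class, `fake_model`, and `run_in_parallel` are hypothetical names, and the model call is a stub. It only shows what "one system prompt and one private history per agent, run concurrently" looks like in code.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


def fake_model(system_prompt: str, history: list[str]) -> str:
    """Placeholder for a real model call; it only reports the context it was given."""
    return f"[{system_prompt}] saw {len(history)} message(s)"


@dataclass
class Subagent:
    """Hypothetical stand-in for a subagent: one system prompt, one private history."""
    name: str
    system_prompt: str
    history: list[str] = field(default_factory=list)

    def run(self, task: str) -> str:
        # Only this agent's own history is passed to the model; nothing is shared.
        self.history.append(f"user: {task}")
        reply = fake_model(self.system_prompt, self.history)
        self.history.append(f"assistant: {reply}")
        return reply


def run_in_parallel(jobs: list[tuple[Subagent, str]]) -> dict[str, str]:
    """Run each (agent, task) pair on its own thread and collect replies by agent name."""
    with ThreadPoolExecutor(max_workers=max(1, len(jobs))) as pool:
        futures = {agent.name: pool.submit(agent.run, task) for agent, task in jobs}
        return {name: future.result() for name, future in futures.items()}


auditor = Subagent("auditor", "You are a security auditor")
writer = Subagent("writer", "You are a UX writer")
results = run_in_parallel([
    (auditor, "Review auth.py for injection risks"),
    (writer, "Draft the onboarding tooltip copy"),
])
```

After the run, `auditor.history` contains only the audit task and its reply, and `writer.history` contains only the copywriting exchange, which is the separation the feature is described as providing.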

gentic.news Analysis

This is a pragmatic, developer-focused update that aligns with the broader industry trend of moving from monolithic LLM calls to orchestrated, multi-agent workflows. It positions Gemini CLI against other emerging agent frameworks like LangChain or Microsoft's AutoGen, but within the native Google ecosystem. The emphasis on isolated context windows is particularly noteworthy; it's a direct solution to a common pain point where agents forget their initial instructions or become contaminated by user queries meant for other tasks.

This follows Google's continued strategy of deepening its developer tools for Gemini, competing for mindshare in an environment where OpenAI's API and CLI tools are widely adopted. By baking agent-like capabilities directly into its CLI, Google lowers the barrier to entry for building compound AI systems with Gemini. However, the real test will be in the implementation details not yet revealed: how subagents communicate, whether they can share data securely, and how resource-intensive parallel execution becomes.

Frequently Asked Questions

What is Gemini CLI?

Gemini CLI is a command-line interface tool provided by Google that allows developers to interact with Gemini AI models directly from their terminal. It's used for scripting, automation, and integrating AI capabilities into development workflows without a graphical interface.

How do Subagents differ from just starting a new chat?

While a new chat also provides a fresh context, subagents are designed to run in parallel within a single orchestrated session. This allows for managed concurrency, which is harder to achieve by manually switching between independent chat threads. Whether subagents can also communicate with one another has not been confirmed.

Can Subagents talk to each other?

The initial announcement does not specify a built-in communication mechanism between subagents. Their primary defined feature is isolation (separate context, custom instructions). Coordination would likely need to be handled by the user's external scripting logic that manages the CLI.
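
A minimal sketch of what such external coordination could look like, under the assumption that the calling script is the only channel between agents. The `make_agent` and `pipeline` helpers are hypothetical and the agents are stubs rather than real Gemini CLI subagents. The script passes one agent's reply to the next as plain text, so no agent ever reads another's context.

```python
from typing import Callable

# Hypothetical: each agent is modelled as a function from task text to reply text.
Agent = Callable[[str], str]


def make_agent(system_prompt: str) -> Agent:
    """Build a stub agent with its own private message list (placeholder for a real subagent)."""
    history: list[str] = []

    def agent(task: str) -> str:
        history.append(task)
        # Placeholder reply; a real agent would call a model here.
        return f"{system_prompt} -> handled: {task}"

    return agent


def pipeline(stages: list[Agent], initial_task: str) -> str:
    """Feed each stage's reply to the next stage; only the reply text crosses agents."""
    message = initial_task
    for stage in stages:
        message = stage(message)
    return message


analyst = make_agent("analyst")
documenter = make_agent("documenter")
final = pipeline([analyst, documenter], "summarize parse()")
```

The design choice here is that the orchestrating script, not the agents, decides what crosses the boundary, which keeps the isolation guarantee intact while still allowing multi-step workflows.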

Is this feature available for all Gemini models?

The announcement does not specify model compatibility. Gemini CLI typically works with more than one Gemini model tier (for example, Pro and Flash). The Subagents feature is likely a framework-level capability of the CLI itself, but its performance may vary depending on the underlying model's context window size and capability.