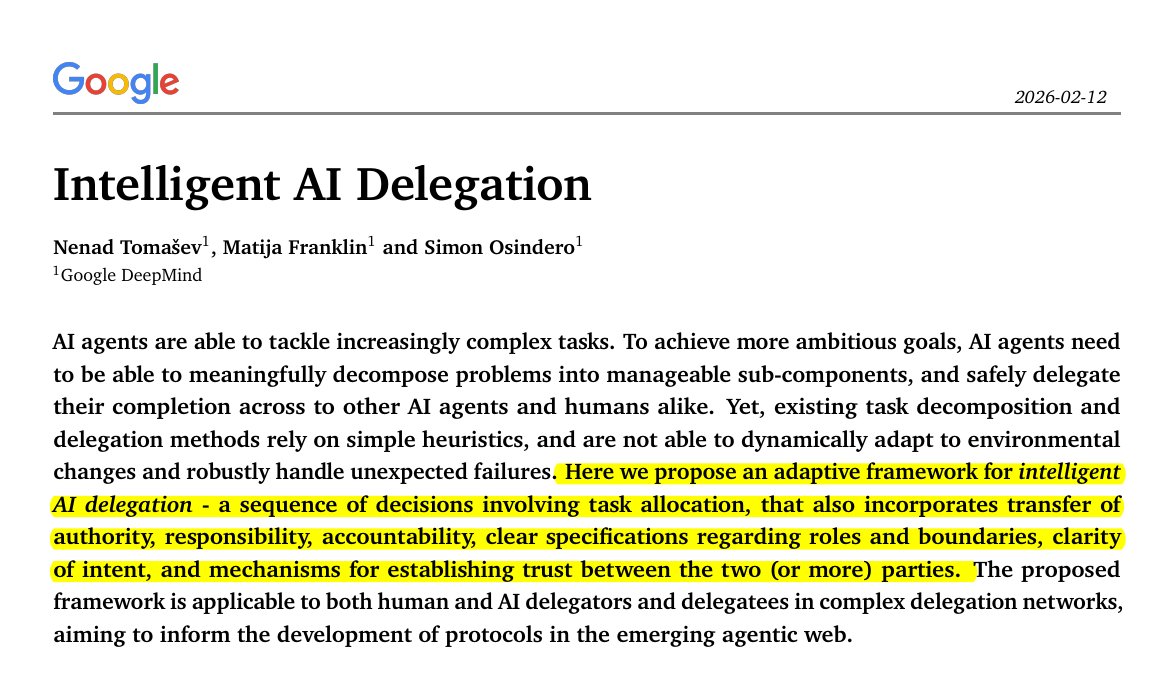

Google DeepMind has proposed a framework, called "Intelligent AI Delegation," that could reshape how AI agents operate and collaborate. It sets out a structured approach for AI agents to safely delegate tasks to other AI agents and to humans, targeting long-standing problems of accountability, transparency, and reliability in multi-agent systems.

The Five Pillars of Intelligent Delegation

The framework operates across five core pillars that together create a robust infrastructure for AI collaboration:

Dynamic Assessment: Unlike static systems that assume fixed capabilities, this approach enables agents to evaluate each other's real-time competencies before delegating tasks. This continuous assessment ensures that tasks are assigned to agents with appropriate skills for the current context.
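As a rough illustration of continuous assessment, the sketch below keeps a rolling window of recent outcomes per agent and only delegates to agents whose recent success rate clears a threshold. The names `CapabilityTracker` and `pick_delegate`, the window size, and the threshold are all assumptions made for this example; the paper's actual assessment mechanism is not described here.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CapabilityTracker:
    """Rolling record of one agent's recent outcomes (hypothetical helper)."""
    window: int = 5
    outcomes: deque = field(default_factory=deque)

    def record(self, success: bool) -> None:
        # Keep only the most recent `window` outcomes so old results age out.
        self.outcomes.append(success)
        if len(self.outcomes) > self.window:
            self.outcomes.popleft()

    def score(self) -> float:
        # With no history, return a neutral prior instead of 0 or 1.
        if not self.outcomes:
            return 0.5
        return sum(self.outcomes) / len(self.outcomes)


def pick_delegate(trackers: dict, threshold: float = 0.6) -> Optional[str]:
    """Return the best-scoring agent at or above the threshold, or None."""
    eligible = {name: t.score() for name, t in trackers.items()
                if t.score() >= threshold}
    if not eligible:
        return None
    return max(eligible, key=eligible.get)
```

Because the score is recomputed from recent outcomes only, an agent that was reliable last week but is failing now drops out of the eligible set without any manual intervention.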

Adaptive Execution: Perhaps most critically, the system allows for mid-task re-delegation when things go wrong. This means that if an agent encounters unexpected challenges or demonstrates inadequate performance during task execution, responsibility can be dynamically transferred to another capable agent without starting from scratch.
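One way to picture mid-task re-delegation is a runner that checkpoints completed steps and hands the remainder to the next agent when the current one fails. This is a minimal sketch under assumed names (`StepFailed`, `run_with_redelegation`) and an assumed model of a task as an ordered list of steps; it is not the mechanism specified in the paper.

```python
from typing import Callable, Sequence


class StepFailed(Exception):
    """Raised by an agent that cannot complete a step (hypothetical)."""


# Assumed agent interface: takes a step description, returns its result.
Agent = Callable[[str], str]


def run_with_redelegation(steps: Sequence[str],
                          agents: Sequence[Agent]) -> list:
    results = []     # checkpoint: results of steps completed so far
    agent_idx = 0    # index of the agent currently responsible
    while len(results) < len(steps):
        if agent_idx >= len(agents):
            raise RuntimeError("no remaining agent could finish the task")
        step = steps[len(results)]
        try:
            results.append(agents[agent_idx](step))
        except StepFailed:
            # Hand the failed step to the next agent; finished steps are kept.
            agent_idx += 1
    return results
```

The key design choice is that the checkpoint (`results`) lives with the delegator rather than the delegate, so a replacement agent resumes at the failed step instead of repeating earlier work.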

Structural Transparency: Every delegation decision and execution step is auditable, which addresses the "black box" problem at the level of who delegated what to whom, and why. This transparency enables troubleshooting, accountability, and continuous improvement of the delegation process.
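An auditable delegation trail could be kept as an append-only log in which each entry commits to the one before it, so later tampering is detectable. Hash chaining is an assumption chosen for this sketch, not something the source attributes to the framework, and the event fields used in the usage example are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev: str, event: dict) -> str:
    # Serialize deterministically so the same content always hashes the same.
    body = json.dumps({"prev": prev, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    """Append an event, linking it to the hash of the previous entry."""
    prev = log[-1]["hash"] if log else GENESIS
    entry = {"prev": prev, "event": event, "hash": _digest(prev, event)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["event"]):
            return False
        prev = entry["hash"]
    return True
```

For example, after appending `{"delegator": "planner", "delegate": "coder", "task": "t1"}` and a follow-up event, `verify(log)` returns `True`; changing any field of an earlier event makes it return `False`.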

Scalable Market Coordination: The framework incorporates decentralized bidding systems where agents can compete for tasks based on their capabilities and availability. This market-based approach creates efficient resource allocation across complex multi-agent ecosystems.
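The bidding idea can be sketched as a simple sealed-bid selection: agents submit a capability estimate and a cost, and the delegator awards the task to the cheapest available bidder above a capability floor. The `Bid` fields, the 0.0 to 1.0 capability scale, and the tie-breaking rule are assumptions for illustration only; a real decentralized market would also need to handle bid verification and strategic behavior.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Bid:
    agent: str
    capability: float       # self-reported fit for the task, 0.0 to 1.0 (assumed)
    cost: float             # asking price in arbitrary units
    available: bool = True  # whether the agent can start now


def award_task(bids: list, min_capability: float) -> Optional[Bid]:
    """Return the cheapest available bid that meets the capability floor."""
    qualified = [b for b in bids
                 if b.available and b.capability >= min_capability]
    if not qualified:
        return None
    # Lowest cost wins; ties go to the more capable bidder.
    return min(qualified, key=lambda b: (b.cost, -b.capability))
```

Filtering on capability before comparing cost keeps a cheap but unqualified agent from winning purely on price.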

Systemic Resilience: The system includes hard stops and safeguards to prevent cascading failures—a critical feature as AI systems become increasingly interconnected and their failures potentially more consequential.
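A common pattern for this kind of hard stop is a circuit breaker, which stops forwarding work to a delegate after repeated failures so that one failing agent cannot drag down its callers. The sketch below is a generic circuit breaker with assumed names and thresholds, offered as an analogy rather than as the safeguard the paper defines.

```python
class CircuitOpen(Exception):
    """Raised when the breaker refuses to forward a call (hypothetical)."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3):
        self.max_failures = max_failures
        self.failures = 0  # consecutive failures seen so far

    @property
    def is_open(self) -> bool:
        return self.failures >= self.max_failures

    def call(self, fn, *args):
        # Hard stop: once open, the delegate is not invoked at all.
        if self.is_open:
            raise CircuitOpen("delegate disabled after repeated failures")
        try:
            result = fn(*args)
        except Exception:
            self.failures += 1
            raise
        self.failures = 0  # any success resets the consecutive-failure count
        return result
```

This minimal version never closes again on its own; a production breaker would typically add a cool-down period and a trial call before resuming normal traffic.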

Learning from Aviation and Medicine

One of the most insightful aspects of DeepMind's approach is its borrowing of concepts from high-stakes human domains like aviation and medicine. The researchers identified that AI agents often blindly follow bad instructions in ways analogous to how junior doctors might not question senior surgeons—even when they suspect something might be wrong.

This phenomenon, known in human systems as "authority gradient" problems, has direct parallels in AI systems where agents might defer to seemingly authoritative instructions without proper critical evaluation. DeepMind's framework builds specific mechanisms to address this, creating systems where agents can appropriately question, verify, or reject instructions when necessary.
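To make the idea concrete, the sketch below shows a receiving agent that reviews an instruction on its content before acting, and deliberately ignores how senior the sender is. The `Decision` values, the `Instruction` fields, and the two policy sets are hypothetical; the source does not describe the framework's actual verification rules.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ACCEPT = "accept"
    CLARIFY = "clarify"   # push back and ask the delegator for justification
    REJECT = "reject"


@dataclass
class Instruction:
    action: str
    sender_rank: int      # seniority of the delegator; intentionally unused below
    rationale: str = ""


# Hypothetical policy tables for this example.
FORBIDDEN = {"delete_backups"}
NEEDS_RATIONALE = {"transfer_funds"}


def review(instr: Instruction) -> Decision:
    """Judge an instruction by what it asks for, not by who sent it."""
    if instr.action in FORBIDDEN:
        return Decision.REJECT
    if instr.action in NEEDS_RATIONALE and not instr.rationale.strip():
        return Decision.CLARIFY
    return Decision.ACCEPT
```

Here `review(Instruction("delete_backups", sender_rank=10))` is rejected regardless of rank, which mirrors the goal of flattening the authority gradient.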

Practical Implementation and Industry Alignment

This isn't merely theoretical work. The DeepMind team has specifically mapped their framework onto existing industry protocols including:

- MCP (Model Context Protocol)

- A2A (Agent2Agent Protocol)

- AP2 (Agent Payments Protocol)

By analyzing what each existing protocol lacks, the researchers have outlined what has been described as "the infrastructure layer the agentic web has been waiting for." This practical orientation suggests that implementation could proceed relatively quickly, with the framework supplying missing pieces rather than requiring complete system overhauls.

Implications for the Future of AI Systems

The Intelligent AI Delegation framework arrives at a critical moment in AI development. As AI agents become more sophisticated and numerous, the challenges of coordination, accountability, and reliability have become increasingly apparent. Previous approaches often relied on brittle heuristics or ad-hoc handoff mechanisms that created "accountability black holes"—situations where it was impossible to determine why a task failed or who was responsible.

DeepMind's systematic approach addresses these issues head-on, potentially enabling:

- More complex AI ecosystems: With reliable delegation, AI systems can tackle more ambitious problems requiring diverse capabilities

- Human-AI collaboration: The framework explicitly includes humans in the delegation chain, creating more natural and effective human-AI teamwork

- Enterprise adoption: The transparency and accountability features address critical concerns for business deployment of AI systems

- Safety advancements: By preventing cascading failures and enabling mid-course corrections, the framework could significantly improve AI safety

The Road Ahead

While the paper represents a significant theoretical and architectural advance, real-world implementation will be the true test. The decentralized bidding systems, real-time capability assessment, and transparent auditing mechanisms all introduce computational overhead that will need to be balanced against performance requirements.

Nevertheless, DeepMind's Intelligent AI Delegation framework signals a shift in AI development, from individual intelligent agents to intelligently coordinated agent ecosystems. As the research community and industry begin to implement and build on these ideas, we may look back on this work as a foundation for truly collaborative AI systems.

Source: Google DeepMind research paper on Intelligent AI Delegation, as reported by @hasantoxr on X/Twitter.