An X user, @mweinbach, reports watching OpenAI's GPT-5.4 model carry out a lengthy, autonomous optimization task. According to the post, the model worked for three consecutive hours to optimize an embedding model for the Qualcomm Neural Processing Unit (NPU).

Key Takeaways

- An X user observed GPT-5.4 working for three hours to optimize an embedding model specifically for the Qualcomm NPU.

- This suggests a practical application of advanced AI for hardware-specific model tuning.

What Happened

The report is a simple observation: a user watched GPT-5.4 work on a single task for an extended period. The stated goal was to optimize an embedding model (a type of AI model that converts data into numerical vectors) for execution on the NPU, Qualcomm's specialized AI accelerator hardware. The post gives no further technical detail, but a three-hour runtime suggests a non-trivial, multi-step process, far beyond a simple prompt-and-response interaction.

Context

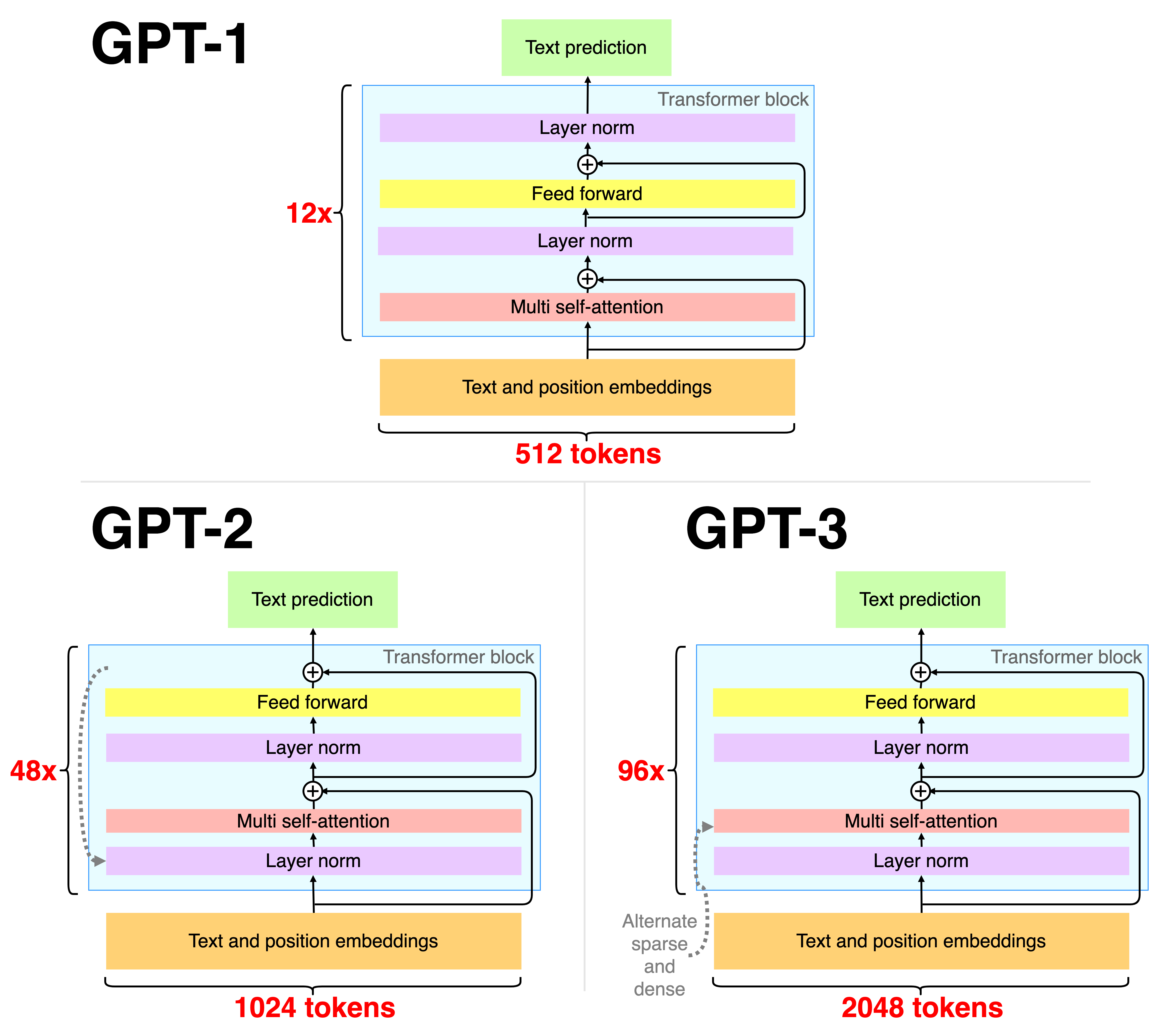

This anecdote points to the evolving use of large language models (LLMs) beyond text generation. Instead of being prompted for a single answer, the model appears to have been given, or to have started on its own, a complex engineering optimization job. Embedding models are crucial for retrieval-augmented generation (RAG), semantic search, and clustering. Optimizing them for a specific hardware architecture like Qualcomm's NPU, which is common in smartphones and upcoming AI PCs, can substantially improve inference speed and energy efficiency, both key metrics for edge and mobile AI.
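To make the semantic search use case above concrete, here is a minimal sketch of ranking documents by cosine similarity between embedding vectors. The three-dimensional vectors and document titles are invented for illustration; a real embedding model would produce much longer vectors, and nothing here reflects the model mentioned in the post.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical, hand-written "embeddings" used only for illustration.
documents = {
    "battery life tips": [0.9, 0.1, 0.0],
    "camera settings guide": [0.1, 0.9, 0.1],
    "power saving mode": [0.8, 0.2, 0.1],
}

def search(query_vector, corpus, top_k=2):
    """Return the top_k document titles ranked by cosine similarity."""
    ranked = sorted(
        corpus,
        key=lambda title: cosine_similarity(query_vector, corpus[title]),
        reverse=True,
    )
    return ranked[:top_k]

# Stand-in for the embedding of a query such as "save battery".
query = [1.0, 0.0, 0.0]
results = search(query, documents)
print(results)
```

On a device, the expensive step is producing the vectors in the first place, which is why running the embedding model efficiently on the NPU matters.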

Qualcomm has been aggressively positioning its Snapdragon platforms with integrated NPUs as the foundation for on-device AI, competing directly with Apple's Neural Engine and Intel's AI accelerators. Efficient models are critical for this vision.

gentic.news Analysis

This user report, while thin on technical details, aligns with two major, converging trends we've been tracking. First, it exemplifies the shift from LLMs as conversational tools to LLMs as autonomous computational engines. This is not about chatting with GPT; it's about deploying it as a problem-solving agent that operates over extended timescales. We observed the early seeds of this in 2024 with projects like Devin from Cognition AI and OpenAI's own rumored "Strawberry" project, which focused on deep research and planning capabilities. A three-hour optimization task fits squarely into this paradigm of AI agents performing open-ended, complex work.

Second, it highlights the intensifying hardware-software co-design race. As we covered in our analysis of the MediaTek Dimensity 9400 launch, the performance gap between AI chips is increasingly closed by software optimizations. An LLM like GPT-5.4, with its broad coding and systems knowledge, is a potent tool for automatically generating these optimizations. It can potentially explore a vast space of kernel configurations, quantization schemes, and graph compilations tailored to the Qualcomm NPU's microarchitecture, a task that can take human engineers days or weeks. If this capability is productized, it could significantly lower the barrier to deploying state-of-the-art models on edge devices, accelerating Qualcomm's ecosystem growth against competitors like Apple and Intel.
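The kind of search described above can be sketched as a loop that tries candidate configurations and keeps the fastest one that still meets an accuracy floor. Everything in this sketch is an assumption made for illustration: the option names do not correspond to any real Qualcomm tool, and the latency and accuracy functions are stand-ins for compiling the model and measuring it on the target device. The post does not describe how GPT-5.4 actually approached the task.

```python
import itertools

# Hypothetical search space; the option names are illustrative only.
SEARCH_SPACE = {
    "weight_bits": [16, 8, 4],
    "tile_size": [16, 32, 64],
    "fuse_ops": [False, True],
}

def simulated_latency_ms(config):
    """Stand-in cost model. A real loop would compile the model with this
    configuration and time it on the device instead."""
    latency = 10.0 * config["weight_bits"] / 16
    latency += abs(config["tile_size"] - 32) / 16
    if config["fuse_ops"]:
        latency *= 0.8
    return latency

def simulated_accuracy(config):
    """Stand-in quality metric: lower precision costs some accuracy."""
    return {16: 0.95, 8: 0.94, 4: 0.85}[config["weight_bits"]]

def search(space, min_accuracy):
    """Try every configuration; keep the fastest one above the accuracy floor."""
    best = None
    keys = list(space)
    for values in itertools.product(*(space[k] for k in keys)):
        config = dict(zip(keys, values))
        if simulated_accuracy(config) < min_accuracy:
            continue  # too lossy, discard
        latency = simulated_latency_ms(config)
        if best is None or latency < best[1]:
            best = (config, latency)
    return best

best_config, best_latency = search(SEARCH_SPACE, min_accuracy=0.90)
print(best_config, best_latency)
```

An exhaustive grid is only feasible for tiny spaces like this one. With real compile-and-measure steps taking minutes each, a multi-hour run is plausible even for a modest number of candidates.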

Frequently Asked Questions

What is a Qualcomm NPU?

The Qualcomm Neural Processing Unit (NPU) is a dedicated hardware accelerator integrated into the company's Snapdragon system-on-chips (SoCs). It is designed specifically to run AI and machine learning models efficiently, offering better performance and lower power consumption compared to running the same models on the CPU or GPU. It's a key component in smartphones, laptops, and other devices for enabling on-device AI features.

What does it mean to optimize a model for an NPU?

Optimizing a model for an NPU involves modifying the model's architecture or its execution plan to fully leverage the specific hardware's capabilities. This can include techniques like quantization (reducing the numerical precision of weights and activations), operator fusion (combining multiple operations into one), and tailoring the computation graph to the NPU's parallel processing units. The goal is to maximize throughput while minimizing latency and power consumption during inference.
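As an illustration of the quantization technique mentioned above, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. It is a generic textbook scheme, not Qualcomm's toolchain, and the sample weights are made up.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in
    [-127, 127] using a single scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float values from the integer representation."""
    return [q * scale for q in quantized]

# Made-up weights for illustration.
weights = [0.50, -1.27, 0.03, 1.00]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding error per weight is bounded by half the scale factor.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, scale, max_error)
```

Each weight now fits in one byte instead of four, which reduces memory traffic and lets integer hardware units do the arithmetic. The cost is the rounding error, which is why accuracy has to be re-checked after quantizing.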

Is GPT-5.4 an AI agent?

Based on this report and other emerging capabilities, GPT-5.4 appears to have significant agentic functionalities. An AI agent can perceive its environment, set goals, and take a series of actions over time to achieve those goals. A three-hour optimization task suggests GPT-5.4 can plan and execute a multi-step computational process autonomously, moving beyond single-turn response generation. This aligns with the industry's broader push toward agentic AI systems.

Why would you use an LLM to optimize another model?

Large language models have extensive knowledge of code, algorithms, and system architectures. They can be prompted or tasked to write, analyze, and refine optimization scripts. Using an LLM for this can automate a highly specialized and iterative process, potentially discovering novel optimization strategies or adapting a model to new, undocumented hardware features faster than a human engineer could by hand.