In the rapidly evolving landscape of artificial intelligence, autonomous agents are increasingly making decisions and taking actions that have real-world consequences. From executing financial transactions to modifying production infrastructure, these AI systems operate with growing independence. Yet a fundamental question remains unanswered: How do we prove, beyond reasonable doubt, what an AI agent actually did?

A new open-source project called GuardClaw aims to provide exactly that proof. Developed by viruswami5511 and recently showcased on Hacker News, GuardClaw implements cryptographically verifiable execution logs designed specifically for AI agents. This isn't just another logging system. It is a protocol for tamper-evident records of what happened and in what sequence, so that any after-the-fact edit to the log can be detected.

The Accountability Gap in AI Systems

Traditional logging systems share a critical weakness: their records are mutable. Logs may live in files written in append mode, in databases, or in observability pipelines. In every case, anyone with sufficient access can edit or delete entries after the fact, and nothing in the log itself reveals the change. The result is an accountability gap. When something goes wrong, we have only the system's own claim about what happened, not verifiable evidence.

Consider the scenario presented in GuardClaw's documentation: "Imagine a trading bot loses $2M — and the only evidence is logs that can be edited." In financial services, healthcare, critical infrastructure, and other high-stakes domains, this uncertainty is unacceptable. As AI agents gain more autonomy and capability, the need for trustworthy audit trails becomes increasingly urgent.

How GuardClaw Works: Cryptographic Chaining and Signatures

GuardClaw implements GEF-SPEC-1.0 (Guard Execution Format), a minimal protocol that combines established standards and cryptographic primitives:

- RFC 8785 (JSON Canonicalization Scheme) envelopes ensure that each entry serializes to exactly the same bytes every time, so hashes and signatures are reproducible

- SHA-256 causal hash chaining makes the sequence tamper-evident: each entry carries the hash of the previous one, so altering, removing, or reordering an entry breaks at least one link in the chain

- Ed25519 per-entry signatures show that each entry was produced by the holder of the signing key and has not been modified since it was signed

- Offline verification via CLI allows anyone with the public key to verify the entire history without accessing the original runtime environment
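The following minimal Python sketch makes canonicalization and causal hash chaining concrete. It is not GuardClaw's code. The entry fields (`seq`, `event`, `payload`, `prev_hash`), the all-zeros `GENESIS` value used for the first entry, and the helper names are all hypothetical. In addition, `json.dumps` with sorted keys only approximates RFC 8785, which also pins down number formatting and key ordering for non-ASCII keys.

```python
import hashlib
import json

GENESIS = "0" * 64  # hypothetical "previous hash" for the first entry


def canonical_bytes(obj: dict) -> bytes:
    # Approximation of RFC 8785 (JCS): sorted keys, no whitespace, UTF-8.
    # Real JCS also fixes number formatting and UTF-16 key ordering.
    return json.dumps(obj, sort_keys=True, separators=(",", ":"),
                      ensure_ascii=False).encode("utf-8")


def entry_hash(entry: dict) -> str:
    return hashlib.sha256(canonical_bytes(entry)).hexdigest()


def append(ledger: list, event: str, payload: dict) -> dict:
    # Each new entry embeds the hash of the entry before it (the causal link).
    prev = entry_hash(ledger[-1]) if ledger else GENESIS
    entry = {"seq": len(ledger), "event": event,
             "payload": payload, "prev_hash": prev}
    ledger.append(entry)
    return entry


def broken_links(ledger: list) -> list:
    # Sequence numbers of entries whose prev_hash no longer matches
    # the recomputed hash of their predecessor.
    bad, prev = [], GENESIS
    for entry in ledger:
        if entry["prev_hash"] != prev:
            bad.append(entry["seq"])
        prev = entry_hash(entry)
    return bad


# Key order and whitespace no longer change the bytes that get hashed.
assert canonical_bytes({"b": 1, "a": 2}) == canonical_bytes({"a": 2, "b": 1})

ledger = []
append(ledger, "execution", {"tool": "place_order", "qty": 100})
append(ledger, "execution", {"tool": "place_order", "qty": 250})
append(ledger, "execution", {"tool": "cancel_order", "order_id": 7})
print(broken_links(ledger))  # []

ledger[1]["payload"]["qty"] = 25  # simulate an after-the-fact edit
print(broken_links(ledger))  # [2]
```

Only the link from the entry after the edited one breaks. A bare hash chain can also be rebuilt by anyone who recomputes every later `prev_hash`. The per-entry signatures are what prevent such a rewrite, which is also why a compromised signing key lets history be rewritten.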

The resulting ledger is stored as a plain JSONL (JSON Lines) file—a simple, portable format that requires no specialized servers or infrastructure. This design choice emphasizes accessibility and interoperability.

GuardClaw's documentation also demonstrates tamper detection: a demo deliberately manipulates a ledger, and the verifier flags the affected entries:

```text
[2] execution SIG:FAIL CHAIN:OK
[3] execution SIG:OK CHAIN:BREAK
Violations: 2 — TAMPERED
```

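The two checks catch different kinds of tampering. A signature failure means an entry's content no longer matches what was signed. A chain break means an entry's link to its predecessor no longer matches. The chain check therefore also exposes deleted, replayed, or reordered entries whose individual signatures would still verify.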
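The sample output is consistent with a single edit to entry 2. Entry 2's content no longer matches its signature, and entry 3's stored hash of entry 2 no longer matches entry 2's new hash. The sketch below reproduces that pattern under stated assumptions.

It substitutes HMAC-SHA256 from Python's standard library for Ed25519 so that it runs without third-party packages. HMAC is symmetric, so unlike Ed25519 the verifier would need the secret key. The key, entry layout, and report format are hypothetical, modeled on the output shown rather than on GEF-SPEC-1.0.

```python
import hashlib
import hmac
import json

# HYPOTHETICAL stand-in for an Ed25519 signing key. HMAC is symmetric,
# so the verifier needs this same secret (unlike Ed25519's public key).
KEY = b"demo-only-secret"
GENESIS = "0" * 64  # hypothetical "previous hash" for the first entry


def canonical_bytes(obj: dict) -> bytes:
    # Sorted keys, no whitespace: an approximation of RFC 8785.
    return json.dumps(obj, sort_keys=True, separators=(",", ":"),
                      ensure_ascii=False).encode("utf-8")


def sign(body: dict) -> str:
    return hmac.new(KEY, canonical_bytes(body), hashlib.sha256).hexdigest()


def link_hash(entry: dict) -> str:
    return hashlib.sha256(canonical_bytes(entry)).hexdigest()


def make_ledger(payloads: list) -> list:
    ledger, prev = [], GENESIS
    for seq, payload in enumerate(payloads, start=1):
        body = {"seq": seq, "event": "execution",
                "payload": payload, "prev_hash": prev}
        entry = {"body": body, "sig": sign(body)}
        ledger.append(entry)
        prev = link_hash(entry)
    return ledger


def verify(ledger: list):
    # Checks every entry twice: its own signature, and its link to the
    # entry before it. Each failed check counts as one violation.
    report, violations, prev = [], 0, GENESIS
    for entry in ledger:
        body = entry["body"]
        sig_ok = hmac.compare_digest(entry["sig"], sign(body))
        chain_ok = body["prev_hash"] == prev
        violations += int(not sig_ok) + int(not chain_ok)
        report.append(f"[{body['seq']}] {body['event']} "
                      f"SIG:{'OK' if sig_ok else 'FAIL'} "
                      f"CHAIN:{'OK' if chain_ok else 'BREAK'}")
        prev = link_hash(entry)
    return report, violations, "TAMPERED" if violations else "VALID"


ledger = make_ledger([{"qty": 100}, {"qty": 250}, {"qty": 75}])
ledger[1]["body"]["payload"]["qty"] = 2500  # edit entry 2 without the key

report, violations, status = verify(ledger)
print("\n".join(report))
print(f"Violations: {violations} - {status}")
# [1] execution SIG:OK CHAIN:OK
# [2] execution SIG:FAIL CHAIN:OK
# [3] execution SIG:OK CHAIN:BREAK
# Violations: 2 - TAMPERED
```

Swapping two entries instead would leave every signature valid but break the chain. That is exactly the case the chain check exists for.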

Performance and Practical Considerations

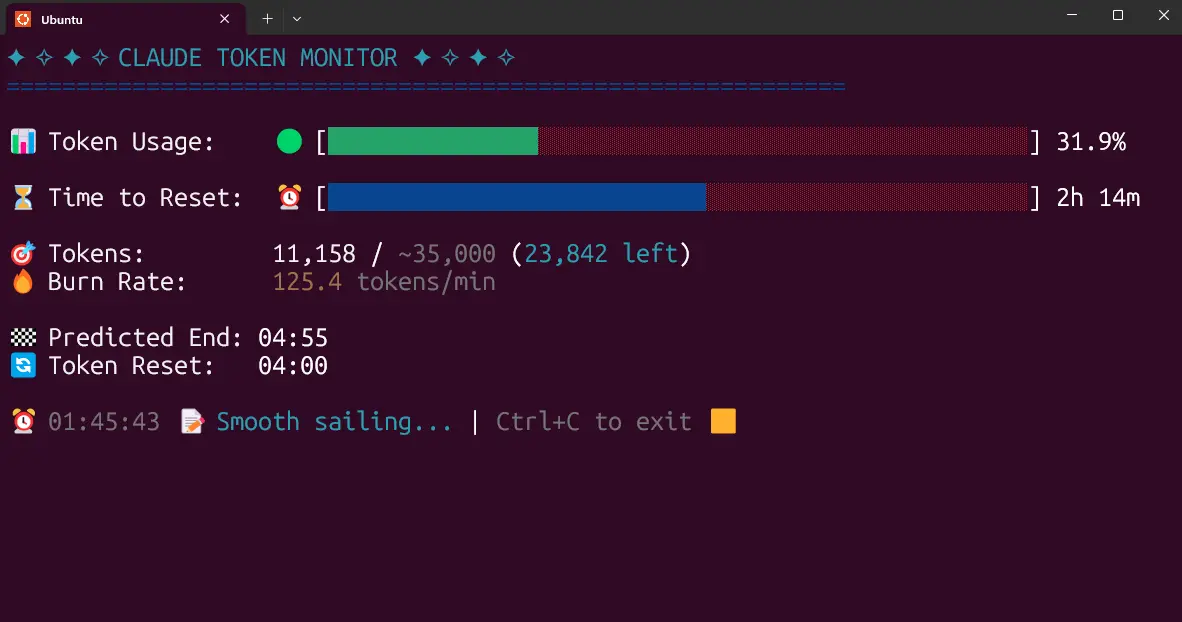

For a system that hashes and signs every entry, GuardClaw reports strong benchmark figures:

- ~762 writes per second (for 1 million entries, single-threaded)

- ~9,000 full verifications per second

- ~39 MB of RAM for streaming verification

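At ~762 writes per second, a single-threaded writer can record roughly 2.7 million entries per hour (762 × 3,600 = 2,743,200). That is likely ample for agents that log discrete actions such as tool calls or transactions, although higher-frequency event streams may approach that ceiling.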
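Streaming verification is possible because a chain verifier only has to carry the previous entry's hash from one line to the next, so its memory use need not grow with ledger size. Below is a minimal sketch of writing and stream-verifying a JSONL ledger. It uses a hypothetical entry layout (`seq`, `payload`, `prev_hash`), not GEF-SPEC-1.0's actual fields, and checks hash links only. A real verifier would also check each entry's signature.

```python
import hashlib
import io
import json

GENESIS = "0" * 64  # hypothetical "previous hash" for the first entry


def canonical_bytes(obj: dict) -> bytes:
    # Sorted keys, no whitespace: an approximation of RFC 8785.
    return json.dumps(obj, sort_keys=True, separators=(",", ":"),
                      ensure_ascii=False).encode("utf-8")


def write_ledger(out, payloads) -> None:
    # JSONL: one canonical JSON object per line, each linked to the last.
    prev = GENESIS
    for seq, payload in enumerate(payloads):
        line = canonical_bytes({"seq": seq, "payload": payload,
                                "prev_hash": prev})
        out.write(line.decode("utf-8") + "\n")
        prev = hashlib.sha256(line).hexdigest()


def stream_verify(lines):
    # Consumes lines one at a time and keeps only the previous entry's
    # hash (plus any broken-link numbers), so memory stays flat as the
    # ledger grows. Checks hash links only; signatures are not checked.
    prev, checked, breaks = GENESIS, 0, []
    for raw in lines:
        if not raw.strip():
            continue  # tolerate blank lines
        entry = json.loads(raw)
        if entry["prev_hash"] != prev:
            breaks.append(entry["seq"])
        prev = hashlib.sha256(canonical_bytes(entry)).hexdigest()
        checked += 1
    return checked, breaks


buf = io.StringIO()
write_ledger(buf, ({"step": i} for i in range(10_000)))
buf.seek(0)
print(stream_verify(buf))  # (10000, [])
```

The in-memory `StringIO` buffer is only for the demo. With a file on disk, pass the open file object instead, e.g. `with open("your_ledger.jsonl", encoding="utf-8") as f: stream_verify(f)`, since file objects yield one line at a time.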

However, the project acknowledges an important limitation: "if the signing key is compromised, past history can be rewritten." This highlights the critical importance of proper key management, which the protocol intentionally leaves out of scope. In practice, implementing GuardClaw would require careful consideration of key rotation, storage, and recovery procedures.

The Broader Context: AI Accountability and Trust

GuardClaw emerges at a pivotal moment in AI development. As systems transition from research projects to production deployments with real-world impact, questions of accountability, auditability, and trust become paramount. The technology addresses several pressing needs:

Regulatory Compliance: Industries like finance and healthcare face strict regulatory requirements for audit trails. GuardClaw could help AI systems meet these requirements by providing mathematically verifiable records.

Incident Investigation: When AI systems fail or behave unexpectedly, investigators need reliable data about what happened. Cryptographic logs let them confirm that entries were not altered after they were written, provided the signing key was not compromised.

Multi-Party Trust: In scenarios where AI agents interact with multiple stakeholders (different departments, organizations, or regulatory bodies), cryptographic proof provides a neutral, verifiable source of truth.

Long-Term Archival: For systems that operate over extended periods, cryptographic chaining keeps historical records verifiable even as technologies and organizations change, provided the public key and the format specification are preserved alongside the ledger.

Implementation and Adoption Challenges

While GuardClaw presents an elegant technical solution, several challenges remain for widespread adoption:

Integration Complexity: Adding cryptographic logging to existing AI systems requires careful integration to ensure all relevant actions are captured without disrupting core functionality.

Key Management: As noted in the documentation, key management is "intentionally out of scope," yet it's crucial for real-world security. Organizations would need to develop robust key management practices.

Performance Trade-offs: While GuardClaw's performance is impressive, cryptographic operations still add overhead compared to traditional logging. Teams would need to evaluate whether this trade-off is acceptable for their use cases.

Standardization: As an individual project, GuardClaw represents one approach. Widespread adoption might require industry standardization around cryptographic audit trails for AI systems.

The Future of Verifiable AI

GuardClaw represents more than just a technical tool—it points toward a future where AI systems are designed with verifiability and accountability as first-class requirements. As autonomous agents take on increasingly important roles, society will demand mechanisms to ensure they operate transparently and accountably.

The project's open-source nature and language-neutral protocol specification (GEF-SPEC-1.0) suggest potential for broader adoption and community development. By focusing on a minimal, interoperable design, the creators have lowered barriers to experimentation and implementation.

Looking forward, we might see cryptographic audit trails like GuardClaw become standard components of enterprise AI systems, particularly in regulated industries. They could also enable new forms of AI governance, where autonomous actions are automatically verified against policies and constraints.

Getting Started with GuardClaw

For those interested in exploring GuardClaw, the project is available on PyPI and GitHub:

```shell
pip install guardclaw
guardclaw verify your_ledger.jsonl
```

The documentation includes a demonstration showing intentional tampering and verification failure, allowing users to understand exactly how the system detects manipulation.

As AI continues its rapid advancement, tools like GuardClaw remind us that technical capability must be matched by accountability mechanisms. By providing cryptographically verifiable execution logs, this project takes an important step toward making autonomous AI systems more trustworthy, auditable, and responsible.

Source: GuardClaw GitHub Repository