The Innovation — What the Research Reports

A new study, published as a preprint on arXiv, provides a rigorous, data-driven answer to a critical but often overlooked question in digital commerce: Does the order in which you ask for feedback change the feedback itself?

The research, titled "Intuition First or Reflection Before Judgment? The Impact of Evaluation Sequence on Consumer Ratings," investigates the psychological and behavioral consequences of two common interface designs:

- Rating-First: The user is prompted to select a star rating (e.g., 1-5) before they can write any text.

- Review-First: The user writes their textual review first, and then selects a star rating.

Through three controlled experiments and a large-scale analysis of secondary data from Yelp (Rating-First) and Letterboxd (Review-First), the researchers uncovered a clear and significant polarization effect.

Key Findings:

- Context-Dependent Polarization: In high-quality service or product contexts, a Rating-First sequence leads to higher overall ratings than Review-First. Conversely, in low-quality contexts, Rating-First leads to significantly lower ratings than Review-First.

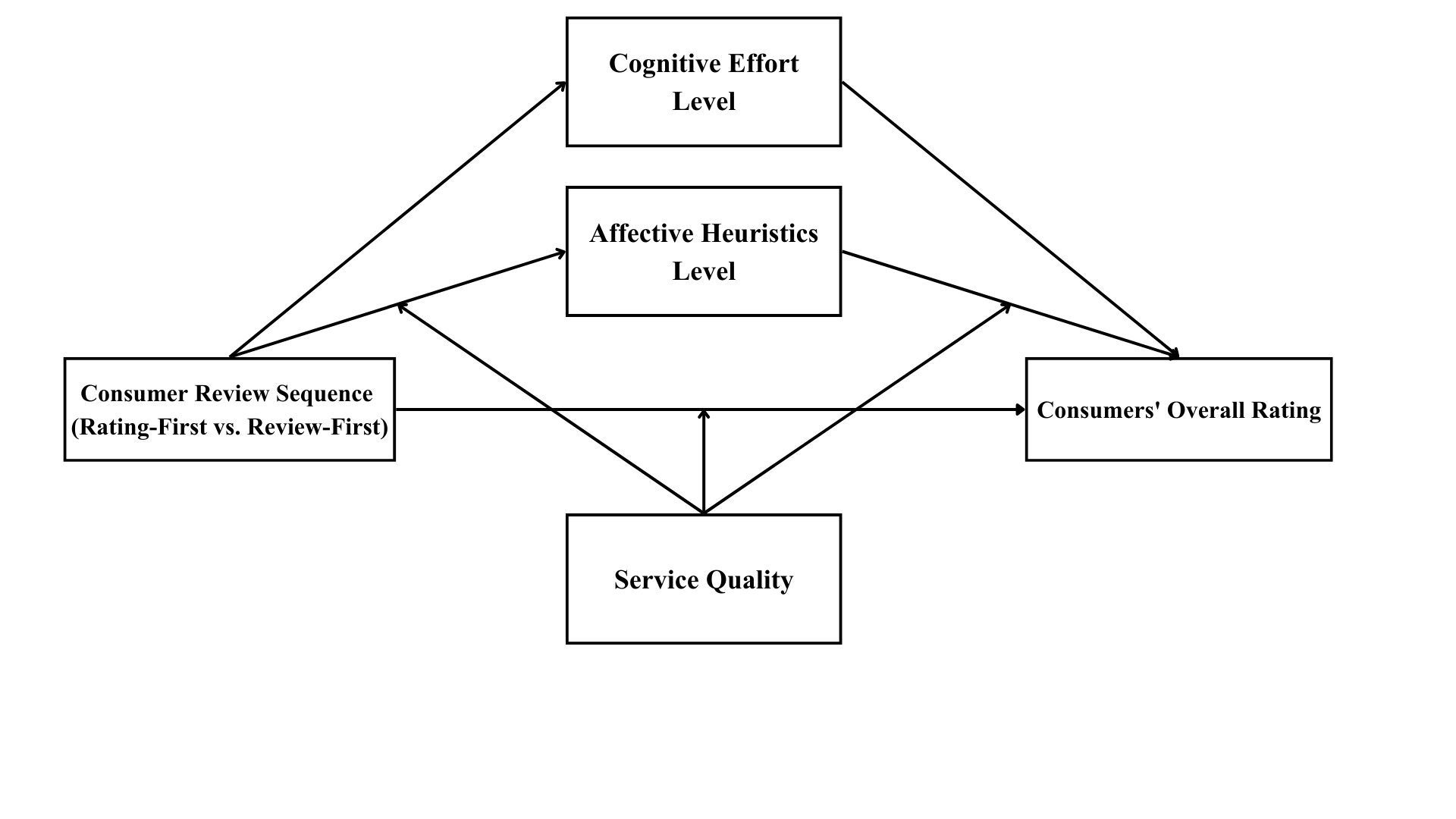

- Psychological Mechanism: This effect is driven by a serial mediation path. The Rating-First design triggers an initial affective heuristic—a gut-feeling, emotional response—which then reduces subsequent cognitive effort during the review-writing process. The user's initial snap judgment anchors their entire evaluation, limiting nuanced reflection.

- Product-Type Moderation: The polarization is amplified for hedonic products (those consumed for pleasure, like luxury fashion, fine dining, or entertainment) compared to utilitarian products (those consumed for a functional purpose, like tools or basic appliances).

- Real-World Evidence: The data analysis showed that Yelp's Rating-First interface correlates with a polarized, bimodal rating distribution (lots of 1-star and 5-star reviews). In contrast, Letterboxd's Review-First design correlates with ratings that are more concentrated and less extreme.
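The serial mediation path can be written as a standard three-equation model, the structure of Hayes's PROCESS Model 6. This is an illustration under assumptions, not the preprint's reported specification. $X$ is a hypothetical sequence indicator (1 = Rating-First, 0 = Review-First), $M_1$ is reliance on the affective heuristic, $M_2$ is cognitive effort while writing, and $Y$ is the rating outcome, taken here as its extremity (distance from the scale midpoint) so that one sign captures polarization in both high- and low-quality contexts.

$$
\begin{aligned}
M_1 &= i_1 + a_1 X + \varepsilon_1 \\
M_2 &= i_2 + a_2 X + d_{21} M_1 + \varepsilon_2 \\
Y &= i_3 + c' X + b_1 M_1 + b_2 M_2 + \varepsilon_3 \\
\text{serial indirect effect} &= a_1 \, d_{21} \, b_2
\end{aligned}
$$

Under the mechanism described above, $a_1 > 0$ (Rating-First triggers the affective heuristic) and $d_{21} < 0$ (the heuristic reduces effort). If lower effort produces more extreme ratings, then $b_2 < 0$ and the product $a_1 d_{21} b_2$ is positive: Rating-First increases extremity through this path. The hedonic/utilitarian moderation would enter as interaction terms such as $X \times W$, with $W$ coding product type.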

The core conclusion is that interface design is not neutral. It actively shapes the data—the ratings—that every subsequent customer uses to make decisions and that algorithms use for ranking and recommendations.

Why This Matters for Retail & Luxury

For luxury and premium retail brands, online ratings and reviews are not just social proof; they are a core component of brand equity and perceived value. This research has direct implications for several key areas:

1. E-commerce & Marketplace Platform Strategy:

If your brand sells through third-party marketplaces (e.g., Farfetch, Net-a-Porter, Mytheresa) or runs its own storefront with a third-party review app (e.g., on Shopify), you need to audit the review interface. A Rating-First design on a product page may be inflating ratings for excellent items while dragging down ratings for products whose experience falls short, whether through a minor flaw or a customer service mishap. Understanding this bias is crucial for interpreting your aggregated score.

2. Post-Purchase Experience & CRM:

The order of prompts in review solicitations (post-purchase emails, in-app notifications, surveys) is a critical lever that the brand controls directly. A luxury brand aiming to cultivate a reputation for a flawless experience might benefit from a Review-First approach in its post-purchase surveys, encouraging more reflective, nuanced feedback that could soften extreme negative reactions to single issues. Conversely, for a new product launch where generating initial positive momentum is key, a Rating-First approach could help capture and amplify strong initial affective responses, provided the product actually delivers a high-quality experience, since the study finds the same sequence lowers ratings in low-quality contexts.

3. Data Integrity for AI & Personalization:

Machine learning models for recommendation, search ranking, and demand forecasting are trained on this rating data. If the data is systematically polarized by interface design, the models' outputs are biased. A product with a "true" quality of 4 stars might appear as a 5-star or a 3-star product based purely on how feedback was collected, leading to suboptimal inventory, merchandising, and personalization decisions.

4. Hedonic vs. Utilitarian Product Strategy:

The finding that hedonic products show amplified effects is particularly salient for luxury. A handbag, a bottle of perfume, or a haute couture piece is the epitome of a hedonic good. The choice of evaluation sequence is therefore likely to have a stronger impact on the rating distribution for these items than for, say, a white T-shirt or a pair of socks.

Business Impact

The impact falls primarily on perceived value and customer decision-making, which can in turn affect conversion rates, average order value, and customer lifetime value. A polarized rating distribution can:

- Increase perceived risk for customers considering a product whose middling (around 3-star) average is made up largely of 1-star and 5-star reviews, potentially suppressing conversion.

- Accelerate the "winner-takes-most" dynamic, where top-rated products gain disproportionate visibility and sales, making it harder for new or nuanced products to break through.

- Amplify the damage of any service failure, as a negative experience is more likely to result in a severe 1-star rating than in a constructive 2- or 3-star review.

While the study doesn't provide a quantified revenue impact, the mechanism is clear: interface design alters the key signal (the rating) that feeds into the consumer's purchase calculus.

Implementation Approach

Implementing insights from this research involves a mix of analytics, experimentation, and platform coordination.

1. Audit & Analysis:

- Map Your Touchpoints: Catalog every place you solicit ratings—post-purchase emails, in-app prompts, website pop-ups, third-party platform pages.

- Analyze Rating Distributions: For each touchpoint, analyze the distribution of ratings. Look for bimodal patterns (spikes at 1 and 5 stars) as a potential signature of a Rating-First design bias.

- Segment by Product Type: Compare rating distributions for hedonic items (e.g., ready-to-wear, jewelry, handbags) with more utilitarian ones (e.g., basics such as plain T-shirts and socks) within your catalog.
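The audit step can be sketched in a few lines of Python. This is a minimal sketch assuming you can export reviews with a touchpoint label, your own hedonic/utilitarian tag, and a 1-5 star value; the `Review` record and its field names are hypothetical. The `u_shaped` flag is a simple heuristic (both extremes outnumber every middle rating), not a formal bimodality test, so treat it as a prompt for closer inspection rather than proof of interface bias.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Review:
    touchpoint: str    # hypothetical label, e.g. "post_purchase_email"
    product_type: str  # your own tag: "hedonic" or "utilitarian"
    stars: int         # 1-5


def distribution(stars):
    """Share of ratings at each star level 1-5."""
    counts = Counter(stars)
    return {k: counts[k] / len(stars) for k in range(1, 6)}


def is_u_shaped(dist):
    """Heuristic: both 1 and 5 stars outnumber every middle rating (2-4)."""
    middle_max = max(dist[2], dist[3], dist[4])
    return dist[1] > middle_max and dist[5] > middle_max


def audit(reviews):
    """Summarize each (touchpoint, product_type) segment."""
    groups = defaultdict(list)
    for r in reviews:
        groups[(r.touchpoint, r.product_type)].append(r.stars)
    report = {}
    for key, stars in sorted(groups.items()):
        dist = distribution(stars)
        report[key] = {
            "n": len(stars),
            "mean": sum(stars) / len(stars),
            "extreme_share": dist[1] + dist[5],  # share of 1s and 5s
            "u_shaped": is_u_shaped(dist),
        }
    return report


if __name__ == "__main__":
    # Toy data for illustration only, not real ratings.
    sample = [Review("email", "hedonic", s) for s in (5, 5, 5, 1, 1, 4, 5, 1)]
    sample += [Review("email", "utilitarian", s) for s in (3, 4, 4, 3, 5, 2, 4)]
    for segment, stats in audit(sample).items():
        print(segment, stats)
```

Reporting `extreme_share` next to the mean matters because two segments can share the same average while one is concentrated and the other polarized.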

2. Controlled Experimentation (A/B Testing):

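- Test the Sequence: For your owned channels (e.g., CRM emails), run A/B tests comparing Rating-First and Review-First flows. Primary metrics should include average rating, rating distribution, review text length, and sentiment of the text. Because the study reports opposite effects in high- and low-quality contexts, compare results within quality segments as well as overall; a pooled average can hide a polarization that pushes some ratings up and others down.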

- Measure Downstream Effects: Track the impact of the different review flows on subsequent conversion rates for the reviewed products. Does more nuanced feedback lead to higher trust and conversion?
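The comparison between arms can be sketched with the Python standard library alone. This assumes each submission is logged with its arm, star rating, and review text (the `Submission` record and arm names are hypothetical); sentiment scoring is left out because it depends on whatever model you already use. `two_proportion_ztest` is a textbook pooled two-proportion z-test and can be applied either to the share of extreme ratings or to downstream conversion counts.

```python
import math
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Submission:
    arm: str    # hypothetical: "rating_first" or "review_first"
    stars: int  # 1-5
    text: str   # review body


def summarize(subs):
    """Per-arm metrics from the test plan (sentiment omitted)."""
    stars = [s.stars for s in subs]
    return {
        "n": len(subs),
        "mean_stars": mean(stars),
        "extreme_share": sum(1 for x in stars if x in (1, 5)) / len(stars),
        "mean_words": mean(len(s.text.split()) for s in subs),
    }


def two_proportion_ztest(successes_a, n_a, successes_b, n_b):
    """Two-sided pooled z-test of p_a == p_b.

    Uses the normal approximation, so it is only reasonable with fairly
    large samples. Returns (z, p_value).
    """
    p_a, p_b = successes_a / n_a, successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # both arms all-success or all-failure
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value
```

For example, passing the number of 1- and 5-star ratings and the total submissions for each arm tests whether one sequence produces more extreme ratings. When many segments or metrics are tested at once, some correction for multiple comparisons is advisable.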

3. Vendor & Platform Engagement:

- For third-party platforms, use this research as a data-backed argument in discussions about their review system design. Advocate for testing alternative sequences, or for interfaces that encourage more reflective feedback, which benefits both the platform's credibility and your brand's accurate representation.

Technical Requirements: The engineering effort is low, since the change is essentially a UI/UX and copy change. The complexity lies in the experimental design, data analysis, and organizational buy-in to challenge a common industry pattern.
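On the engineering side, the switch between sequences can be as small as a flag. The sketch below assumes hash-based bucketing, a common way to keep a customer in the same arm across emails and sessions without storing assignments; the experiment name, variant names, and field identifiers are all hypothetical.

```python
import hashlib

VARIANTS = ("rating_first", "review_first")  # hypothetical variant names


def assign_variant(customer_id: str, experiment: str = "review_sequence_v1") -> str:
    """Deterministically bucket a customer into one review-flow variant.

    Hashing the experiment name together with the customer id keeps the
    assignment stable across channels without storing any state.
    """
    key = f"{experiment}:{customer_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(VARIANTS)
    return VARIANTS[bucket]


def prompt_order(variant: str) -> list[str]:
    """Only the order of the two form fields differs between arms."""
    if variant == "rating_first":
        return ["star_rating", "review_text"]
    if variant == "review_first":
        return ["review_text", "star_rating"]
    raise ValueError(f"unknown variant: {variant}")
```

Salting the hash with the experiment name means a later test reshuffles customers independently of this one, while the form itself stays unchanged apart from field order.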

Governance & Risk Assessment

Privacy & Ethics: This intervention is low-risk from a privacy perspective, as it doesn't change the data collected, only the order of collection. Ethically, moving towards a design that promotes more reflective feedback can be seen as fostering more authentic communication and reducing impulsive, potentially unfair, evaluations.

Bias & Fairness: The existing Rating-First design introduces a cognitive bias (affective anchoring). Changing the sequence is an attempt to mitigate that bias. However, care must be taken to ensure the Review-First prompt does not itself lead to bias (e.g., by priming with overly positive or negative language).

Maturity Level: The research follows a rigorous design, but as a preprint it has not yet undergone formal peer review. The methodology (controlled experiments combined with large-scale observational data) is sound, though the platform comparison is correlational: Yelp and Letterboxd differ in more than their review sequence. The concept is ready for pilot testing within retail organizations. It is not a plug-and-play solution but a strategic insight that should inform customer experience design.

The core takeaway for AI and commercial leaders in luxury is that the data your AI systems rely on is malleable. Before building complex models to predict or respond to customer sentiment, it is essential to audit and, if possible, optimize the primary channels that generate that sentiment data. The architecture of choice shapes the choice itself.