In a provocative new paper that challenges foundational assumptions in artificial intelligence, Meta's Chief AI Scientist Yann LeCun and fellow researchers propose a fundamental reorientation of the field's ultimate goals. Rather than pursuing artificial general intelligence (AGI)—machines that can perform any intellectual task a human can—they argue we should instead build what they term Superhuman Adaptable Intelligence (SAI): specialized systems that rapidly learn and excel at specific domains far beyond human capabilities.

This perspective, detailed in a paper that has sparked intense discussion across the AI community, represents a significant departure from the dominant narrative that has driven research and investment for decades. The work suggests that the entire industry's obsession with human-like generality may be fundamentally misguided.

The Human Intelligence Illusion

At the core of LeCun's argument is a biological reality check: human intelligence isn't actually general in the way AI researchers often imagine. Instead, it's a highly specialized toolkit shaped by evolution for one primary purpose—physical survival in a specific ecological niche.

"Nature optimized our brains specifically for tasks necessary to stay alive in the physical world," the researchers explain. Abilities like walking, seeing, and social cognition appear incredibly general to us precisely because they're absolutely critical for our existence. But this creates what the paper calls "an illusion of generality"—we mistake our survival-optimized capabilities for universal intelligence.

The evidence is everywhere once we look: humans are remarkably poor at cognitive tasks outside our evolutionary comfort zone. We struggle with probability calculations, cannot naturally process high-dimensional data, and require years of specialized training to master even moderately complex games like chess—which computers now dominate effortlessly.

The Flawed AGI Pursuit

The paper argues that attempting to replicate human intelligence in machines is therefore a category error. We're trying to build technology that mimics a biological system optimized for survival in prehistoric environments, not for solving modern complex problems.

This pursuit has practical consequences, the authors contend. Current approaches spend enormous computational resources teaching AI systems human-like traits, and human-like limitations along with them. On this view, the massive energy consumption of training frontier models, the difficulty of getting them to perform consistently across domains, and their frequent failures in specialized applications all trace back to this mismatch between goals and reality.

Instead of "trying to force one giant model to master every possible task from folding laundry to predicting protein structures," the researchers suggest a different path: building specialist systems that learn generic knowledge through self-supervised methods, then specialize deeply in their domains.

The Superhuman Adaptable Intelligence Alternative

The proposed alternative—Superhuman Adaptable Intelligence—focuses on a different metric: how quickly a system can learn new skills within its domain, not how broadly it can mimic human capabilities. These systems would use internal world models to understand how things work, allowing them to adapt rapidly to solve complex problems that human brains simply cannot handle.

Imagine a medical diagnostic AI that doesn't just match human doctors but understands disease mechanisms at a molecular level we cannot naturally perceive. Or a climate modeling system that processes atmospheric dynamics across scales impossible for human cognition. These wouldn't be "general" intelligences—they'd be superhuman specialists.

This approach has several advantages:

- Practical problem-solving: Systems could be designed specifically for important challenges like drug discovery, materials science, or climate prediction

- Efficiency: Resources wouldn't be wasted on human-like traits irrelevant to the task

- Measurable progress: Learning speed within domains provides clearer benchmarks than vague "general intelligence" metrics

- Safety: Specialized systems have more predictable capabilities and limitations

Technical Implementation Pathways

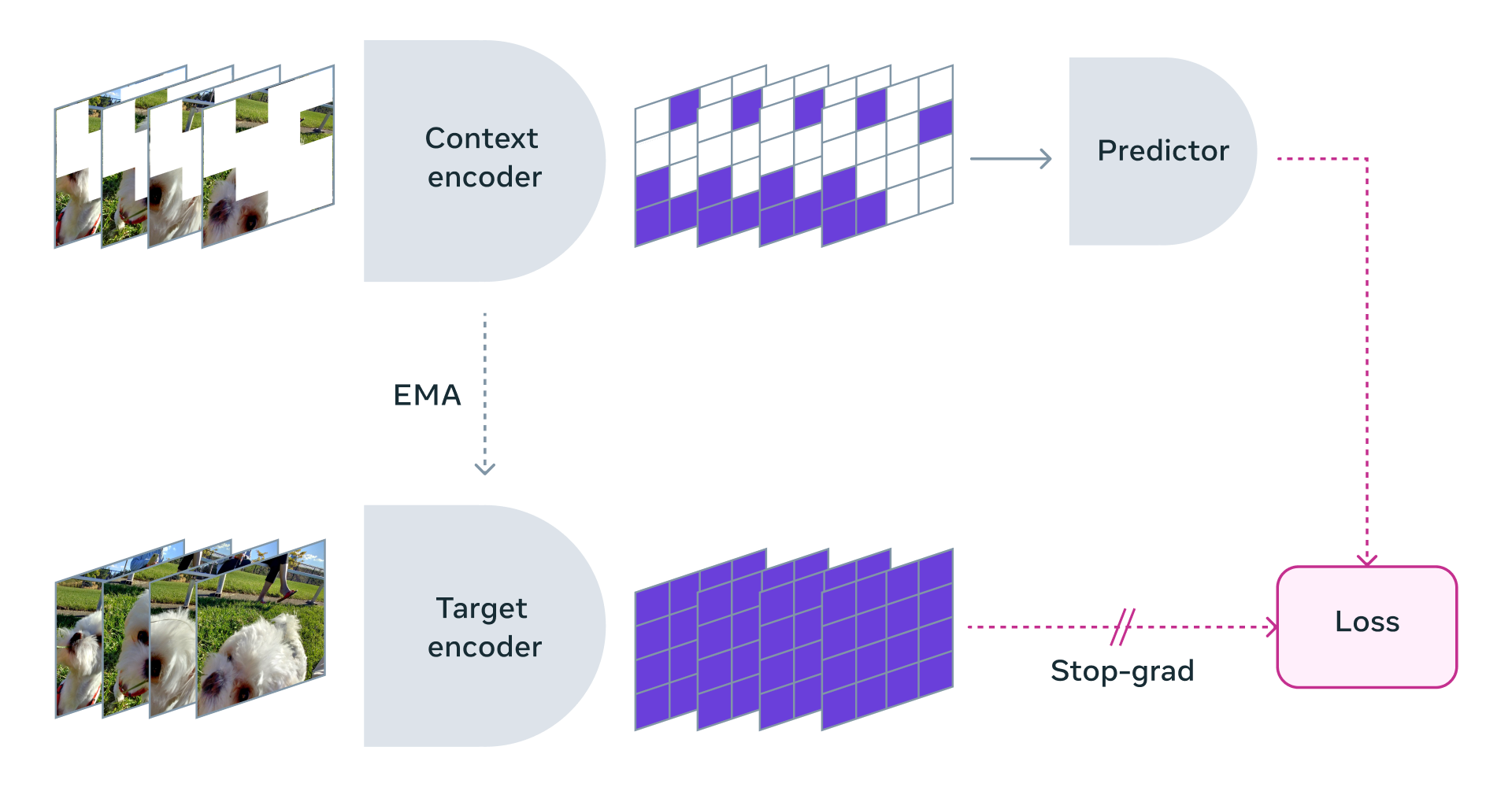

The paper suggests several technical directions for realizing this vision. Self-supervised learning—where systems learn from data without explicit human labeling—would allow AI to develop rich internal models of how specific domains work. Modular architectures could enable different specialized systems to share foundational knowledge while developing deep expertise in their areas.
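To make "learning from data without explicit human labeling" concrete, here is a minimal sketch of one self-supervised setup: next-step prediction, where each observation's training target is simply the observation that follows it. This is an illustration only, not the method from the paper; the toy process, the linear model, and the names `make_pairs` and `fit_next_step` are all assumptions made for the example.

```python
from typing import List, Tuple


def make_pairs(sequence: List[float]) -> List[Tuple[float, float]]:
    """Turn an unlabeled sequence into (input, target) pairs.

    The target for each value is the value that follows it,
    so no human annotation is needed.
    """
    return list(zip(sequence[:-1], sequence[1:]))


def fit_next_step(sequence: List[float]) -> Tuple[float, float]:
    """Fit x[t+1] ~ a * x[t] + b by ordinary least squares."""
    pairs = make_pairs(sequence)
    n = len(pairs)
    if n < 2:
        raise ValueError("need at least three observations")
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in pairs)
    if var_x == 0:
        raise ValueError("inputs are constant; slope is undefined")
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    a = cov_xy / var_x
    b = mean_y - a * mean_x
    return a, b


# Hypothetical "domain" data: a process that follows x[t+1] = 0.5 * x[t] + 1.
observations = [0.0]
for _ in range(20):
    observations.append(0.5 * observations[-1] + 1.0)

# The fitted (a, b) is a tiny "world model" of the process,
# learned from raw observations alone.
a, b = fit_next_step(observations)
```

Real systems replace the linear fit with a large neural network and the toy sequence with video, text, or sensor data, but the principle is the same: the supervision signal comes from the structure of the data itself.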

Crucially, these systems wouldn't need to replicate human cognitive processes. They could develop entirely alien forms of reasoning optimized for their domains, free from the biological constraints that shape human thought.

Industry Implications

This perspective could reshape AI development priorities. Instead of a race toward increasingly large general models, we might see more investment in specialized systems for healthcare, scientific research, engineering, and other critical fields. The economic incentives could shift from creating human-like assistants to building tools that solve problems humans cannot.

It also suggests different evaluation frameworks. Rather than testing AI on human-centric benchmarks, we'd measure systems by their ability to rapidly master complex domains and produce novel solutions to difficult problems.
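One way such an evaluation could be made concrete is a "samples to threshold" score: how many training examples a system needs before it first reaches a target level of performance in its domain. The sketch below is an assumption about what a learning-speed benchmark might look like, not a metric defined in the paper; the function name, the curve format, and the numbers are invented for illustration.

```python
from typing import List, Optional, Tuple

# Each point is (training examples seen, score on the domain task).
LearningCurve = List[Tuple[int, float]]


def samples_to_threshold(curve: LearningCurve, threshold: float) -> Optional[int]:
    """Return the fewest examples at which the score first reaches threshold.

    Returns None if the system never reaches the threshold.
    """
    for n_examples, score in sorted(curve):
        if score >= threshold:
            return n_examples
    return None


# Made-up learning curves for two hypothetical systems on the same task.
system_a = [(100, 0.40), (1_000, 0.75), (10_000, 0.92)]
system_b = [(100, 0.55), (1_000, 0.91), (10_000, 0.93)]

slow = samples_to_threshold(system_a, 0.90)
fast = samples_to_threshold(system_b, 0.90)
```

In this made-up comparison the two systems end at nearly the same final score, yet the second one crosses the 0.90 threshold with a tenth of the data. A benchmark that reports only final accuracy would miss that difference in adaptation speed.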

Philosophical and Ethical Dimensions

The paper touches on deeper questions about intelligence itself. If human intelligence is just one specialized form among many possible intelligences, what does that mean for how we value different cognitive styles? How do we ensure superhuman specialists remain aligned with human values when their reasoning processes may be fundamentally incomprehensible to us?

These questions become more urgent if LeCun's vision gains traction. Building intelligences that exceed human capabilities in specific domains requires careful consideration of how they're deployed and controlled.

The Path Forward

LeCun and colleagues aren't suggesting that the field abandon general learning approaches altogether. Foundation models that capture broad knowledge still have value. But the authors argue these should serve as platforms for specialization rather than ends in themselves.

The research community's response has been mixed. Some see this as a necessary correction to unrealistic AGI expectations, while others worry it might limit AI's potential. What's clear is that the paper has ignited a crucial debate about what we're actually trying to build—and why.

As AI systems grow more capable, clarifying our goals becomes increasingly important. LeCun's proposal offers one compelling vision: not machines that think like us, but tools that think in ways we cannot, solving problems beyond our natural reach.

Source: Analysis based on Yann LeCun's research paper and related discussions as referenced in @rohanpaul_ai's coverage.