What Changed — llm-anthropic 0.25 Release

llm-anthropic, Simon Willison's plugin that adds Anthropic's Claude models to his LLM command-line tool, has been updated to version 0.25 with several improvements for developers who call Claude from the terminal. LLM itself is a broader ecosystem for running prompts against language models from the command line.

Key updates:

- New model: claude-opus-4.7 is now available with support for thinking_effort: xhigh

- New options: thinking_display and thinking_adaptive boolean flags

- Increased defaults: max_tokens now defaults to the maximum allowed for each model

- Cleanup: Removed obsolete structured-outputs-2025-11-13 beta header for older models

What It Means For You — Direct Access to Deeper Reasoning

If you use Claude Code for complex refactoring, debugging, or system design, the xhigh thinking effort setting is the headline addition. Beyond the model version bump, it exposes Claude's highest reasoning-effort level through a simple CLI tool.

The thinking_effort parameter controls how much "internal thinking" Claude does before responding. While Claude Code in the IDE uses this internally, llm-anthropic gives you direct control:

- low: Faster, less thorough (good for simple tasks)

- medium: Balanced (default for most uses)

- high: More thorough reasoning

- xhigh: Maximum reasoning depth for hardest problems

Try It Now — Install and Configure

First, update or install the tool:

pip install -U llm-anthropic

Set your Anthropic API key:

llm keys set anthropic

# Enter your API key when prompted

Now you can run Claude Opus 4.7 with maximum thinking effort:

# Basic usage with xhigh thinking

llm -m claude-opus-4.7 -o thinking_effort xhigh "Refactor this Python function for better performance:"

# With thinking display to see the reasoning process

llm -m claude-opus-4.7 -o thinking_effort xhigh -o thinking_display true "Explain the time complexity of this algorithm:"

# Pipe code directly

cat complex_script.py | llm -m claude-opus-4.7 -o thinking_effort xhigh "Find security vulnerabilities in this code:"

When to Use xhigh Thinking Effort

Don't use xhigh for every query—it's slower and more expensive. Reserve it for:

- Complex refactoring: When you need to understand intricate dependencies

- Debugging subtle bugs: Race conditions, memory leaks, or heisenbugs

- Architecture decisions: Evaluating multiple design patterns

- Security audits: Deep analysis of potential vulnerabilities

- Performance optimization: Finding non-obvious bottlenecks

For everyday coding tasks, stick with medium or high. The thinking_adaptive option (when available) will automatically adjust thinking effort based on query complexity.
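One way to encode this guidance in a script is a small lookup from task category to effort level. The sketch below is only illustrative: the function name `effort_for` and the category labels are invented for this example, and the mapping reflects the rules of thumb in this article rather than anything built into llm-anthropic.

```shell
# Hypothetical helper: map a task category to a thinking_effort level.
# The category names are made up for this sketch; adapt them to your workflow.
effort_for() {
  case "$1" in
    refactor|debug|architecture|security|performance)
      echo xhigh ;;   # the "reserve it for" cases listed above
    review|docs)
      echo high ;;    # assumption: moderately demanding tasks
    *)
      echo medium ;;  # everyday default
  esac
}
```

The result can then be passed as the option value, for example `llm -m claude-opus-4.7 -o thinking_effort "$(effort_for security)" "Find security vulnerabilities in this code:" < complex_script.py`.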

Integration with Your Claude Code Workflow

While Claude Code in your IDE handles most development tasks, llm-anthropic with Opus 4.7 excels at:

- Batch processing: Analyze multiple files in sequence

- Scripting: Incorporate Claude into your build pipelines

- Deep analysis: When you need more reasoning than the IDE provides

- Experimentation: Testing different thinking levels on the same problem
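The batch-processing and experimentation cases can be sketched as two small shell functions. This is a rough outline, not a tested pipeline: the function names, output file names, and the `LLM_BIN` override (which lets you dry-run the loops with a stand-in command) are all assumptions made for this example.

```shell
# LLM_BIN is a hypothetical override so the loops can be dry-run, e.g. LLM_BIN=echo.

# Batch processing: send each file to the model on stdin and save one review per file.
# Usage: review_files <output-dir> <file>...
review_files() {
  outdir="$1"; shift
  for file in "$@"; do
    "${LLM_BIN:-llm}" -m claude-opus-4.7 -o thinking_effort high \
      "Review this file for bugs:" < "$file" \
      > "$outdir/$(basename "$file").review.txt"
  done
}

# Experimentation: run one prompt at every effort level for side-by-side comparison.
# Usage: compare_efforts <output-dir> <prompt>
compare_efforts() {
  outdir="$1"; prompt="$2"
  for effort in low medium high xhigh; do
    "${LLM_BIN:-llm}" -m claude-opus-4.7 -o thinking_effort "$effort" "$prompt" \
      > "$outdir/answer-$effort.txt"
  done
}
```

Running `compare_efforts ./out "Why is this query slow?"` would leave four files in `./out` that you can diff to judge whether the extra latency and cost of xhigh buys a better answer for that kind of question.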

Create aliases for common tasks:

# Add to your .bashrc or .zshrc

alias claude-deep="llm -m claude-opus-4.7 -o thinking_effort xhigh"

alias claude-fast="llm -m claude-3-5-sonnet-20241022"

# Usage

claude-deep "<your complex coding question>"
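Aliases are typically not expanded in non-interactive shells, so for build scripts a function may be the safer choice. The following is a minimal sketch: `claude_think` and the `LLM_BIN` override are hypothetical names introduced here, and the list of accepted levels simply mirrors the four values described earlier.

```shell
# Hypothetical wrapper: claude_think <effort> <prompt...>
# LLM_BIN is an assumed override for dry runs (e.g. LLM_BIN=echo).
claude_think() {
  effort="$1"; shift
  # Reject anything other than the four levels described in this article.
  case "$effort" in
    low|medium|high|xhigh) ;;
    *) echo "unknown effort: $effort" >&2; return 2 ;;
  esac
  "${LLM_BIN:-llm}" -m claude-opus-4.7 -o thinking_effort "$effort" "$@"
}
```

Validating the level up front means a typo fails immediately in the script instead of surfacing as an error from the API call.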

The Default max_tokens Change

The increased default max_tokens means responses are less likely to be cut off when you generate long output, such as a fully refactored module or lengthy documentation. Note that max_tokens caps the length of the response, not the amount of input you can send, so the change helps with truncated answers rather than with fitting a larger codebase into the prompt.
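If you would rather keep a tighter cap, for cost control or short answers, the option can still be set explicitly with `-o`. The wrapper below is a sketch under that assumption; `claude_capped` and `LLM_BIN` are hypothetical names used only for illustration.

```shell
# Hypothetical wrapper: cap the response length instead of relying on the new default.
# Usage: claude_capped <max_tokens> <prompt...>
claude_capped() {
  limit="$1"; shift
  # Accept only a non-empty string of digits.
  case "$limit" in
    ''|*[!0-9]*) echo "max_tokens must be a positive integer" >&2; return 2 ;;
  esac
  "${LLM_BIN:-llm}" -m claude-opus-4.7 -o max_tokens "$limit" "$@"
}
```

For example, `claude_capped 1024 "Summarize this diff:" < change.patch` would ask for a short summary, where `change.patch` is a placeholder file name.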

Note: The thinking_display option currently only shows summarized output in JSON format or JSON logs. For full thinking traces, you'll need to use Anthropic's API directly or wait for future updates.
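To look at what was recorded, LLM's `llm logs` command can print the most recent entry as JSON. The sketch below assumes logging is enabled (the default unless it has been turned off); `ask_and_inspect` and `LLM_BIN` are hypothetical names, and the exact field that holds the summarized thinking is not specified here, so inspect the output rather than relying on a particular key.

```shell
# Ask a question with thinking_display enabled, then dump the newest log entry as JSON.
# LLM_BIN is an assumed override for dry runs (e.g. LLM_BIN=echo).
ask_and_inspect() {
  "${LLM_BIN:-llm}" -m claude-opus-4.7 -o thinking_effort xhigh -o thinking_display true "$@" \
    && "${LLM_BIN:-llm}" logs -n 1 --json
}
```

Piping the result through a JSON viewer makes it easier to find where the thinking summary ends up.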

gentic.news Analysis

This release follows Anthropic's steady rollout of thinking effort controls across their API, which began with the introduction of the parameter in late 2025. The llm-anthropic tool, maintained by Simon Willison (co-creator of Django), has become a crucial bridge for developers who want CLI access to Anthropic's models alongside other providers in the LLM ecosystem.

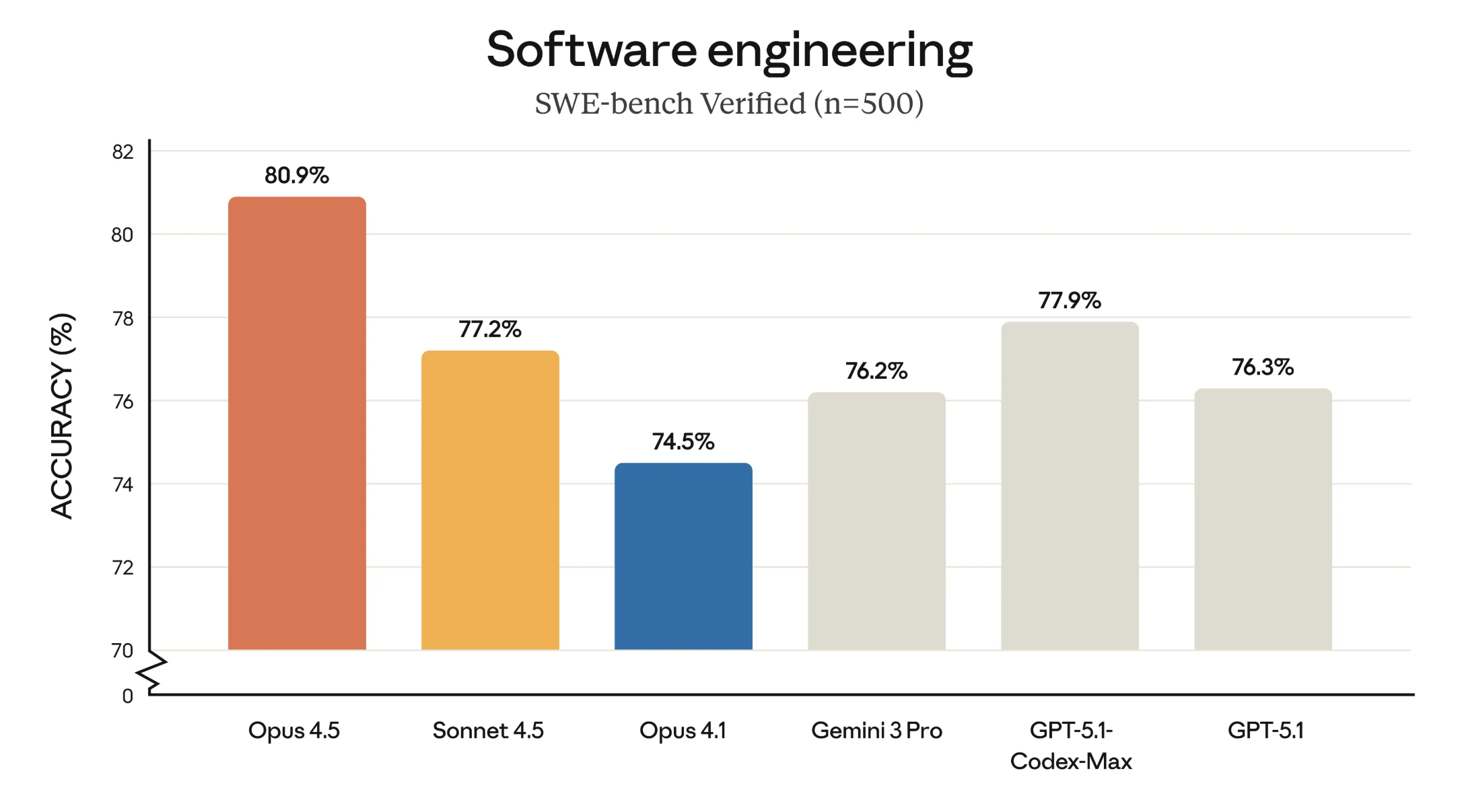

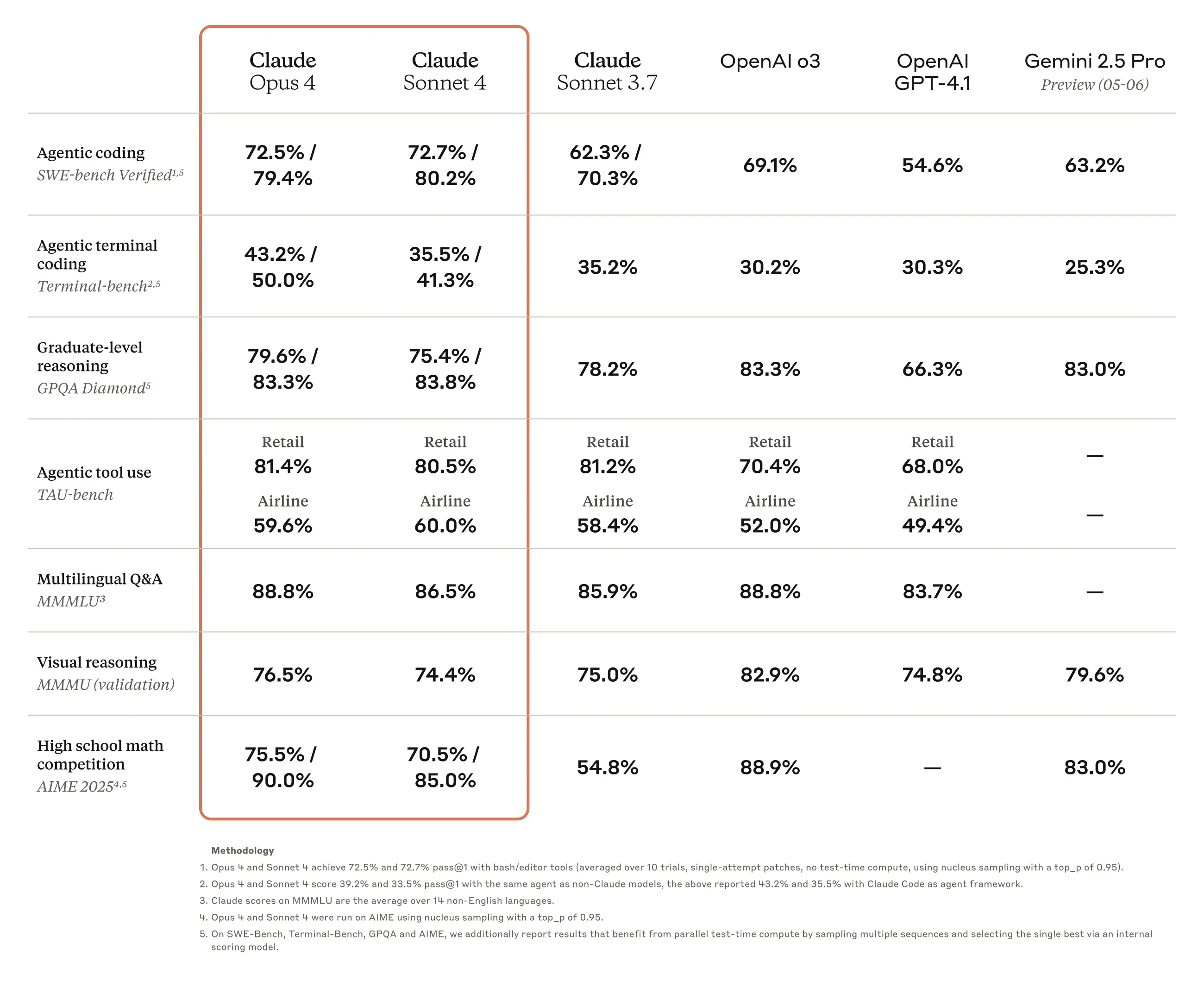

The timing is notable—just days after Willison's post about Qwen3.6-35B-A3B outperforming Claude Opus 4.7 on specific tasks, this update gives developers direct access to Opus 4.7's deepest reasoning capabilities. This isn't about raw performance benchmarks but about providing the right tool for specific jobs: use xhigh thinking for complex reasoning tasks where depth matters more than speed.

For Claude Code users, this represents another tool in the toolbox. While Claude Code in the IDE handles the day-to-day workflow, llm-anthropic with Opus 4.7 and xhigh thinking serves as your deep analysis engine for the hardest problems. This follows the pattern we've seen with other MCP servers and CLI tools—specialized tools for specialized tasks within the broader Claude ecosystem.