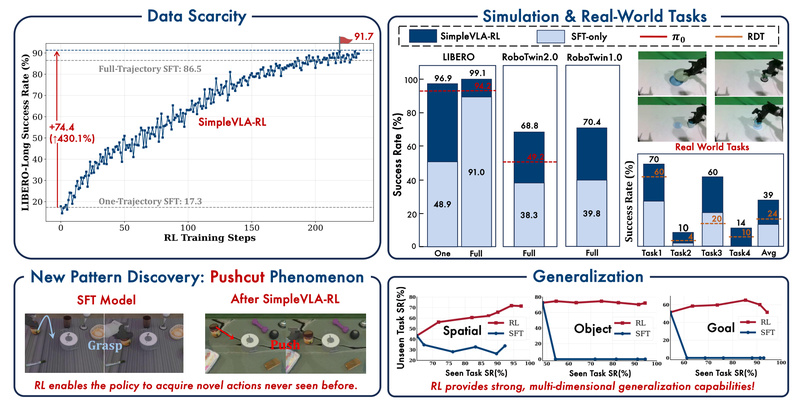

A chart shared by an X user has sparked discussion within the AI community, showing what is described as an "insane jump" in the performance of large language models (LLMs) on the United States of America Mathematical Olympiad (USAMO) from 2025 to 2026.

The source is a single tweet from user @kimmonismus, containing a simple bar chart and a one-line comment. No accompanying research paper, methodology details, or official benchmark publication is linked. The chart visually compares the performance of several unnamed LLMs on "USAMO 2026" versus "USAMO 2025."

What the Chart Shows

Based on the visual representation in the shared image:

- 2025 Performance: Most models appear to score between 0% and 30% on the USAMO problems.

- 2026 Performance: There is a significant upward shift. Multiple models are shown scoring above 50%, with at least one model's bar extending to what appears to be a score between 70% and 80%.

- The Claim: The accompanying text states this represents an "insane jump in just 1 year."

Critical Caveat: The tweet does not specify which models are being tested, the exact evaluation methodology (e.g., number of problems, scoring rubric, use of tool-augmented reasoning), or the source of the data. It presents a community observation rather than a peer-reviewed result.
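To make the caveat concrete, here is a minimal sketch of how a scoring rubric alone can move a reported percentage. It relies on the standard USAMO format (six problems, each graded from 0 to 7, for a 42-point maximum). The function names, the all-or-nothing rubric, and the example grades are hypothetical illustrations, not data or methodology from the chart.

```python
from typing import Sequence

NUM_PROBLEMS = 6   # USAMO format: six proof problems over two days
MAX_POINTS = 7     # each problem is graded on a 0-7 scale


def usamo_percent(points: Sequence[int]) -> float:
    """Convert per-problem points (0-7 each) into a percentage of the 42-point maximum."""
    if len(points) != NUM_PROBLEMS:
        raise ValueError(f"expected {NUM_PROBLEMS} scores, got {len(points)}")
    if any(p < 0 or p > MAX_POINTS for p in points):
        raise ValueError("each score must be between 0 and 7")
    return 100.0 * sum(points) / (NUM_PROBLEMS * MAX_POINTS)


def all_or_nothing(points: Sequence[int]) -> list[int]:
    """Hypothetical stricter rubric: only complete proofs (7/7) earn credit."""
    return [MAX_POINTS if p == MAX_POINTS else 0 for p in points]


# Made-up grades for one hypothetical model; NOT taken from the chart.
example_grades = [7, 7, 2, 7, 3, 0]

partial_credit = usamo_percent(example_grades)          # 26 of 42 points
strict = usamo_percent(all_or_nothing(example_grades))  # 21 of 42 points
print(f"partial credit: {partial_credit:.1f}%  all-or-nothing: {strict:.1f}%")
# prints: partial credit: 61.9%  all-or-nothing: 50.0%
```

The same six proofs land almost twelve percentage points apart depending on whether partial credit counts, which is why a year-over-year comparison is only meaningful if both years were graded the same way.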

Context: The USAMO as a Benchmark

The USAMO is a prestigious high-school mathematics competition consisting of highly challenging proof-based problems. It has become a key benchmark for evaluating the advanced mathematical reasoning and problem-solving capabilities of AI systems. Success on the USAMO requires multi-step logical deduction, creative problem-solving, and rigorously written proofs, all capabilities that have historically been difficult for AI.

In recent years, models like OpenAI's o1, Google's Gemini series, and Anthropic's Claude have made significant strides on mathematical benchmarks. Improvements have often been driven by techniques like process supervision, reinforcement learning on reasoning chains, and search-augmented generation.

gentic.news Analysis

This community-shared data point, while unofficial, aligns with the accelerating trend we've documented in AI mathematical reasoning. In our previous coverage of OpenAI's o1 model launch, we noted its breakthrough performance on the MATH dataset, which contains competition-level problems. The apparent jump from sub-30% to above-50% scores on USAMO within a year, if validated, would represent a steeper improvement curve than seen on many other benchmarks.

This trend is not isolated. It connects directly to the increased competitive activity in the "reasoning model" space. Following OpenAI's o1 preview, both Anthropic and Google have been actively pushing their own reasoning architectures. The timeline data shows a clustering of announcements and research papers focused on formal reasoning and proof verification throughout 2024 and 2025, setting the stage for the kind of leap this chart suggests.

For practitioners, the key takeaway, if the chart holds up, is the apparent validation of a specific technical direction: investment in reinforcement learning over reasoning traces and process-based training yields disproportionate returns on hard reasoning tasks. This contrasts with the earlier scaling law paradigm that prioritized data and parameter count. The entities leading this charge (OpenAI, Anthropic, and Google DeepMind) are all leveraging their proprietary RL frameworks to tackle the credit assignment problem for multi-step reasoning, a technical challenge we explored in our analysis of Claude 3.5 Sonnet's self-correcting capabilities.

However, caution is warranted. Without published methodology, it is impossible to confirm whether the evaluation conditions were consistent between years, or whether the 2026 results benefited from data contamination or a narrower problem selection. The AI community will need to wait for formal evidence, such as an official benchmark report or a published paper, to confirm the scale of this progress.

Frequently Asked Questions

What is the USAMO?

The United States of America Mathematical Olympiad (USAMO) is a highly selective, proof-based mathematics competition for high school students in the United States. It serves as the final round of the American Mathematics Competitions series, and its results feed into the selection process for the US team at the International Mathematical Olympiad (IMO). Its problems are exceptionally difficult and require deep, creative reasoning, making it a rigorous benchmark for AI.

Which AI models are best at math Olympiad problems?

As of late 2025, the top-performing models on formal mathematical reasoning benchmarks have been OpenAI's o1 series, Google's Gemini Advanced with its reasoning capabilities, and Anthropic's Claude 3.5 Sonnet and subsequent versions. Performance is highly dependent on whether the model is using a simple prompt or is augmented with tools like code execution and search. The chart in the source does not name specific models.

Why is performance on USAMO important for AI development?

Strong performance on the USAMO demonstrates an AI's ability to perform complex, multi-step logical deduction, plan solutions, and handle abstract concepts—skills that are foundational for reliable reasoning in science, engineering, and general problem-solving. It moves beyond pattern recognition to test genuine understanding and application of formal rules, a key step toward more robust and trustworthy AI systems.

How should I interpret this unofficial performance chart?

Interpret it as a compelling community observation that indicates rapid progress, but not as a definitive scientific result. For accurate comparisons, look for peer-reviewed papers or official technical reports from AI labs that detail the exact models tested, the specific problem sets used, the evaluation protocol, and the scoring methodology. Always check for potential confounders like test-set contamination.
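As one illustration of what a contamination check can look like, the sketch below measures how many word n-grams from a problem statement also appear verbatim in a candidate training document. It is a crude heuristic written for this article, not the procedure used by any particular lab; the function names, the default n-gram length, and the sample strings are all assumptions.

```python
import re


def ngrams(text: str, n: int = 8) -> set[tuple[str, ...]]:
    """Return the set of lowercase word n-grams in `text` (empty if the text is too short)."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_fraction(problem: str, document: str, n: int = 8) -> float:
    """Fraction of the problem's n-grams that also occur in the document."""
    problem_grams = ngrams(problem, n)
    if not problem_grams:
        return 0.0
    return len(problem_grams & ngrams(document, n)) / len(problem_grams)


# Made-up strings for illustration only.
problem = "Prove that for every positive integer n the sum is divisible by n"
leaked_page = "Forum post: " + problem + ". Thanks in advance!"
unrelated_page = "The weather in Boston was mild this spring and the river stayed calm"

print(overlap_fraction(problem, leaked_page, n=5))     # verbatim copy: full overlap
print(overlap_fraction(problem, unrelated_page, n=5))  # no shared 5-grams
```

A high overlap suggests the problem text was available to the model before the test. A low overlap does not rule contamination out, since paraphrased or translated copies would slip past an exact-match check like this one.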