Meta has detailed a significant evolution in its infrastructure management, moving from traditional automation to a fleet of unified AI agents that autonomously optimize capacity and performance across its global compute footprint. This system, described in a technical blog post from the company's engineering team, represents a critical step in managing the hyperscale demands of AI training, recommendation systems, and social networks.

Key Takeaways

- Meta's engineering team has built and deployed a system of unified AI agents to autonomously manage capacity and performance across its hyperscale infrastructure.

- This represents a significant shift from rule-based automation to AI-driven orchestration for one of the world's largest computing fleets.

What's New: From Automation to Autonomous Agents

The core shift is from scripted, rule-based automation to a multi-agent AI system capable of perception, reasoning, and action. These agents are unified, meaning they operate on a shared understanding of Meta's entire infrastructure state—from data centers and network layers to individual workloads and service-level objectives (SLOs).

Key capabilities of the deployed system include:

- Autonomous Capacity Management: Agents continuously analyze demand signals and resource utilization to proactively allocate and rebalance compute, memory, and network capacity.

- Performance Optimization: They tune thousands of system parameters in real time to maintain application performance while maximizing hardware efficiency.

- Anomaly Mitigation: The system can detect and remediate performance degradation or capacity shortfalls faster than human operators, often before they impact end-users.

- Closed-Loop Control: Actions taken by the agents are monitored, and their outcomes are fed back into the models to improve future decisions, creating a self-improving loop.
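To make the closed-loop idea concrete, here is a minimal sketch of a feedback controller for a single service pool. It is not Meta's implementation: the `CapacityController` class, the 70% utilization target, the `simulated_util` stand-in for telemetry, and the simple gain update are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class CapacityController:
    """Hypothetical closed-loop controller for one service pool."""
    target_util: float = 0.70   # desired average utilization (assumed value)
    replicas: int = 10          # current replica count
    gain: float = 1.0           # correction factor, adjusted from outcomes

    def plan(self, observed_util: float) -> int:
        """Propose a replica count expected to bring utilization to target."""
        load = observed_util * self.replicas  # total work, in replica units
        return max(1, round(self.gain * load / self.target_util))

    def feedback(self, predicted_util: float, actual_util: float) -> None:
        """Feed the outcome back: raise the gain if the action fell short."""
        error_ratio = actual_util / predicted_util
        self.gain += 0.5 * (error_ratio - 1.0) * self.gain


def simulated_util(load: float, replicas: int, overhead: float = 0.05) -> float:
    """Stand-in for real telemetry: load share plus a fixed overhead."""
    return load / replicas + overhead


def run_closed_loop(load: float, steps: int) -> tuple[int, float]:
    """Observe, act, measure the outcome, and update the controller."""
    controller = CapacityController()
    util = simulated_util(load, controller.replicas)
    for _ in range(steps):
        controller.replicas = controller.plan(util)           # act
        util = simulated_util(load, controller.replicas)      # observe outcome
        controller.feedback(controller.target_util, util)     # learn from it
    return controller.replicas, util
```

The point of the sketch is the shape of the loop rather than the control law: every action is followed by a measurement, and the gap between predicted and actual utilization changes how the next action is planned.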

Technical Architecture: A Fleet of Specialized Agents

The system is not a single monolithic model but a coordinated fleet of agents with specialized roles, likely built on Meta's foundational AI research. The architecture is organized into three layers:

- Perception Layer: Ingests petabytes of telemetry data from servers, networks, clusters, and applications to create a real-time, unified view of system health and demand.

- Reasoning & Planning Layer: AI agents analyze this state, predict future demand, and generate orchestration plans. This layer likely employs reinforcement learning and large-scale simulation to evaluate the impact of potential actions.

- Execution Layer: Safe, validated actions are carried out through existing infrastructure APIs to reconfigure resources, shift workloads, or adjust system settings.
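The three layers above can be sketched as a small pipeline. This is an illustrative toy under stated assumptions, not the architecture from the blog post: the function names, the `Action` type, the utilization thresholds, and the safety limits are all hypothetical, and a fixed threshold heuristic stands in for whatever learned policy the reasoning layer actually uses.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Action:
    """Hypothetical plan step: add or remove servers in one cluster."""
    cluster: str
    delta_servers: int


def perceive(telemetry: dict[str, list[float]]) -> dict[str, float]:
    """Perception: collapse raw utilization samples into one value per cluster."""
    return {cluster: mean(samples) for cluster, samples in telemetry.items()}


def plan(state: dict[str, float], high: float = 0.85, low: float = 0.40) -> list[Action]:
    """Reasoning and planning: a threshold heuristic standing in for a learned policy."""
    actions = []
    for cluster, util in sorted(state.items()):
        if util > high:
            actions.append(Action(cluster, +2))
        elif util < low:
            actions.append(Action(cluster, -1))
    return actions


def execute(actions: list[Action], fleet: dict[str, int],
            min_servers: int = 1, max_step: int = 2) -> dict[str, int]:
    """Execution: apply only the actions that pass simple safety validation."""
    updated = dict(fleet)
    for action in actions:
        new_size = updated[action.cluster] + action.delta_servers
        if abs(action.delta_servers) <= max_step and new_size >= min_servers:
            updated[action.cluster] = new_size
    return updated


telemetry = {"a": [0.90, 0.95], "b": [0.50, 0.60], "c": [0.20, 0.30]}
fleet = {"a": 10, "b": 10, "c": 1}
new_fleet = execute(plan(perceive(telemetry)), fleet)
```

In this example cluster `a` grows by two servers, `b` is left alone, and the proposed shrink of `c` is rejected because it would take the cluster below the assumed minimum size. Keeping validation in the execution step means a bad plan cannot reach the infrastructure APIs unchecked.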

The "unified" aspect is crucial. By breaking down silos between compute, storage, and network management systems, the agents can make globally optimal decisions that local, isolated automations could not—such as trading off network bandwidth for compute efficiency to meet an overall service latency target.
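A toy example shows why a joint decision can beat siloed ones. The option tables, costs, and latencies below are invented for illustration, and splitting the latency budget evenly between silos is an assumption about how isolated automations might behave, not something the blog post describes.

```python
from itertools import product

# Hypothetical options: name -> (cost units, latency contribution in ms)
COMPUTE = {"small": (1, 60), "medium": (2, 40), "large": (4, 25)}
NETWORK = {"standard": (1, 50), "premium": (3, 20)}


def cheapest_within(options: dict[str, tuple[int, int]], budget_ms: float) -> str:
    """Pick the cheapest option whose latency fits a per-silo budget."""
    feasible = [(cost, name) for name, (cost, lat) in options.items() if lat <= budget_ms]
    return min(feasible)[1]


def siloed_choice(target_ms: float) -> tuple[str, str]:
    """Each silo optimizes alone against half of the end-to-end budget."""
    half = target_ms / 2
    return cheapest_within(COMPUTE, half), cheapest_within(NETWORK, half)


def unified_choice(target_ms: float) -> tuple[str, str]:
    """One decision over both layers against the end-to-end budget."""
    feasible = [
        (COMPUTE[c][0] + NETWORK[n][0], c, n)
        for c, n in product(COMPUTE, NETWORK)
        if COMPUTE[c][1] + NETWORK[n][1] <= target_ms
    ]
    _, compute, network = min(feasible)
    return compute, network


def total_cost(choice: tuple[str, str]) -> int:
    compute, network = choice
    return COMPUTE[compute][0] + NETWORK[network][0]
```

With an 80 ms target, the siloed approach picks `medium` compute and `premium` network for a cost of 5, while the unified search picks `small` compute and `premium` network for a cost of 4. The joint view can let the faster network absorb latency that a smaller compute tier gives up, which a per-silo budget never allows.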

The Hyperscale Imperative

This development is driven by necessity. Meta's infrastructure, which supports billions of users and massive AI workloads like the Llama family of models, has grown too complex and dynamic for human-led or simple automated management. The cost of inefficiency—in terms of capital expenditure on idle hardware, operational overhead, and energy consumption—is enormous at this scale. Autonomous AI agents offer a path to "capacity efficiency," squeezing more useful work out of every watt and server.

What to Watch: The Move to AI-Native Infrastructure

Meta's public disclosure signals that AI-for-infrastructure is moving from research to core production. The implications for the industry are substantial:

- Benchmarks: The true measure of success will be in operational metrics: reduction in manual intervention tickets, improvement in aggregate resource utilization (e.g., GPU cluster usage), and consistency in meeting SLOs.

- Limitations: The system's decision-making boundaries and safety guarantees are critical. How does it handle unprecedented failure modes or conflicting objectives? The blog post emphasizes a human-in-the-loop oversight model for high-stakes decisions.

- Competitive Pressure: Other hyperscalers (Google, Amazon, Microsoft) and large enterprises running private clouds are pursuing similar initiatives. Meta's open sharing of its approach provides a benchmark for the state of the art in autonomous infrastructure.
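Two of the operational metrics named under Benchmarks are simple to define, which is part of what makes them useful for comparing systems. The sketch below assumes a hypothetical record format (`busy_gpu_hours`, `available_gpu_hours`) and a latency-threshold SLO; neither comes from Meta's post.

```python
def aggregate_utilization(clusters: list[dict[str, float]]) -> float:
    """Capacity-weighted utilization: busy GPU-hours over available GPU-hours."""
    busy = sum(c["busy_gpu_hours"] for c in clusters)
    available = sum(c["available_gpu_hours"] for c in clusters)
    return busy / available


def slo_attainment(latencies_ms: list[float], threshold_ms: float) -> float:
    """Fraction of requests that met a latency SLO threshold."""
    met = sum(1 for latency in latencies_ms if latency <= threshold_ms)
    return met / len(latencies_ms)


clusters = [
    {"busy_gpu_hours": 800.0, "available_gpu_hours": 1000.0},
    {"busy_gpu_hours": 100.0, "available_gpu_hours": 1000.0},
]
fleet_utilization = aggregate_utilization(clusters)
attainment = slo_attainment([80.0, 120.0, 95.0, 300.0], threshold_ms=100.0)
```

Weighting by capacity matters here: one cluster at 80% and one at 10% give a fleet-wide figure of 45%, so a single well-used cluster does not hide idle hardware elsewhere.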

gentic.news Analysis

Meta's deployment of unified AI agents is a logical and aggressive next step in a trend we've been tracking: the convergence of AI research and infrastructure engineering. This isn't merely about running more AI workloads; it's about making the infrastructure itself AI-native. The agents managing this infrastructure are likely descendants of the same reinforcement learning and planning research that powers Meta's game-playing and strategic reasoning AI, now applied to a vastly more complex and economically critical domain.

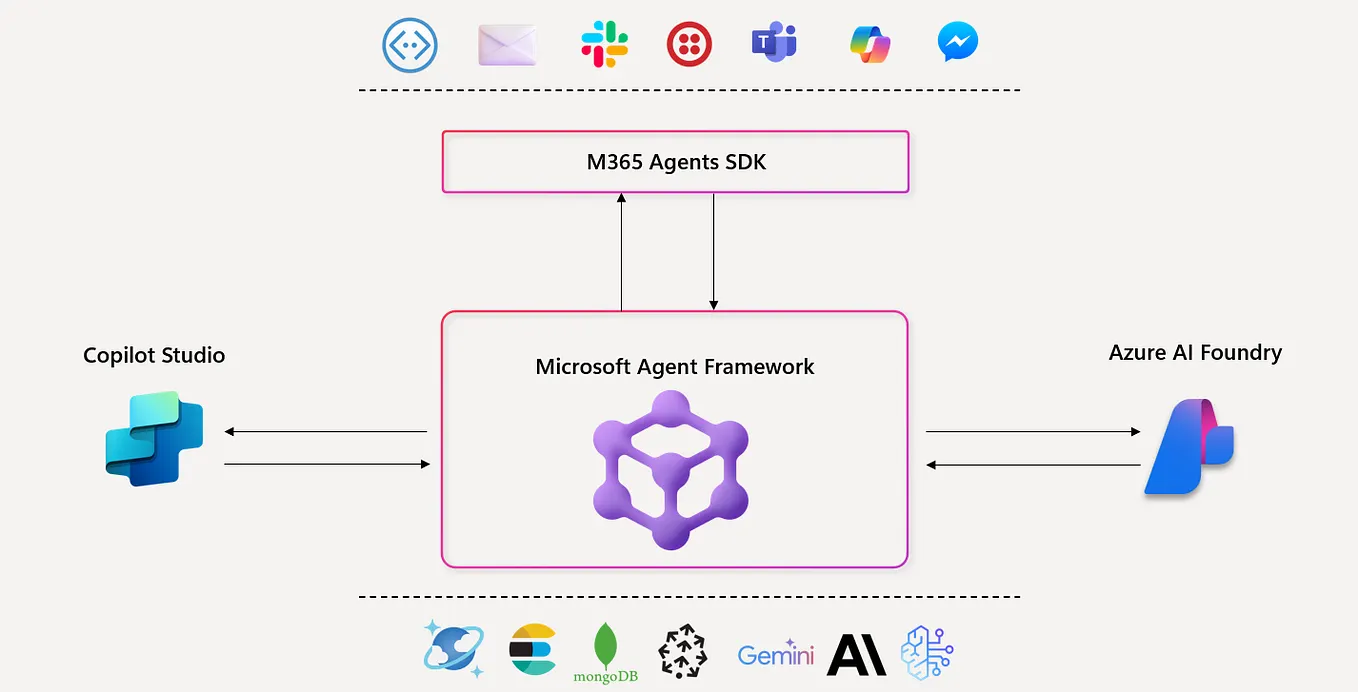

This move aligns with a broader industry shift we noted in our coverage of Google's Gemini-powered data center cooling optimization and Microsoft's Project Synergy for AI-driven cloud management. The race is on to build the most efficient, self-healing cloud. For Meta, the stakes are particularly high. The immense computational cost of training frontier models like Llama 3 and its successors makes infrastructure efficiency a direct competitive advantage in the AI arms race. Every percentage point of GPU utilization gained translates to faster iteration cycles or lower training costs.

Furthermore, this development validates a key trend from our knowledge graph: the relationship between Meta's FAIR (Fundamental AI Research) lab and its infrastructure teams has strengthened significantly. Research breakthroughs in multi-agent systems and world models are no longer just academic exercises; they are being productized to run the company's core business. This tight integration of research and engineering is becoming a defining characteristic of leading AI organizations, as we've seen with similar patterns at OpenAI, Anthropic, and Google DeepMind. The era of AI managing AI infrastructure has decisively begun.

Frequently Asked Questions

What are "unified AI agents" in this context?

They are AI systems, likely based on reinforcement learning and planning models, that perceive the state of Meta's entire technical infrastructure (servers, network, storage, workloads), reason about optimal configurations, and execute actions to maintain performance and efficiency. "Unified" means they operate on a shared, global view rather than isolated system views.

How is this different from traditional IT automation?

Traditional automation uses predefined scripts and rules ("if utilization > 90%, add a server"). Meta's AI agents use learned models to understand complex, dynamic relationships and make nuanced trade-offs across multiple systems and objectives, adapting to situations not explicitly programmed.

Why is Meta doing this?

The scale and cost of its infrastructure—especially for training large AI models—make manual or simple automated management inefficient and costly. AI-driven optimization can improve hardware utilization, reduce energy consumption, maintain application performance, and lower operational overhead, saving significant capital and operational expenditure.

Is this technology available to other companies?

Not directly. Meta is building this for its own private infrastructure. However, the concepts and research are influencing the broader cloud and datacenter industry. Cloud providers like AWS, Google Cloud, and Microsoft Azure are developing similar AI-driven management services for their customers.