What Happened

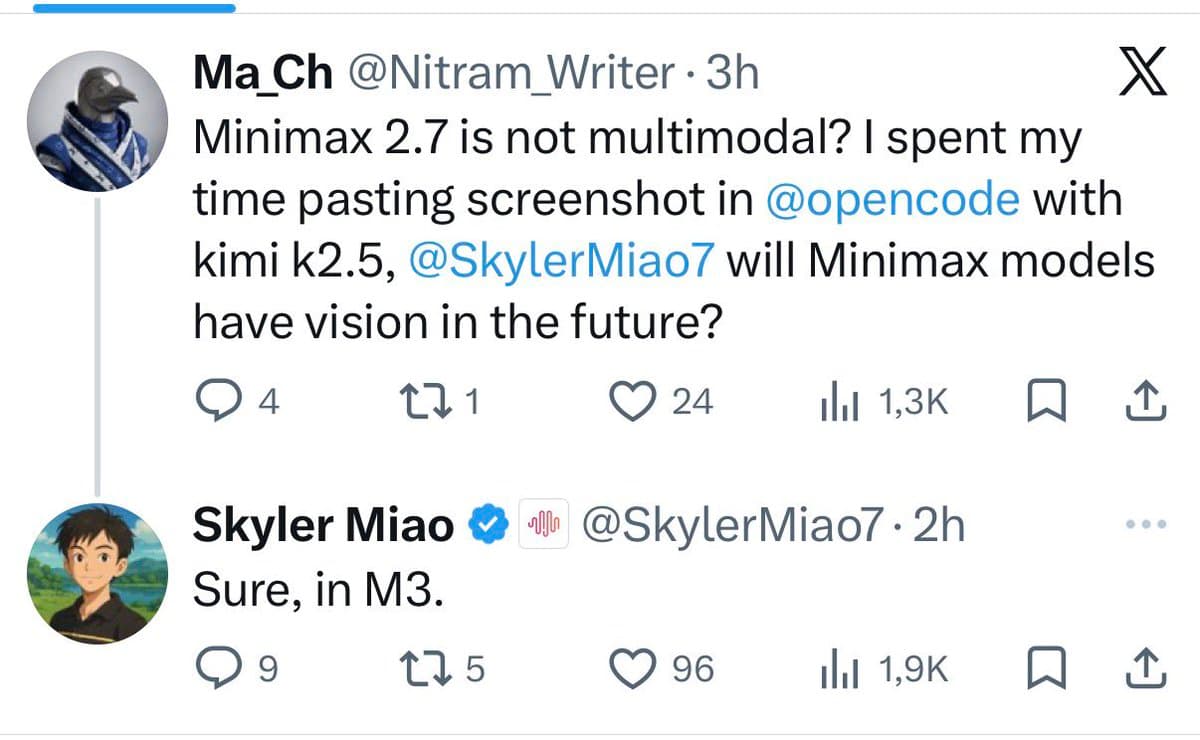

On June 19, 2025, X (formerly Twitter) user @kimmonismus posted a screenshot indicating that AI company Minimax had confirmed the development of a multimodal model. The screenshot shows a brief text exchange or notification with the message: "Cool, multimodal Minimax m3 confirmed :)"

The post includes a link to an image (https://t.co/hRT4FwMG2t) that appears to be the source of the confirmation. The term "m3" appears to be a model name or internal codename for this multimodal system.

Context

Minimax is a Chinese AI company known for developing large language models, including the Abab series. It competes in a crowded field against other Chinese AI firms such as Baidu (Ernie), Alibaba (Qwen), and 01.AI (Yi), as well as international players.

Multimodal AI refers to systems that can process and generate multiple types of data, typically combining text, images, audio, and sometimes video. A multimodal model would place Minimax in direct competition with other multimodal offerings such as OpenAI's GPT-4V, Google's Gemini series, and Anthropic's Claude 3 models.

The social media tease follows a pattern of AI companies using informal channels to generate buzz ahead of formal announcements. No further details about the model's capabilities, architecture, training data, performance benchmarks, or release timeline were provided in this initial confirmation.