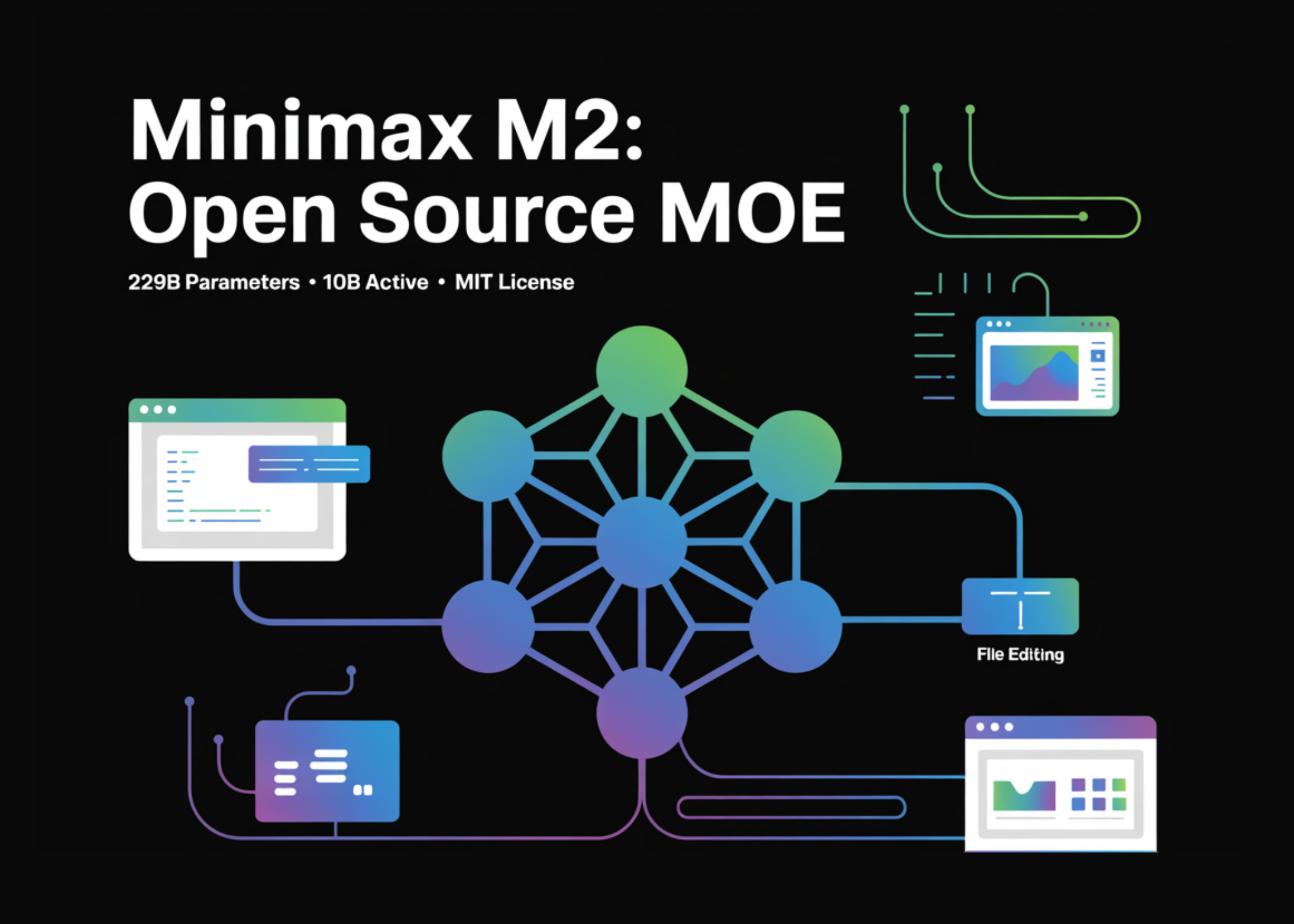

Chinese AI company MiniMax has open-sourced its M2.7 model. Researcher Yuchen J., who highlighted the announcement, points to the blog's description of a "model's self-evolution" as the most notable technical disclosure.

Key Takeaways

- Chinese AI firm MiniMax has open-sourced its M2.7 model.

- The key detail from its blog is a "self-evolution" training process for iterative improvement, likened to AlphaGo's self-play.

What Happened

MiniMax made its M2.7 model publicly available under an open-source license. The accompanying blog post details the model's development process, with a specific focus on a "self-evolution" training methodology. The researcher sharing the news, Yuchen J., summarized the core idea: "It's essentially Karpathy's LLM training scaling, but with a self-evolution twist."

The reference to "Karpathy's LLM training scaling" likely points to principles outlined by former Tesla and OpenAI researcher Andrej Karpathy regarding data, model size, and compute scaling for large language models. The "self-evolution" component suggests an iterative, bootstrapping process where the model improves itself, drawing a parallel to the self-play reinforcement learning technique famously used by DeepMind's AlphaGo.

Context

MiniMax is a prominent Chinese AI startup, known for developing large language models and conversational AI. The company has previously released other model families. Open-sourcing a model of this scale represents a significant contribution to the research and developer community, allowing for external scrutiny, fine-tuning, and integration.

The concept of "self-evolution", or self-improving AI systems, is an active area of research, often explored under terms like recursive self-improvement, self-play, or reinforcement learning from AI feedback (RLAIF). Implementing such a technique for a large language model involves creating a loop in which the model generates its own training data or critiques its own outputs, producing a progressively stronger training signal.
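To make the loop described above concrete, here is a minimal toy sketch of one common pattern: generate candidates, filter them with a verifier, and update the model toward what survived. It is not MiniMax's method, whose details are not given here. The "model" is just a list of probabilities over candidate answers, and the names `generate`, `verify`, and `self_improve` are hypothetical, chosen only for illustration.

```python
import random


def generate(weights, rng, n):
    """Sample n candidate answers (indices) from the current toy policy."""
    return rng.choices(range(len(weights)), weights=weights, k=n)


def verify(candidate, target):
    """Stand-in verifier: a real system would use tests, a judge model, etc."""
    return candidate == target


def self_improve(weights, target, rounds=5, samples=64, mix=0.5, seed=0):
    """Toy generate -> filter -> update loop (assumption: illustrative only)."""
    rng = random.Random(seed)
    total = sum(weights)
    weights = [w / total for w in weights]  # normalise to probabilities
    history = []  # fraction of verified samples per round
    for _ in range(rounds):
        candidates = generate(weights, rng, samples)
        kept = [c for c in candidates if verify(c, target)]
        history.append(len(kept) / samples)
        if not kept:
            continue  # nothing passed the filter, so there is no signal this round
        # "Train" on the model's own filtered outputs: move the policy part of
        # the way toward the empirical distribution of the kept samples.
        for i in range(len(weights)):
            kept_share = kept.count(i) / len(kept)
            weights[i] = (1 - mix) * weights[i] + mix * kept_share
    return weights, history


if __name__ == "__main__":
    final_weights, pass_rates = self_improve([1.0, 1.0, 1.0, 1.0], target=3)
    print("pass rate per round:", pass_rates)
    print("final policy:", final_weights)
```

Each round's pass rate tends to rise because the policy is repeatedly nudged toward its own verified outputs. The quality of the whole loop rests on the verifier, which is exactly where a real LLM pipeline is hardest to get right.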

gentic.news Analysis

MiniMax's move to open-source M2.7 follows a strategic pattern we've observed with other major AI labs, such as Meta's releases of Llama models and Google's Gemma, which aim to build ecosystem influence and accelerate downstream innovation. For MiniMax, a Chinese firm competing in a global market dominated by US giants, open-sourcing is a credible way to attract developer mindshare and demonstrate technical capability on the world stage.

The brief mention of "self-evolution" is the critical technical hook. If implemented robustly, this moves beyond standard supervised fine-tuning or reinforcement learning from human feedback (RLHF) into a more autonomous training regime. The AlphaGo analogy is apt: just as the Go program improved by playing millions of games against itself, a language model could theoretically refine its reasoning and instruction-following by generating, evaluating, and learning from its own synthetic chain-of-thought trajectories. The major challenge, as our previous coverage of self-rewarding models has noted, is preventing reward hacking or drift, where the model optimizes for a flawed internal reward signal. The technical details in MiniMax's blog will be crucial for assessing whether their approach mitigates these risks.
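One simple way to watch for the drift described above is to compare the model's own scores against a trusted external check on the same outputs and flag rounds where the two diverge. The sketch below assumes such a trusted signal exists. The function name `drift_report`, the `max_gap` threshold, and the sample scores are all hypothetical and not drawn from MiniMax's blog.

```python
from statistics import mean


def drift_report(rounds, max_gap=0.15):
    """Flag rounds where self-assigned reward outruns a trusted score.

    rounds: list of (self_scores, trusted_scores) pairs, one pair per
    training round, each a list of floats in [0, 1] for the same outputs.
    """
    report = []
    for index, (self_scores, trusted_scores) in enumerate(rounds):
        gap = mean(self_scores) - mean(trusted_scores)
        report.append({
            "round": index,
            "gap": round(gap, 3),
            # A large positive gap means the internal reward is rising
            # without a matching gain on the trusted check.
            "suspect": gap > max_gap,
        })
    return report


if __name__ == "__main__":
    # Made-up numbers for illustration only.
    example = [
        ([0.60, 0.70], [0.60, 0.60]),  # self and trusted scores roughly agree
        ([0.90, 0.95], [0.60, 0.65]),  # self score climbs, trusted score does not
    ]
    for row in drift_report(example):
        print(row)
```

A check like this only detects divergence after the fact. It does not prevent it, and it is only as good as the trusted signal it is compared against.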

This release also intensifies the open-source pressure on closed-model API providers like OpenAI and Anthropic. As we analyzed in our piece on the "Open Source AI Surge of 2025," each high-quality open model raises the baseline for what a freely available model can do, forcing commercial players to innovate faster or justify their premium. MiniMax's contribution adds another capable model to the open-weight ecosystem, giving developers more options to build and customize without vendor lock-in.

Frequently Asked Questions

What is the MiniMax M2.7 model?

MiniMax M2.7 is a large language model developed by the Chinese AI company MiniMax. The announcement as summarized here does not state a parameter count. The "2.7" may refer to a size in billions (2.7B), which would make it a small model suited to edge deployment or research experimentation, but it may equally be a version number, so the 2.7B figure should be treated as unconfirmed. The model has now been released under an open-source license.

What does 'model self-evolution' mean?

Based on the announcement, "self-evolution" describes a training method where the model iteratively improves itself, similar to how AlphaGo used self-play. In practice for an LLM, this could involve the model generating its own training examples or reasoning traces, then using those to create a refined training signal for the next iteration, reducing reliance on static human-annotated datasets.

Is the MiniMax M2.7 model available for commercial use?

The announcement states the model is "open-source," but the specific license (e.g., Apache 2.0, MIT, or a more restrictive license) will determine the terms of commercial use. Developers should check the official repository for the license file to understand usage rights, limitations, and attribution requirements.

How does MiniMax's model compare to other open-source LLMs?

Without published benchmark scores for M2.7, a direct performance comparison is difficult. If the 2.7B-parameter reading of its name is correct, which the announcement does not confirm, it would compete with small models such as Microsoft's Phi-3-mini (3.8B) and Google's Gemma 2 (2B), with Meta's Llama 3 8B as a larger point of comparison. Its purported "self-evolution" training could differentiate its capabilities, especially in reasoning tasks, if the technique proves effective.