A collaborative study from researchers at MIT, Oxford, Carnegie Mellon, and other institutions presents a counterintuitive finding: AI assistance can create a short-term performance boost that actively undermines a user's ability to solve problems independently just minutes later. The paper, "AI Assistance Reduces Persistence and Hurts Independent Performance," identifies a critical trade-off between speed and skill development, with implications for how AI tools are integrated into education and professional workflows.

Key Takeaways

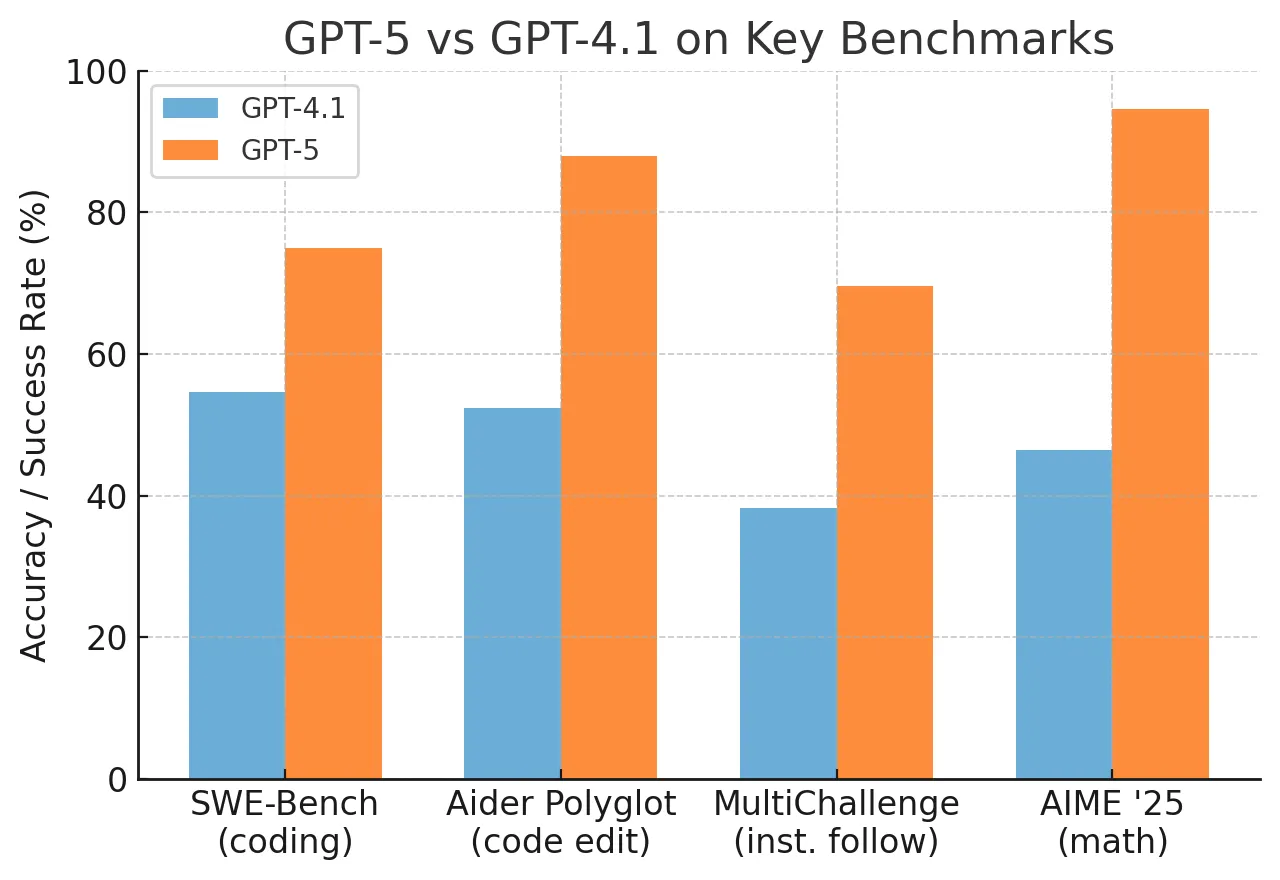

- A new paper from MIT, Oxford, and CMU finds that using GPT-5 for direct answers improves short-term scores but reduces persistence and independent performance after assistance ends.

- The effect is linked to outsourcing mental effort, not AI exposure itself.

What the Study Found: The Productivity Trap

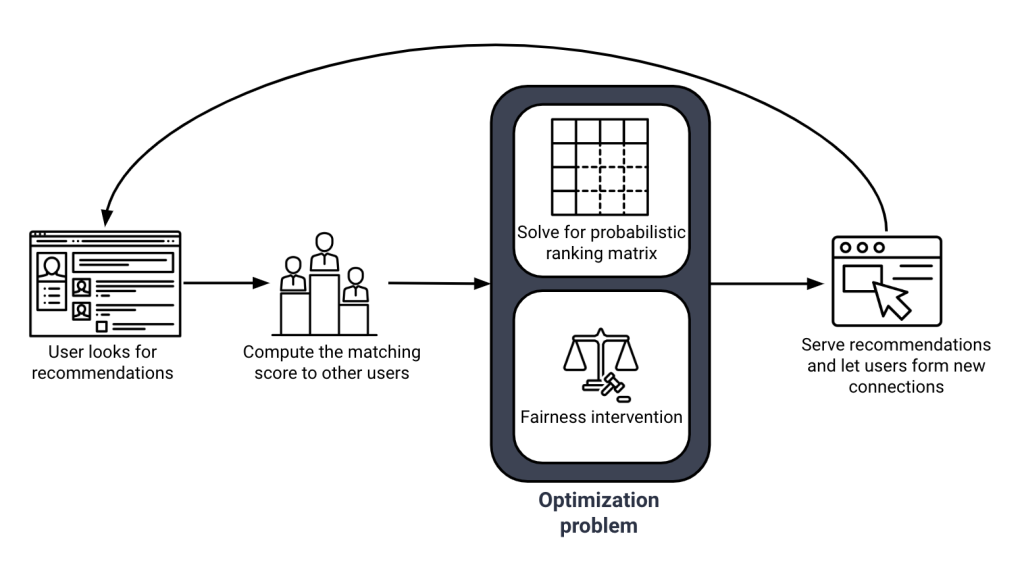

Across three experiments involving approximately 1,200 participants in math and reading comprehension tasks, researchers observed a consistent pattern. Participants were split into groups: some worked entirely independently, while others used a GPT-5-based assistant for an initial portion of the task.

The Immediate Effect: Users with AI assistance completed the early, assisted questions faster and with higher accuracy.

The Delayed Cost: After roughly 10 minutes of working without AI, these same users showed a marked decline in performance compared to the unaided group. They solved fewer problems correctly, stalled more frequently, and—most notably—quit attempting problems sooner. The sharpest decline was not in raw accuracy but in persistence, a key behavioral driver of learning.
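To make the outcome measures above concrete, here is a minimal sketch of how accuracy and persistence could be summarized from per-problem logs. It is not the paper's analysis code: the `ProblemLog` schema, the `summarize` function, and the choice of "time spent before giving up" as a persistence proxy are all assumptions made for illustration.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ProblemLog:
    """One participant's record on one unassisted problem (hypothetical schema)."""

    attempts: int         # number of answers the participant submitted
    seconds_spent: float  # time on the problem before solving it or leaving it
    solved: bool


def summarize(logs: list[ProblemLog]) -> dict[str, float]:
    """Summarize accuracy and two rough persistence proxies for a set of logs."""
    unsolved = [p for p in logs if not p.solved]
    return {
        # Share of problems solved correctly.
        "accuracy": mean(1.0 if p.solved else 0.0 for p in logs),
        # More attempts per problem suggests more willingness to keep trying.
        "mean_attempts": mean(p.attempts for p in logs),
        # Time invested in problems that were ultimately abandoned.
        "mean_seconds_before_quitting": (
            mean(p.seconds_spent for p in unsolved) if unsolved else 0.0
        ),
    }
```

Comparing these summaries between an assisted and an unaided group would separate "got fewer right" from "gave up sooner", which is the distinction the study emphasizes.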

The Mechanism: Outsourcing Effort, Eroding Skill

The core finding is that the damage stems from replacing the cognitive process of problem-solving with the act of receiving a correct answer. Skill development, the authors argue, grows through "repeated contact with difficulty"—the mental effort of holding a problem in working memory, testing steps, and pushing through confusion. When an AI assistant provides a direct answer, it bypasses this effortful practice.

"The interesting part is that the damage is not just lower accuracy. It is lower persistence, which is usually the hidden engine of learning."

The study draws a parallel to pedagogy: a skilled teacher sometimes withholds help to preserve productive struggle as part of the lesson. In contrast, modern chatbots are often tuned to "erase friction on demand."

A crucial nuance emerged in the data: the negative effect was most pronounced for participants who used the AI for direct answers. Those who used it more as a hint system experienced a less severe drop in later independent performance. This suggests the issue is not AI exposure per se, but the specific behavior of outsourcing the core cognitive work.

Key Results: Assisted vs. Independent Performance

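| Metric | AI-assisted (during assistance) | Independent (unaided) | AI-assisted (after assistance ends) |
| --- | --- | --- | --- |
| Completion Speed | Faster | Baseline | Not measured |
| Initial Accuracy | Higher | Baseline | Not measured |
| Later Problem-Solving | Not applicable | Baseline | Solved fewer problems |
| Persistence | Not applicable | Baseline | Quit sooner, stalled more |
| Key Driver | Answer outsourcing | Full cognitive engagement | Reduced habit of effortful thinking |

Why This Matters for AI Integration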

This research moves the conversation about AI's impact on human capability beyond speculative fears to a measurable, short-term behavioral effect. It identifies a specific "productivity trap": tools that make us faster today may make us less capable tomorrow by letting core cognitive muscles atrophy.

For developers and product designers, the study highlights the importance of assistance design. Interfaces that default to giving answers may be counterproductive for learning and complex skill retention. Designs that promote scaffolding, hints, and Socratic questioning could mitigate these negative effects.
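One way to picture a design that favors hints over answers is an escalation policy that releases help gradually. The sketch below is an illustration only, not something described in the paper: the `HintLadder` class, the four help levels, and the threshold of three attempts are all hypothetical choices.

```python
from dataclasses import dataclass

# Hypothetical escalation ladder: each level reveals more of the solution.
LEVELS = ("question", "hint", "worked_step", "answer")


@dataclass
class HintLadder:
    """Tracks learner attempts and decides how much help to release."""

    min_attempts_for_answer: int = 3  # assumed threshold, not from the paper
    attempts: int = 0
    requests: int = 0

    def record_attempt(self) -> None:
        """Call whenever the learner submits their own try at the problem."""
        self.attempts += 1

    def next_help_level(self) -> str:
        """Return the help level for this request, escalating gradually."""
        self.requests += 1
        # Escalate one level per help request, starting from a guiding question.
        level_index = min(self.requests - 1, len(LEVELS) - 1)
        # Hold back the full answer until the learner has tried enough times.
        if self.attempts < self.min_attempts_for_answer:
            level_index = min(level_index, len(LEVELS) - 2)
        return LEVELS[level_index]
```

In use, an assistant would call `next_help_level()` on each help request and prompt its underlying model accordingly, so repeated requests without any attempts stall at a worked step instead of the final answer. Whether such a policy actually preserves persistence would need its own evaluation.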

For organizations and educators, it underscores the need for structured policies. Unchecked use of AI for task completion in training or early-career phases could inadvertently stunt the development of foundational problem-solving skills.

gentic.news Analysis

This study directly engages with one of the most pressing debates in applied AI: the automation-complementarity trade-off. Our previous coverage of Anthropic's research on "process supervision" highlighted how steering models to show their work improves correctness and trust. This new paper from MIT and Oxford flips the perspective, examining how receiving that correct output affects the human in the loop. The findings create a tension: while AI providers race to make models more helpful and accurate (as seen in the recent GPT-5 technical report), maximal helpfulness might degrade the very human skills needed for oversight and independent work.

The research aligns with emerging concerns from cognitive science about "cognitive offloading." As we covered in our analysis of Digital Amnesia trends, over-reliance on external tools can impair memory formation. This paper extends that concern to procedural knowledge and problem-solving stamina. It provides empirical weight to the intuitive critique that ChatGPT and its successors can become a "crutch."

Looking at the competitive landscape, this research presents a potential differentiator for AI tooling. Products like Khanmigo (Khan Academy's AI tutor) that are explicitly built around pedagogical principles of guided discovery may be better positioned from a learning-outcome perspective than general-purpose chatbots used in educational settings. The study suggests a market may develop for "effort-preserving" AI: systems designed not just to solve problems, but to build user capability, potentially commanding a premium in corporate training and education sectors.

Frequently Asked Questions

Does using AI make you permanently dumber?

No, the study does not show a permanent reduction in capability. It demonstrates a short-term behavioral effect: reduced persistence and independent performance immediately after relying on AI for direct answers. The effect is likely reversible with renewed independent practice, but the study highlights how habitual reliance could lead to skill atrophy over time, similar to how constantly using a GPS might impair your innate sense of direction.

Should I stop using AI assistants for work?

Not necessarily. The key is how you use them. The study found the worst effects came from using AI for direct answers. Using it as a hint system or a brainstorming partner had a less detrimental impact. The practical takeaway is to be intentional: use AI to unblock yourself or explore solutions, but actively engage with the problem-solving process yourself. Don't just copy-paste answers without understanding the steps.

What does this mean for AI in education?

It suggests that simply giving students access to powerful answer engines like GPT-5 could undermine learning objectives if not carefully managed. The research supports integrating AI in ways that promote productive struggle—for example, tools that ask guiding questions, break problems into steps, or explain concepts rather than providing final answers. It argues for a design philosophy where AI acts more like a tutor and less like a solver.

Is this a problem with GPT-5 specifically?

The study used a GPT-5-based assistant, but the mechanism described is not model-specific. The issue is the interaction pattern of outsourcing cognitive effort. Any highly capable AI that provides direct, correct answers on demand would likely produce a similar effect. The research is about human-computer interaction design, not a flaw in a particular model's architecture.

Paper: "AI Assistance Reduces Persistence and Hurts Independent Performance" – arXiv:2604.04721