What Happened: The Silent Degradation of Benchmarks

The article highlights a pervasive but often overlooked problem in AI development: evaluation drift. Benchmarks—the standardized datasets and metrics used to measure and compare AI model performance—are not static. Over time, they quietly "rot," losing their validity and becoming poor proxies for real-world performance. The author outlines seven specific mechanisms through which this decay occurs, warning that once-trustworthy evaluations can start steering teams toward bad decisions if left unchecked.

The source excerpt does not list all seven mechanisms, but the core premise is clear. Benchmarks can suffer from data distribution shift (the data a model encounters in production drifts away from the data the benchmark was built from), overfitting to the test set (models are tuned to the benchmark itself rather than the underlying task), and concept drift (the definition of a "correct" answer evolves, but the benchmark's labels do not). In each case the score holds steady or improves while real-world quality changes, which creates a dangerous illusion of progress or stability.
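One way to check the first of these mechanisms, distribution shift between a benchmark and live traffic, is the population stability index (PSI). The following is a minimal sketch rather than a method from the article: the category labels, the counts, and the 0.2 alert threshold (a common rule of thumb) are assumptions for illustration.

```python
import math
from collections import Counter

def psi(benchmark_labels, production_labels, eps=1e-6):
    """Population stability index between two non-empty categorical samples.

    PSI = sum over categories of (p - q) * ln(p / q), where q is the
    benchmark share and p is the production share of each category.
    eps keeps the log finite when a category is missing on one side.
    """
    bench = Counter(benchmark_labels)
    prod = Counter(production_labels)
    n_bench, n_prod = len(benchmark_labels), len(production_labels)
    total = 0.0
    for cat in set(bench) | set(prod):
        q = max(bench[cat] / n_bench, eps)
        p = max(prod[cat] / n_prod, eps)
        total += (p - q) * math.log(p / q)
    return total

# Hypothetical product-category mix: benchmark vs. recent production traffic.
benchmark = ["bags"] * 50 + ["shoes"] * 30 + ["scarves"] * 20
production = ["bags"] * 20 + ["shoes"] * 30 + ["sneakers"] * 50

score = psi(benchmark, production)
needs_refresh = score > 0.2  # assumed rule-of-thumb threshold, not from the article
```

A PSI near zero means the benchmark still resembles production on that feature; a large value suggests the test set should be re-sampled before its scores are trusted.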

Technical Details: Understanding the Rot

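Evaluation drift is a facet of the broader MLOps challenge of maintaining models in production. It is related to, but distinct from, model drift (where a model's performance degrades on live data) and data drift (where the input data distribution changes). Those terms describe the model and its inputs; eval drift is about the measuring stick itself becoming warped. Data drift can cause eval drift when the benchmark is not updated to match the new inputs.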

Strong engineering teams, the article suggests, don't treat benchmarks as one-time pass/fail gates. Instead, they treat model evaluation as a continuous, living process. This involves:

- Proactive Benchmark Maintenance: Regularly auditing benchmark datasets for relevance, potential biases, and alignment with current real-world conditions.

- Dynamic Test Set Creation: Supplementing or periodically refreshing static benchmarks with new, curated data that reflects the latest trends and edge cases.

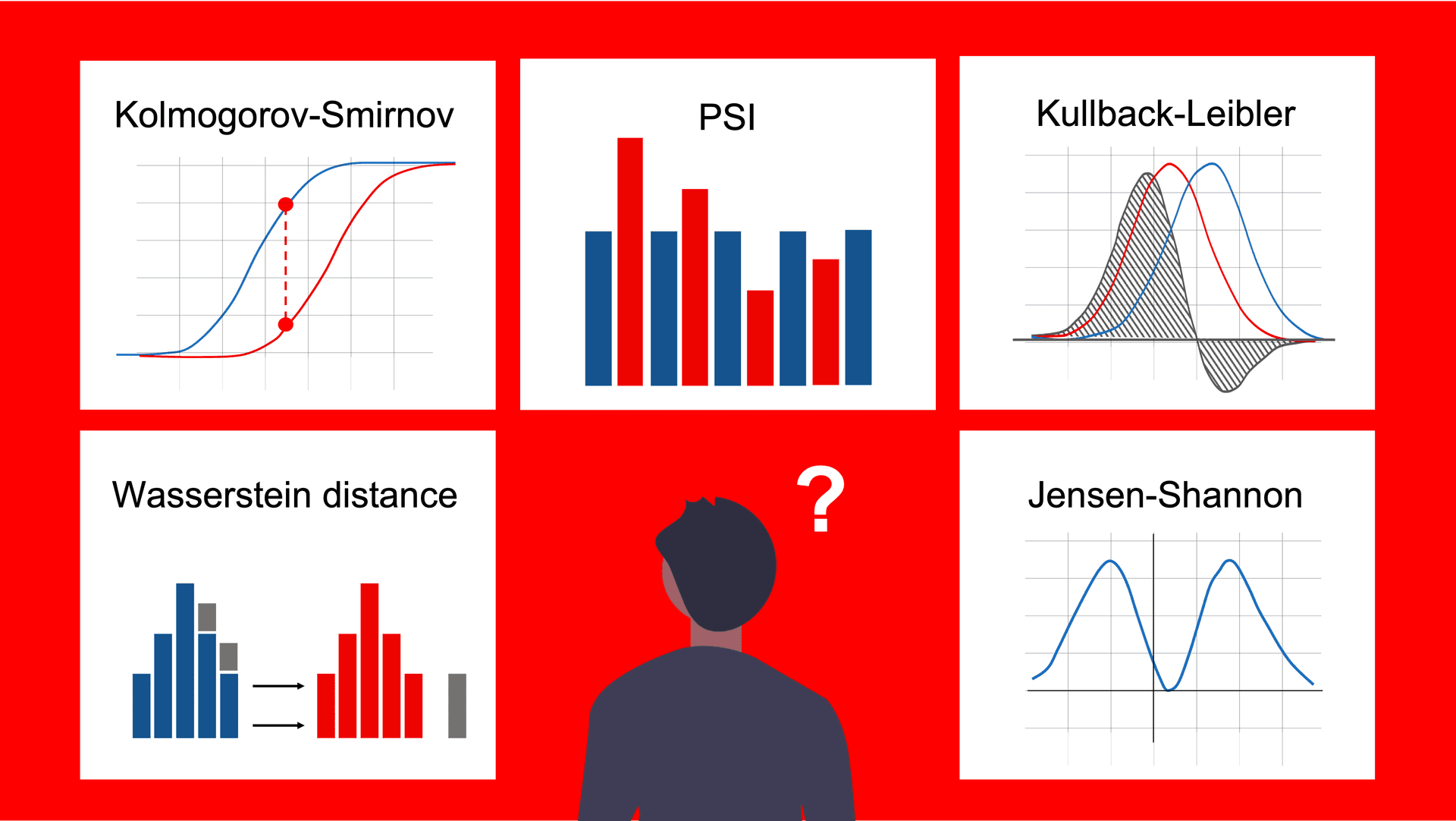

- Multi-Faceted Evaluation: Moving beyond a single aggregate score (like accuracy or F1) to a dashboard of metrics, including real-world A/B test results, qualitative human reviews, and business KPIs.
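A simple check in the spirit of the test-set refresh practice above is to score the same model on the static benchmark and on a freshly labeled sample, then ask whether the gap is larger than sampling noise. This is a sketch under assumptions: the counts are invented, and the two-proportion z-test with a 1.96 cutoff is one reasonable choice, not something the article prescribes.

```python
import math

def accuracy_gap_z(correct_static, n_static, correct_fresh, n_fresh):
    """Two-proportion z statistic: static-benchmark accuracy minus fresh-sample accuracy."""
    p_static = correct_static / n_static
    p_fresh = correct_fresh / n_fresh
    pooled = (correct_static + correct_fresh) / (n_static + n_fresh)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_static + 1 / n_fresh))
    if se == 0:
        # Both samples are all-correct or all-wrong, so there is no gap to test.
        return 0.0
    return (p_static - p_fresh) / se

# Hypothetical counts: 92% on the old benchmark, 82% on newly curated data.
z = accuracy_gap_z(920, 1000, 410, 500)
benchmark_looks_stale = z > 1.96  # assumed cutoff (roughly 95% confidence)
```

A large positive z suggests the model is doing better on the frozen benchmark than on current data, which is the signature of a stale or overfit test set.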

The failure mode is clear: a team ships a model because it achieves a new state-of-the-art score on an academic leaderboard, only to find it performs poorly in the nuanced, messy environment of a live application. The benchmark gave a false signal because it no longer correlated with reality.
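Whether a benchmark still correlates with reality can be checked directly once several model versions have both an offline score and an online outcome. The sketch below computes a Spearman rank correlation in plain Python; the version data and the choice of conversion lift as the online metric are illustrative assumptions, and ties are not handled.

```python
def ranks(values):
    """Rank values from 1 (smallest) upward; assumes no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, idx in enumerate(order, start=1):
        result[idx] = rank
    return result

def spearman(xs, ys):
    """Spearman rank correlation for two equal-length, tie-free sequences."""
    n = len(xs)
    rx, ry = ranks(xs), ranks(ys)
    d2 = sum((a - b) ** 2 for a, b in zip(rx, ry))
    return 1 - 6 * d2 / (n * (n * n - 1))

# Hypothetical history of five model versions.
offline_f1 = [0.81, 0.83, 0.86, 0.88, 0.90]   # benchmark score per version
online_lift = [1.2, 1.5, 1.4, 0.9, 0.6]       # conversion lift (%) per version

rho = spearman(offline_f1, online_lift)
```

With this made-up history the correlation is negative: later versions score higher offline but perform worse online, which is the false signal described above.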

Retail & Luxury Implications: When Your AI Metrics Lie

For retail and luxury AI practitioners, the implications of eval drift are particularly acute. The domain is defined by rapid shifts in trends, consumer language, visual aesthetics, and purchasing behavior. A benchmark frozen in time is almost guaranteed to decay.

Concrete Scenarios of Drift:

- Visual Search & Recommendation: A model benchmarked on 2023 fashion imagery may excel at identifying "quiet luxury" silhouettes but fail on trends that emerge in 2025. Because the benchmark's test set contains none of the new styles, the score stays stable while the model's real-world utility plummets.

- Sentiment & Customer Intent Analysis: The language and slang used in customer reviews and service chats evolve quickly. A sentiment model benchmarked on last year's data may misinterpret new phrases, leading to inaccurate insights into customer satisfaction or emerging product issues.

- Personalization Engines: A recommendation system evaluated on historical purchase data may optimize for past correlations that no longer hold (e.g., a macroeconomic shift changes purchasing power, or a viral social media trend creates new, non-intuitive product affinities).

- Virtual Try-On & AR: A benchmark built from a fixed set of body poses and lighting conditions does not reflect the diverse, unpredictable environments of end users. Strong performance on the benchmark suite may not translate into a smooth customer experience.
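For the language-drift scenario, a rough early-warning signal is the share of production tokens that never appear in the benchmark text. This is a minimal sketch, assuming whitespace tokenization and invented example reviews; a real pipeline would use the model's own tokenizer.

```python
def vocabulary(texts):
    """Set of lowercase whitespace-separated tokens seen in the texts."""
    return {tok for text in texts for tok in text.lower().split()}

def oov_rate(benchmark_texts, production_texts):
    """Fraction of production tokens that are absent from the benchmark vocabulary."""
    vocab = vocabulary(benchmark_texts)
    tokens = [tok for text in production_texts for tok in text.lower().split()]
    if not tokens:
        return 0.0
    unseen = sum(1 for tok in tokens if tok not in vocab)
    return unseen / len(tokens)

# Hypothetical review snippets.
benchmark_reviews = ["love this bag", "the bag is too small"]
production_reviews = ["this bag is giving quiet luxury", "love the bag"]

rate = oov_rate(benchmark_reviews, production_reviews)
```

Tracked week over week, a rising out-of-vocabulary rate suggests the benchmark's language is aging even if the benchmark score itself has not moved.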

What Strong Retail AI Teams Should Do:

The article's advice translates directly to retail operations:

- Instrument Everything: Beyond offline benchmark scores, instrument your production AI features to log key performance indicators (conversion lift, engagement time, return rates attributed to recommendations). This live data is your most important benchmark.

- Establish a Human-in-the-Loop (HITL) Review Cadence: Regularly sample the inputs and outputs of your production models for qualitative review by merchandisers, brand experts, and customer service leads. They will spot drift—a weird recommendation, a mis-categorized product—long before a numeric metric will.

- Curate Domain-Specific "Challenge Sets": Build and continuously update internal evaluation datasets that reflect your brand's unique challenges: new product categories, seasonal campaigns, known edge cases (e.g., differentiating between similar leather grains), and potential failure modes from past incidents.
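The challenge-set idea can be sketched as a tagged evaluation set scored per slice, so that a regression on one edge case is not hidden by the aggregate. Everything here is hypothetical: the `ChallengeExample` type, the tags, the toy `predict` function, and the 0.8 threshold are illustrative assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class ChallengeExample:
    text: str
    expected: str
    tag: str  # slice name, e.g. a product category or a past incident

def accuracy_by_tag(examples, predict):
    """Accuracy of `predict` on each tagged slice of the challenge set."""
    hits, totals = defaultdict(int), defaultdict(int)
    for ex in examples:
        totals[ex.tag] += 1
        hits[ex.tag] += int(predict(ex.text) == ex.expected)
    return {tag: hits[tag] / totals[tag] for tag in totals}

def failing_slices(report, threshold=0.8):
    """Slices whose accuracy falls below the (assumed) threshold."""
    return sorted(tag for tag, acc in report.items() if acc < threshold)

def predict(text):
    # Toy stand-in for a real product classifier.
    return "leather" if "grain" in text else "textile"

challenge_set = [
    ChallengeExample("pebbled grain tote", "leather", "leather_grains"),
    ChallengeExample("saffiano grain wallet", "leather", "leather_grains"),
    ChallengeExample("smooth calfskin clutch", "leather", "new_season"),
    ChallengeExample("silk twill scarf", "textile", "new_season"),
]

report = accuracy_by_tag(challenge_set, predict)
to_review = failing_slices(report)
```

Slices returned by `failing_slices` are natural candidates for the human review cadence described above, and new examples from each incident can be appended to the set under their own tag.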

Ignoring eval drift risks wasting significant resources on model iterations that look good on paper but deliver no business value or, worse, degrade the customer experience. The solution is to shift from a static, project-based evaluation mindset to a dynamic, product-based monitoring discipline.