A persistent challenge in AI agent research is building systems that interact reliably with real-world environments such as computer terminals. Large language models (LLMs) have demonstrated strong capabilities in text generation and reasoning, but translating those abilities into consistent, actionable terminal operations has proven difficult. Researchers from NVIDIA have now introduced Nemotron-Terminal, a systematic data engineering pipeline designed to scale LLM-based terminal agents, addressing what they identify as a critical gap in current methodologies.

The Terminal Agent Challenge

Terminal agents are AI systems that can execute commands, navigate file systems, and perform tasks within command-line interfaces, and they represent a frontier in practical AI deployment. They promise to automate complex workflows, assist developers, and manage systems. However, their development has been hampered by the limited quality and scalability of training data. Most approaches rely on small, often synthetic datasets that fail to capture the complexity, noise, and unpredictability of real terminal sessions. The result is agents that perform well in controlled benchmarks but struggle with generalization and reliability in production environments.

Nemotron-Terminal is a response to this bottleneck. As highlighted in the research announcement shared by HuggingFace Papers, the pipeline is engineered to "bridge the gap between raw terminal data and high-quality training datasets." It is not a new model architecture but an infrastructure effort aimed at the data layer, where many AI systems ultimately succeed or fail.

How the Nemotron-Terminal Pipeline Works

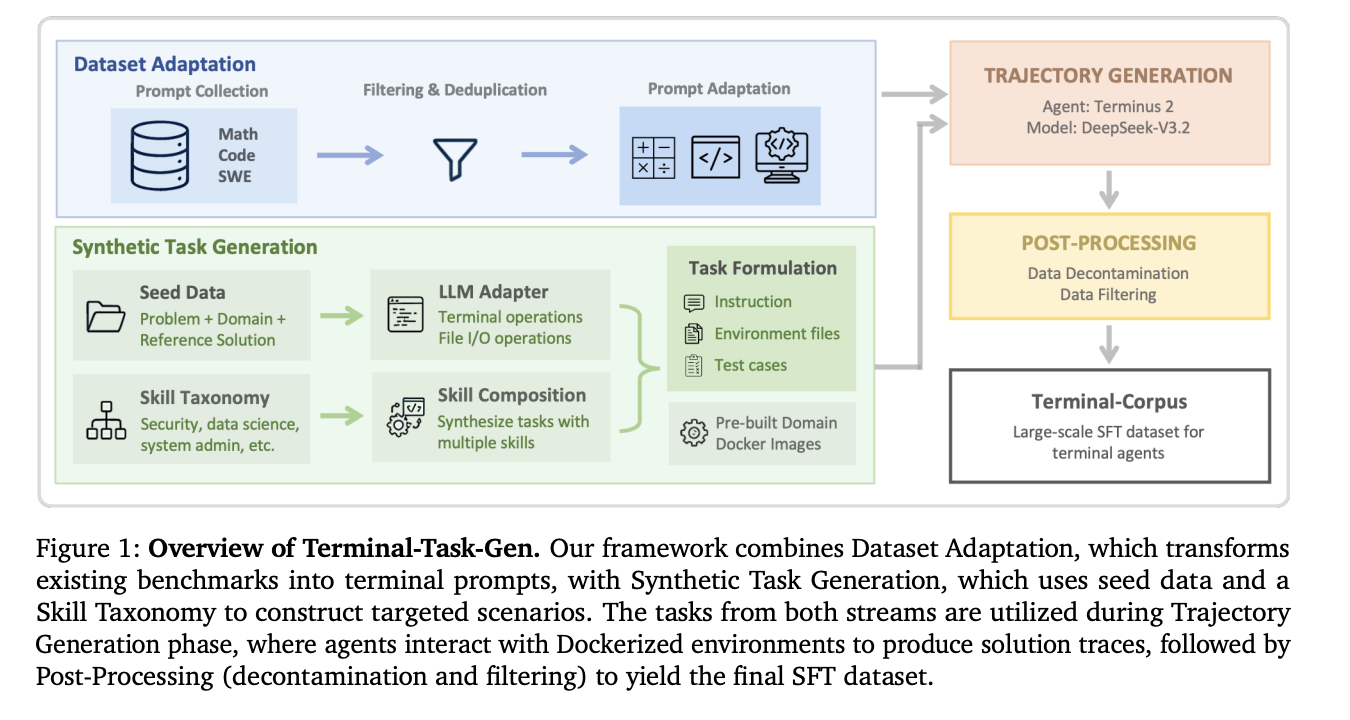

The core innovation of Nemotron-Terminal lies in its systematic approach to data curation and synthesis. The source material provides only a high-level overview, but the stated goal is to transform raw, unstructured terminal interaction logs (which can be messy, redundant, or incomplete) into structured, diverse, instruction-following training examples suitable for LLMs.

Key components of the pipeline likely include:

- Data Collection & Filtering: Aggregating terminal sessions from diverse sources (e.g., developers, sysadmins, open-source projects) and implementing rigorous filtering to remove sensitive information, noise, and low-quality interactions.

- Instruction Synthesis: Automatically generating natural language instructions that correspond to the observed terminal commands and outputs. This step is crucial for training LLMs to understand user intent.

- Trajectory Augmentation: Artificially expanding the dataset by creating variations of successful command sequences, simulating errors and recoveries, and introducing edge cases to improve robustness.

- Quality & Safety Alignment: Implementing checks to ensure the generated training data promotes helpful, harmless, and honest agent behavior, avoiding the execution of dangerous or destructive commands.

By treating data engineering as a first-class, systematic discipline, the Nemotron-Terminal pipeline aims to produce datasets that are far larger and more varied than those previously available, directly targeting the generalization problem.
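To make the four stages listed above more concrete, here is a minimal Python sketch of how such a pipeline might be wired together. It is an illustration under stated assumptions, not NVIDIA's implementation: the source gives no code, so every name here (`Step`, `Example`, `filter_session`, `synthesize_instruction`, `augment_with_recovery`, `is_safe`, `build_examples`) and both regular expressions are hypothetical. In particular, instruction synthesis would presumably call an LLM; a simple template stands in for it here.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Step:
    command: str
    output: str
    exit_code: int = 0


@dataclass
class Example:
    instruction: str
    steps: list[Step] = field(default_factory=list)


# Hypothetical patterns, chosen only for illustration.
SECRET_RE = re.compile(r"(password|token|api[_-]?key)\s*[=:]\s*\S+", re.IGNORECASE)
DANGEROUS_RE = re.compile(r"\brm\s+-rf\s+/(\s|$)|\bmkfs\b|\bdd\s+if=")


def filter_session(steps):
    """Stage 1: drop blank commands and redact secret-looking strings."""
    cleaned = []
    for s in steps:
        if not s.command.strip():
            continue
        cleaned.append(Step(SECRET_RE.sub("[REDACTED]", s.command.strip()),
                            SECRET_RE.sub("[REDACTED]", s.output),
                            s.exit_code))
    return cleaned


def synthesize_instruction(steps):
    """Stage 2: stand-in for an LLM call; a template over the tools used."""
    tools = sorted({s.command.split()[0] for s in steps})
    return "Complete the task using: " + ", ".join(tools)


def augment_with_recovery(steps):
    """Stage 3: prepend a simulated misspelled attempt, then the real steps."""
    if not steps:
        return []
    first = steps[0].command
    name = first.split()[0]
    if len(name) < 2 or name[0] == name[1]:
        return list(steps)
    bad = name[1] + name[0] + name[2:]  # swap the first two characters
    failed = Step(bad + first[len(name):], f"{bad}: command not found", 127)
    return [failed] + list(steps)


def is_safe(steps):
    """Stage 4: reject sessions containing obviously destructive commands."""
    return not any(DANGEROUS_RE.search(s.command) for s in steps)


def build_examples(raw_sessions):
    """Run all four stages and return original plus augmented examples."""
    examples = []
    for raw in raw_sessions:
        steps = filter_session(raw)
        if not steps or not is_safe(steps):
            continue
        instruction = synthesize_instruction(steps)
        examples.append(Example(instruction, steps))
        examples.append(Example(instruction, augment_with_recovery(steps)))
    return examples


if __name__ == "__main__":
    sessions = [
        [Step("export API_KEY=abc123", ""), Step("ls -la", "total 0")],
        [Step("rm -rf /", "")],  # dropped by the safety check
    ]
    for ex in build_examples(sessions):
        print(ex.instruction, [s.command for s in ex.steps])
```

In this sketch each surviving session yields two training examples: the cleaned trajectory and a variant that begins with a failed command followed by the recovery. A real pipeline would likely use far richer filters, model-generated instructions, and sandboxed execution to validate trajectories.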

Implications for AI Agent Development

The introduction of Nemotron-Terminal signals a strategic shift in AI agent research. For years, the focus has been predominantly on model scaling—making LLMs larger and more powerful. NVIDIA's work underscores a growing recognition that data scaling and quality are equally critical, especially for agents that operate in constrained, actionable environments like terminals.

This pipeline could dramatically accelerate the development of competent coding assistants, DevOps automation bots, and system management tools. By providing a reproducible, high-quality data foundation, it lowers the barrier for other research teams and companies to build and refine their own terminal agents, potentially leading to a wave of innovation in practical AI tools.

Furthermore, the systematic approach championed by Nemotron-Terminal may serve as a blueprint for other specialized agent domains. The principles of curating raw interaction data, synthesizing instructions, and augmenting trajectories could be adapted for training agents that interact with databases, graphical user interfaces (GUIs), or even robotic control systems.

The Road Ahead

As with any new research framework, the true test for Nemotron-Terminal will be in its adoption and the performance of the agents trained on its output. Key questions remain: How well do these agents perform on unseen, complex multi-step tasks? Can they safely handle ambiguity and user error? The research community will likely be watching for benchmarks and real-world evaluations.

NVIDIA's move also highlights the increasing importance of vertical integration in AI. By building the data pipeline (Nemotron-Terminal) to feed its AI models (likely built on its own hardware and software stack), NVIDIA is strengthening its ecosystem for developing and deploying enterprise-grade AI agents. This development is not just a technical contribution; it's a strategic one in the competitive landscape of AI infrastructure.

Source: Research announcement via HuggingFace Papers, citing work from NVIDIA researcher Renjie Pi.

The development of Nemotron-Terminal represents a step from theoretical agent capabilities toward practical, reliable tools. By tackling the data problem at scale, NVIDIA is not just building a better terminal agent; it is building the factory that produces them.