OpenAI President Greg Brockman has offered a brief, tantalizing glimpse into the company's pipeline, mentioning an upcoming model currently codenamed 'Spud'. The comment, made during a public appearance, provides no concrete specifications but frames the project as a significant culmination of research efforts.

What Happened

During a talk, Brockman was asked about OpenAI's future developments. In his response, he referenced "two years worth of research that is coming to fruition in this model," which the company internally calls 'Spud'. The remark was shared via a social media post by observer Rohan Paul. No further technical details—such as model architecture, scale, capabilities, or a release window—were disclosed. The codename 'Spud' follows OpenAI's tradition of using internal, often whimsical project names (like 'Strawberry' or 'Arrakis') before public release.

Context & Speculation

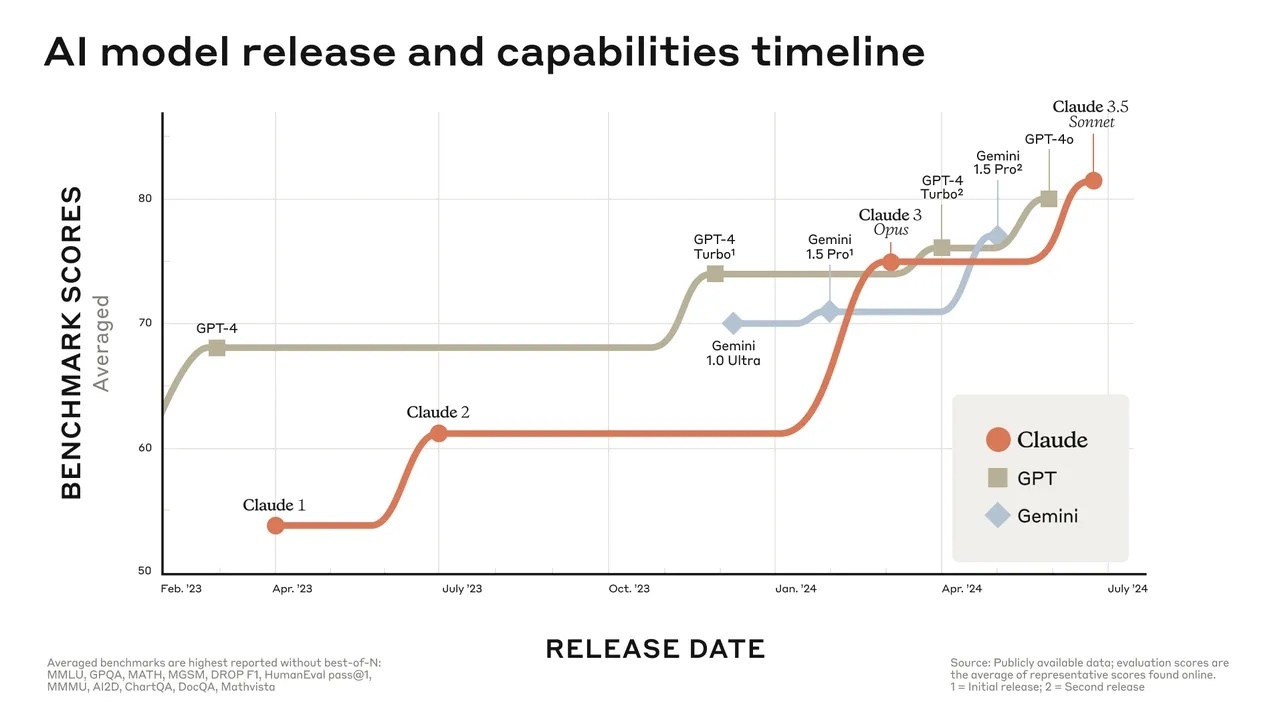

The mention of a two-year research cycle is notable. OpenAI's major model releases, like GPT-4 (2023) and the subsequent GPT-4 Turbo and o1 series, were built on research spanning multiple years. A two-year focused effort suggests 'Spud' could represent a substantial step rather than an incremental update. In the 2026 landscape, with rivals such as Anthropic's Claude 3.5 Sonnet, Google's Gemini 2.0, and Meta's Llama 3 series, OpenAI is under pressure to maintain its perceived leadership in frontier model development.

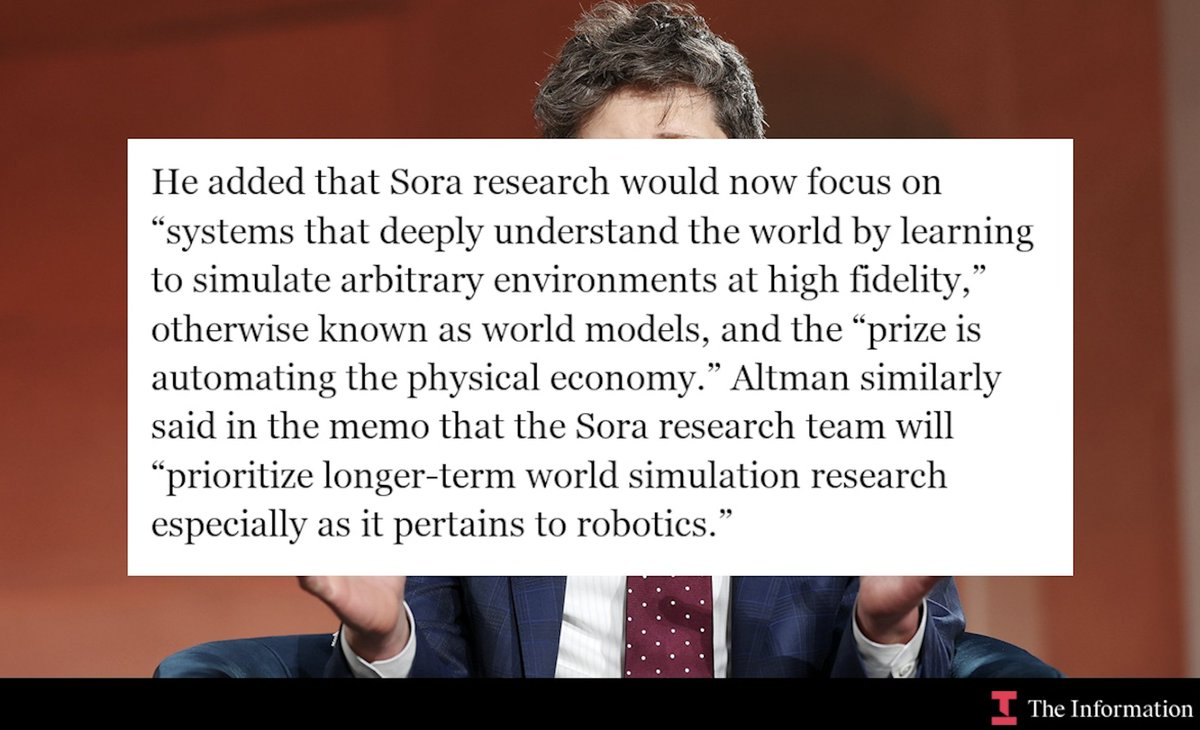

The nature of the research is unknown. It could pertain to fundamental advancements in reasoning, long-context understanding, multimodality, agentic capabilities, or training efficiency. The comment implies that disparate research threads from the past 24 months are being synthesized into a single, cohesive model.

What's Missing & What to Expect

Crucially, the remark offers nothing that would support a technical evaluation: no benchmarks, parameter counts, supported modalities, or API details. The statement is purely forward-looking. Historically, such high-level teases from OpenAI executives precede more detailed announcements or releases by weeks or months.

Practitioners should treat this as a signal of ongoing activity, not a product roadmap. The real information will come when OpenAI releases a research paper, a technical blog post, or API documentation with measurable capabilities.

gentic.news Analysis

This tease fits a clear pattern in OpenAI's communication strategy: using high-level executive comments to maintain market mindshare and signal continued momentum without committing to technical details. As we covered in our analysis of the GPT-4.5 leak rumors in late 2025, the company often operates under a veil of secrecy, making controlled, vague previews a key tool.

The two-year timeline is significant. It places the genesis of the core research behind 'Spud' around early-to-mid 2024, a period of intense competition following the release of GPT-4. This suggests OpenAI may have been pursuing a distinct architectural or training paradigm separate from the scaled-up transformer path. The mention of "research coming to fruition" also hints that some of the techniques described in recent research papers, such as improved reasoning, process supervision, or speculative decoding, may be integrated at scale.

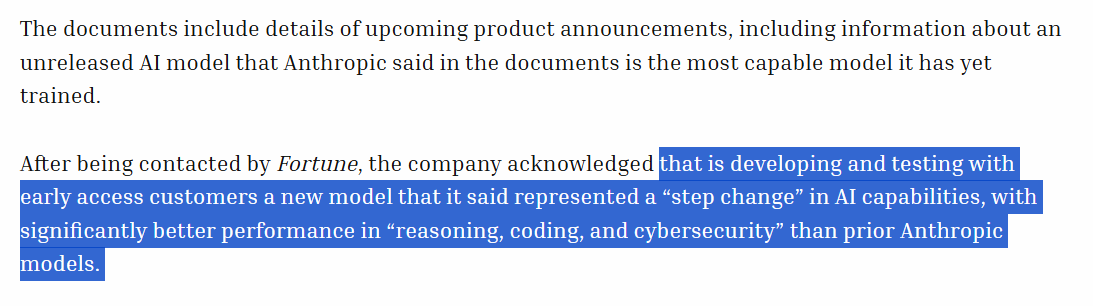

Furthermore, this aligns with the broader industry trend we identified in our 2026 AI Roadmap report, where major labs are shifting from pure scale to efficiency and reliability breakthroughs. If 'Spud' delivers, it will likely be evaluated against Anthropic's Claude 3.5 Sonnet, which set a high bar for coding and reasoning, and Google's Gemini 2.0, which excels in multimodal understanding. The pressure is on for 'Spud' to demonstrate not just incremental gains, but a qualitatively new level of capability or usability to justify its extended development cycle.

Frequently Asked Questions

What is the OpenAI 'Spud' model?

'Spud' is an internal codename for an upcoming OpenAI model. President Greg Brockman stated it represents "two years worth of research that is coming to fruition." No technical details, capabilities, or release date have been announced.

When will the OpenAI Spud model be released?

There is no official release timeline. Based on past patterns, a vague executive tease like this could precede a more detailed announcement or a release within a few months, but this is speculative. OpenAI has not committed to any date.

What does 'two years of research' mean for an AI model?

It suggests the model incorporates fundamental advancements from a sustained, multi-year research program, rather than being a quick iteration on an existing design. This could point to breakthroughs in architecture, training methods, or new capabilities like advanced reasoning or planning.

How does 'Spud' relate to GPT-5 or other OpenAI models?

The relationship is unknown. 'Spud' could be a codename for what will eventually be branded as a successor to GPT-4 (e.g., GPT-5), or it could be a specialized model for a specific capability like reasoning or agentic work. OpenAI often uses internal codenames that differ from final product names.