A report from an independent AI researcher, citing internal sources, claims OpenAI has made significant strategic shifts, including redirecting its Sora video generation team toward foundational world-model research and canceling the Sora product to allocate compute for a new large language model.

What the Report Claims

The report, shared by researcher @kimmonismus on X, outlines several key assertions about OpenAI's internal reorganization and priorities:

- Team Shift: The team behind Sora, OpenAI's high-profile text-to-video model, is now focused on world-model research, with a priority on longer-term simulation, particularly as it pertains to robotics applications.

- Product Cancellation: The Sora video model has reportedly been canceled. The stated reason is to free up the substantial computational resources required for training and inference to be used for a new, upcoming large language model.

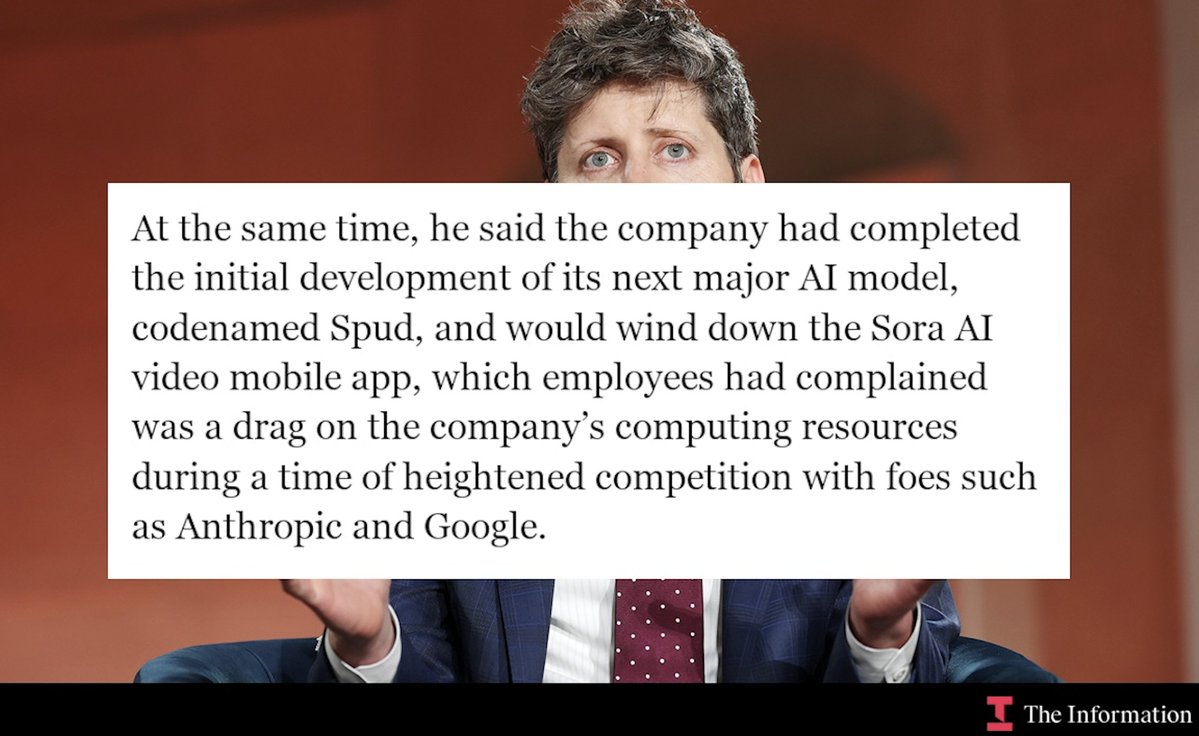

- New LLM & Reorg: The new LLM is internally codenamed "Spud" and is described as "very very strong" and something that "accelerates the economy." Its release is anticipated "in a few weeks." Concurrently, OpenAI's product organization has been renamed to "AGI Deployment."

- Executive Focus: CEO Sam Altman is said to be shifting his focus toward "raising capital, supply chains and 'building datacenters at unprecedented scale.'"

The source's interpretation is that these moves signal OpenAI is preparing for an eventual Initial Public Offering (IPO) and intends to formally declare the achievement of AGI beforehand.

Context and Unanswered Questions

OpenAI has not publicly commented on these claims. The Sora model, first unveiled in February 2024, was demonstrated generating highly realistic and physically plausible short video clips from text prompts. Its underlying architecture, which uses a diffusion transformer, was seen as a significant step toward models that understand and simulate real-world dynamics.

A pivot from a pure media-generation product to world-model research for robotics would represent a fundamental shift from a consumer-facing application to a core, foundational AI capability. World models are AI systems that learn an internal representation of an environment in order to predict its future states. That predictive ability is critical for planning and autonomous agent behavior, a key challenge in robotics and reinforcement learning.

The claim that Sora was canceled to reallocate compute is plausible given the immense resource demands of state-of-the-art model training. If true, it would highlight the intense internal competition for GPU clusters within major AI labs.

gentic.news Analysis

This report, if accurate, fits a clear pattern of strategic consolidation at OpenAI toward its stated AGI mission, even at the expense of flashy, publicly demonstrated products. The renaming of the product org to "AGI Deployment" is a stark, internal declaration of this priority. It follows a period where OpenAI has faced scrutiny over the balance between product commercialization (ChatGPT, GPT-4, etc.) and its original safety-focused, non-profit roots.

The pivot to world-models for robotics aligns with, and would likely accelerate, trends we've covered extensively. It connects to the research frontier embodied by Google DeepMind's RT-2 and other vision-language-action models, which aim to tie language models to physical reality. Furthermore, it contextualizes OpenAI's partnership with Figure AI, announced in February 2024, to develop AI models for humanoid robots, a move we analyzed as a bid to ground its models in the physical world. This shift suggests the Figure partnership may be more central to OpenAI's core research than a mere business development deal.

The reported focus on compute and infrastructure by Sam Altman is not new but appears to be intensifying. It is consistent with our previous reporting on the global scramble for AI chips and Altman's ambitions to raise trillions of dollars for semiconductor manufacturing. Canceling a compute-intensive model like Sora to feed a new LLM would underscore that, for OpenAI, the priority is scaling the core intelligence engine above all else. The description of "Spud" as an economy-accelerating model suggests a focus on complex, multi-step reasoning and agentic capabilities, moving beyond pure conversation.

Frequently Asked Questions

What is a "world-model" in AI?

A world-model is an AI system that learns a compressed, internal representation of an environment. It allows the AI to predict or simulate future states based on current actions without needing to interact with the real world at every step. This is a cornerstone of advanced planning and is considered essential for developing generally capable agents, especially in robotics, where real-world trial-and-error is slow and costly.
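To make the idea concrete, here is a minimal sketch of the general technique, not a description of Sora or of anything OpenAI has built. It assumes a toy one-dimensional environment (the hypothetical `true_step`), fits a linear model of that environment from randomly collected transitions, and then plans by simulating action sequences inside the learned model instead of the real environment. All function names, the action set, and the exhaustive-search planner are illustrative assumptions.

```python
import itertools
import random


def true_step(x, a):
    """Hidden toy dynamics (an assumption for this sketch); the planner never reads it."""
    return x + 0.5 * a


def collect_transitions(n, rng):
    """Gather (state, action, next_state) samples by acting randomly in the real environment."""
    data = []
    for _ in range(n):
        x = rng.uniform(-5.0, 5.0)
        a = rng.choice([-1.0, 0.0, 1.0])
        data.append((x, a, true_step(x, a)))
    return data


def fit_linear_model(data):
    """Least-squares fit of next_state ~ w_x * x + w_a * a via the 2x2 normal equations."""
    sxx = sum(x * x for x, a, y in data)
    sxa = sum(x * a for x, a, y in data)
    saa = sum(a * a for x, a, y in data)
    sxy = sum(x * y for x, a, y in data)
    say = sum(a * y for x, a, y in data)
    det = sxx * saa - sxa * sxa
    w_x = (sxy * saa - say * sxa) / det
    w_a = (say * sxx - sxy * sxa) / det
    return w_x, w_a


def plan(model, x0, goal, horizon=3, actions=(-1.0, 0.0, 1.0)):
    """Simulate every action sequence inside the model; return the first action of the best one."""
    w_x, w_a = model
    best_seq, best_cost = None, float("inf")
    for seq in itertools.product(actions, repeat=horizon):
        x = x0
        for a in seq:
            x = w_x * x + w_a * a  # imagined step, no real-world interaction
        cost = abs(x - goal)
        if cost < best_cost:
            best_seq, best_cost = seq, cost
    return best_seq[0]


if __name__ == "__main__":
    rng = random.Random(0)
    model = fit_linear_model(collect_transitions(200, rng))
    x = 0.0
    for _ in range(6):
        # Only the chosen action touches the real environment; the search happens in the model.
        x = true_step(x, plan(model, x, goal=2.0))
    print(round(x, 3))
```

The real environment is queried only to collect training data and to execute the single chosen action at each step. Every candidate future is evaluated inside the learned model, which is the property that makes world models attractive when real-world trials are slow or costly.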

Was OpenAI's Sora video model ever publicly released?

Yes. OpenAI first previewed Sora in February 2024, with access limited to a small group of red teamers, visual artists, and filmmakers for safety and feedback purposes. It then released Sora to ChatGPT Plus and Pro subscribers in December 2024.

What does "AGI Deployment" mean for OpenAI's products?

The reported renaming of the product organization to "AGI Deployment" signals an internal reframing of all product work as directly serving the goal of building, refining, and deploying Artificial General Intelligence. In practice, this likely means future product developments, such as the rumored "Spud" LLM, will be evaluated primarily on how they advance capabilities toward AGI rather than on standalone commercial metrics.

How does this relate to OpenAI's robotics efforts?

The shift of the Sora team to world-model research "especially as it pertains to robotics" provides a technical backbone for OpenAI's renewed push into robotics, exemplified by its partnership with Figure AI. The physics and scene understanding developed for Sora's video generation could be foundational for creating AI models that can predict physical outcomes and plan actions for robots in the real world.