OpenAI is undergoing a significant internal reallocation, shifting compute resources and top engineering talent away from certain existing projects and toward the development of its next generation of models. According to a report, the lab's focus is now squarely on building "automated researchers" and sophisticated agent-based systems designed to execute complex, multi-step tasks autonomously.

This strategic shift mirrors the internal reallocation that preceded the development of GPT-3, suggesting OpenAI leadership views this direction as the next major evolutionary step beyond current AI tools like ChatGPT.

What's Changing: From Sora to Agents

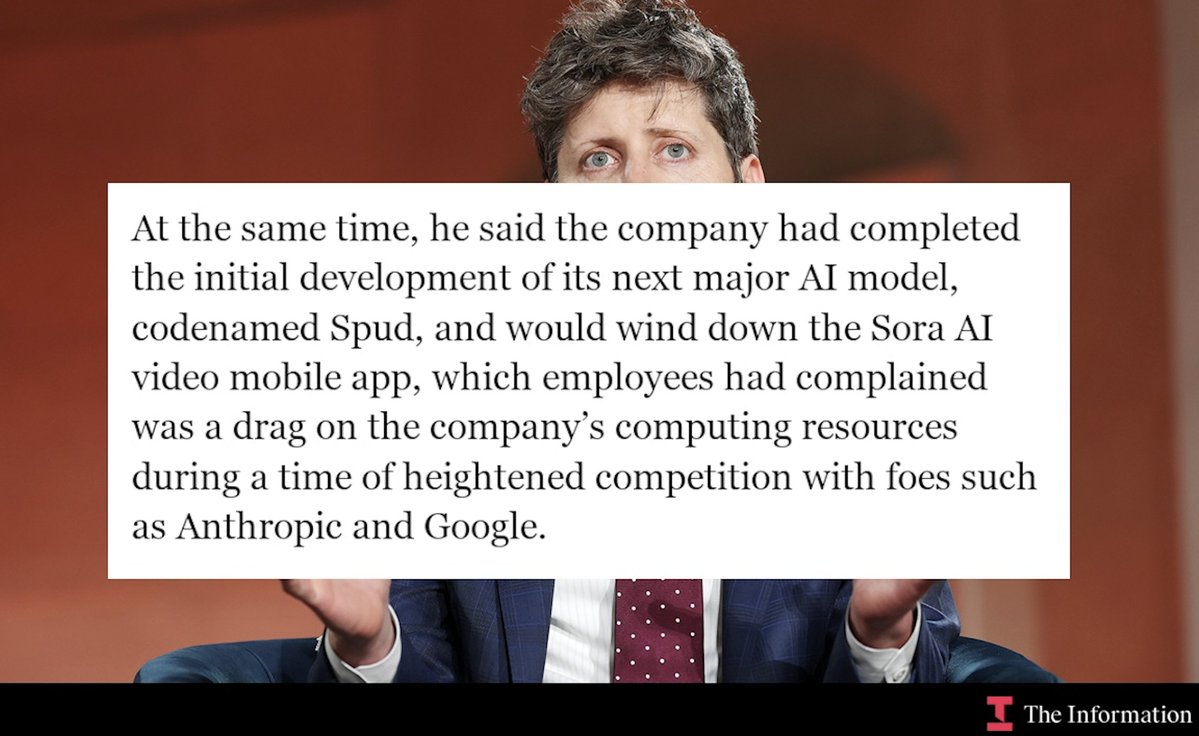

The report indicates this reallocation has come at the expense of other projects. It specifically notes that the high-profile video generation model Sora was "sacrificed" in favor of this new priority. This suggests that the computational horsepower and human capital required to push the boundaries of agentic AI are being drawn from other advanced research and development lanes within the company.

The new target is not merely a more capable chatbot, but systems that function as "automated researchers"—AI that can formulate hypotheses, design and run experiments, analyze results, and iterate on its own understanding. This points toward a future of AI that can engage in open-ended problem-solving and discovery with minimal human supervision.

The Technical Ambition: End-to-End Agentic Systems

The core technical ambition, as stated, is to create agent-based systems that can execute complex tasks end-to-end. In practice, this means moving beyond models that respond to single prompts and toward persistent AI entities that can:

- Accept a high-level goal or problem statement.

- Break it down into a series of actionable sub-tasks.

- Navigate digital tools and environments (browsers, code editors, APIs) to execute those tasks.

- Handle errors, ambiguities, and unexpected outcomes autonomously.

- Deliver a final, verified result.

This requires advances in planning, long-horizon reasoning, tool use, and memory—capabilities that have been the focus of intense research across the industry, including at Anthropic with its Claude 3.5 Sonnet and its demonstrated proficiency in coding and agentic workflows.
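To make the five steps listed above concrete, here is a minimal Python sketch of such a loop. It is illustrative only and does not describe OpenAI's or any other lab's implementation: the `plan` function, the tool names, and the `make_flaky_tool` helper are all hypothetical stand-ins, and a real system would call a model where this sketch returns fixed sub-tasks.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class AgentRun:
    """Record of one attempt at a goal (hypothetical structure)."""
    goal: str
    results: Dict[str, str] = field(default_factory=dict)
    log: List[str] = field(default_factory=list)
    verified: bool = False


def plan(goal: str) -> List[str]:
    # Hypothetical planner: a real agent would ask a model to decompose
    # the goal; here the sub-tasks are fixed for illustration.
    return [f"search: {goal}", f"code: {goal}", f"report: {goal}"]


def run_agent(goal: str,
              tools: Dict[str, Callable[[str], str]],
              max_attempts: int = 3) -> AgentRun:
    run = AgentRun(goal)
    tasks = plan(goal)                       # break the goal into sub-tasks
    for task in tasks:
        tool = tools[task.split(":", 1)[0]]  # pick the tool named in the task
        for attempt in range(1, max_attempts + 1):
            try:
                run.results[task] = tool(task)
                run.log.append(f"ok: {task} (attempt {attempt})")
                break
            except RuntimeError as exc:      # handle errors by retrying
                run.log.append(f"retry: {task} ({exc})")
        else:
            # Every attempt failed: stop and leave the run unverified.
            run.log.append(f"failed: {task}")
            return run
    # Verification here is only a completeness check on the results.
    run.verified = all(run.results.get(t) for t in tasks)
    return run


def make_flaky_tool(fail_times: int) -> Callable[[str], str]:
    """Build a stand-in tool that fails `fail_times` times, then succeeds."""
    remaining = {"n": fail_times}

    def tool(task: str) -> str:
        if remaining["n"] > 0:
            remaining["n"] -= 1
            raise RuntimeError("transient failure")
        return f"done({task})"

    return tool


if __name__ == "__main__":
    tools = {
        "search": make_flaky_tool(0),
        "code": make_flaky_tool(1),   # fails once, then recovers
        "report": make_flaky_tool(0),
    }
    outcome = run_agent("summarize benchmark results", tools)
    print(outcome.verified)
    for line in outcome.log:
        print(line)
```

The retry loop and the final completeness check are deliberately simple. In practice, the hard parts are the ones this sketch stubs out: producing a good plan, choosing tools, and verifying that a result is actually correct rather than merely present.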

Why This Strategic Pivot Matters

OpenAI's public product focus has largely been on ChatGPT, its conversational assistant, and the underlying GPT-4 family of models. This internal shift signals a belief that the next significant leap in capability and utility will come from autonomous, goal-directed systems, not just larger or more fluent next-token predictors.

By echoing the resource consolidation that led to GPT-3, OpenAI is betting that a similar "all-in" approach on agentic AI will produce a comparable paradigm shift. The implication is that the future of AI at OpenAI is less about generating text, images, or video on command, and more about deploying AI "workers" that can independently accomplish complex digital jobs.

gentic.news Analysis

This realignment is a direct escalation in the defining strategic race of the 2025-2026 period: the development of reliable, generalist AI agents. It follows OpenAI's own pattern of consolidating resources for breakthrough efforts, a tactic that yielded transformative results with GPT-3. The explicit mention of sacrificing Sora is a stark admission of the immense compute and talent costs required to compete at the frontier of agentic AI, where every major lab is now placing its biggest bets.

This move aligns with and intensifies a trend we've been tracking. In our December 2025 coverage of Google's Gemini 2.0 launch, we noted its heavy emphasis on "planning" and "agentic task completion" as the new benchmark. Similarly, Anthropic's Claude 3.5 Sonnet has set a high bar for practical coding and reasoning agents, as detailed in our analysis of its SWE-bench performance. OpenAI's pivot confirms that the entire industry sees this as the primary battleground, moving beyond static benchmarks toward dynamic, task-completion efficacy.

The competition is now defined by who can build the first AI system that can reliably function as an automated software engineer, data analyst, or research assistant. OpenAI's decision to reallocate from Sora—a model that itself defined a new frontier in video generation—underscores the perceived magnitude of the opportunity and the threat of falling behind in the agent race.

Frequently Asked Questions

What are "automated researchers"?

"Automated researchers" are AI systems designed to carry out research processes autonomously. This includes formulating questions, reviewing literature, designing experimental approaches (like code or simulation setups), executing them, analyzing results, and synthesizing findings, all with minimal human intervention. The term marks a shift from AI as a tool for answering questions to AI as an active participant in the discovery process.

Why would OpenAI sacrifice Sora for this?

Developing frontier AI models requires enormous computational resources (GPU clusters) and highly specialized engineering talent. Sora represents a state-of-the-art model in a specific modality (video). OpenAI's leadership has evidently calculated that the potential payoff and strategic necessity of winning the agentic AI race are greater than those of advancing in video generation at this moment. It's a classic portfolio prioritization decision under resource constraints.

How does this relate to ChatGPT and GPT-4?

The underlying language models like GPT-4 are the foundational intelligence for these agentic systems. Think of ChatGPT as the conversational interface to the model's knowledge. The new "automated researchers" would use a similar or more advanced core model as their reasoning engine, but wrapped in layers of software that enable planning, tool use, and persistent task execution. This is an evolution of the technology stack, not a replacement for the core language model technology.
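As a rough illustration of that layering, the Python sketch below wraps a stand-in "core model" in a small loop that adds tool use and a running history. Everything here is assumed for the example: `stub_model` is a hard-coded placeholder rather than a real language model, and the `CALL`/`FINAL`/`OBSERVATION` text protocol is invented for this sketch, not taken from any vendor's API.

```python
from typing import Callable, Dict, List

# A "model" here is any function from conversation history to a reply.
Model = Callable[[List[str]], str]


def stub_model(history: List[str]) -> str:
    # Stand-in for a language model (hypothetical behavior): request a
    # tool call first, then answer using the tool's observation.
    observations = [h for h in history if h.startswith("OBSERVATION ")]
    if not observations:
        return "CALL calculator 6*7"
    return "FINAL " + observations[-1].split(" ", 1)[1]


def calculator(expr: str) -> str:
    # Toy tool: multiplies two integers written as "a*b".
    left, right = expr.split("*")
    return str(int(left) * int(right))


def run_with_tools(model: Model,
                   tools: Dict[str, Callable[[str], str]],
                   prompt: str,
                   max_steps: int = 5) -> str:
    history = [f"USER {prompt}"]           # memory persists across steps
    for _ in range(max_steps):
        reply = model(history)             # core model does the reasoning
        history.append(f"MODEL {reply}")
        if reply.startswith("FINAL "):
            return reply[len("FINAL "):]
        # Otherwise the reply is "CALL <tool> <argument>".
        _, name, arg = reply.split(" ", 2)
        history.append(f"OBSERVATION {tools[name](arg)}")
    raise RuntimeError("step budget exhausted")


if __name__ == "__main__":
    answer = run_with_tools(stub_model, {"calculator": calculator},
                            "What is 6 times 7?")
    print(answer)  # prints 42
```

The model itself is unchanged between the chat use case and the agent use case in this sketch. Only the surrounding loop differs, which is the sense in which the agentic layer is an addition to the stack rather than a replacement for the core model.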

What does this mean for AI competition with Google and Anthropic?

It signals that OpenAI is going "all-in" on what has become the central competition of 2026. Google DeepMind's Gemini 2.0 and Project Astra are explicitly agent-focused, and Anthropic's Claude has strong agentic capabilities. By reallocating resources so dramatically, OpenAI is acknowledging this is the critical path and attempting to marshal its forces to take or maintain a lead. The next 12-18 months will likely see the release of increasingly capable AI agents from all major labs, each aiming to claim dominance in this new paradigm.