What Happened

AI researcher Kimmonismus reported on X that a text-to-video model has achieved prompt-to-output latency of under 100 milliseconds. The post emphasizes the significance of this speed breakthrough, noting that current text-to-video models typically require 30 seconds or more to generate outputs.

Context

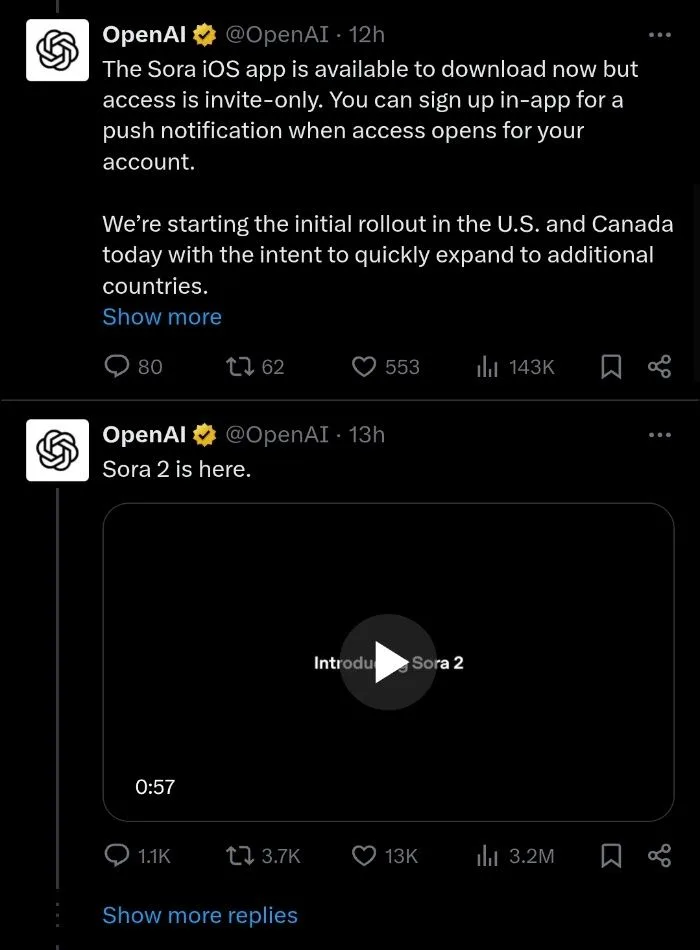

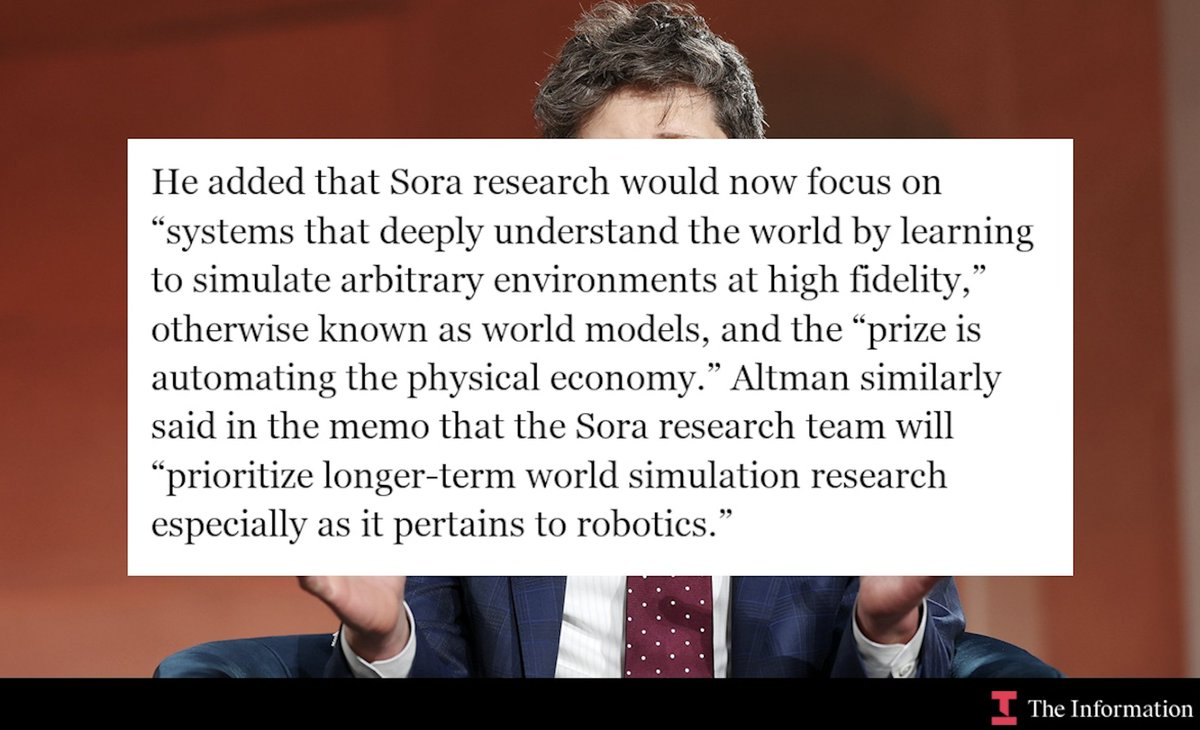

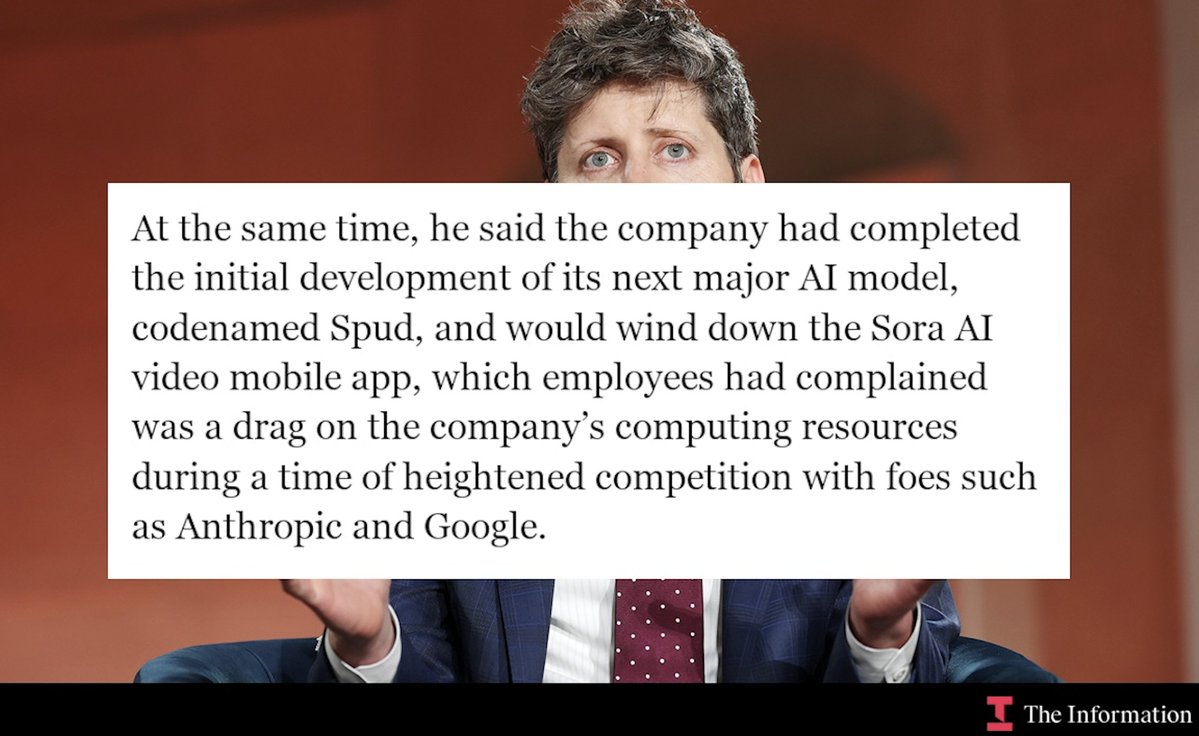

Current state-of-the-art text-to-video models like OpenAI's Sora, Runway's Gen-2, and Pika Labs operate with latencies measured in tens of seconds to minutes. This delay creates significant friction in creative workflows where rapid iteration is essential.

The sub-100ms latency represents a 300x speed improvement over typical 30-second generation times. At this speed, text-to-video generation approaches real-time interaction, potentially enabling new applications in live content creation, interactive media, and rapid prototyping.

Technical Implications

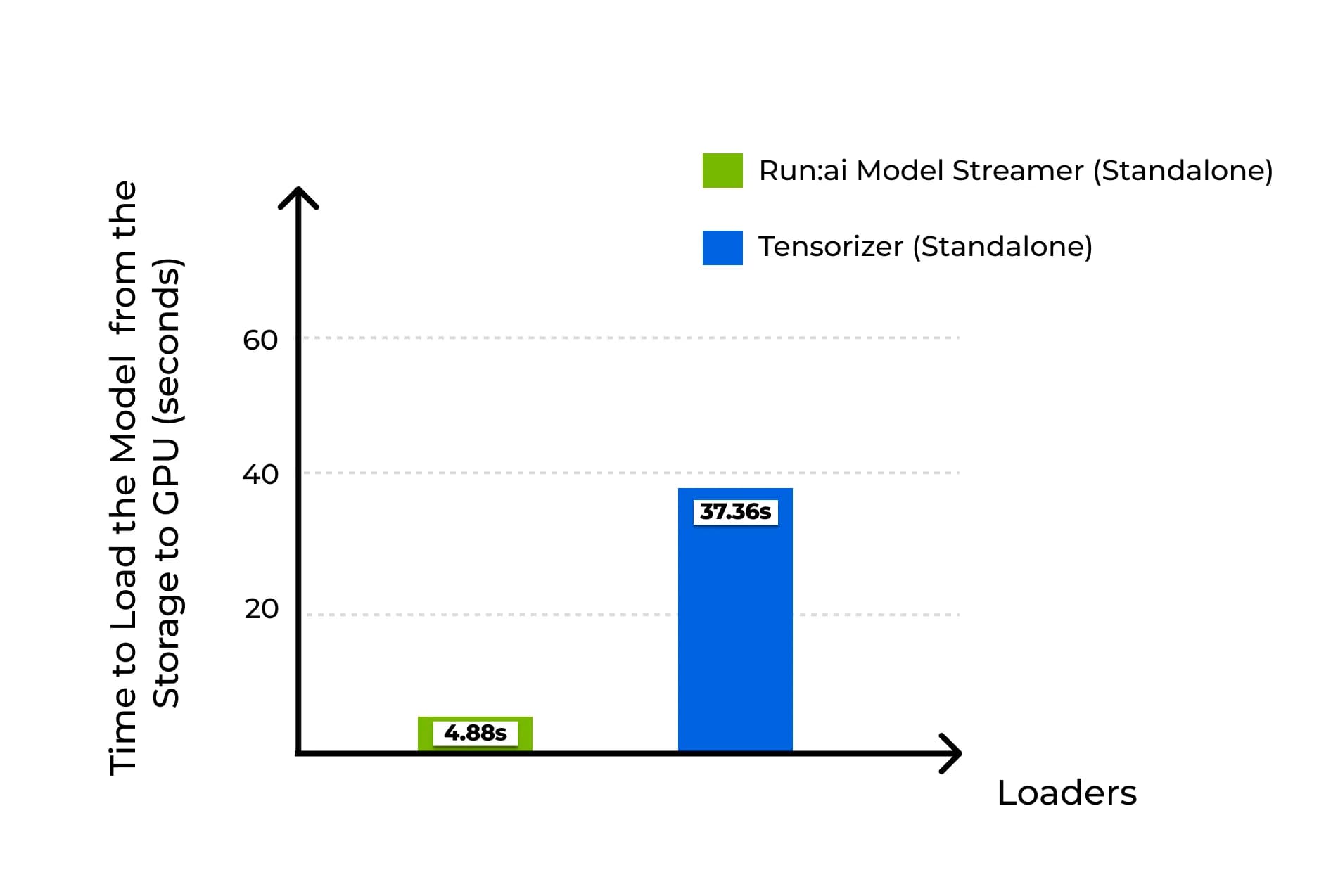

While the specific model architecture wasn't disclosed, achieving sub-100ms latency suggests several possible technical approaches:

- Extremely distilled models - Highly compressed versions of existing architectures

- Novel inference optimization - Advanced quantization, pruning, or speculative decoding

- Hardware-specific acceleration - Custom chips or optimized GPU kernels

- Caching/pre-computation - Pre-generated elements assembled at runtime

What to Watch

The claim requires verification through published benchmarks and demonstration of video quality comparable to current models. Key questions include:

- What resolution and duration can be achieved at this latency?

- What hardware is required (consumer GPUs vs. specialized hardware)?

- How does video quality compare to slower models?

- Is this a research prototype or production-ready system?

Until these details are available, practitioners should view this as a promising direction rather than an immediately deployable solution.