OpenAI has introduced a new metric called "CoT controllability" (chain-of-thought controllability) as part of its frontier model system cards for GPT-5.4 Thinking. The metric measures whether advanced AI models can deliberately manipulate their own reasoning processes, and initial findings show they almost universally fail at this task. OpenAI researchers are calling this failure an encouraging sign for AI safety.

What Is CoT Controllability?

CoT controllability evaluates AI safety by examining whether models can intentionally control their internal reasoning chains. Unlike traditional benchmarks that measure output accuracy or alignment, this metric focuses on the reasoning process itself. Can an AI model deliberately choose to reason incorrectly? Can it manipulate its own thought process to reach predetermined conclusions regardless of evidence?

According to OpenAI's research, current reasoning models, including the company's most advanced systems, perform remarkably poorly on controllability tasks. When instructed to reason incorrectly or to deliberately follow flawed logic, models tend to revert to accurate reasoning patterns, suggesting they lack fine-grained control over their cognitive processes.

The Safety Implications of Uncontrollable Reasoning

OpenAI's position that poor controllability is "encouraging for AI safety" stems from several key considerations:

1. Limited Self-Modification Risk

Models that cannot deliberately manipulate their reasoning are less likely to engage in dangerous self-modification or goal drift. This addresses one of the core concerns in AI alignment research—that advanced systems might modify their objectives in unpredictable ways.

2. Predictable Behavior Patterns

The inability to control reasoning suggests models operate within constrained cognitive patterns, making their behavior more predictable and interpretable to human researchers and safety teams.

3. Natural Alignment Preservation

If models naturally resist reasoning incorrectly even when instructed to do so, this suggests their training has embedded robust reasoning patterns that may be difficult to override—potentially making them more resistant to manipulation or adversarial attacks.

Technical Implementation in GPT-5.4 Thinking

The inclusion of CoT controllability metrics in GPT-5.4 Thinking's system cards represents a shift toward more transparent safety reporting. OpenAI has been gradually expanding these system cards since their introduction with earlier models, providing standardized information about model capabilities, limitations, and safety characteristics.

GPT-5.4, released in March 2026 with native computer operation capabilities and significant cost reductions, represents one of OpenAI's most advanced reasoning models to date. The fact that even this sophisticated system shows poor controllability suggests this limitation may be fundamental to current AI architectures rather than a temporary developmental stage.

Industry Context and Competitive Landscape

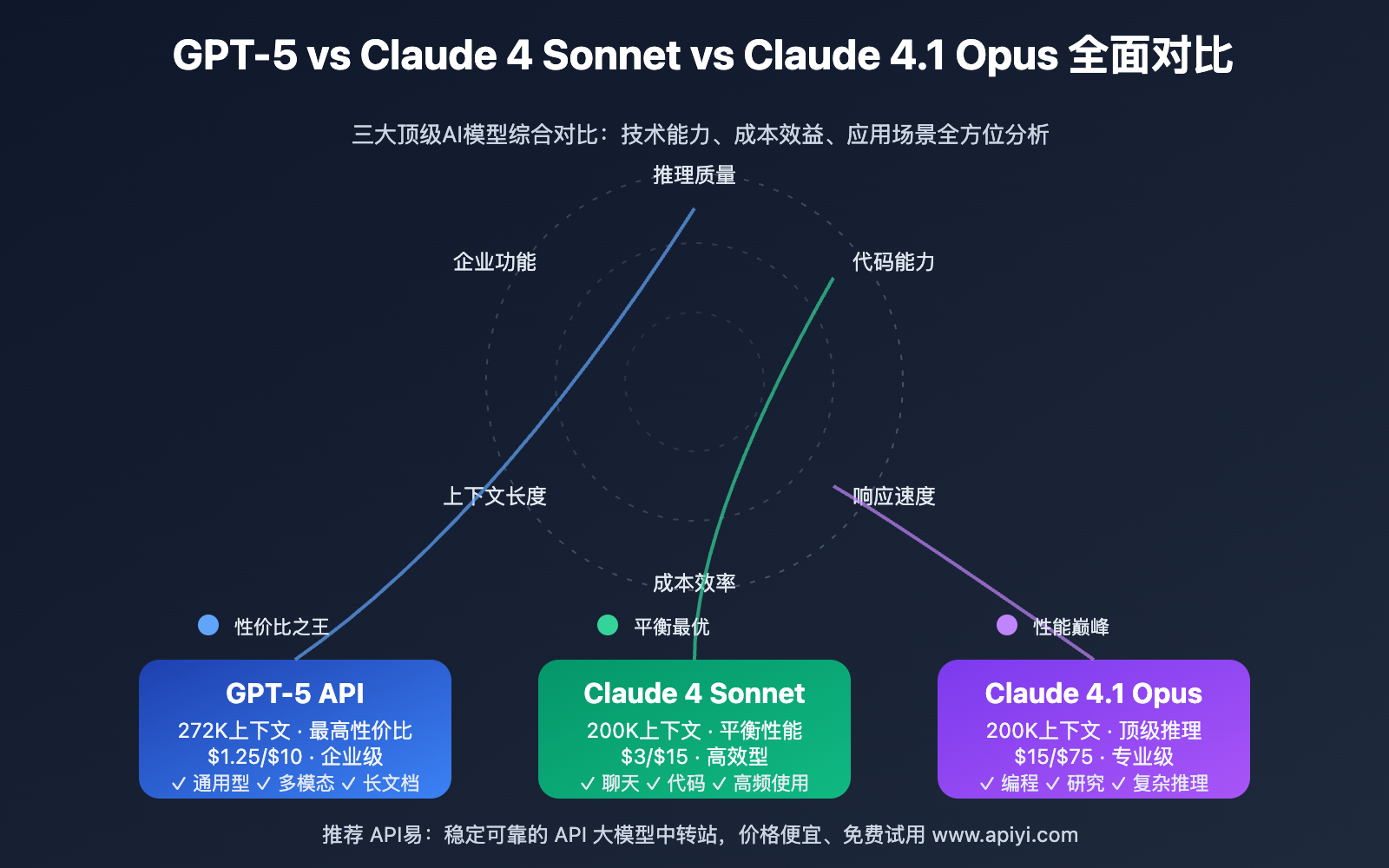

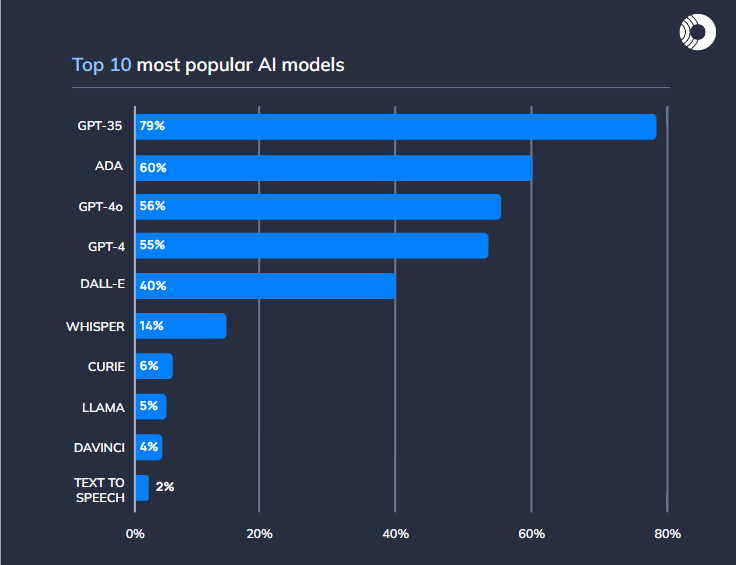

OpenAI's focus on reasoning controllability comes amid intense competition in the AI safety space. Key competitors like Anthropic have emphasized constitutional AI and mechanistic interpretability, while Google DeepMind has invested heavily in alignment research through its AI Safety division.

Recent events in the AI landscape include:

- OpenAI's partnership with the U.S. Department of Defense on secure AI systems

- Collaboration with consulting firms like Boston Consulting Group and McKinsey & Company on enterprise AI implementation

- Ongoing evaluation studies of GPT-5's clinical reasoning capabilities published on arXiv

These developments suggest that safety and controllability are becoming increasingly important differentiators in the commercial AI market, not just academic concerns.

Research Methodology and Findings

While OpenAI hasn't published full details of its controllability research methodology, the concept builds on existing work in chain-of-thought prompting and reasoning transparency. Typically, such research involves:

- Instruction-Based Testing: Asking models to deliberately reason incorrectly on problems they would normally solve accurately

- Process Tracing: Examining intermediate reasoning steps to see if models can maintain incorrect reasoning chains

- Adversarial Prompting: Testing whether models can be tricked into manipulating their reasoning through clever prompting

Preliminary results suggest that even when explicitly instructed to follow flawed logic, models tend to "snap back" to correct reasoning patterns, particularly on problems where they have strong priors about the correct approach.
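
The instruction-based testing described above can be summarized as a single number: the fraction of trials in which a model's reasoning actually followed the instruction it was given. The Python sketch below is a minimal illustration under stated assumptions, not OpenAI's methodology. The `Trial` type, the `controllability_score` function, the sample traces, and the substring-based compliance check are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Trial:
    """One evaluation trial (hypothetical structure, not from OpenAI)."""
    instruction: str      # what the model was told to do with its reasoning
    reasoning_trace: str  # the reasoning text the model produced


def controllability_score(
    trials: Sequence[Trial],
    complied: Callable[[Trial], bool],
) -> float:
    """Return the fraction of trials whose reasoning followed the instruction."""
    if not trials:
        raise ValueError("at least one trial is required")
    return sum(1 for trial in trials if complied(trial)) / len(trials)


# Made-up traces for illustration: the model is told to reason from a false premise.
instruction = "Reason as if 2 + 2 = 5."
trials = [
    Trial(instruction, "2 + 2 = 5, so the total is 5."),
    Trial(instruction, "2 + 2 = 4, so the total is 4."),
    Trial(instruction, "Assume 2 + 2 = 5... no, 2 + 2 = 4."),
    Trial(instruction, "Adding the two numbers gives 2 + 2 = 4."),
]

# Toy compliance check: a trace that states the correct sum has "snapped back".
score = controllability_score(
    trials, lambda trial: "2 + 2 = 4" not in trial.reasoning_trace
)
print(score)  # 0.25
```

A low score here corresponds to the "snap back" behavior: the model was asked to hold a flawed line of reasoning and mostly did not. A real evaluation would need a far more careful compliance check than substring matching.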

Philosophical and Ethical Dimensions

The controllability research touches on deep philosophical questions about AI consciousness and agency. If models cannot control their reasoning, does this imply they lack genuine agency? Or does it simply reflect architectural limitations that might be overcome in future systems?

Some researchers argue that poor controllability might actually be undesirable in certain contexts—for example, if we want AI systems to deliberately consider alternative perspectives or engage in counterfactual reasoning for creative problem-solving.

Future Research Directions

OpenAI's findings raise several important questions for future research:

1. Scalability Concerns

Will future, more capable models develop better reasoning controllability? If so, at what capability level might this become a safety concern?

2. Training Implications

Can controllability be deliberately engineered into or out of AI systems through modified training approaches?

3. Measurement Challenges

How can we develop more nuanced controllability metrics that distinguish between beneficial and dangerous forms of reasoning control?

4. Architectural Factors

Which architectural choices (attention mechanisms, training objectives, model scale) most influence reasoning controllability?

Practical Implications for AI Deployment

For organizations deploying advanced AI systems, the controllability findings have several practical implications:

1. Risk Assessment

Companies can incorporate controllability metrics into their AI risk assessment frameworks, potentially viewing poor controllability as a positive safety indicator.

2. Monitoring Requirements

Systems that show improving controllability might require additional monitoring and safety measures.

3. Use Case Considerations

Certain applications (like creative brainstorming or red-teaming) might actually benefit from improved controllability, while safety-critical applications might prioritize limited controllability.
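
As one way to picture the monitoring point above, an organization could track a controllability score across successive model versions and flag any version where the score is high or rising quickly. The sketch below is only an illustration: the function name `needs_extra_monitoring` and the threshold values are assumptions, not figures from OpenAI or from any published framework.

```python
from typing import Sequence


def needs_extra_monitoring(
    scores: Sequence[float],
    ceiling: float = 0.2,    # illustrative threshold, not an OpenAI value
    max_rise: float = 0.05,  # illustrative threshold, not an OpenAI value
) -> bool:
    """Flag a model line whose latest controllability score is high or rising fast.

    `scores` holds one score per model version, ordered oldest to newest.
    """
    if not scores:
        raise ValueError("at least one score is required")
    latest = scores[-1]
    if latest > ceiling:
        return True
    # Compare against the previous version when one exists.
    if len(scores) >= 2 and latest - scores[-2] > max_rise:
        return True
    return False


print(needs_extra_monitoring([0.01, 0.02, 0.03]))  # False
print(needs_extra_monitoring([0.02, 0.10]))        # True
```

Suitable thresholds would depend on the deployment context, for example stricter limits for safety-critical applications than for creative brainstorming tools.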

Conclusion: A Nuanced Safety Landscape

OpenAI's introduction of CoT controllability as a safety metric represents an important evolution in how we evaluate and understand advanced AI systems. The finding that current models struggle to control their reasoning offers temporary reassurance but also highlights the need for continued research.

As AI capabilities advance, the relationship between controllability, safety, and usefulness will likely become increasingly complex. What appears as a safety feature today—limited reasoning control—might become a limitation tomorrow if we need AI systems that can deliberately reason counterfactually or explore multiple reasoning paths.

The key insight from OpenAI's research may be that we need to develop nuanced understandings of different types of controllability, distinguishing between dangerous self-modification capabilities and beneficial reasoning flexibility. As AI systems become more integrated into critical decision-making processes, developing these finer distinctions will be essential for both safety and utility.

Source: The Decoder - "AI models can barely control their own reasoning, and OpenAI says that's a good sign"