OpenAI has published new technical documentation describing how its Responses API runs AI agents inside a secure, managed computing environment. The approach is a significant step toward making agentic AI systems more reliable and safer for production use.

What the Responses API Does

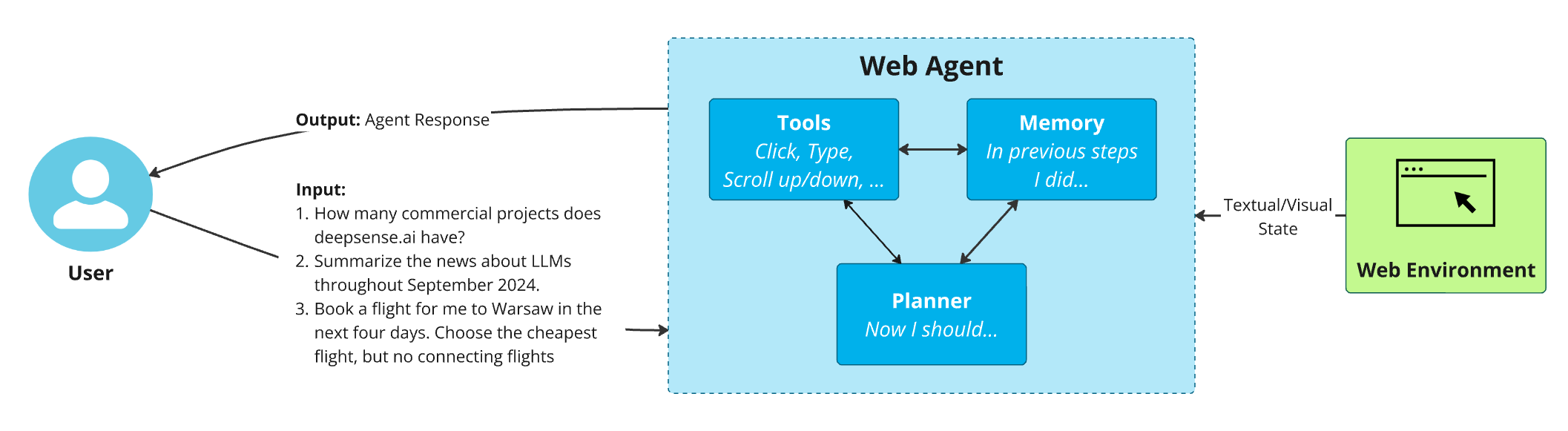

According to OpenAI's published documentation, the Responses API is designed specifically for running AI agents: autonomous systems that can perform tasks, make decisions, and interact with external tools and data. A traditional API call generates text and returns. An agent, by contrast, needs a persistent execution environment where it can maintain state, access tools, and operate over extended periods.
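To make the contrast with a one-shot text call concrete, here is a minimal sketch of an agent loop in Python. It does not use the Responses API; `TOOLS`, `AgentState`, `choose_action`, and `run_agent` are hypothetical names invented for illustration, and `choose_action` stands in for a model call with a fixed plan.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical tool registry; real agents would call external services here.
TOOLS: Dict[str, Callable[[str], str]] = {
    "upper": lambda text: text.upper(),
    "reverse": lambda text: text[::-1],
}


@dataclass
class AgentState:
    """State carried between steps (assumed shape, for illustration only)."""
    goal: str
    history: List[Tuple[str, str]] = field(default_factory=list)


def choose_action(state: AgentState) -> Optional[str]:
    """Stand-in for a model call: return the next tool name, or None when done."""
    plan = ["upper", "reverse"]
    step = len(state.history)
    return plan[step] if step < len(plan) else None


def run_agent(goal: str, max_steps: int = 10) -> AgentState:
    """Loop: decide, call a tool, record the result, repeat until done."""
    state = AgentState(goal=goal)
    current = goal
    for _ in range(max_steps):
        action = choose_action(state)
        if action is None:
            break
        current = TOOLS[action](current)
        state.history.append((action, current))
    return state
```

The point of the sketch is the shape of the loop: state accumulates across steps and each step may touch a tool, which is why an agent needs an environment that outlives a single request.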

The key innovation lies in OpenAI's approach to containerization and isolation. By placing each agent instance into a secure, managed computing environment, the company aims to ensure that:

- Agent activities are contained and cannot interfere with other systems

- Resource usage is monitored and controlled

- The impact of security vulnerabilities is limited through isolation

- Execution environments are consistent and reproducible

The Technical Architecture

While the source material doesn't provide exhaustive technical details, the description suggests a sandboxed execution model similar to container technologies like Docker but specifically optimized for AI agent workloads. This managed space likely includes:

- Isolated runtime environments for each agent instance

- Controlled resource allocation (CPU, memory, storage)

- Network access restrictions to prevent unauthorized external connections

- State management systems to preserve agent context across sessions

- Monitoring and logging infrastructure for debugging and compliance

This architecture addresses one of the fundamental challenges in deploying AI agents: ensuring they operate predictably and safely, especially when given access to tools or external data sources that could potentially be misused.
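The resource and network controls listed above can be pictured as a small policy object that the runtime consults before an agent acts. The sketch below is an assumption about how such a policy might look, not OpenAI's implementation; `SandboxPolicy`, its fields, and the host `api.example.com` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SandboxPolicy:
    """Hypothetical per-agent limits; field names are illustrative."""
    cpu_cores: float
    memory_mb: int
    storage_mb: int
    allowed_hosts: frozenset = frozenset()

    def allows_host(self, host: str) -> bool:
        # Deny by default: only explicitly listed hosts are reachable.
        return host.lower() in self.allowed_hosts

    def within_limits(self, memory_mb: int, storage_mb: int) -> bool:
        # True when the requested usage fits inside the configured caps.
        return memory_mb <= self.memory_mb and storage_mb <= self.storage_mb


policy = SandboxPolicy(
    cpu_cores=1.0,
    memory_mb=512,
    storage_mb=1024,
    allowed_hosts=frozenset({"api.example.com"}),
)
```

Making the policy immutable and deny-by-default is a design choice in this sketch: an agent cannot widen its own permissions at runtime, and any host not listed is unreachable.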

Implications for AI Development

The introduction of the Responses API with built-in security measures has several important implications:

For Developers: Building agentic applications becomes significantly easier and safer. Developers can focus on designing agent behaviors and workflows without needing to become experts in container security, resource management, or isolation techniques.

For Enterprise Adoption: Security-conscious organizations now have a more viable path to deploying AI agents in production environments. The managed, secure execution space reduces many of the risks associated with autonomous AI systems.

For the AI Ecosystem: This move represents OpenAI's continued evolution from a model provider to a platform company. By offering not just models but also the infrastructure to run them safely, the company is creating a more comprehensive ecosystem for AI application development.

Safety and Reliability Considerations

OpenAI's approach to agent security appears to prioritize defense in depth. The secure, managed computer space serves as a foundational layer of protection, upon which additional safety measures can be built. This is particularly important as AI agents become more capable and autonomous.

The managed environment likely includes:

- Rate limiting to prevent resource exhaustion attacks

- Input/output validation to detect and block malicious content

- Activity monitoring to identify unusual behavior patterns

- Automatic termination for agents that exceed operational boundaries

These features collectively reduce the risk of agents causing harm, whether intentionally (through prompt injection or other attacks) or accidentally (through bugs or unexpected behaviors).
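Two of the safeguards above, rate limiting and automatic termination, are simple enough to sketch. The code below uses a standard token-bucket limiter and a step budget; it illustrates the general techniques and is not a description of what OpenAI actually runs. `TokenBucket`, `BudgetExceeded`, and `run_with_budget` are hypothetical names.

```python
import time
from typing import Callable, List


class TokenBucket:
    """Token-bucket rate limiter with an injectable clock for testing."""

    def __init__(self, capacity: int, refill_per_sec: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.clock = clock
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self) -> bool:
        # Refill in proportion to elapsed time, capped at capacity.
        now = self.clock()
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class BudgetExceeded(RuntimeError):
    """Raised when an agent tries to run past its step budget."""


def run_with_budget(steps: List[Callable[[], object]], max_steps: int) -> list:
    """Run steps in order; abort once the step budget is exhausted."""
    results = []
    for index, step in enumerate(steps):
        if index >= max_steps:
            raise BudgetExceeded(f"agent exceeded {max_steps} steps")
        results.append(step())
    return results
```

A limiter like this bounds how fast an agent can consume a resource, while the step budget bounds how long it can run at all. The two are complementary.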

Comparison with Existing Approaches

Traditional approaches to running AI agents have typically involved:

- Self-hosted solutions where developers manage their own infrastructure, requiring significant security expertise

- Virtual machines that provide isolation but with substantial overhead

- Serverless functions that offer some isolation but limited persistence for stateful agents

OpenAI's Responses API appears to offer a middle ground: the security and isolation of containerization with the convenience and scalability of a managed service. This could make sophisticated AI agents accessible to a much broader range of developers and organizations.

Future Directions

While the current documentation focuses on the secure execution environment, this foundation could enable several future capabilities:

- Multi-agent systems where multiple agents can collaborate within controlled boundaries

- Tool integration frameworks that allow agents to safely interact with external APIs and services

- Compliance features for regulated industries requiring audit trails and governance controls

- Performance optimization through specialized hardware or software configurations

The secure sandbox approach also opens possibilities for verification and validation of agent behaviors, potentially allowing developers to prove certain safety properties about their agents before deployment.

Conclusion

OpenAI's publication of how its Responses API works is more than technical documentation; it is a statement about the future of AI development. By providing a secure, managed environment for running AI agents, the company is addressing fundamental concerns about safety and reliability that have hindered broader adoption of agentic AI systems.

This development lowers barriers for developers while raising the safety floor for AI applications. As AI agents become increasingly capable and autonomous, robust infrastructure for their secure execution will be essential. OpenAI's approach with the Responses API offers a model that other AI platforms may follow, potentially establishing new standards for how autonomous AI systems are deployed and managed in production environments.

Source: OpenAI technical documentation as referenced in @rohanpaul_ai's coverage on X.