OpenClaw, the open-source framework for building long-context AI agents, has introduced a new experimental module called OpenClaw-RL. This system enables live reinforcement learning (RL) training on self-hosted model backbones, allowing agents to adapt their core reasoning and policy weights based on real interaction data—all while continuing to serve requests.

This addresses a fundamental limitation in current agent architectures: most agent frameworks, including OpenClaw's standard setup, adapt behavior solely through prompt engineering, memory files, and predefined skills. The underlying base model weights remain static. OpenClaw-RL proposes a method to continuously update those weights based on user feedback and reward signals.

What OpenClaw-RL Does

The system works by wrapping a self-hosted language model (like Llama 3.1 or Qwen 2.5) as an OpenAI-compatible API endpoint. It then intercepts the live conversations of an OpenClaw agent that uses this endpoint as its model backend. In the background, an asynchronous training pipeline scores the model's responses, computes rewards, and performs policy gradient updates.
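The interception step can be pictured as a thin wrapper around the model call. The agent gets its reply as usual, and a copy of the turn goes into a buffer for the background pipeline. The Python sketch below assumes a synchronous `generate` function and uses hypothetical names (`TurnRecord`, `RecordingBackend`, `fake_generate`). It is an illustration, not OpenClaw-RL's actual code.

```python
import queue
from dataclasses import dataclass
from typing import Callable


# Hypothetical record of one agent turn; not OpenClaw-RL's actual schema.
@dataclass
class TurnRecord:
    messages: list        # chat history sent to the model (OpenAI-style dicts)
    response: str         # text the model returned
    policy_version: int   # version of the weights that produced the response


class RecordingBackend:
    """Serves replies through `generate` and logs every turn for later scoring."""

    def __init__(self, generate: Callable[[list], str]):
        self.generate = generate
        self.policy_version = 0
        self.buffer = queue.Queue()  # consumed by a background scoring worker

    def chat(self, messages: list) -> str:
        response = self.generate(messages)
        # put() on an unbounded Queue does not block, so the agent is not delayed.
        self.buffer.put(TurnRecord(list(messages), response, self.policy_version))
        return response


def fake_generate(messages: list) -> str:
    # Stand-in for a call to the self-hosted model.
    return "echo: " + messages[-1]["content"]


backend = RecordingBackend(fake_generate)
reply = backend.chat([{"role": "user", "content": "list files"}])
print(reply)                    # echo: list files
print(backend.buffer.qsize())   # 1
```

Tagging each record with the weights version that produced it is useful once weights start changing during deployment, because a policy-gradient update needs to know which policy generated the data it trains on.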

The key architectural innovation is its fully async design. The serving of responses, the scoring of rewards, and the training process all run in parallel. Critically, once a training batch is complete, the updated model weights are hot-swapped into the serving system without requiring a restart or causing downtime for the agent.
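To illustrate why hot-swapping avoids downtime, here is a toy asyncio sketch in which serving and training run concurrently and new weights are published with a single reference swap. A dict of floats stands in for model weights, and `asyncio.sleep` stands in for generation and training time. `PolicyHolder`, `serve`, and `train` are hypothetical names, and a real system would also need to move weights onto the inference GPUs, which this sketch does not model.

```python
import asyncio


class PolicyHolder:
    """Holds the (version, weights) pair currently used for serving."""

    def __init__(self, weights: dict):
        self._current = (0, weights)

    def snapshot(self):
        # A request takes one consistent (version, weights) pair and keeps it.
        return self._current

    def hot_swap(self, new_weights: dict) -> None:
        # New weights are fully built before this call; publishing them is a
        # single reference assignment, so serving never sees a half-update.
        version, _ = self._current
        self._current = (version + 1, new_weights)


async def serve(holder, prompts, served):
    for prompt in prompts:
        version, _weights = holder.snapshot()
        await asyncio.sleep(0.01)          # stands in for generation latency
        served.append((prompt, version))


async def train(holder, steps):
    for _ in range(steps):
        await asyncio.sleep(0.025)         # stands in for scoring + a training batch
        _, weights = holder.snapshot()
        new_weights = {k: v + 0.1 for k, v in weights.items()}  # toy "update"
        holder.hot_swap(new_weights)


async def main():
    holder = PolicyHolder({"w": 0.0})
    served = []
    # Serving and training run concurrently; neither waits for the other.
    await asyncio.gather(
        serve(holder, [f"req{i}" for i in range(10)], served),
        train(holder, steps=3),
    )
    return holder, served


holder, served = asyncio.run(main())
print([version for _, version in served])
```

Each request records the version it started with, so in-flight requests finish on the old weights while later ones pick up the new ones.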

Two Training Modes

The initial release supports two distinct RL training paradigms, targeting different types of feedback:

Binary RL (GRPO): A reward model scores each agent turn as "good," "bad," or "neutral," producing a scalar reward. This reward drives policy updates via GRPO (Group Relative Policy Optimization), which optimizes a PPO-style clipped objective. This mode is useful for general behavioral shaping based on overall success or failure signals.
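As a rough sketch of how such labels could feed a GRPO-style update, the Python below maps labels to rewards, normalizes them within a group of responses to the same prompt, and evaluates the clipped surrogate. The label-to-reward mapping, the grouping, and the function names are assumptions (the announcement does not say how OpenClaw-RL forms groups), and the sketch works per sequence, leaving out GRPO's per-token averaging and its KL penalty toward a reference model.

```python
import math

# Assumed mapping from the reward model's labels to scalar rewards.
LABEL_REWARD = {"good": 1.0, "neutral": 0.0, "bad": -1.0}


def group_advantages(rewards, eps=1e-6):
    """GRPO-style advantage: each reward normalized by its group's mean and std."""
    mean = sum(rewards) / len(rewards)
    std = math.sqrt(sum((r - mean) ** 2 for r in rewards) / len(rewards))
    return [(r - mean) / (std + eps) for r in rewards]


def clipped_objective(logp_new, logp_old, advantages, clip_eps=0.2):
    """PPO-style clipped surrogate, averaged over samples (to be maximized)."""
    total = 0.0
    for new, old, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(new - old)  # pi_new(y|x) / pi_old(y|x)
        clipped_ratio = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        # Take the pessimistic (smaller) of the unclipped and clipped terms.
        total += min(ratio * adv, clipped_ratio * adv)
    return total / len(advantages)


labels = ["good", "bad", "neutral", "good"]   # four sampled responses to one prompt
rewards = [LABEL_REWARD[label] for label in labels]
advs = group_advantages(rewards)
print([round(a, 3) for a in advs])            # [0.905, -1.508, -0.302, 0.905]
```

If every response in a group gets the same label, all advantages are zero and the group contributes no update, which is one reason group-relative methods need some variation in outcomes.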

On-Policy Distillation: When a user provides concrete, instructional feedback—such as "you should have checked that file first"—this mode uses the feedback as a richer, directional training signal at the token level. It essentially distills the corrected behavior directly into the policy, which can be more sample-efficient for specific error patterns.
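The announcement does not spell out the exact loss, so the following is a sketch of one common on-policy distillation recipe. It assumes the "teacher" is the same model re-scoring the student's own response with the user's correction added to its context, and that the per-token signal is the difference in log-probabilities between teacher and student. The helper names and the log-probability values are illustrative, not OpenClaw-RL's code or real model outputs.

```python
def build_teacher_messages(messages, feedback):
    """Hypothetical: the teacher is the same model with the user's correction as a hint."""
    hint = {"role": "system", "content": f"Hint from user feedback: {feedback}"}
    return [hint] + list(messages)


def token_advantages(student_logprobs, teacher_logprobs):
    """Per-token signal on the student's own sample: log p_teacher - log p_student.

    Positive where the hint-conditioned teacher prefers the token the student
    produced, negative where the correction makes that token less likely.
    """
    return [t - s for s, t in zip(student_logprobs, teacher_logprobs)]


def distillation_loss(student_logprobs, advantages):
    # In an autodiff framework, with `advantages` held constant (detached),
    # minimizing this raises tokens the teacher favors and lowers the rest.
    return -sum(a * lp for a, lp in zip(advantages, student_logprobs)) / len(advantages)


# Toy numbers: the student went straight to editing; the teacher, told
# "you should have checked that file first", finds "edit" far less likely.
tokens = ["edit", "the", "file"]
student = [-0.2, -0.5, -0.1]
teacher = [-2.0, -0.4, -0.1]
adv = token_advantages(student, teacher)
print([(tok, round(a, 2)) for tok, a in zip(tokens, adv)])
# [('edit', -1.8), ('the', 0.1), ('file', 0.0)]
```

Unlike a single scalar reward for the whole turn, this signal singles out the specific token where the behavior went wrong, which is where the potential sample-efficiency gain comes from.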

When to Use RL vs. Prompt-Based Methods

The project maintainer, Akshay Pachaar, provides clear guidance on the appropriate use case for this RL layer. He notes that many agent failures are not weight-level problems.

- Memory Problems: If an agent keeps forgetting user preferences, the solution is better memory design and context management.

- Skill Problems: If an agent doesn't know how to execute a specific workflow, the solution is to build or refine a skill (a reusable prompt/function).

These problems are solved at the prompt and context layer, which is where OpenClaw's existing skill ecosystem and community-built self-improvement tools are strongest.

RL becomes necessary when the failure pattern is embedded in the model's intrinsic reasoning. Examples include:

- Consistently poor tool selection order in multi-step plans.

- Weak multi-step planning that prompt engineering cannot fix.

- Failure to interpret ambiguous instructions in a user-specific way.

- Inability to recover gracefully from tool execution errors mid-task.

Research on agentic RL, such as ARTIST and Agent-R1, has shown that these deep behavioral patterns often hit a ceiling with prompt-based approaches alone. OpenClaw-RL explicitly targets this layer of adaptation.

Technical Implementation & Availability

The system is designed for developers and researchers who self-host their models and want to move beyond static weights. Users must manage the RL training loop themselves, including designing a reward model (or adopting a pre-trained one) and keeping training stable.
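One simple way to guard stability in a hot-swap setup is to evaluate each candidate checkpoint before publishing it. The sketch below is a hypothetical promotion gate, not a feature the announcement describes: it rejects a candidate whose mean reward on held-out recent turns regresses by more than a tolerance, or whose estimated KL divergence from a reference policy exceeds a budget. `CheckpointStats`, `should_promote`, and both thresholds are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class CheckpointStats:
    mean_reward: float      # average reward on a held-out set of recent turns
    kl_to_reference: float  # estimated KL divergence from the reference policy


def should_promote(current: CheckpointStats, candidate: CheckpointStats,
                   max_reward_drop: float = 0.02, max_kl: float = 0.1) -> bool:
    """Hypothetical gate: hot-swap only if the candidate is not clearly worse
    and has not drifted too far from the reference policy."""
    if candidate.kl_to_reference > max_kl:
        return False  # too much drift: a common symptom of reward hacking
    return candidate.mean_reward >= current.mean_reward - max_reward_drop


current = CheckpointStats(mean_reward=0.50, kl_to_reference=0.0)
print(should_promote(current, CheckpointStats(0.55, 0.05)))  # True
print(should_promote(current, CheckpointStats(0.60, 0.30)))  # False
```

A gate like this trades some adaptation speed for safety: a rejected checkpoint simply keeps the previous weights in service while training continues.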

The code has been released as an experimental repository, linked from the original announcement. It represents a significant step toward continuously learning agents that improve from deployment experience, bridging the gap between research concepts like online RL and practical, deployable agent systems.

gentic.news Analysis

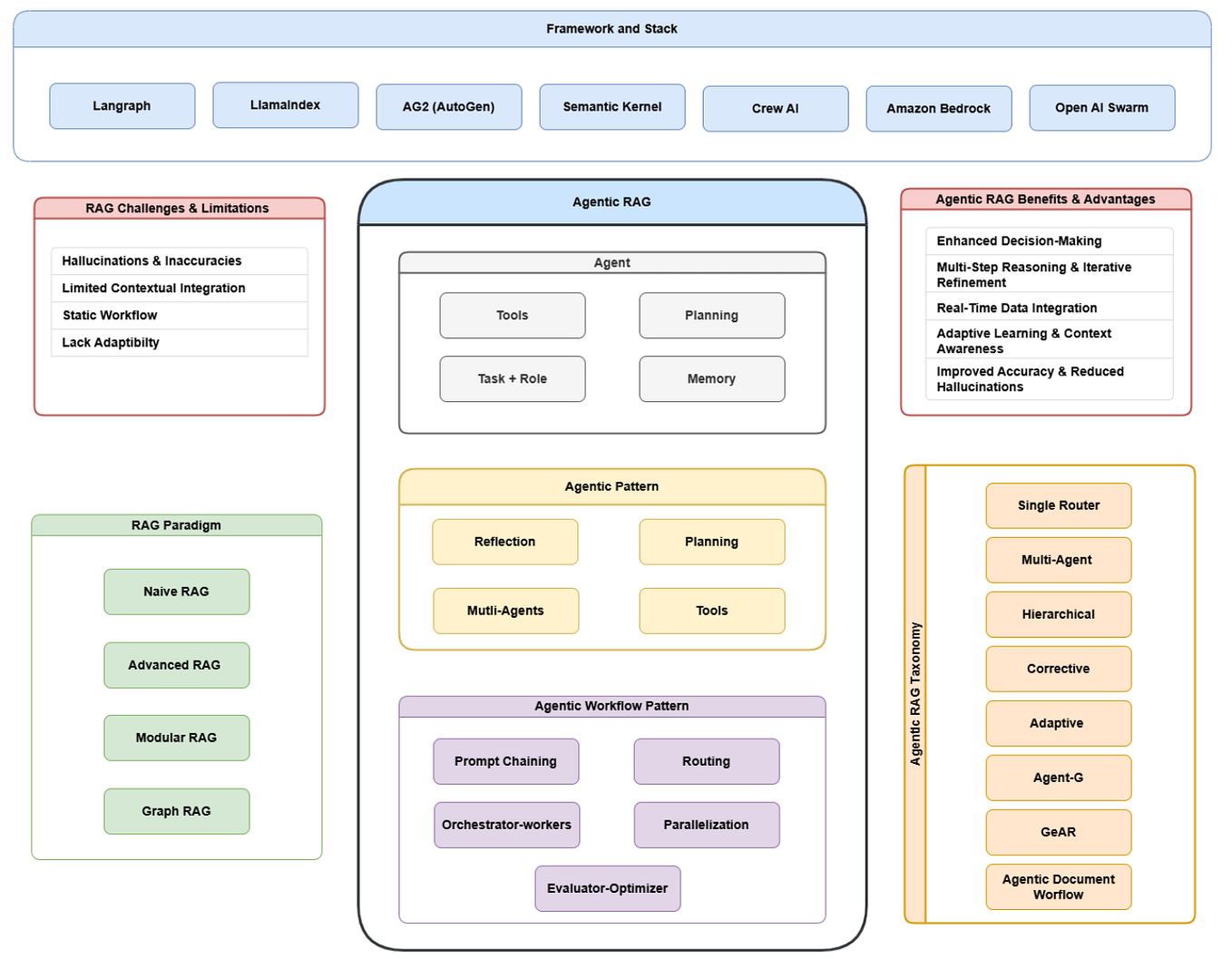

OpenClaw-RL enters a rapidly evolving niche: applied reinforcement learning for production agents. This follows a clear trend we've tracked since late 2024, in which research on agentic RL (like ARTIST and Agent-R1), building on earlier RL-based alignment work such as Anthropic's Constitutional AI, has begun spawning open-source implementations aimed at practitioners. The direct lineage is evident: OpenClaw-RL's binary RL mode cites GRPO, a critic-free variant of PPO that estimates advantages by comparing rewards across a group of sampled responses and has gained traction for language model post-training, while its distillation mode mirrors techniques used in instruction-following datasets.

This development also highlights a strategic divergence in the agent framework landscape. While platforms like LangChain and LlamaIndex focus on orchestration and retrieval, and CrewAI on multi-agent workflows, OpenClaw is carving a niche with a deeper focus on agent memory, skill composition, and now, core model adaptation. This positions it closer to research frameworks like Microsoft's AutoGen but with a stronger emphasis on deployability and continuous learning.

The "hot-swap" async architecture is a pragmatic engineering solution to a major deployment hurdle: training downtime. If successful, it could lower the barrier for teams to run their own lightweight RL fine-tuning loops in staging environments, using real user interactions as a reward signal. However, the major challenge remains reward design: creating a scoring function that reliably captures desired behavior without introducing unintended side effects or "reward hacking." This is the same fundamental problem that large labs face with RLHF, now brought to the team level.

Frequently Asked Questions

What is OpenClaw?

OpenClaw is an open-source framework for building AI agents that can maintain long-term memory, use tools (skills), and operate over extended conversations. It allows developers to create persistent assistants that remember user context and preferences across sessions.

How is OpenClaw-RL different from standard fine-tuning?

Standard fine-tuning (like supervised fine-tuning on a dataset) is a one-off, offline process. OpenClaw-RL enables online reinforcement learning, where the model learns continuously from live interactions and feedback. The model updates happen asynchronously in the background while the agent is still serving users, and new weights are hot-swapped in without interruption.

Do I need a reward model to use OpenClaw-RL?

For the Binary RL (GRPO) mode, yes. You need a process to score each agent turn as good, bad, or neutral. This could be a simple heuristic, a user feedback button, or a separate trained reward model. The On-Policy Distillation mode uses direct human corrective feedback (like "you should have said X") and does not require a separate reward model.
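For illustration, a heuristic scorer of the kind described above might combine an explicit feedback button with simple task signals. Everything here (the `heuristic_turn_label` name, its inputs, and the precedence rules) is an assumption for the sake of the example, not part of OpenClaw-RL.

```python
from typing import Optional


def heuristic_turn_label(user_rating: Optional[str], tool_errors: int,
                         task_completed: bool) -> str:
    """Hypothetical scorer returning 'good', 'bad', or 'neutral' for one agent turn.

    user_rating: "up", "down", or None if the user gave no explicit feedback.
    tool_errors: number of failed tool calls during the turn.
    task_completed: whether the turn finished the requested task.
    """
    # Explicit user feedback always wins over heuristics.
    if user_rating == "up":
        return "good"
    if user_rating == "down":
        return "bad"
    # Otherwise fall back to task signals.
    if tool_errors > 0 and not task_completed:
        return "bad"
    if task_completed:
        return "good"
    return "neutral"


print(heuristic_turn_label(None, tool_errors=2, task_completed=True))  # good
```

Letting explicit ratings override the heuristics keeps the scorer from contradicting the user, and labeling a completed task as good even when a tool call failed along the way rewards recovery from errors rather than punishing it.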

What models are compatible with OpenClaw-RL?

It is designed to work with any language model that can be served via an OpenAI-compatible API endpoint. This typically includes popular open-source models like those from the Llama, Mistral, and Qwen families when served using libraries like vLLM or TGI (Text Generation Inference).
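Because the endpoint speaks the OpenAI chat-completions format, any HTTP client can talk to it. The standard-library sketch below builds such a request. The base URL `http://localhost:8000/v1` (vLLM's default port), the model name `my-local-model`, and the placeholder key `EMPTY` are assumptions to adjust for your deployment, and `send_chat` only works against a running server.

```python
import json
import urllib.request


def build_chat_request(base_url, model, messages, api_key="EMPTY"):
    """Build a POST to an OpenAI-compatible /chat/completions endpoint."""
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=body,
        headers={"Content-Type": "application/json",
                 "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


def send_chat(request, timeout=60.0):
    # Requires a running server; returns the assistant's reply text.
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]


# Building the request needs no network; call send_chat(req) once a server is up.
req = build_chat_request("http://localhost:8000/v1", "my-local-model",
                         [{"role": "user", "content": "Hello"}])
print(req.full_url, req.get_method())
```

Since the agent only sees this standard interface, the weights behind it can change through hot-swaps without any change on the client side.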