A new approach to AI memory that treats information as continuous fields governed by physics-inspired equations reports large gains in long-context reasoning, targeting one of AI's most persistent challenges: the forgetting problem. Published on arXiv on January 31, 2026, the research presents a field-theoretic memory system that could change how AI agents retain and process information over extended interactions.

The Forgetting Problem in Modern AI

Recent research suggests that most AI agent failures stem from forgetting instructions rather than from insufficient knowledge. This limitation becomes particularly acute in long-running conversations, multi-session interactions, and complex reasoning tasks that require maintaining context over hundreds of turns. Traditional memory systems treat information as discrete entries in databases or fixed-length context windows, creating artificial boundaries that disrupt continuity and lead to information loss.

The field-theoretic approach addresses this by borrowing from classical field theory in physics: memories are treated as continuous fields that evolve according to partial differential equations, rather than as static, isolated data points.

How Field-Theoretic Memory Works

The system models memories as continuous fields that diffuse through semantic space, similar to how heat diffuses through a material or how particles move in a fluid. This diffusion allows related memories to naturally influence each other, creating semantic connections that traditional discrete systems struggle to maintain.
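To make the heat analogy concrete, here is a minimal sketch of diffusion on a one-dimensional grid using an explicit finite-difference step. It is an illustration only, not the paper's implementation: the function name `diffuse`, the `rate` parameter, the reflecting boundaries, and the reduction of semantic space to a line of seven cells are all assumptions made for the example.

```python
def diffuse(field, rate=0.2, steps=1):
    """Apply explicit finite-difference heat-equation steps to a 1-D field.

    `field` is a list of activation values. `rate` stands for D*dt/dx^2 and
    must stay at or below 0.5 for this explicit scheme to be stable.
    (Hypothetical helper, not part of the published code.)
    """
    if not 0.0 <= rate <= 0.5:
        raise ValueError("rate must be in [0, 0.5] for stability")
    current = list(field)
    n = len(current)
    for _ in range(steps):
        nxt = current[:]
        for i in range(n):
            # Reflecting boundaries: an edge cell sees itself as its missing
            # neighbor, so no activation leaks out of the grid.
            left = current[i - 1] if i > 0 else current[i]
            right = current[i + 1] if i < n - 1 else current[i]
            nxt[i] = current[i] + rate * (left - 2 * current[i] + right)
        current = nxt
    return current


# A single memory stored in the middle cell of a 7-cell "semantic axis".
field = [0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0]
after = diffuse(field, rate=0.2, steps=5)
print([round(v, 3) for v in after])
```

The activation that started in one cell spreads to its neighbors while the total stays constant, which is the behavior the heat analogy describes. A real system would presumably diffuse over a high-dimensional embedding space rather than a line.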

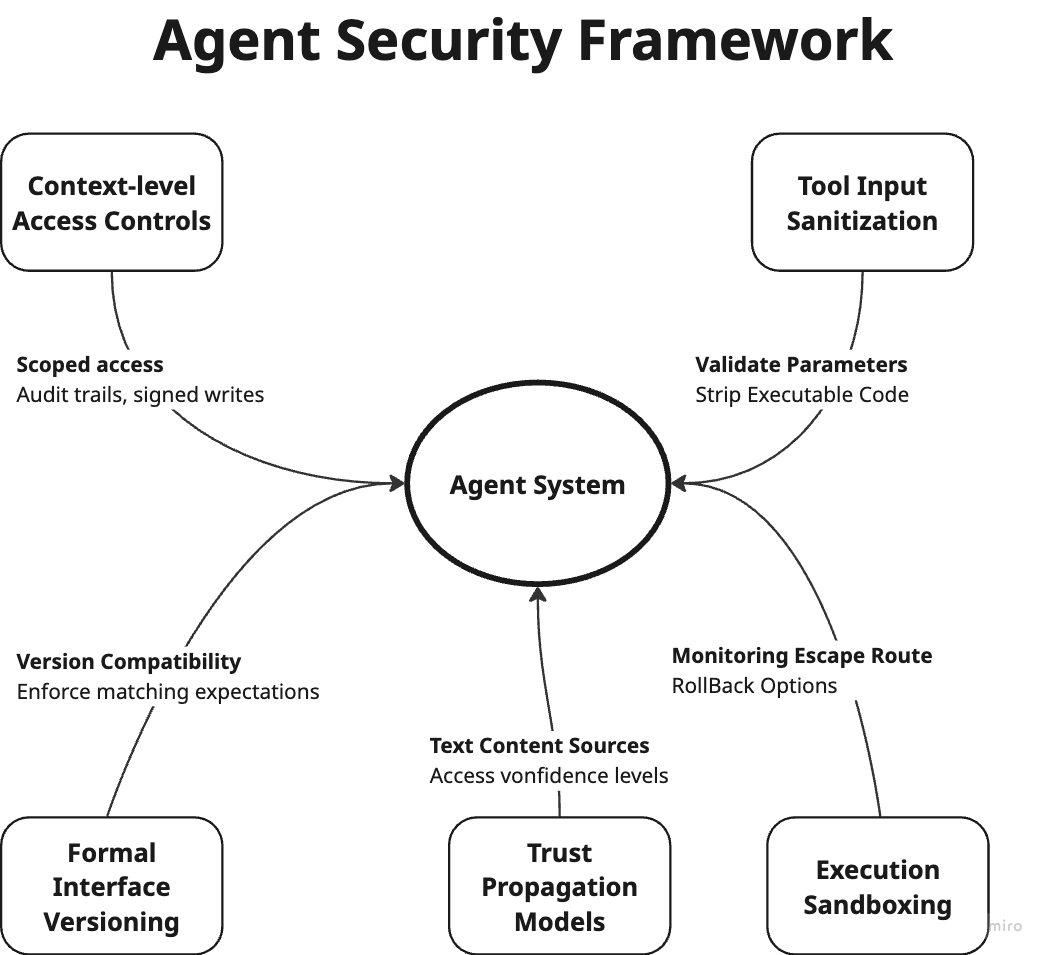

Three key mechanisms govern the memory dynamics:

Semantic Diffusion: Memories spread through related concepts, allowing the system to maintain connections between related pieces of information even when they are not explicitly linked.

Thermodynamic Decay: Less important memories naturally fade over time, while crucial information persists, mimicking how human memory prioritizes significant experiences.

Field Coupling: In multi-agent scenarios, memory fields interact and influence each other, enabling sophisticated collective intelligence.
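The decay and coupling mechanisms can be sketched in a few lines. This is a toy model under stated assumptions, not the paper's method: fields are reduced to dictionaries mapping a concept to an activation, decay is exponential with a rate scaled by an importance weight between 0 and 1, and coupling is a linear pull between two agents. The names `decay`, `couple`, `base_rate`, and `strength` are hypothetical. Diffusion is left out to keep the example short.

```python
import math


def decay(field, importance, base_rate=0.5, dt=1.0):
    """Fade each activation exponentially; important items fade more slowly.

    An importance of 1.0 gives a decay rate of zero (the memory persists),
    while an importance of 0.0 decays at the full `base_rate`.
    """
    return {
        key: value * math.exp(-base_rate * (1.0 - importance.get(key, 0.0)) * dt)
        for key, value in field.items()
    }


def couple(field_a, field_b, strength=0.25):
    """Move two agents' fields toward each other by a fraction `strength`.

    Keep `strength` at or below 0.5 so the fields converge without
    overshooting. The summed activation per concept is preserved.
    """
    new_a, new_b = {}, {}
    for key in set(field_a) | set(field_b):
        a, b = field_a.get(key, 0.0), field_b.get(key, 0.0)
        new_a[key] = a + strength * (b - a)
        new_b[key] = b + strength * (a - b)
    return new_a, new_b


# Agent A holds two memories; only one is marked as important.
agent_a = {"deadline": 1.0, "small talk": 1.0}
importance = {"deadline": 1.0, "small talk": 0.0}
agent_a = decay(agent_a, importance)

# Agent B starts empty and picks up part of A's field through coupling.
agent_a, agent_b = couple(agent_a, {})
print(agent_a, agent_b)
```

In this toy run the important memory survives decay untouched while the unimportant one shrinks, and the second agent ends up with a share of both without ever having been told about them directly.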

Benchmark Performance: Dramatic Improvements

The researchers evaluated their system on two established long-context benchmarks:

LongMemEval (ICLR 2025)

- Multi-session reasoning: +116% F1 improvement (p<0.01, d=3.06)

- Temporal reasoning: +43.8% improvement (p<0.001, d=9.21)

- Knowledge update retrieval: +27.8% recall improvement (p<0.001, d=5.00)
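For readers unfamiliar with the statistics, the sketch below shows how a relative improvement and an effect size of this kind are typically computed. It assumes that `d` denotes Cohen's d with a pooled standard deviation, which the summary above does not confirm. The input numbers are invented toy values chosen for easy arithmetic and are not data from the paper.

```python
from math import sqrt
from statistics import mean, variance


def relative_improvement(baseline, treated):
    """Percentage change of `treated` relative to `baseline`."""
    return 100.0 * (treated - baseline) / baseline


def cohens_d(group_a, group_b):
    """Cohen's d: difference in means divided by the pooled standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    return (mean(group_b) - mean(group_a)) / sqrt(pooled_var)


# Invented toy scores, NOT results from the paper.
baseline_scores = [1, 2, 3]
treated_scores = [3, 4, 5]

print(relative_improvement(mean(baseline_scores), mean(treated_scores)))
print(cohens_d(baseline_scores, treated_scores))
```

Because d divides by the spread of the scores, it grows large when runs within each condition vary little, so the percentage gain and the effect size answer different questions and are best read together.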

LoCoMo (ACL 2024)

The system was tested on 300-turn conversations across 35 sessions, demonstrating robust performance in maintaining context over extended interactions that would typically overwhelm traditional AI memory systems.

Perhaps most strikingly, the multi-agent experiments reported near-perfect collective intelligence (>99.8%) through field coupling, suggesting this approach could enable much tighter coordination between AI agents.

Implications for AI Development

This research arrives at a critical moment in AI evolution. As Ethan Mollick recently predicted, AI agents are poised to dominate public digital platforms while humans retreat to private spaces. However, this transition has been hampered by agents' inability to maintain consistent context and memory across extended interactions.

The field-theoretic approach offers several transformative possibilities:

Continuous Learning Systems: Unlike current AI that often requires retraining or suffers from catastrophic forgetting, continuous field memory could enable truly lifelong learning agents that accumulate knowledge without losing previous capabilities.

Multi-Agent Coordination: The near-perfect collective intelligence demonstrated in experiments suggests this approach could revolutionize how AI agents collaborate on complex tasks, from scientific research to large-scale system management.

Human-AI Interaction: By maintaining context over hundreds of turns and multiple sessions, AI assistants could develop a much more nuanced understanding of individual users, their preferences, and their ongoing projects.

Technical Implementation and Availability

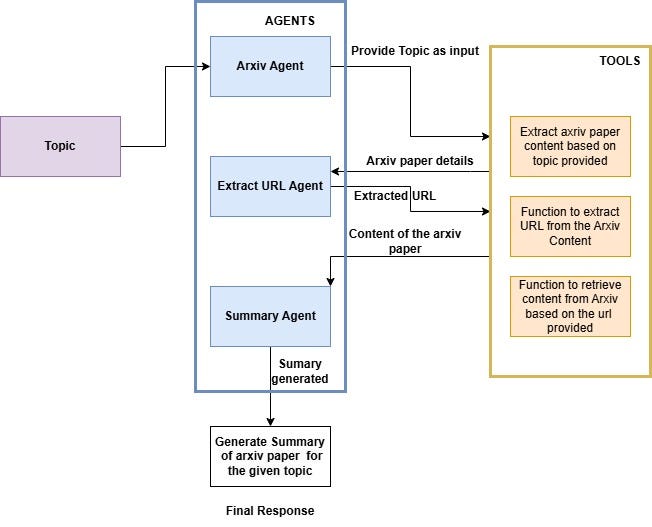

The research team has made their code available at github.com/rotalabs/rotalabs-fieldmem, encouraging further development and experimentation. The implementation treats memory as a continuous function over semantic space, with evolution governed by partial differential equations that balance diffusion, decay, and interaction terms.
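The balance of diffusion, decay, and interaction terms can be written in a generic reaction-diffusion form. The equation below is an illustrative assumption, not the equation from the paper, and the symbols $\phi_i$, $D$, $\lambda$, and $\kappa_{ij}$ are all introduced here for the example:

$$
\frac{\partial \phi_i}{\partial t} = D\,\nabla^2 \phi_i \;-\; \lambda\,\phi_i \;+\; \sum_{j \neq i} \kappa_{ij}\,\bigl(\phi_j - \phi_i\bigr)
$$

Here $\phi_i(x, t)$ is the memory field of agent $i$ at point $x$ in semantic space, $D$ sets how fast activation spreads, $\lambda$ sets how fast it fades, and $\kappa_{ij}$ sets how strongly agent $j$'s field pulls on agent $i$'s. With a single agent the coupling sum vanishes, leaving a heat equation with a linear decay term.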

This represents a significant departure from current approaches that typically use vector databases, attention mechanisms with limited context windows, or hierarchical memory structures. Instead of fighting against the discrete nature of digital systems, the field-theoretic approach embraces continuity as a fundamental property of intelligent memory.

Future Directions and Challenges

While the results are promising, several challenges remain. The computational requirements for solving partial differential equations in high-dimensional semantic spaces are substantial, though the researchers note that efficient numerical methods and approximations make the approach practical.

Additionally, questions about interpretability arise: how do we understand and debug memory systems that operate through continuous field dynamics rather than discrete retrievals? The researchers suggest visualization techniques from scientific computing could help make these systems more transparent.

Looking forward, this approach could merge with other AI advancements, potentially creating hybrid systems that combine the strengths of discrete symbolic reasoning with continuous field memory. As AI capabilities continue their rapid advancement, which recent analyses say threatens traditional software models, innovations in fundamental architectures like memory systems will likely play a crucial role in determining which approaches dominate the next generation of intelligent systems.

Source: arXiv:2602.21220v1, "Field-Theoretic Memory for AI Agents: Continuous Dynamics for Context Preservation" (2026)