What Happened

A post on X (formerly Twitter) from account @rohanpaul_ai combines two separate pieces of information related to OpenAI.

First, it references a recent statement from OpenAI CEO Sam Altman. In a talk, Altman framed artificial intelligence as a future commodity, stating, "intelligence will be a utility, like electricity or water." He outlined a strategic goal to "flood the market with tokens," which he described as "the best strategy for capitalism & innovation."

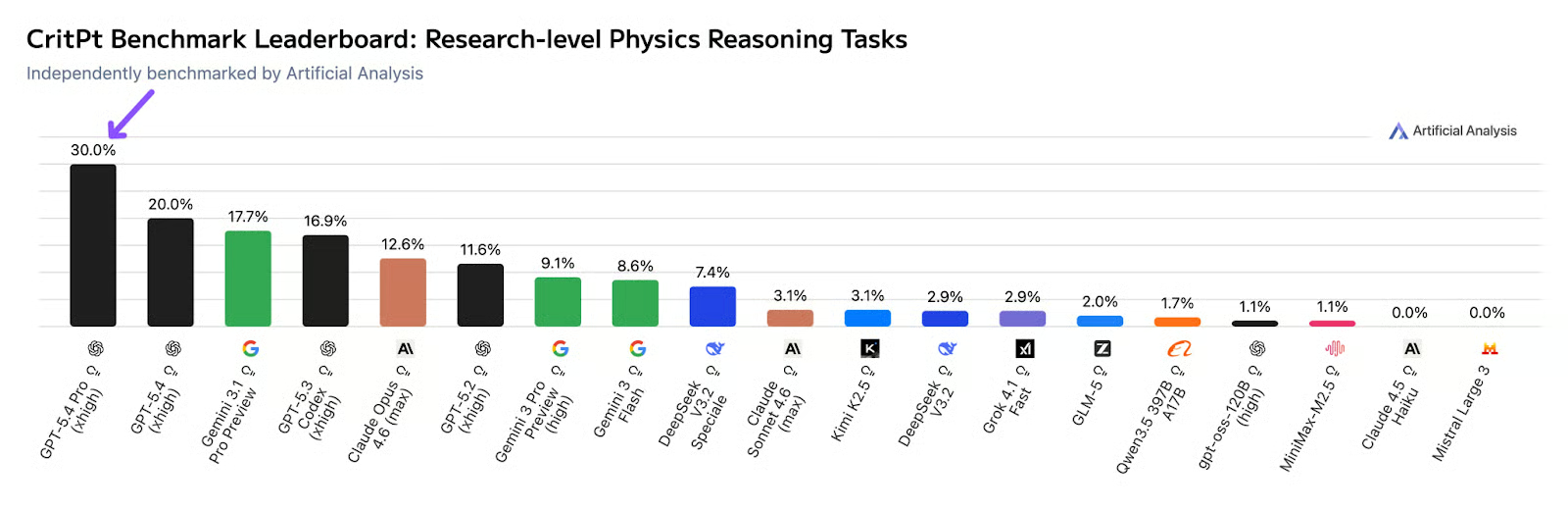

Second, the post makes an unverified claim about a new model, stating: "GPT-5.4 breaks records in 1st week. '5T tokens per day'." Taken at face value, this means a model referred to as "GPT-5.4" is processing five trillion tokens daily. The post does not specify whether the figure refers to training throughput, inference traffic, or another metric. No official announcement, technical paper, or performance benchmark from OpenAI accompanies the claim.

Context

The concept of treating AI inference as a low-cost, high-volume utility aligns with Altman's previous comments and OpenAI's product direction, particularly the push for its API and ChatGPT to become ubiquitous platforms. The scale of "5T tokens per day" is immense. For context, OpenAI's GPT-4 was reportedly trained on an estimated 13 trillion tokens. A daily rate of 5 trillion tokens would equal roughly 38% of that estimated training dataset every day, or the entire dataset in under three days, which would represent an unprecedented scale of operational inference or training.
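To make the scale comparison concrete, the arithmetic can be sketched as below. This is a back-of-the-envelope calculation, not a measurement: both inputs are unconfirmed (the 5 trillion figure comes from the social media post, and the 13 trillion figure is a reported estimate), and the variable names are illustrative only.

```python
# Back-of-the-envelope scale check using the two figures quoted in the text.
# Both inputs are unverified: one is a social media claim, the other a
# reported estimate rather than an official OpenAI number.

SECONDS_PER_DAY = 24 * 60 * 60  # 86,400

claimed_tokens_per_day = 5e12          # "5T tokens per day" (unverified claim)
gpt4_training_tokens_estimate = 13e12  # reported estimate for GPT-4 training

# Average sustained throughput implied by the daily figure.
tokens_per_second = claimed_tokens_per_day / SECONDS_PER_DAY

# Fraction of the estimated GPT-4 training set processed in one day.
share_of_training_set = claimed_tokens_per_day / gpt4_training_tokens_estimate

# Days needed to process as many tokens as the estimated training set.
days_to_match_training_set = gpt4_training_tokens_estimate / claimed_tokens_per_day

print(f"Tokens per second:        {tokens_per_second:,.0f}")
print(f"Share of training set:    {share_of_training_set:.1%}")
print(f"Days to match training:   {days_to_match_training_set:.1f}")
```

Under these assumptions the claim implies an average of about 57.9 million tokens per second, about 38% of the estimated training set per day, and the full estimated training set matched in 2.6 days.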

The naming "GPT-5.4" is unconventional and not part of OpenAI's official versioning. The company's public releases have followed a pattern of GPT-3, GPT-3.5, and GPT-4. The jump to a "5.4" designation in external reporting is unusual and should be treated with caution until confirmed by the company.

Important Caveat: The core technical claim in this report, namely the existence and performance of "GPT-5.4," originates from a social media post without corroborating evidence from OpenAI or published research. Readers should treat this as a rumor, not a confirmed development.