A series of violent attacks targeting OpenAI CEO Sam Altman, including incidents involving a Molotov cocktail and gunfire, has underscored a dangerous escalation in anti-AI sentiment. Authorities investigating the attacks reportedly uncovered a "kill list" targeting technology executives, linked to extremist fears about artificial intelligence causing human extinction.

This climate of fear is not baseless public hysteria; it reflects tangible tensions that experts have warned about. These include widespread anxiety over job displacement, the environmental and community strain caused by massive, resource-hungry data centers, and persistent public discourse about AI's existential risks. Surveys indicate that more than half of the global population now shares these concerns.

In direct response to this volatile landscape, OpenAI has moved beyond technical research to enter the policy arena. The company recently published a significant 13-page policy paper outlining proposals for a new "social contract" designed to mitigate the societal disruptions caused by AI advancement and distribute its economic benefits more broadly.

What OpenAI's Policy Paper Proposes

The document is a direct attempt to address the sources of public anxiety fueling anti-AI backlash. Its core proposals form a triad of socio-economic interventions:

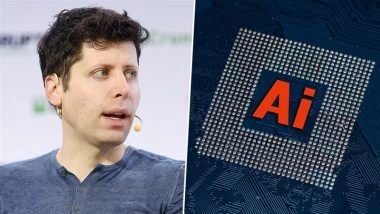

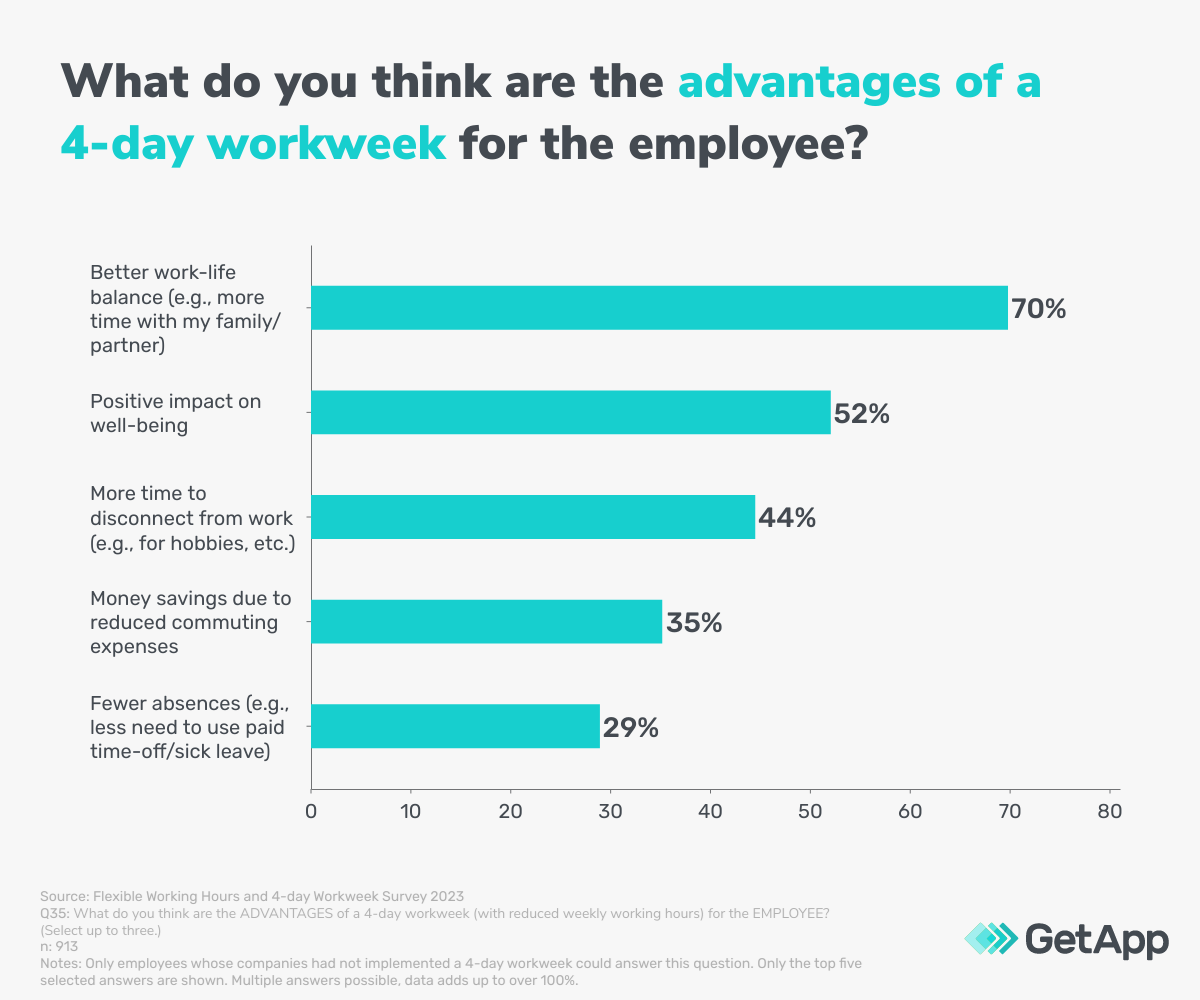

- A Four-Day Workweek: A formal recommendation to reduce the standard workweek to 32 hours. This is framed as a necessary adaptation to AI-driven productivity gains, aiming to prevent mass unemployment by redistributing work and freeing human time for other pursuits.

- A "Robot Tax": A levy on companies that displace human labor with AI automation. The revenue from this tax would be specifically earmarked to fund social safety nets, retraining programs for displaced workers, and the proposed AI dividend system.

- AI Dividends: A mechanism to directly share the wealth generated by AI with the public. The concept suggests channeling a portion of the profits from AI productivity into a universal fund, providing a direct financial stake for citizens in the AI-powered economy.

The paper explicitly ties these measures to preventing "social upheaval," acknowledging that the technological disruption caused by AI must be managed with deliberate policy to maintain social stability.

The Critical Caveat: Wealth Must Exist to Be Shared

The policy discussion includes a crucial, pragmatic limitation: redistribution can only occur where wealth is created. The proposed taxes and dividends depend on AI systems generating substantial new economic value within a given jurisdiction. If Europe or Southeast Asia, for example, does not foster the conditions for AI-driven wealth creation, there will be "nothing to redistribute," and the social contract becomes moot. The policy challenge is therefore two-sided: stimulating AI innovation and productivity, and designing the systems that capture and share the resulting benefits.

The Violent Backdrop: From Debate to Attacks

The urgency of OpenAI's policy move is framed by a disturbing shift from online debate to physical violence. The attacks on Sam Altman represent a new threshold. While tech executives have long faced criticism, the reported discovery of a "kill list" suggests the mobilization of a violent fringe motivated by apocalyptic AI narratives. This moves the conversation from boardrooms and conference halls into the realm of personal security and law enforcement, marking a stark new phase in the public reception of AI.

gentic.news Analysis

This development marks a pivotal moment in which a leading AI lab is proactively attempting to shape the socio-political framework for its own technology, likely in direct response to an untenable security and public relations crisis. The violent attacks on Altman are not an isolated event but a symptom of growing global anxiety that OpenAI can no longer afford to ignore. Its policy paper is a defensive maneuver: an attempt to offer a constructive narrative and a policy toolkit that channel fears away from violence and toward political and economic negotiation.

This aligns with a trend we've covered of AI labs increasingly engaging in governance. For instance, our analysis of Anthropic's Constitutional AI framework highlighted how technical safety methods are being designed with value-alignment in mind. OpenAI's paper takes this a step further into macroeconomics and labor policy. However, it also creates a potential conflict of interest: the company proposing the rules (taxes, dividends) is also one of the primary entities that would be governed by them. This self-regulatory move will likely face scrutiny regarding its feasibility and the sincerity of its wealth redistribution mechanisms versus its utility as a public relations shield.

The caveat about wealth creation is the most technically salient point for our audience of builders. It underscores that the dazzling capabilities of frontier models have a downstream economic prerequisite. For the "robot tax" and "AI dividend" to be more than thought experiments, the AI industry must first succeed in creating massive, concentrated new value streams. That is precisely the outcome that fuels both investor excitement and public anxiety over power imbalances. The policy challenge now runs in parallel with the technical one.

Frequently Asked Questions

What is a "robot tax"?

A robot tax is a proposed levy on companies that replace human workers with automated systems, including AI software and physical robots. The tax revenue would fund social programs like retraining, unemployment benefits, or universal basic income to offset job losses caused by automation.

Has Sam Altman been physically attacked?

According to the source report, Sam Altman has been the target of recent attacks involving a Molotov cocktail and gunfire. Authorities investigating these incidents are reported to have found a "kill list" of tech executives, highlighting an escalation in violent anti-AI sentiment.

What is OpenAI's proposed social contract?

OpenAI's 13-page policy paper proposes a new social contract built on three main ideas: implementing a 32-hour, four-day workweek; instituting a tax on AI/robot automation; and creating a system to distribute AI-generated wealth directly to the public via dividends, all aimed at managing AI's disruptive societal impact.

Can AI dividends work if wealth isn't created locally?

No, and this is a key limitation. As noted in the discussion, redistribution policies like AI dividends require wealth to be generated within a taxing jurisdiction. If a region does not host successful, profitable AI companies or infrastructure, there is no substantial revenue to redistribute, making such policies ineffective there.