In a brief comment shared via social media, OpenAI CEO Sam Altman offered a forward-looking statement on the anticipated impact of advanced artificial intelligence. He suggested that the next generation of AI models will begin to serve as pivotal tools for scientific and research breakthroughs.

What Happened

Sam Altman stated: "With the next class of models, you start to see people say, this helped me make the most important discovery of my decade or maybe my career. Maybe not win a Nobel Prize on its own, but like a significant career-defining discovery."

The remark, shared in a post by AI commentator Rohan Paul (@rohanpaul_ai), points to an expected qualitative shift in how researchers interact with AI. The focus is not on the AI autonomously winning prizes, but on its role as a catalyst that significantly amplifies human researchers' capabilities, leading to discoveries they would identify as the pinnacle of their work.

Context

This vision fits within the broader industry narrative of AI transitioning from a tool for content generation and coding assistance to a partner in complex reasoning and discovery. Altman's statement implicitly references models beyond the current public frontier, which includes OpenAI's own GPT-4 series and competitors like Anthropic's Claude 3.5 Sonnet and Google's Gemini 1.5 Pro.

The prediction is aspirational and lacks specific technical details, benchmarks, or a defined timeline. It reflects a strategic vision for AI's value proposition in high-stakes, knowledge-intensive fields like biology, chemistry, physics, and materials science, where the cost of experimentation is high and the search space for solutions is vast.

gentic.news Analysis

Altman's comment is less a technical announcement than a strategic positioning of OpenAI's roadmap within the intensifying competition for AI's "killer app" in enterprise and research. This follows OpenAI's established pattern of framing its technology's ultimate value in terms of macroeconomic impact and productivity, as seen in its previous emphasis on AI as a general-purpose technology.

This vision aligns with some industry movements and sits in tension with others. It aligns with the push from companies like DeepMind (a subsidiary of Alphabet, Google's parent company), which has long focused on AI for scientific discovery, notably with AlphaFold for protein folding and more recent projects in mathematics and materials. However, it subtly contradicts the near-term, product-focused roadmaps of many other AI labs, which are prioritizing cost reduction, faster inference, and developer tooling.

Critically, this statement must be read in the context of the ongoing competitive pressure from open-source models and well-funded rivals like Anthropic and xAI. By pointing to a future of "career-defining" discoveries, Altman is attempting to define the high ground, suggesting that OpenAI's closed research approach will yield a qualitative leap that justifies its models' premium positioning and computational cost, a topic we explored in our analysis "The Rising Cost of AI Leadership."

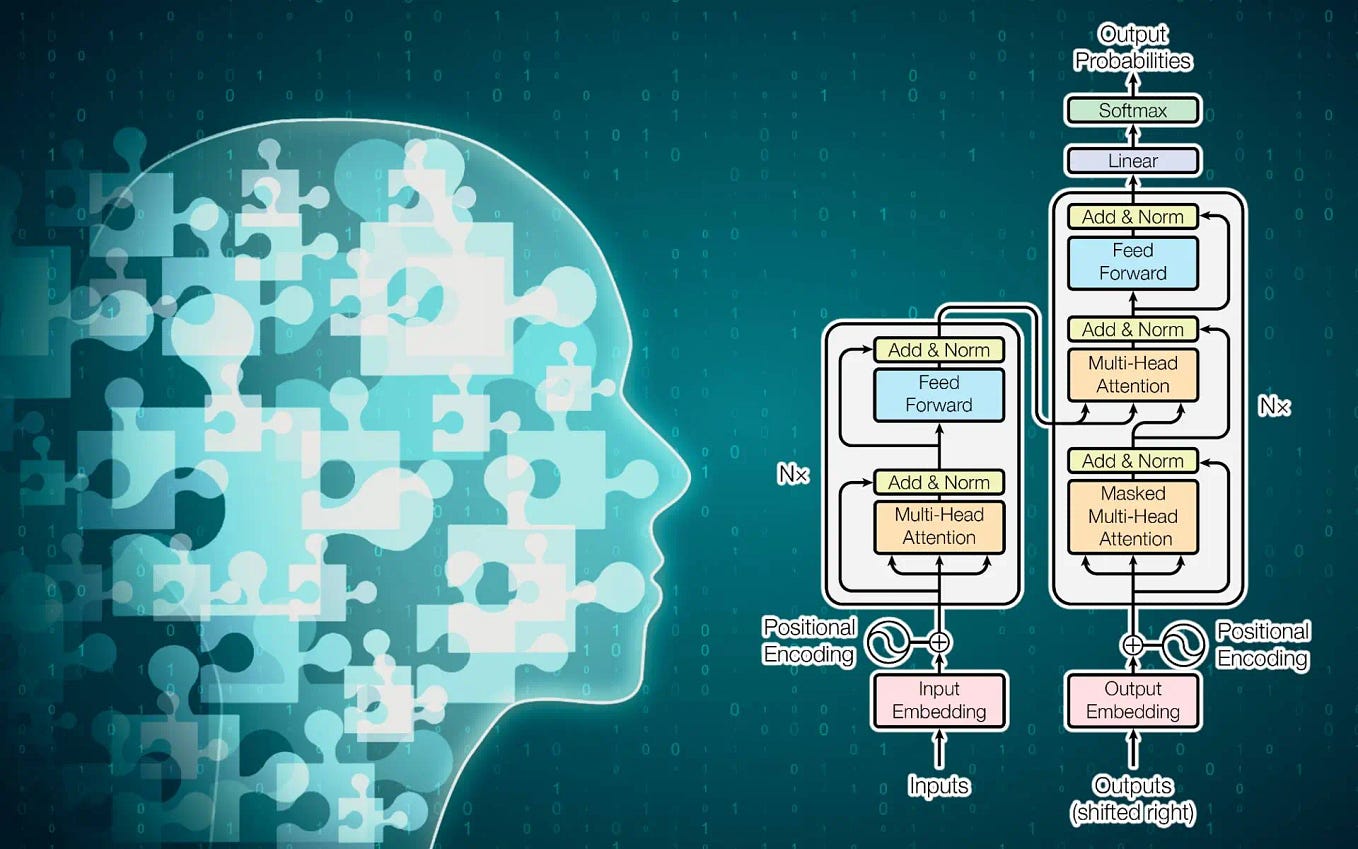

The key technical implication for practitioners is the implicit bet on scale and reasoning. For an AI to be a credible partner in novel discovery, it must move far beyond pattern recognition and retrieval to perform chains of complex, uncertain reasoning, potentially involving simulation and counterfactual exploration. This suggests OpenAI's next models may emphasize improvements in long-chain reasoning, reliability, and the integration of tools for simulation and data analysis over mere parameter count increases.

Frequently Asked Questions

What did Sam Altman actually announce?

He did not announce a specific product or model. He made a predictive statement about the expected impact of the next generation of AI models, suggesting they will become instrumental tools that help researchers achieve the most significant discoveries of their careers.

Which AI models is Sam Altman referring to?

He is likely referring to the successors to today's state-of-the-art models, that is, the anticipated follow-ups to OpenAI's GPT-4 Turbo, Anthropic's Claude 3.5 Sonnet, and Google's Gemini 1.5 Pro. The comment is generic and applies to the perceived next "class" or generation of models from leading labs.

Has AI already helped make major scientific discoveries?

Yes, but typically in a more constrained, domain-specific capacity. The most famous example is DeepMind's AlphaFold, which revolutionized protein structure prediction. Other examples include AI used to suggest new battery electrolyte compositions or optimize fusion reactor designs. Altman's prediction is that the next wave of general AI models will make this capability more accessible and powerful across many fields.

Does this mean AI will win a Nobel Prize?

Altman explicitly tempers this expectation, saying "Maybe not win a Nobel Prize on its own." His focus is on the AI as a crucial assistant that enables a human researcher to make a discovery worthy of such recognition, not on the AI being the named laureate.