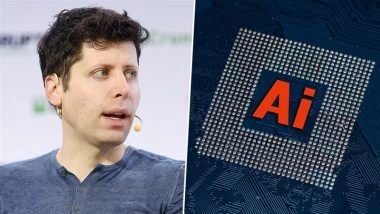

A second alleged attack on OpenAI CEO Sam Altman's San Francisco home occurred just two days after a Molotov cocktail incident, according to a report. Suspects are accused of firing a gun from a passing car before fleeing the scene.

San Francisco police later arrested two individuals and recovered three firearms. The incident intensifies concerns about the personal safety of high-profile AI leaders amid rising societal tensions over artificial intelligence's impact.

Key Takeaways

- Two days after a Molotov cocktail incident, suspects fired a gun at Sam Altman's home from a car.

- Police arrested two people and recovered three firearms; the attacks point to escalating tensions around AI.

What Happened

The attack reportedly unfolded as a drive-by shooting at Altman's residence. It follows a previous incident in which a Molotov cocktail was thrown at the property. The rapid succession of two attacks in three days suggests a targeted pattern rather than random violence.

Law enforcement responded quickly, apprehending two suspects and seizing multiple firearms. The investigation is ongoing, with authorities examining potential motives connected to anti-AI sentiment or other factors.

Context of Rising Tensions

Sam Altman, as CEO of OpenAI, has become one of the most visible figures in the artificial intelligence industry. His leadership through the releases of ChatGPT, GPT-4, and subsequent models has placed him at the center of global debates about AI safety, regulation, and economic disruption.

Physical attacks on technology executives remain rare, but they come amid growing public animosity toward tech leaders perceived as driving rapid, disruptive change. The AI industry in particular faces polarized public opinion: it is celebrated for its potential benefits while also feared for job displacement, privacy concerns, and existential risks.

Security Implications for AI Leadership

The incidents at Altman's home highlight a new security dimension for AI industry leaders. As public debate grows more heated, physical security measures may become necessary for executives at companies developing advanced AI systems.

This development mirrors historical patterns in which leaders of transformative technologies faced personal risks. However, the compressed timeline of two attacks in three days represents an escalation beyond typical protest activity.

gentic.news Analysis

This incident represents a concerning physical manifestation of the growing societal tensions surrounding artificial intelligence. While public debate about AI safety and ethics has been intense in academic and policy circles, direct physical threats against industry leaders mark a dangerous escalation.

The timing is particularly notable given OpenAI's recent trajectory. Since Altman's brief ouster in late 2023 and the company's subsequent restructuring, OpenAI has continued to push the boundaries of AI capabilities with successive model releases. This technical progress has been accompanied by increasing public anxiety about AI's societal impact, and those concerns now appear to be translating into physical threats.

From a security perspective, this incident may prompt other AI companies to reassess executive protection measures. The concentration of AI talent in the San Francisco Bay Area creates geographic vulnerabilities that companies like Anthropic, Google DeepMind, and Meta's AI research division will likely examine closely.

What's most troubling is the potential chilling effect on public discourse. If AI researchers and executives face physical threats for participating in public discussions about AI safety and policy, it could drive these conversations behind closed doors, reducing transparency and public accountability at precisely the moment when democratic oversight of AI development is most critical.

Frequently Asked Questions

What exactly happened at Sam Altman's home?

According to reports, suspects fired a gun from a passing car at Sam Altman's San Francisco residence. This occurred just two days after a Molotov cocktail was thrown at the same property. Police arrested two individuals and recovered three firearms.

Why would someone attack the OpenAI CEO?

While motives haven't been officially confirmed, the attacks may relate to growing societal tensions around artificial intelligence. As a highly visible AI leader, Altman represents an industry that some fear will cause job displacement, privacy violations, or existential risks. Physical attacks would be an extreme manifestation of these anxieties.

How common are physical attacks on tech executives?

Physical attacks on technology executives are relatively rare, although they have occurred during past periods of rapid technological disruption. What makes this case notable is the frequency: two attacks in three days suggest a targeted pattern rather than isolated incidents.

What security implications does this have for the AI industry?

The incidents may prompt AI companies to enhance executive security measures, particularly for leaders who are publicly visible. This could include increased personal protection, security assessments for residences, and revised protocols for public appearances. The geographic concentration of AI talent in the Bay Area creates particular security considerations.