What Happened

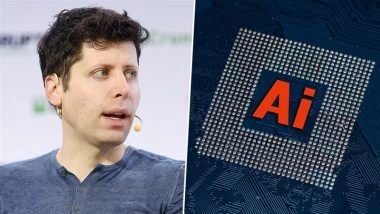

According to a report by the Financial Times cited in social media posts, OpenAI Chief Executive Sam Altman has told staff that the company is now dealing with a criminal attack, not merely criticism or protest. If accurate, the statement suggests a significant escalation in the nature of the threats or adversarial actions facing the AI lab.

The source material is a retweet of the original report and provides no further details on the nature, source, or timing of the alleged criminal activity. The core claim is that leadership has characterized the situation internally as crossing a legal threshold.

Context

OpenAI, as a leader in frontier AI development, has long been a focal point for intense scrutiny, debate, and protest. Critics have raised concerns about AI safety, the concentration of power, labor practices, and the ethical implications of artificial general intelligence (AGI). Public protests and open letters from AI researchers and ethicists have been common.

The framing of current events as a "criminal attack" implies actions that may include cyber intrusions, harassment, threats, property damage, or other activities that violate criminal law, moving beyond protected speech or lawful assembly. This development occurs amidst a highly competitive and politically charged global AI landscape.

gentic.news Analysis

This report, if accurate, signals a hardening of posture at the highest levels of OpenAI. Characterizing adversarial actions as criminal rather than discursive is a serious step that typically precedes or follows engagement with law enforcement. For a company of OpenAI's profile, this could relate to several threat vectors: sophisticated cyber-espionage targeting model weights or proprietary data, coordinated harassment campaigns against researchers, or physical security threats.

This aligns with a broader trend of increasing securitization in AI. As covered in our analysis of the CIA's investment in AI tools for intelligence and the rising incidents of state-linked hacking targeting AI firms, the assets held by leading labs are now considered high-value national security and economic targets. OpenAI's closest competitor, Anthropic, has also reportedly invested heavily in operational security following intense scrutiny.

The timing is notable. OpenAI is in an advanced phase of developing its next-generation models, rumored to be on the path to GPT-5 and beyond. As we noted in our coverage of the GPT-4.5 leak incident, internal security and IP protection have become paramount. A "criminal attack" could represent an attempt to exfiltrate critical research or destabilize development timelines, directly impacting the competitive race with entities like Google DeepMind, Meta, and well-funded startups.

For practitioners and the tech community, this is a stark reminder that the AI arena is no longer just an academic or commercial field; it is a domain where geopolitical, criminal, and extremist interests are increasingly active. OpenAI's response, whether it involves tighter information controls, reduced transparency, or legal action, could set a precedent for how other labs manage security in this new environment.

Frequently Asked Questions

What kind of criminal attack could OpenAI be facing?

The specifics are unknown. Plausible vectors include cyber-attacks aimed at stealing model weights, training data, or source code; coordinated disinformation or smear campaigns; harassment or threats against employees and their families; or physical security breaches at offices or data centers. Given the value of OpenAI's IP, industrial espionage is one obvious possibility, though the report does not confirm it.

Has OpenAI been attacked or protested before?

Yes, extensively. OpenAI has been the subject of public protests from AI ethicists and concerned technologists, open letters signed by thousands calling for pauses in development, and criticism from competitors and policymakers. However, framing an incident as a criminal attack suggests a qualitative shift towards actions that are illegal, not just critical.

How might this affect OpenAI's operations and transparency?

It will likely lead to increased internal security, reduced sharing of technical details (even with the research community), and a more guarded public communications strategy. While these measures may be necessary for protection, they could slow scientific collaboration and increase opacity around the development of powerful AI systems, which is itself a concern for external oversight.

What should other AI companies learn from this?

Other labs should audit their own physical and cybersecurity postures, especially around protecting core model assets and researcher safety. If the report is accurate, it underscores that leading AI firms are now high-value targets comparable to critical infrastructure, requiring security planning on par with defense contractors or financial institutions.