The Technique — The Four-Part Scaffolding from Anthropic Research

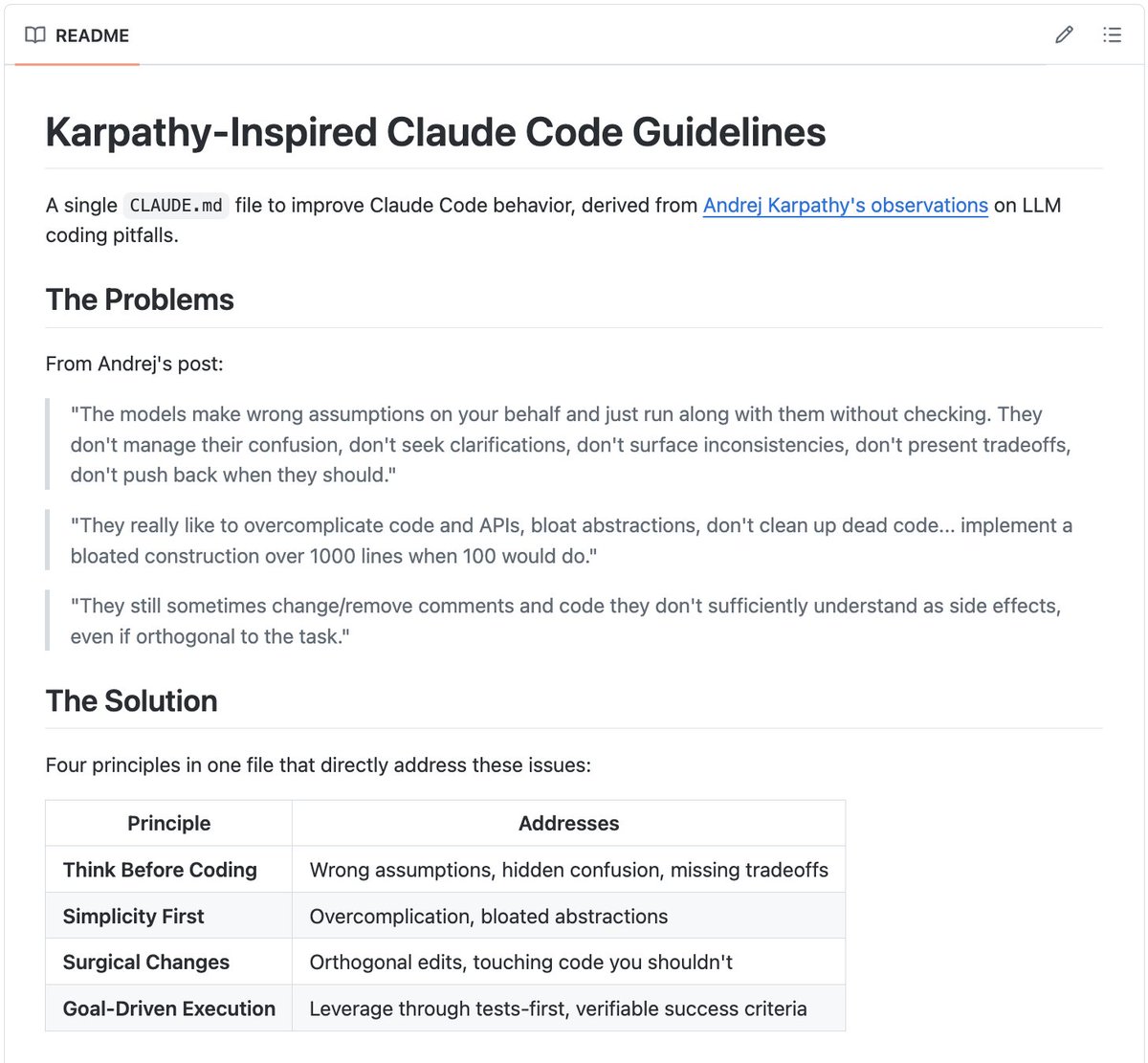

Anthropic's "Long-running Claude" research demonstrates a workflow where you can give an AI agent a complex, multi-day task and check in only via GitHub. The headline—building a cosmology solver in days—is impressive, but the real value is the repeatable structure that made it possible. The research identifies four key components:

- CLAUDE.md: The project's central directive, containing goals, success criteria, and work rules.

- CHANGELOG.md: The agent's portable long-term memory and lab notebook.

- Test Oracle: A reference implementation or ground truth for self-verification.

- Ralph Loop: A forcing function that prevents "agentic laziness" and ensures the agent meets a measurable bar.
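To make the test oracle idea concrete, here is a minimal sketch in Python. It assumes the oracle is any trusted reference function that the agent's implementation can be compared against on the same inputs. The names `check_against_oracle`, `reference_sqrt`, and `newton_sqrt` are hypothetical and chosen only for illustration; they do not come from the research.

```python
import math
from typing import Callable, Iterable, List, Tuple


def check_against_oracle(
    candidate: Callable[[float], float],
    oracle: Callable[[float], float],
    inputs: Iterable[float],
    rel_tol: float = 1e-6,
) -> List[Tuple[float, float, float]]:
    """Return (input, expected, actual) for every input where the
    candidate disagrees with the trusted oracle."""
    mismatches = []
    for x in inputs:
        expected = oracle(x)
        actual = candidate(x)
        if not math.isclose(actual, expected, rel_tol=rel_tol, abs_tol=1e-12):
            mismatches.append((x, expected, actual))
    return mismatches


# Hypothetical oracle: a trusted reference implementation.
def reference_sqrt(x: float) -> float:
    return math.sqrt(x)


# Hypothetical candidate: the code the agent is writing (Newton's method).
def newton_sqrt(x: float, steps: int = 20) -> float:
    guess = x if x > 1 else 1.0
    for _ in range(steps):
        guess = 0.5 * (guess + x / guess)
    return guess
```

An empty list from `check_against_oracle` means the candidate matched the reference on every input tried. Any entries give the agent a specific discrepancy to investigate instead of a vague sense that something is off.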

Why It Works — Solving the Multi-Session Amnesia Problem

When Claude Code works across multiple sessions, context resets each time. Without a system, the agent in session 4 will waste time re-attempting approaches that session 3 already concluded were dead ends. This scaffolding solves that.

- CLAUDE.md with Goals, Not Just Rules: A list of "dos and don'ts" tells the agent how to work. Adding the project's ultimate objectives and success criteria tells it why it's working. This allows the agent to make judgment calls aligned with the project's north star.

- CHANGELOG.md as Agent Memory: This file logs current status, completed work, and, critically, failed approaches with reasons. The "tried → failed → reason" pattern is the part that matters most: it stops the next session from walking into the same wall.

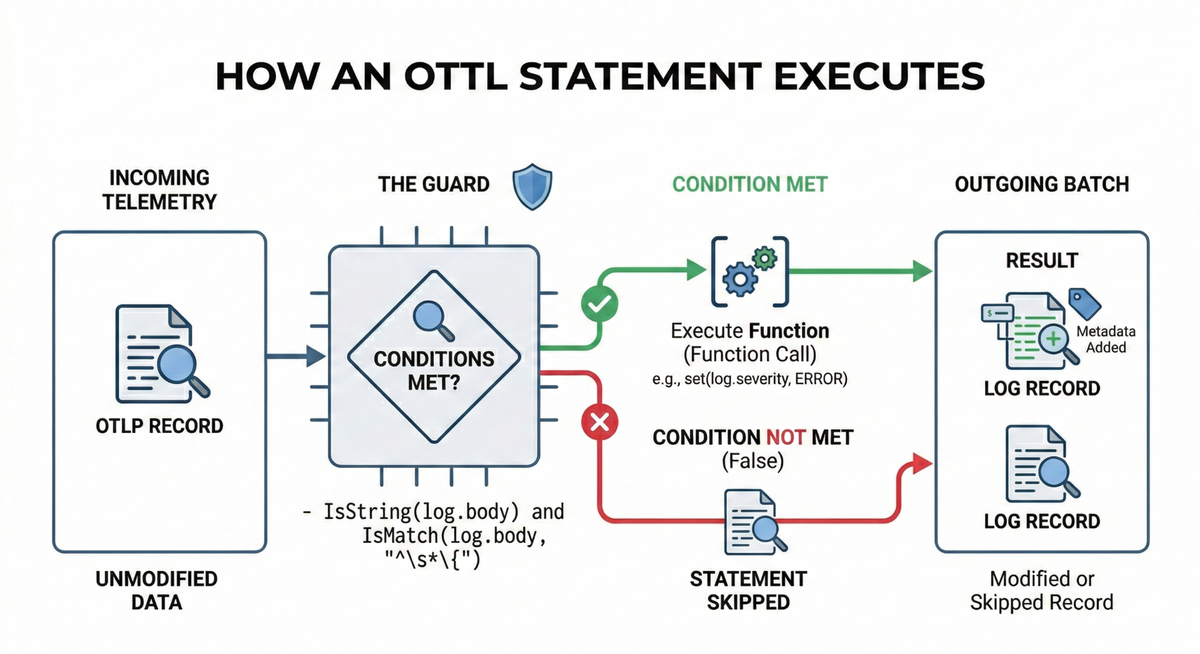

- The Ralph Loop Enforces Completion: Current models can exhibit "agentic laziness," declaring a complex task "mostly done" to end a session. The Ralph loop is a `for` loop that doesn't trust the agent's word. It forces the agent back to work until a specific, measurable success criterion is met.
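The loop described in the last bullet can be sketched in a few lines of Python. This is an illustration of the pattern under stated assumptions, not the tool used in the research: `run_agent_session` and `criterion_met` are hypothetical callables standing in for "start another agent session" and "run the objective check", and the iteration cap is an added safeguard.

```python
from typing import Callable


def ralph_loop(
    run_agent_session: Callable[[int], None],
    criterion_met: Callable[[], bool],
    max_iterations: int = 10,
) -> bool:
    """Send the agent back to work until the measurable criterion holds.

    Returns True if the criterion was met, False if the iteration
    budget ran out first.
    """
    for attempt in range(1, max_iterations + 1):
        # Check the objective metric, not the agent's own claim of success.
        if criterion_met():
            return True
        run_agent_session(attempt)
    # One final check after the last session.
    return criterion_met()
```

The design choice that matters is that `criterion_met` is evaluated outside the agent. The loop only ends early when the external check passes, regardless of what the agent reported at the end of a session.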

How To Apply It — Your New Claude Code Workflow

You don't need a cosmology project to use this. Apply it to your next refactor, feature, or bug fix.

1. Rewrite Your CLAUDE.md

Transform it from a rulebook into a design document. Start with the Project Goals and Success Criteria.

# PROJECT: AI Signal Feed Expansion

## Project Goals

- Integrate 5 new data sources (Reddit, arXiv RSS, etc.) alongside HuggingFace Papers.

- Maintain feed quality: duplicate rate must stay below 5%.

- End state: Fully automated daily collection with zero manual intervention.

## Success Criteria (Measurable!)

- All 5 new sources have a parsing success rate ≥ 95%.

- All existing unit and integration tests pass.

- Deduplication logic catches ≥ 95% of cross-source duplicates.

## Work Rules

- Read CHANGELOG.md at the start of every session.

- Update CHANGELOG.md after completing a meaningful unit of work.

- Commit and push after each logical milestone.

- Run the test suite before committing.
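Success criteria like the ones in this example CLAUDE.md are most useful when something can evaluate them mechanically. The following Python sketch shows one way that might look. The function name, its parameters, and the way the rates are measured are all assumptions made for illustration; the source does not prescribe any of them.

```python
from typing import Dict


def evaluate_success_criteria(
    parse_success_rates: Dict[str, float],  # hypothetical: source name -> rate in [0, 1]
    tests_passed: bool,
    dedup_catch_rate: float,                # hypothetical: share of duplicates caught
) -> Dict[str, bool]:
    """Map each success criterion to whether it currently holds."""
    return {
        "all sources parse >= 95%": bool(parse_success_rates)
        and all(rate >= 0.95 for rate in parse_success_rates.values()),
        "existing tests pass": tests_passed,
        "dedup catches >= 95%": dedup_catch_rate >= 0.95,
    }


def all_criteria_met(results: Dict[str, bool]) -> bool:
    """The project is done only when every criterion is True."""
    return all(results.values())
```

Returning a per-criterion dictionary instead of a single boolean lets the agent see which criterion is still failing and record that in CHANGELOG.md.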

2. Create and Automate CHANGELOG.md

Create CHANGELOG.md in your project root. The key is to have the agent update it itself, as per the rule in CLAUDE.md.

# CHANGELOG.md

## 2024-03-25

- Reddit source parser complete (r/MachineLearning, r/LocalLLaMA).

- RSS feed parser implemented using the `feedparser` library.

- **Tried & Failed**: Direct Reddit scraping with BeautifulSoup → consistent 429 errors. Switched to using the Reddit API.

## 2024-03-24

- Refactored core HuggingFace Papers fetching pipeline for clarity.

- Added new deduplication logic (title similarity > 85% = duplicate).

- **Tried & Failed**: Simple URL matching for dedup → same paper appears with different URLs across sources, leading to duplicates. Title similarity is more robust.
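Because the failed-approach entries above follow a consistent "tried → reason" shape, they are easy to write and read programmatically. The Python sketch below assumes the exact line format used in this example changelog (`- **Tried & Failed**: approach → reason`). The helper names are hypothetical, and a real changelog may need a more forgiving pattern.

```python
import re
from typing import List, Tuple

# Matches lines shaped like the example entries:
# - **Tried & Failed**: <approach> → <reason>
FAILED_PATTERN = re.compile(
    r"^- \*\*Tried & Failed\*\*: (?P<approach>.+?) → (?P<reason>.+)$"
)


def format_failed_entry(approach: str, reason: str) -> str:
    """Build one changelog line recording a dead end and why it failed."""
    return f"- **Tried & Failed**: {approach} → {reason}"


def extract_failed_approaches(changelog_text: str) -> List[Tuple[str, str]]:
    """Collect (approach, reason) pairs so a new session can skip them."""
    failures = []
    for line in changelog_text.splitlines():
        match = FAILED_PATTERN.match(line.strip())
        if match:
            failures.append((match["approach"], match["reason"]))
    return failures
```

A new session could call `extract_failed_approaches` on the file contents at startup and get a short list of approaches not to retry.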

3. Implement Your Own Ralph Loop

The formal ralph-loop tool from the research may not be publicly available yet, but you can implement the pattern manually. Define a strict, numerical completion condition and instruct Claude not to stop until it's met.

Your Prompt:

"Your goal is to refactor the `DataProcessor` class to be fully type-hinted and pass the MyPy check with `--strict`. Do not consider the task complete until the command `mypy --strict src/processor.py` returns zero errors. If you hit a roadblock, document it in CHANGELOG.md and try a different approach. Keep working until the MyPy check is clean."

This turns completion into a contract. The agent can't claim it's "done enough": it is done only when the objective metric is achieved.
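The completion condition in this prompt can also be verified outside the agent. The Python sketch below runs a check command and treats exit status 0 as success, which is how mypy reports a clean run. The helper name `check_passes` is hypothetical, and treating a missing executable as a failed check is an assumption of this sketch.

```python
import subprocess
from typing import Sequence

# The command from the prompt above.
MYPY_COMMAND = ["mypy", "--strict", "src/processor.py"]


def check_passes(command: Sequence[str]) -> bool:
    """Run a check command and report whether it exited with status 0."""
    try:
        result = subprocess.run(command, capture_output=True, text=True)
    except FileNotFoundError:
        # Assumption: if the tool is not installed, the check has not passed.
        return False
    return result.returncode == 0


# Example usage (not executed here):
#     if not check_passes(MYPY_COMMAND):
#         print("mypy still reports errors; the task is not complete")
```

Running the check yourself, or from a script, keeps the final verdict independent of the agent's own summary of its work.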