Recent reports circulating on social media and tech industry channels suggest a troubling inconsistency in how the U.S. Department of Defense (DoD) evaluates AI companies' ethical frameworks. According to multiple sources, the DoD terminated negotiations with Anthropic over the company's insistence on maintaining its constitutional AI principles, while at the same time finalizing an agreement with OpenAI that appears to accommodate similar ethical considerations.

The Alleged Anthropic Rejection

While official confirmation remains pending, multiple industry insiders report that Anthropic's negotiations with the Department of Defense broke down specifically over ethical concerns. Anthropic, founded by former OpenAI researchers concerned about AI safety, has built its company around a "constitutional AI" framework that includes specific principles governing how its models should be developed and deployed.

Sources suggest that when these principles conflicted with certain DoD requirements or potential applications, Anthropic leadership, reportedly including co-founder Dario Amodei, refused to compromise on core ethical commitments. According to these reports, the outcome was not just a failed negotiation but a public rebuke, which some characterize as a humiliation for a company that stood by its ethical principles.

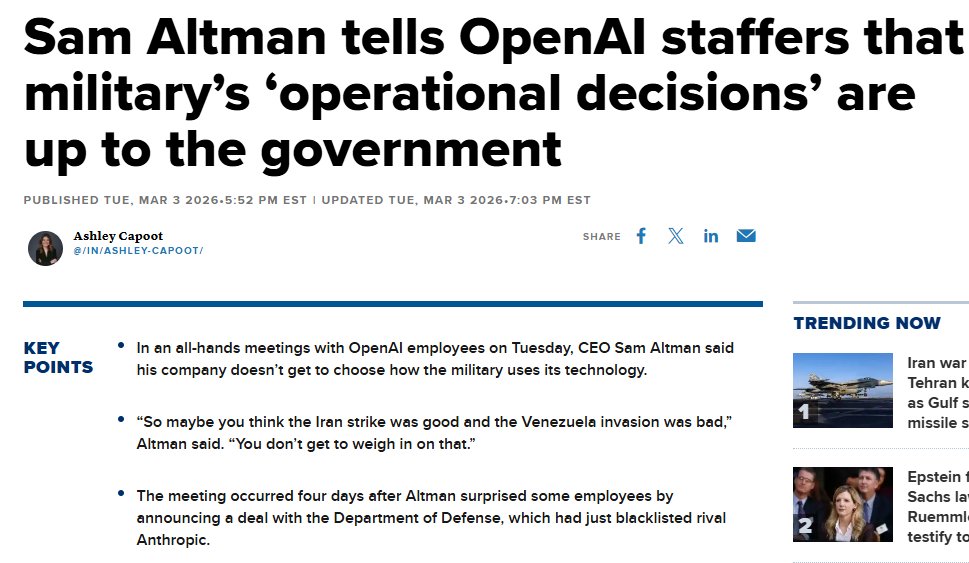

The OpenAI Agreement

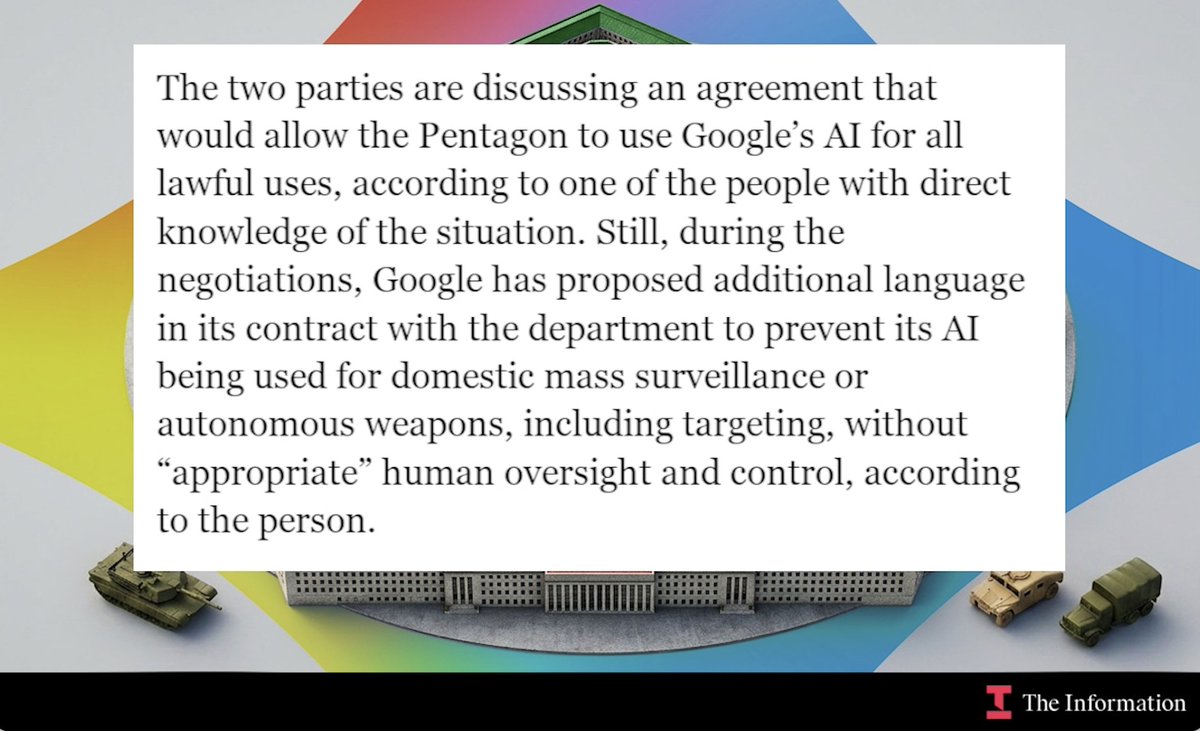

Contrasting sharply with Anthropic's experience, OpenAI appears to have successfully navigated similar ethical terrain to secure a significant agreement with the Department of Defense. This development is particularly notable given OpenAI's own well-documented ethical guidelines and safety principles, which include restrictions on certain military applications.

The apparent contradiction becomes more striking when considering that OpenAI CEO Sam Altman has publicly advocated for AI safety regulations and ethical guidelines, positions that seemingly mirror those for which Anthropic reportedly faced consequences. This raises immediate questions about whether different standards are being applied to different companies, or whether the substance of their ethical frameworks differs in ways that matter to government evaluators.

The Broader Context of Military AI Procurement

The Department of Defense has been increasingly vocal about its need to integrate cutting-edge AI capabilities while maintaining ethical standards. The Pentagon's Responsible AI Strategy and Implementation Pathway, released in 2022, emphasizes the need for "ethical principles and responsible practices" in military AI systems.

However, the practical implementation of these principles appears inconsistent. The reported differential treatment of Anthropic and OpenAI suggests one of three explanations:

- The companies' ethical frameworks contain substantive differences significant to military applications

- Negotiation strategies and relationship management differed substantially between the companies

- There is inconsistency in how the DoD evaluates and responds to ethical concerns from different vendors

The Real-World Cost of Ethical Stances

This situation highlights a fundamental tension in the AI industry: the potential conflict between ethical principles and commercial opportunities, particularly in the lucrative government contracting space. Anthropic's reported experience suggests that maintaining ethical boundaries can carry real financial and reputational costs, even when those boundaries align with publicly stated government values.

For AI safety advocates, this creates a concerning precedent. If companies that prioritize safety and ethics face disadvantages in major procurement processes, market forces may gradually push the industry toward less principled positions. This dynamic could undermine the very ethical frameworks that both companies and governments claim to support.

Industry Reactions and Implications

The tech community's response to these reports has varied, though concern is the prevailing tone. Some observers note that without full transparency about the specific points of contention in each negotiation, it is difficult to assess whether the DoD was genuinely inconsistent or the two negotiations simply involved different circumstances. Others point to a broader pattern in which government contracting often favors established relationships over principled newcomers.

For the AI industry specifically, this situation may force companies to reconsider how they structure their ethical guidelines and negotiation strategies. The apparent success of OpenAI's approach, whatever its specifics, may become a case study in how to balance principle with pragmatism in government relations.

Looking Forward: Transparency and Consistency

The most pressing need emerging from this situation is greater transparency in how government agencies evaluate and respond to AI companies' ethical frameworks. Without clear, consistent standards applied equally to all vendors, the procurement process risks appearing arbitrary or influenced by factors unrelated to technical capability or ethical rigor.

This incident also highlights the need for clearer dialogue between AI companies and government agencies about the practical implementation of ethical AI principles. As military applications of AI become increasingly sophisticated, finding common ground on safety and ethics will only grow more important, and potentially more contentious.

Source: Initial report from @kimmonismus on X/Twitter, with additional context from industry analysts and AI policy experts.