What Happened

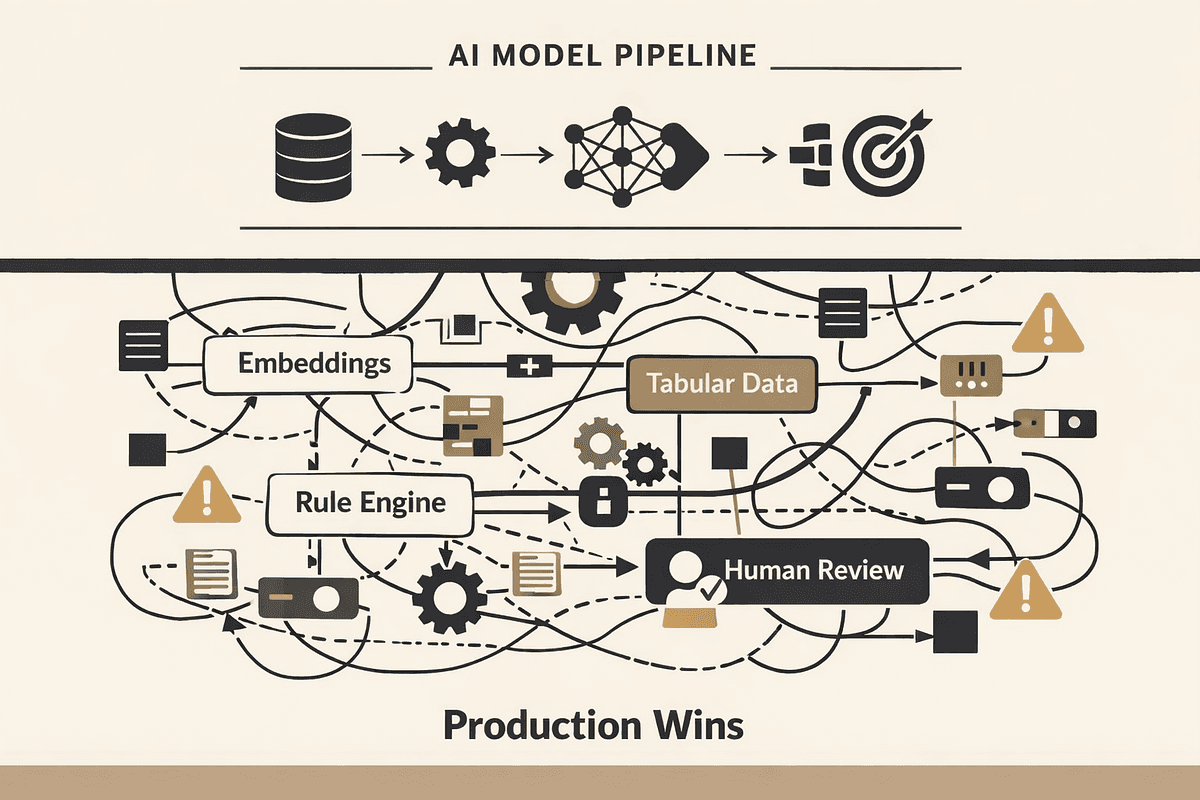

A technical article published on Medium, a platform that has seen a surge in expert AI content this week, makes a central claim: the ideological debate between deep learning and "classic" machine learning (like gradient-boosted trees) is over. The future of production ML is not a choice between these paradigms, but a pragmatic, often "ugly," hybrid.

The author, writing from an engineering perspective, contends that the most robust and effective systems in production today are heterogeneous stacks. They deliberately mix components like:

- Embeddings from deep neural networks (for capturing complex semantic relationships).

- Gradient-boosted trees (like XGBoost, LightGBM) for structured, tabular data where interpretability and precision on known features matter.

- Hand-crafted rules and heuristics for enforcing business logic, safety guards, or handling edge cases with certainty.

- Human-in-the-loop review for quality control, model refinement, and handling low-confidence predictions.

The argument is that betting everything on a single, monolithic deep learning model is often a recipe for production fragility, unexpected failure modes, and high operational cost. The hybrid approach is born from engineering necessity—reliability, maintainability, and cost-effectiveness—not academic purity.

Technical Details: Why Hybrids Win

The article implicitly breaks down the strengths and weaknesses that lead to this hybrid reality:

Deep Learning for Perception & Semantics: For unstructured data—images, text, audio—deep learning (particularly transformers and convolutional networks) is unparalleled at creating rich, dense representations (embeddings). In a hybrid system, these embeddings become powerful features fed into other components.

Classic ML for Structured Reasoning: For tabular data (customer purchase history, inventory levels, pricing matrices), gradient-boosted trees often outperform deep neural networks. They typically train faster, need less data, and are easier to inspect (for example through feature importances). They can also produce usable probability estimates, although these are not guaranteed to be well calibrated and may need an explicit calibration step. They excel at learning precise, non-linear interactions between known, curated features.

Rules for Certainty and Governance: A learned model cannot be guaranteed to respect immutable business rules (e.g., "a promotional discount cannot exceed 80%"), legal requirements, or simple logical checks, because its outputs are statistical rather than deterministic. A rules layer applied to the model's output provides deterministic safety and ensures system behavior aligns with non-negotiable constraints.
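
A rules layer of this kind can be very small. The sketch below is illustrative only: it assumes an upstream model proposes a discount as a fraction between 0 and 1, and the names `MAX_DISCOUNT` and `apply_discount_rule` are hypothetical rather than taken from the article.

```python
import math

# Business rule from the example above: a discount may never exceed 80%.
MAX_DISCOUNT = 0.80


def apply_discount_rule(proposed_discount: float) -> float:
    """Clamp a model-proposed discount (a fraction) into [0, MAX_DISCOUNT]."""
    if math.isnan(proposed_discount):
        # Assumption: an unusable model output falls back to no discount.
        return 0.0
    return min(max(proposed_discount, 0.0), MAX_DISCOUNT)
```

Whatever the model outputs, the value that leaves this function respects the cap, which is the deterministic guarantee the learned component cannot give on its own.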

Orchestration is Key: The complexity shifts from model architecture to system architecture. The challenge becomes designing the data flow: how features are computed, how models are chained or ensembled, how rules are applied, and where humans are injected. This is the domain of MLOps and ML engineering.
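
One way to picture this data flow is as an ordered list of stages that each read and extend a shared record. The following is a minimal sketch under stated assumptions, not the article's design: the stage names, the `done` short-circuit flag, and the placeholder scoring formula are all hypothetical stand-ins for a real feature store, model, and rules engine.

```python
from typing import Callable, Dict, List

Stage = Callable[[Dict], Dict]


def run_pipeline(record: Dict, stages: List[Stage]) -> Dict:
    """Pass a record through each stage in order, recording which stages ran."""
    state = dict(record)       # shallow copy so the caller's dict is untouched
    state["trace"] = []
    for stage in stages:
        state = stage(state)
        state["trace"].append(stage.__name__)
        if state.get("done"):  # a stage may short-circuit the remaining stages
            break
    return state


# Stand-in stages (hypothetical).
def compute_features(state: Dict) -> Dict:
    state["n_items"] = len(state["items"])
    return state


def hard_rules(state: Dict) -> Dict:
    if state["n_items"] == 0:  # deterministic check that runs before any model
        state.update(decision="reject", done=True)
    return state


def model_score(state: Dict) -> Dict:
    state["score"] = min(1.0, 0.2 * state["n_items"])  # placeholder for a model
    return state


def route(state: Dict) -> Dict:
    # Confident cases are automated; the rest go to a human reviewer.
    state["decision"] = "auto" if state["score"] >= 0.6 else "human_review"
    return state


STAGES = [compute_features, hard_rules, model_score, route]
```

Calling `run_pipeline({"items": ["a", "b", "c", "d"]}, STAGES)` runs all four stages, while an empty basket stops at `hard_rules`. The `trace` field keeps a record of which components touched a decision, which helps when debugging a heterogeneous stack.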

This perspective aligns with a broader industry trend we've covered, moving away from seeking a single "silver bullet" model and towards designing robust AI systems. It echoes themes from our recent article on the hidden bottlenecks of RAG deployments, where system design, not just model choice, determines success or failure.

Retail & Luxury Implications

For technical leaders in retail and luxury, this hybrid philosophy is not theoretical—it's the bedrock of current production AI. The monolithic AI model is a fantasy; the engineered AI pipeline is the reality.

Concrete Application Patterns:

Personalized Recommendation & Search: A hybrid system might use:

- A vision transformer to create embeddings of product images.

- An LLM or sentence transformer to embed product descriptions and customer reviews.

- A gradient-boosted tree model that takes these embeddings, plus structured customer data (past purchases, dwell time, demographic cohort), to score affinity.

- A rules layer to enforce business goals (e.g., "boost new collection items by 15% for VICs (very important clients)," "never show out-of-stock items").
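
The final rules step in this pattern can be sketched as a re-ranker over already-scored candidates. This is an illustration under assumptions: the `Candidate` fields are hypothetical, the affinity score is taken as given from an upstream model, and the 15% boost is interpreted as a multiplicative factor, which the article does not specify.

```python
from dataclasses import dataclass
from typing import List

NEW_COLLECTION_BOOST = 1.15  # assumed reading of "boost by 15%"


@dataclass
class Candidate:
    sku: str
    affinity: float       # score from the upstream model (assumed to exist)
    in_stock: bool
    new_collection: bool


def rerank(candidates: List[Candidate], customer_is_vic: bool) -> List[str]:
    """Apply business rules to model scores and return SKUs, best first."""
    scored = []
    for c in candidates:
        if not c.in_stock:
            continue  # hard rule: never show out-of-stock items
        score = c.affinity
        if customer_is_vic and c.new_collection:
            score *= NEW_COLLECTION_BOOST  # soft rule: boost for VIC customers
        scored.append((score, c.sku))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [sku for _, sku in scored]
```

The two rules behave differently on purpose: the stock rule removes items outright, while the boost only nudges the model's ordering.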

Dynamic Pricing & Promotion:

- Tree-based models on structured data (inventory levels, competitor prices, seasonal trends, historical elasticity) form the core prediction engine.

- An NLP component analyzes sentiment from social media or news to adjust for brand perception risks.

- Rules hard-code margin floors, regulatory price caps, or channel-specific pricing strategies.
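
A guardrail of this type might look like the sketch below. It rests on assumptions not found in the article: margin is defined as (price - cost) / price, the function name `guard_price` and its parameters are hypothetical, and a cap that falls below the margin floor is surfaced as an error rather than silently resolved.

```python
from typing import Optional


def guard_price(model_price: float, unit_cost: float, min_margin: float,
                price_cap: Optional[float] = None) -> float:
    """Constrain a model-suggested price to a margin floor and an optional cap."""
    if not 0.0 <= min_margin < 1.0:
        raise ValueError("min_margin must be in [0, 1)")
    # Lowest price at which (price - cost) / price >= min_margin.
    floor = unit_cost / (1.0 - min_margin)
    if price_cap is not None and price_cap < floor:
        # The two rules conflict; a person has to decide which one gives way.
        raise ValueError("price cap is below the margin floor")
    price = max(model_price, floor)
    if price_cap is not None:
        price = min(price, price_cap)
    return price
```

With a unit cost of 50 and a 50% minimum margin, the floor is 100, so a model suggestion of 90 is raised to 100 and a suggestion of 120 under a cap of 110 is lowered to 110.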

Fraud & Anomaly Detection:

- A graph neural network or embedding model identifies suspicious patterns in user behavior networks.

- A classic anomaly detection algorithm (like Isolation Forest) runs on transaction metadata.

- A rules engine flags transactions that hit known fraud patterns (e.g., rapid-fire orders from new accounts).

- Low-confidence cases are queued for human review by the loss prevention team.
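
The last two bullets, a known-pattern rule plus a human review queue, can be sketched as a triage function. All thresholds and field names here are hypothetical, and `anomaly_score` stands in for whatever the upstream graph model or Isolation Forest would produce, assumed to be scaled to the range 0 to 1.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    account_age_days: int
    orders_last_hour: int
    anomaly_score: float  # assumed: 0 (normal) to 1 (anomalous), from upstream


def triage(tx: Transaction,
           new_account_days: int = 7,
           max_orders_per_hour: int = 5,
           flag_above: float = 0.9,
           clear_below: float = 0.2) -> str:
    """Return 'flag', 'clear', or 'human_review' for a transaction."""
    # Known fraud pattern: rapid-fire orders from a new account.
    if (tx.account_age_days < new_account_days
            and tx.orders_last_hour > max_orders_per_hour):
        return "flag"
    # Confident model outputs are handled automatically.
    if tx.anomaly_score >= flag_above:
        return "flag"
    if tx.anomaly_score <= clear_below:
        return "clear"
    # Everything in between goes to the loss prevention team.
    return "human_review"
```

Widening or narrowing the gap between `clear_below` and `flag_above` is the lever that trades reviewer workload against automated risk.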

Inventory Forecasting:

- Time-series models (ARIMA, Prophet) handle baseline seasonality.

- A tree-based model incorporates promotional calendars, weather forecasts, and economic indicators.

- A human planner can manually override forecasts for known one-off events (e.g., a major celebrity wearing the brand).
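
How the three layers combine is not spelled out in the article. The sketch below assumes one simple option: the tree model's output is an additive adjustment to the time-series baseline, and a planner override, when present, replaces the result entirely. The function name and the week keys are hypothetical.

```python
from typing import Dict, Tuple


def final_forecast(week: str,
                   baseline: Dict[str, float],
                   adjustment: Dict[str, float],
                   overrides: Dict[str, float]) -> Tuple[float, str]:
    """Return (units, source) for a week, where source is 'planner' or 'model'."""
    if week in overrides:
        # A human override wins over every model output.
        return overrides[week], "planner"
    # Assumed combination: seasonal baseline plus an additive adjustment.
    units = baseline[week] + adjustment.get(week, 0.0)
    return max(units, 0.0), "model"  # demand cannot be negative
```

Returning the source alongside the number keeps overrides auditable, so planners can later compare their manual calls against what the models would have predicted.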

The key takeaway is that the choice is no longer "Do we use AI?" but "How do we orchestrate the right combination of techniques to solve this business problem reliably and efficiently?" The skill set in highest demand is shifting from pure data science towards ML engineering and MLOps—the ability to build, deploy, and maintain these complex hybrid systems.